PyBATS is a package for Bayesian time series modeling and forecasting. It is designed to enable both quick analyses and flexible options to customize the model form, prior, and forecast period. The core of the package is the class Dynamic Generalized Linear Model (dglm). The supported DGLMs are Poisson, Bernoulli, Normal (a DLM), and Binomial. These models are primarily based on Bayesian Forecasting and Dynamic Models.

PyBATS is hosted on PyPI and can be installed with pip:

$ pip install pybats

The most recent development version is hosted on GitHub. You can download and install from there:

$ git clone git@github.com:lavinei/pybats.git pybats

$ cd pybats

$ sudo python setup.py install

This is the most basic example of Bayesian time series analysis using PyBATS. We'll use a public dataset of the sales of a dietary weight control product, along with the advertising spend. First we load in the data, and take a quick look at the first couples of entries:

import numpy as np

import pandas as pd

from pybats.shared import load_sales_example

from pybats.analysis import *

from pybats.point_forecast import *

from pybats.plot import *

# Load example sales and advertising data. Source: Abraham & Ledolter (1983)

data = load_sales_example()

print(data.head())

Sales Advertising

1 15 12.0

2 16 20.5

3 18 21.0

4 27 15.5

5 21 15.3

The sales are integer valued counts, which we model with a Poisson Dynamic Generalized Linear Model (DGLM). Second, we extract the outcome (Y) and covariates (X) from this dataset. We'll set the forecast horizon k=1 for this example. We could look at multiple forecast horizons by setting k to a larger value. analysis, a core PyBATS function, will automatically:

- Define the model (a Poisson DGLM)

- Sequentially update the model coefficients with each new observation

$y_t$ (also known as forward filtering) - Forecast

$k=1$ step ahead at each desired time point

The main parameters that we need to specify are the dates during which the model will forecast. In this case we specify the start and end date with integers, because there are no actual dates associated with this dataset.

Y = data['Sales'].values

X = data['Advertising'].values.reshape(-1,1)

k = 1 # Forecast 1 step ahead

forecast_start = 15 # Start forecast at time step 15

forecast_end = 35 # End forecast at time step 35 (final time step)

By default, analysis will return samples from the forecast distribution as well as the model after the final observation.

mod, samples = analysis(Y, X, family="poisson",

forecast_start=forecast_start, # First time step to forecast on

forecast_end=forecast_end, # Final time step to forecast on

k=k, # Forecast horizon. If k>1, default is to forecast 1:k steps ahead, marginally

prior_length=6, # How many data point to use in defining prior

rho=.5, # Random effect extension, which increases the forecast variance (see Berry and West, 2019)

deltrend=0.95, # Discount factor on the trend component (the intercept)

delregn=0.95 # Discount factor on the regression component

)

beginning forecasting

The model has the posterior mean and variance of the coefficients (also known as the state vector) stored as mod.a and mod.C respectively. We can view them in a nicer format with the method mod.get_coef.

print(mod.get_coef())

Mean Standard Deviation

Intercept 0.63 0.36

Regn 1 0.08 0.01

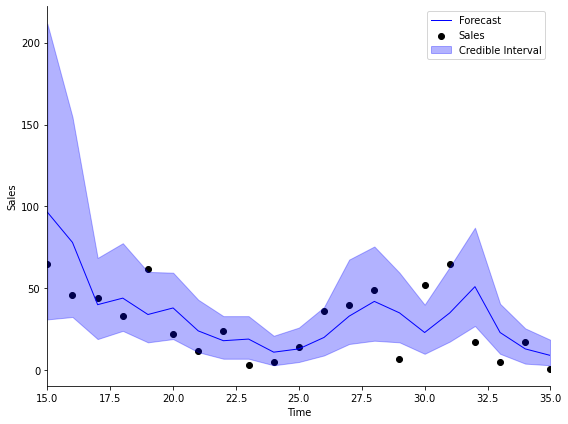

Finally, we turn our attention to the forecasts. At each time step within the forecast window,

import matplotlib.pyplot as plt

# Take the median as the point forecast

forecast = median(samples)

# Plot the 1-step ahead point forecast plus the 95% credible interval

fig, ax = plt.subplots(1,1, figsize=(8, 6))

ax = plot_data_forecast(fig, ax, Y[forecast_start:forecast_end + k], forecast, samples,

dates=np.arange(forecast_start, forecast_end+1, dtype='int'))

ax = ax_style(ax, ylabel='Sales', xlabel='Time', xlim=[forecast_start, forecast_end],

legend=['Forecast', 'Sales', 'Credible Interval'])

All models in PyBATS are based on DGLMs, which are well described by their name:

- Dynamic: The coefficients are changing over time

- Generalized: We can choose the distribution of the observations (Normal, Poisson, Bernoulli, or Binomial)

- Linear: Forecasts are made by a standard linear combination of coefficients multiplied by predictors

The correct model type depends upon your time series, dlm). PyBATS is unique in the current Python ecosystem because it provides dynamic models for non-normally distribution observations. The base models supported by PyBATS are:

- Normal DLMs (

dlm) model continuous real numbers with normally distributed observation errors. - Poisson DGLMs (

pois_dglm) model positive integers, as in the example above with counts of daily item sales. - Bernoulli DGLMs (

bern_dglm) model data that can be encoded as$0-1$ , or success-failure. An example is a time series of changes in stock price, where positive changes are coded as$1$ , and negative changes are coded as$0$ . - Binomial DGLMs (

bin_dglm) model the sum of Bernoulli$0-1$ outcomes. An example is the daily count of responses to a survey, in which$n_t$ people are contacted each day, and$y_t$ people choose to respond.

The linear combination in a DGLM is the multiplication (dot product) of the state vector by the regression vector

{% raw %}

Where:

-

$\lambda_t$ is called the linear predictor -

$\theta_t$ is called the state vector -

$F_t$ is called the regression vector

The coefficients in a DGLM are all stored in the state vector,

PyBATS supports

The default setting is to have only an intercept term, which we can see from the model defined in the example above:

mod.ntrend

1

We can access the mean mod.itrend, to view the trend component of the state vector.

mod.a[mod.itrend].round(2), mod.R.diagonal()[mod.itrend].round(2)

(array([[0.63]]), array([0.13]))

We can also access this information using get_coef, while specifying the component we want. Note that get_coef provides the coefficient standard deviations, rather than the variances.

print(mod.get_coef('trend'))

Mean Standard Deviation

Intercept 0.63 0.36

This means that the intercept term has a mean of forecast_end, or when we hit the final observation in Y, whichever comes first.

To add in a local slope, we can re-run the analysis from above while specifying that ntrend=2.

mod, samples = analysis(Y, X, family="poisson",

ntrend=2, # Use an intercept and local slope

forecast_start=forecast_start, # First time step to forecast on

forecast_end=forecast_end, # Final time step to forecast on

k=k, # Forecast horizon. If k>1, default is to forecast 1:k steps ahead, marginally

prior_length=6, # How many data point to use in defining prior

rho=.5, # Random effect extension, increases variance of Poisson DGLM (see Berry and West, 2019)

deltrend=0.95, # Discount factor on the trend component (intercept)

delregn=0.95 # Discount factor on the regression component

)

beginning forecasting

mod.ntrend

2

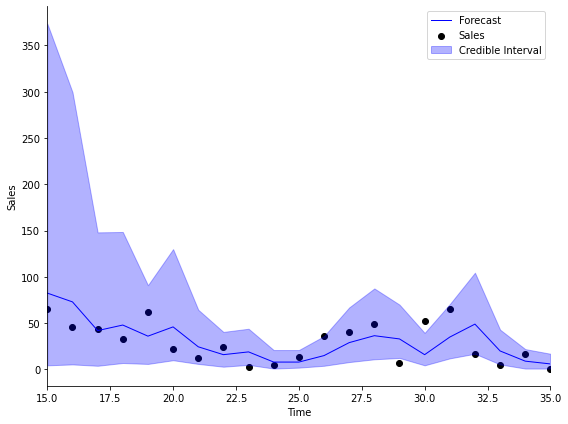

We can plot the forecasts with this new model, and see that the results are quite similar!

# Take the median as the point forecast

forecast = median(samples)

# Plot the 1-step ahead point forecast plus the 95% credible interval

fig, ax = plt.subplots(1,1, figsize=(8, 6))

ax = plot_data_forecast(fig, ax, Y[forecast_start:forecast_end + k], forecast, samples,

dates=np.arange(forecast_start, forecast_end+1, dtype='int'))

ax = ax_style(ax, ylabel='Sales', xlabel='Time', xlim=[forecast_start, forecast_end],

legend=['Forecast', 'Sales', 'Credible Interval'])

The regression component contains all known predictors. In this example, the advertising budget is our only predictor, which is stored in the

X[:5]

array([[12. ],

[20.5],

[21. ],

[15.5],

[15.3]])

The analysis function automatically detected that

mod.nregn

1

To understand the impact of advertising on sales, we can look at the regression coefficients at the final time step. Similar to the trend component, the indices for the regression component are stored in mod.iregn.

mod.a[mod.iregn].round(4), mod.R.diagonal()[mod.iregn].round(4)

(array([[0.0954]]), array([0.0005]))

And just as before, we can also view this information using get_coef, with component='regn'.

print(mod.get_coef('regn'))

Mean Standard Deviation

Regn 1 0.1 0.02

The coefficient mean is pois_dglm, in the dglm module.

To quantify the uncertainty of the parameter, many people like to use the standard deviation (or standard error) of the coefficient, which is simply the square root of the variance. A good rule of thumb to get a pseudo-confidence interval is to add

mean, sd = mod.get_coef('regn').values[0]

np.round(mean + 2 * sd, 2), np.round(mean - 2 * sd, 2)

(0.14, 0.06)

Seasonal components represent cyclic or periodic behavior in the time series - often daily, weekly, or annual patterns. In PyBATS, seasonal components are defined by their period (e.g.

For example, if the period is seasPeriods=[7]) then a fully parameterized seasonal component has harmonic components seasHarmComponents = [1,2,3].

If there is an annual trend on daily data, then the period is seasHarmComponents = [1,2,...,182], is far too many parameters to learn. It is much more common to use the first several harmonic components, such as seasHarmComponents = [1,2]. The

For more details, refer to Chapter 8.6 in Bayesian Forecasting and Dynamic Models by West and Harrison.

To see this in action, we'll load in some simulated daily sales data:

from pybats.shared import load_sales_example2

data = load_sales_example2()

print(data.head())

Sales Price Promotion

Date

2014-06-01 15.0 1.11 0.0

2014-06-02 13.0 2.19 0.0

2014-06-03 6.0 0.23 0.0

2014-06-04 2.0 -0.05 1.0

2014-06-05 6.0 -0.14 0.0

The simulated dataset contains daily sales of an item from June 1, 2014 to June 1, 2018.

- The Price column represents percent change in price from the moving average, so it's centered at 0.

- Promotion is a 0-1 indicator for a specific sale or promotion on the item.

Before we run an analysis, we again need to specify a few arguments

prior_length = 21 # Number of days of data used to set prior

k = 1 # Forecast horizon

rho = 0.5 # Random effect discount factor to increase variance of forecast distribution

forecast_samps = 1000 # Number of forecast samples to draw

forecast_start = pd.to_datetime('2018-01-01') # Date to start forecasting

forecast_end = pd.to_datetime('2018-06-01') # Date to stop forecasting

Y = data['Sales'].values

X = data[['Price', 'Promotion']].values

And most importantly, we need to specify that the retail sales will have a seasonality component with a $7-$day period:

seasPeriods=[7]

seasHarmComponents = [[1,2,3]]

mod, samples = analysis(Y, X,

k, forecast_start, forecast_end, nsamps=forecast_samps,

family='poisson',

seasPeriods=seasPeriods,

seasHarmComponents=seasHarmComponents,

prior_length=prior_length,

dates=data.index,

rho=rho,

ret = ['model', 'forecast'])

beginning forecasting

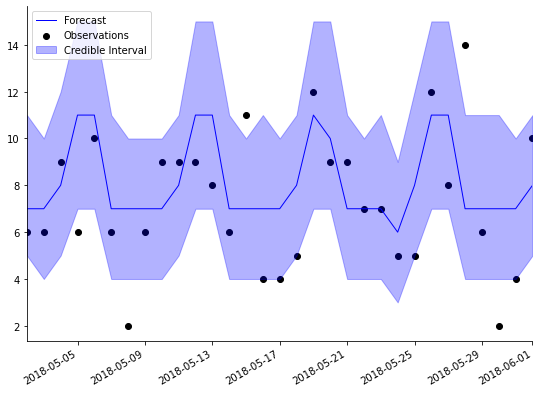

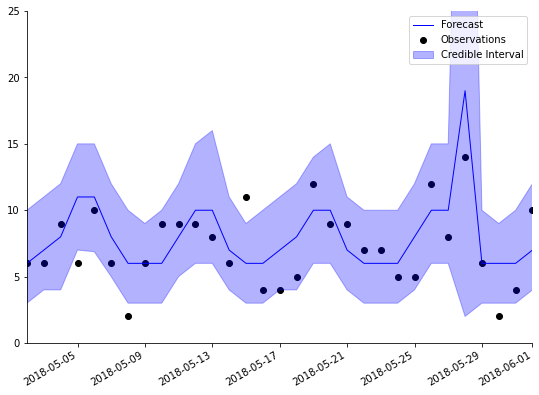

We can visualize the forecasts, and instantly see the pattern in the forecasts coming from the weekly seasonality:

plot_length = 30

data_1step = data.loc[forecast_end-pd.DateOffset(30):forecast_end]

samples_1step = samples[:,-31:,0]

fig, ax = plt.subplots(1,1, figsize=(8, 6))

ax = plot_data_forecast(fig, ax,

data_1step.Sales,

median(samples_1step),

samples_1step,

data_1step.index,

credible_interval=75)

PyBATS provides a method to specify known holidays, special events, and other outliers in the data. analysis will automatically add in an indicator (dummy) variable to the regression component for each occurance of the holiday.

There are two reasons this can be useful. First, adding in a known and repeated special event can improve forecast accuracy. Second, the indicator variable will help protect the other coefficients in the model from overreacting to these outliers in the data.

To demonstrate, let's repeat the analysis of simulated retail sales from above, while including a holiday effect:

holidays = USFederalHolidayCalendar.rules

mod, samples = analysis(Y, X,

k, forecast_start, forecast_end, nsamps=forecast_samps,

family='poisson',

seasPeriods=seasPeriods, seasHarmComponents=seasHarmComponents,

prior_length=prior_length, dates=data.index,

holidays=holidays,

rho=rho,

ret = ['model', 'forecast'])

beginning forecasting

We've just used the standard holidays in the US Federal Calendar, but you can specify your own list of holidays using pandas.tseries.holiday.

holidays

[Holiday: New Years Day (month=1, day=1, observance=<function nearest_workday at 0x7fdc45eda290>),

Holiday: Martin Luther King Jr. Day (month=1, day=1, offset=<DateOffset: weekday=MO(+3)>),

Holiday: Presidents Day (month=2, day=1, offset=<DateOffset: weekday=MO(+3)>),

Holiday: Memorial Day (month=5, day=31, offset=<DateOffset: weekday=MO(-1)>),

Holiday: July 4th (month=7, day=4, observance=<function nearest_workday at 0x7fdc45eda290>),

Holiday: Labor Day (month=9, day=1, offset=<DateOffset: weekday=MO(+1)>),

Holiday: Columbus Day (month=10, day=1, offset=<DateOffset: weekday=MO(+2)>),

Holiday: Veterans Day (month=11, day=11, observance=<function nearest_workday at 0x7fdc45eda290>),

Holiday: Thanksgiving (month=11, day=1, offset=<DateOffset: weekday=TH(+4)>),

Holiday: Christmas (month=12, day=25, observance=<function nearest_workday at 0x7fdc45eda290>)]

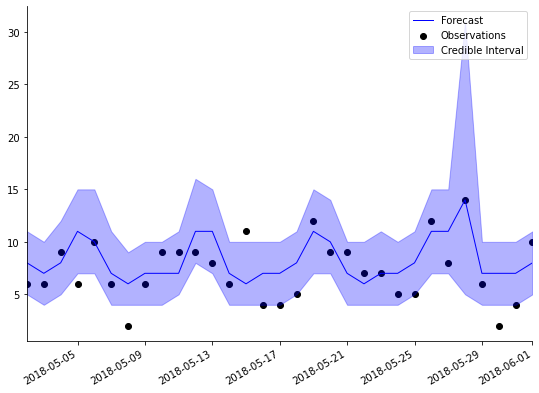

Look again at the plot of forecasts above. A number of the observations fall outside of the

plot_length = 30

data_1step = data.loc[forecast_end-pd.DateOffset(30):forecast_end]

samples_1step = samples[:,-31:,0]

fig, ax = plt.subplots(1,1, figsize=(8, 6))

ax = plot_data_forecast(fig, ax,

data_1step.Sales,

median(samples_1step),

samples_1step,

data_1step.index,

credible_interval=75)

The point forecast (dark blue line) is higher for Memorial Day, and the

Discount factors are model hyperparameters that have to be specified before an analysis begins. They control the rate at which coefficients are allowed to change, and have a very simple interpretation. For a discount factor

There is a trade-off to lowering the discount factors. While it allows the coefficients to change more over time, it will increase the uncertainty in the forecasts, and can make the coefficients too sensitive to noise in the data.

PyBATS has built-in safety measures that prevent the variance from growing too large. This makes the models significantly more robust to lower discount factors. Even so, most discount factors should be between

PyBATS allows discount factors to be set separately for each component, and even for each individual coefficient if desired.

deltrend = 0.98 # Discount factor on the trend component

delregn = 0.98 # Discount factor on the regression component

delseas = 0.98 # Discount factor on the seasonal component

delhol = 1 # Discount factor on the holiday component

rho = .3 # Random effect discount factor to increase variance of forecast distribution

mod, samples = analysis(Y, X,

k, forecast_start, forecast_end, nsamps=forecast_samps,

family='poisson',

seasPeriods=seasPeriods, seasHarmComponents=seasHarmComponents,

prior_length=prior_length, dates=data.index,

holidays=holidays,

rho=rho,

deltrend = deltrend,

delregn=delregn,

delseas=delseas,

delhol=delhol,

ret = ['model', 'forecast'])

beginning forecasting

The default discount factors in PyBATS are fairly high, so in this example we've set them to

We also changed the parameter

plot_length = 30

data_1step = data.loc[forecast_end-pd.DateOffset(30):forecast_end]

samples_1step = samples[:,-31:,0]

fig, ax = plt.subplots(1,1, figsize=(8, 6))

ax = plot_data_forecast(fig, ax,

data_1step.Sales,

median(samples_1step),

samples_1step,

data_1step.index,

credible_interval=75)

ax.set_ylim([0, 25]);

PyBATS has built in more several combinations of DGLMs, which allow for more flexible forecast distributions.

The Dynamic Count Mixture Model (dcmm) is the combination of a Bernoulli and a Poisson DGLM, developed in Berry and West (2019).

The Dynamic Binary Cascade Model (dbcm) is the combination of a DCMM and a binary cascade, developed in Berry, Helman, and West (2020).

The Dynamic Linear Mixture Model (dlmm) is the combination of a Bernoulli DGLM and a normal DLM, developed in Yanchenko, Deng, Li, Cron, and West (2021).

PyBATS also allows for the use of latent factors, which are random variables serving as regression predictors.

In the class latent_factor, the random predictors are described by a mean and a variance, developed in Lavine, Cron, and West (2020).

They can also be integrated into a model through simulation, which is a more precise but slower process, as in Berry and West (2019). The necessary functions are found on the latent_factor_fxns page.

This example continues the analysis of retail sales, and dives into interpretation of model coefficients, especially the weekly seasonal effects. It also demonstrates how to use the PyBATS plotting functions to effectively visualize your forecasts.

This example demonstrates how to use a dcmm for modeling retail sales. A latent factor is derived from aggregate sales, and used to enhance forecasts for an individual item.

This example demonstrates how to use a dbcm for modeling retail sales. A latent factor is derived from aggregate sales, and used to enhance forecasts for an individual item.

PyBATS was developed by Isaac Lavine while working as a PhD student at Duke Statistics, advised by Mike West, and with support from 84.51.

Please feel free to contact me with any questions or comments. You can report any issues through the GitHub page, or reach me directly via email at lavine.isaac@gmail.com.

Contributors: