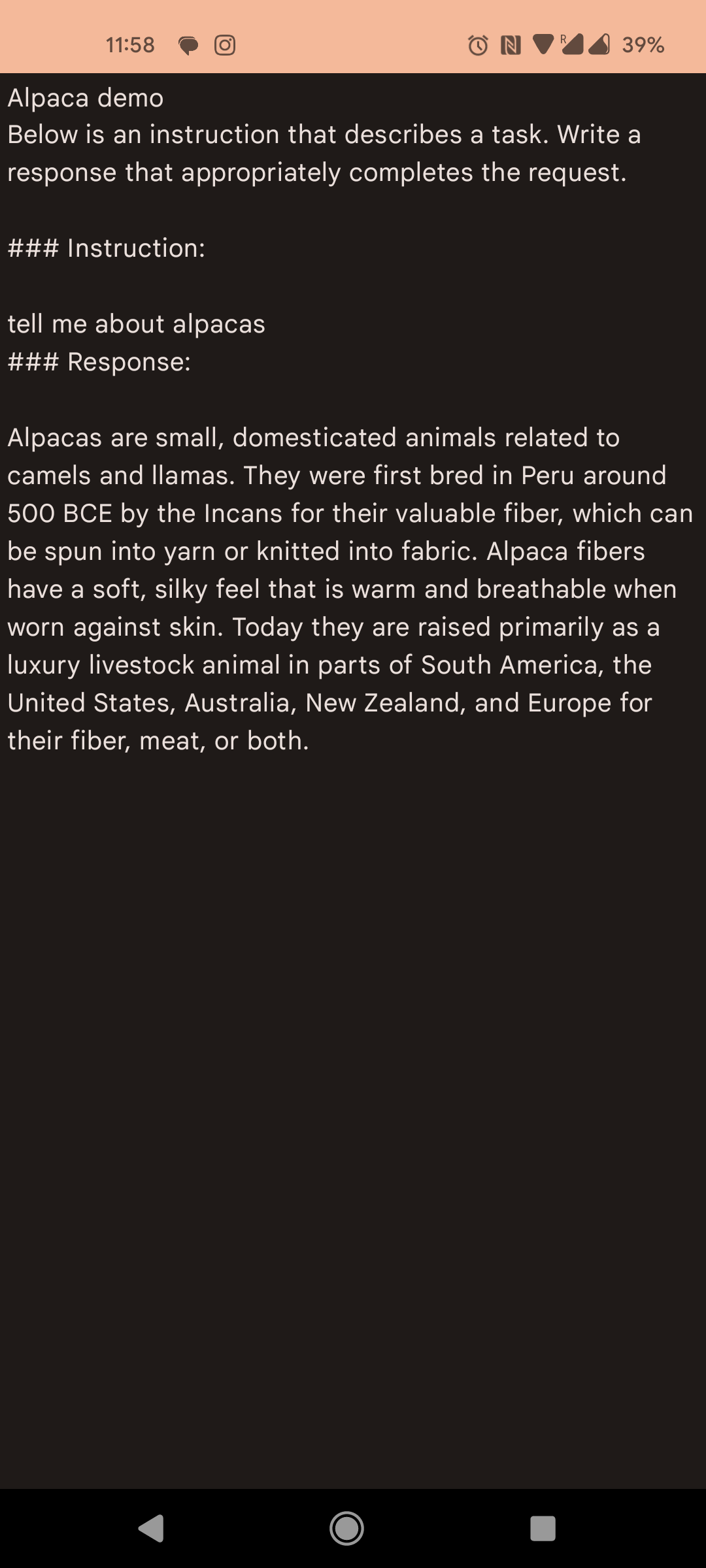

PoC for running Alpaca on-device. It uses a modified version of alpaca.cpp to perform inference.

- A device with >8GB of storage and enough RAM to load the model into memory (>6GB).

- Download ggml-alpaca-7b-q4.bin, rename it to `alpaca7b.bin`, and upload it to the device in the Application Files Directory under `models`.

- Time, since on-device inference is currently incredibly slow.
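The rename step can be scripted on the host before uploading. The following is a minimal sketch, not part of this project: the function name `stage_model` and the local staging directory are hypothetical, and only the file and folder names come from the list above. How the staged folder reaches the device's Application Files Directory is left to whatever transfer tool you use.

```python
from pathlib import Path
import shutil

DOWNLOADED_NAME = "ggml-alpaca-7b-q4.bin"  # name of the downloaded weights
TARGET_DIR = "models"                      # folder name expected on the device
TARGET_NAME = "alpaca7b.bin"               # file name expected on the device


def stage_model(download_dir: Path, staging_dir: Path) -> Path:
    """Move the downloaded weights to <staging_dir>/models/alpaca7b.bin.

    Hypothetical helper: it only prepares the layout locally and does
    not talk to the device.
    """
    src = download_dir / DOWNLOADED_NAME
    if not src.is_file():
        raise FileNotFoundError(f"expected model weights at {src}")
    dst_dir = staging_dir / TARGET_DIR
    dst_dir.mkdir(parents=True, exist_ok=True)
    dst = dst_dir / TARGET_NAME
    # Move instead of copy so the multi-gigabyte file is not duplicated.
    shutil.move(str(src), str(dst))
    return dst


if __name__ == "__main__":
    staged = stage_model(Path.home() / "Downloads", Path("staging"))
    print(f"staged {staged} ({staged.stat().st_size / 1e9:.2f} GB)")
```

After running it, upload the contents of the staging folder so that `models/alpaca7b.bin` ends up in the Application Files Directory.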

- What does GPU accelerated inference look like?

- mmap? How, and does it make a difference?

- Consider using a smaller model to improve speed.
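To make the mmap question concrete, here is a small sketch of the general technique, written in Python for brevity even though alpaca.cpp itself is C++. It is not how this project loads the model; `read_all` and `peek_mapped` are illustrative names. The first function copies the whole file into memory, while the second maps it read-only and touches only a slice, letting the OS page data in on demand. Whether this helps on-device is exactly the open question.

```python
import mmap
from pathlib import Path


def read_all(path: Path) -> bytes:
    """Baseline: copy the entire file into process memory."""
    return path.read_bytes()


def peek_mapped(path: Path, offset: int, length: int) -> bytes:
    """Map the file read-only and copy out only the requested slice.

    Pages are loaded on demand, so regions that are never touched
    need not be read from storage.
    """
    with path.open("rb") as f:
        # Length 0 maps the whole file; an empty file raises ValueError.
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            return bytes(mm[offset:offset + length])


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        sample = Path(tmp) / "weights.bin"
        sample.write_bytes(bytes(range(256)) * 4096)  # 1 MiB stand-in file
        # Both approaches return the same bytes for the same range.
        print(peek_mapped(sample, 16, 8) == read_all(sample)[16:24])
```

A fair comparison would measure load time and resident memory for both approaches on the target device, with a cold file cache.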