This repository contains the source code and the dataset for the EMNLP 2021 paper "Detecting Speaker Personas from Conversational Texts" by Jia-Chen Gu, Zhen-Hua Ling, Yu Wu, Quan Liu, Zhigang Chen, and Xiaodan Zhu.

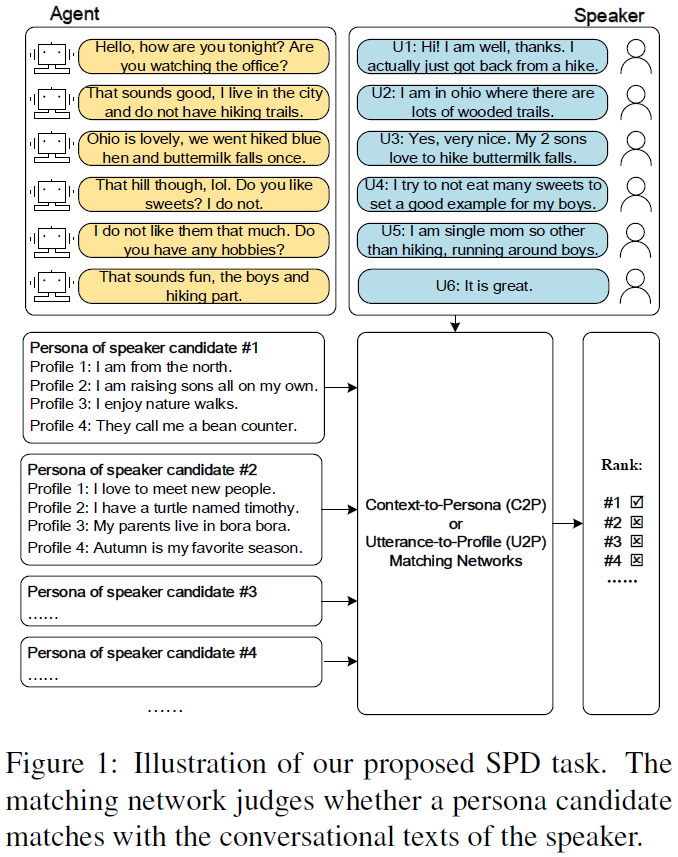

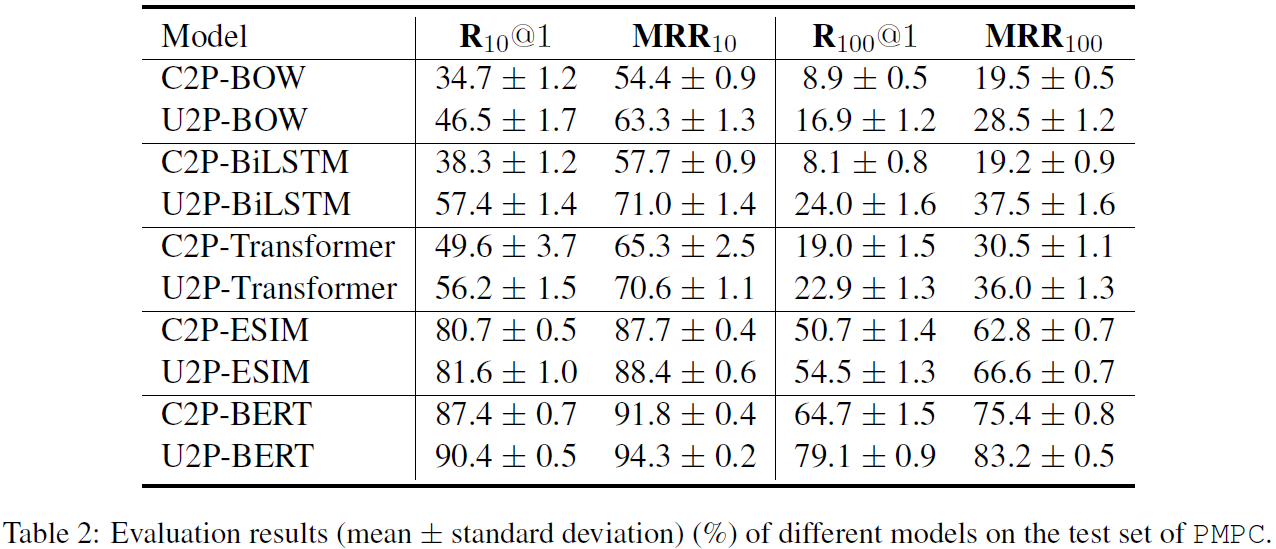

Personas are useful for dialogue response prediction. However, the personas used in current studies are pre-defined and hard to obtain before a conversation. To tackle this issue, we study a new task, named Speaker Persona Detection (SPD), which aims to detect speaker personas based on the plain conversational text. In this task, the best-matched persona is selected from a set of candidates given the conversational text. This is a many-to-many semantic matching task because both contexts and personas in SPD are composed of multiple sentences. The long-term dependency and the dynamic redundancy among these sentences increase the difficulty of this task. We build a dataset for SPD, dubbed Persona Match on Persona-Chat (PMPC). Furthermore, we evaluate several baseline models and propose utterance-to-profile (U2P) matching networks for this task. The U2P models operate at a fine granularity, treating both contexts and personas as sets of multiple sequences. Each sequence pair is then scored, and an interpretable overall score for a context-persona pair is obtained through aggregation. Evaluation results show that the U2P models significantly outperform their baseline counterparts.
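The utterance-to-profile idea described above (score each sequence pair, then aggregate) can be illustrated with a minimal sketch. This is not the model in this repository: the pair scorer below is a toy token-overlap function standing in for a learned matcher, and the aggregation (max over profile sentences, then mean over utterances) is an assumption chosen for illustration. The names `token_overlap`, `u2p_score`, and `best_persona` are hypothetical.

```python
from typing import Callable, List


def token_overlap(utterance: str, profile_sentence: str) -> float:
    """Toy pair scorer: Jaccard overlap of lowercase tokens.

    Hypothetical stand-in for a learned utterance-profile matcher.
    """
    a = set(utterance.lower().split())
    b = set(profile_sentence.lower().split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def u2p_score(
    context: List[str],
    persona: List[str],
    pair_scorer: Callable[[str, str], float] = token_overlap,
) -> float:
    """Score every utterance-profile pair, then aggregate into one score.

    Assumed aggregation: for each utterance keep its best-matching profile
    sentence (max), then average those values over all utterances (mean).
    """
    if not context or not persona:
        return 0.0
    per_utterance = [max(pair_scorer(u, p) for p in persona) for u in context]
    return sum(per_utterance) / len(per_utterance)


def best_persona(context: List[str], candidates: List[List[str]]) -> int:
    """Return the index of the candidate persona with the highest score."""
    scores = [u2p_score(context, persona) for persona in candidates]
    return max(range(len(candidates)), key=scores.__getitem__)


# Made-up example data, not taken from PMPC.
context = ["i just got back from walking my dog", "i love hiking on weekends"]
candidates = [
    ["i have a dog", "i like hiking"],
    ["i am a chef", "i enjoy cooking pasta"],
]
best = best_persona(context, candidates)
print("best-matched persona index:", best)
```

Because the per-pair scores are kept before aggregation, one can inspect which profile sentence each utterance matched, which is the sense in which such an overall score is interpretable.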

Python 3.6

Tensorflow 1.13.1

- Download the BERT model released by Google Research and move it to: ./Pretraining-Based/uncased_L-12_H-768_A-12

- Download the PMPC dataset used in our paper and move it to: ./data_PMPC

Train a new model.

cd Non-Pretraining-Based/C2P-X/scripts/

bash train.sh

The training process is recorded in the log_train_*.txt file.

Test a trained model by modifying the variable latest_checkpoint in test.sh.

cd Non-Pretraining-Based/C2P-X/scripts/

bash test.sh

The testing process is recorded in the log_test_*.txt file. An output_test.txt file, which records the score for each context-persona pair, will be saved to the path of latest_checkpoint. Modify the variable test_out_filename in compute_metrics.py, then run the following command to display the metrics.

python compute_metrics.py
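For readers who want a feel for how ranking metrics can be derived from per-pair scores, here is a minimal sketch. It does not reproduce compute_metrics.py: the layout of output_test.txt and the exact metrics reported are not described here, so the sketch assumes the scores are already grouped per context with a known positive candidate, and it uses recall at k (R@k) and mean reciprocal rank (MRR) as commonly used ranking metrics. The function names and the scores are made up.

```python
from typing import Dict, List, Sequence, Tuple


def rank_of_positive(scores: List[float], positive_index: int) -> int:
    """1-based rank of the positive candidate under descending score.

    Ties are counted against the positive candidate (pessimistic rank).
    """
    target = scores[positive_index]
    higher_or_equal = sum(
        1 for i, s in enumerate(scores) if i != positive_index and s >= target
    )
    return 1 + higher_or_equal


def ranking_metrics(
    groups: List[Tuple[List[float], int]], ks: Sequence[int] = (1, 2, 5)
) -> Dict[str, float]:
    """Compute R@k and MRR over groups of (candidate scores, positive index)."""
    if not groups:
        raise ValueError("at least one group of scores is required")
    ranks = [rank_of_positive(scores, pos) for scores, pos in groups]
    n = len(ranks)
    metrics = {f"R@{k}": sum(r <= k for r in ranks) / n for k in ks}
    metrics["MRR"] = sum(1.0 / r for r in ranks) / n
    return metrics


# Made-up scores: three contexts, three candidate personas each.
groups = [
    ([0.9, 0.1, 0.3], 0),  # positive ranked 1st
    ([0.2, 0.8, 0.5], 2),  # positive ranked 2nd
    ([0.4, 0.6, 0.7], 0),  # positive ranked 3rd
]
metrics = ranking_metrics(groups)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```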

You can choose a baseline model by commenting or uncommenting a model package (model_BOW, model_BiLSTM, model_Transformer, or model_ESIM) in the first several lines of train.py. The same process and commands apply to the Non-Pretraining-Based U2P-X models.

Create the fine-tuning data.

cd Pretraining-Based/C2P-BERT/

python data_process_tfrecord.py

Run the fine-tuning process.

cd Pretraining-Based/C2P-BERT/scripts/

bash train.sh

Test a trained model by modifying the variable restore_model_dir in test.sh.

cd Pretraining-Based/C2P-BERT/scripts/

bash test.sh

Modify the variable test_out_filename in compute_metrics.py, then run the following command to display the metrics.

python compute_metrics.py

The same process and commands apply to U2P-BERT.

NOTE: Since the dataset is small, each model was trained 10 times with identical architectures and different random initializations. Thus, we report the mean ± standard deviation in our paper.
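To aggregate your own repeated runs in the same way, a minimal sketch follows. The scores are made-up placeholders, not results from the paper, and the function name `summarize_runs` is hypothetical. The sketch uses the sample standard deviation (n - 1 in the denominator); whether the paper uses the sample or the population form is not stated here, so treat that choice as an assumption.

```python
import statistics
from typing import List, Tuple


def summarize_runs(run_scores: List[float]) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) over repeated training runs."""
    if len(run_scores) < 2:
        raise ValueError("need at least two runs to estimate a deviation")
    mean = statistics.mean(run_scores)
    # Assumption: sample standard deviation; use statistics.pstdev for the
    # population form instead.
    std = statistics.stdev(run_scores)
    return mean, std


# Placeholder metric values for 10 runs with different random seeds.
runs = [60.0, 62.0, 61.0, 59.0, 63.0, 60.0, 61.0, 62.0, 60.0, 62.0]
mean, std = summarize_runs(runs)
print(f"{mean:.2f} \u00b1 {std:.2f}")
```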

If you think our work is helpful, or use the code or dataset, please cite the following paper: "Detecting Speaker Personas from Conversational Texts" Jia-Chen Gu, Zhen-Hua Ling, Yu Wu, Quan Liu, Zhigang Chen, Xiaodan Zhu. EMNLP (2021)

@inproceedings{gu-etal-2021-detecting,

title = "Detecting Speaker Personas from Conversational Texts",

author = "Gu, Jia-Chen and

Ling, Zhenhua and

Wu, Yu and

Liu, Quan and

Chen, Zhigang and

Zhu, Xiaodan",

booktitle = "Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing",

month = nov,

year = "2021",

address = "Online and Punta Cana, Dominican Republic",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.emnlp-main.86",

pages = "1126--1136",

}

Please keep an eye on this repository if you are interested in our work. Feel free to contact us (gujc@mail.ustc.edu.cn) or open issues.