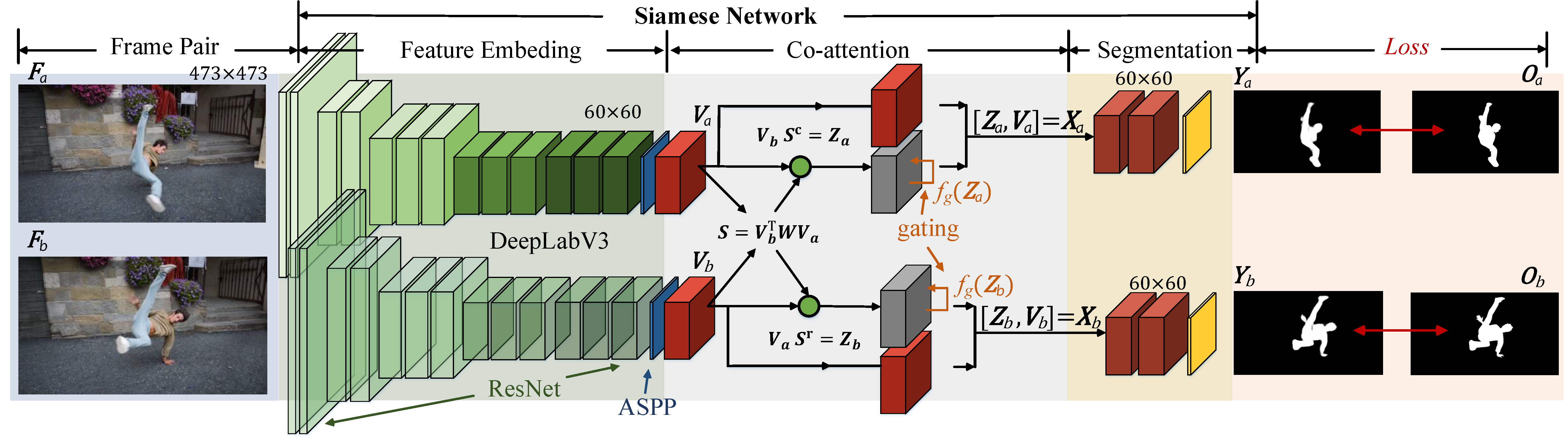

Code for the CVPR 2019 paper: See More, Know More: Unsupervised Video Object Segmentation with Co-Attention Siamese Networks. Paper

### The pre-trained model and testing code:

- Install PyTorch (version 1.0.1).

- Download the pretrained model. In `test_coattention_conf.py`, change the DAVIS dataset path, pretrained model path and result path.

- Run command: `python test_coattention_conf.py --dataset davis --gpus 0`
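The steps above can be sanity-checked before launching the test script. The following is a minimal sketch, not part of the repository: the helper names (`torch_version_matches`, `missing_paths`, `build_test_command`) and the placeholder paths are hypothetical, and it assumes the dataset and model paths have already been edited inside `test_coattention_conf.py` as described. It only uses the `--dataset` and `--gpus` flags shown in the command above.

```python
import sys
from pathlib import Path

EXPECTED_TORCH = "1.0.1"  # version named in the install step


def torch_version_matches(installed, expected=EXPECTED_TORCH):
    """Compare the first three release components, ignoring suffixes
    such as '+cu100' or '.post2'."""
    release = installed.split("+")[0].split(".")[:3]
    return release == expected.split(".")


def missing_paths(paths):
    """Return the labels of entries whose path does not exist on disk."""
    return [label for label, p in paths.items() if not Path(p).exists()]


def build_test_command(dataset="davis", gpus="0"):
    """Assemble the documented test command as an argument list.
    sys.executable stands in for 'python' so the current interpreter is used."""
    return [sys.executable, "test_coattention_conf.py",
            "--dataset", dataset, "--gpus", gpus]


if __name__ == "__main__":
    # Placeholder locations (hypothetical): replace with your own paths.
    paths = {
        "DAVIS dataset": "/path/to/DAVIS",
        "pretrained model": "/path/to/pretrained_model.pth",
    }
    for label in missing_paths(paths):
        print(f"Missing: {label}")
    print("Command:", " ".join(build_test_command()))
```

To check the installed version, pass `torch.__version__` to `torch_version_matches`. The argument list from `build_test_command()` can be handed to `subprocess.run` once the paths exist.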

The pretrained weights can be downloaded from Google Drive.

The segmentation results on DAVIS, FBMS and YouTube-Objects can be downloaded from Google Drive.

If you find the code and dataset useful in your research, please consider citing:

    @InProceedings{Lu_2019_CVPR,
      author = {Lu, Xiankai and Wang, Wenguan and Ma, Chao and Shen, Jianbing and Shao, Ling and Porikli, Fatih},
      title = {See More, Know More: Unsupervised Video Object Segmentation With Co-Attention Siamese Networks},
      booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
      year = {2019}
    }