hep-analysis aims to reproduce the traditional data-analysis workflow in high-energy physics using Python. This allows us to employ the wide range of modern, flexible packages for machine learning and statistical analysis written in Python to increase the sensitivity of our analysis.

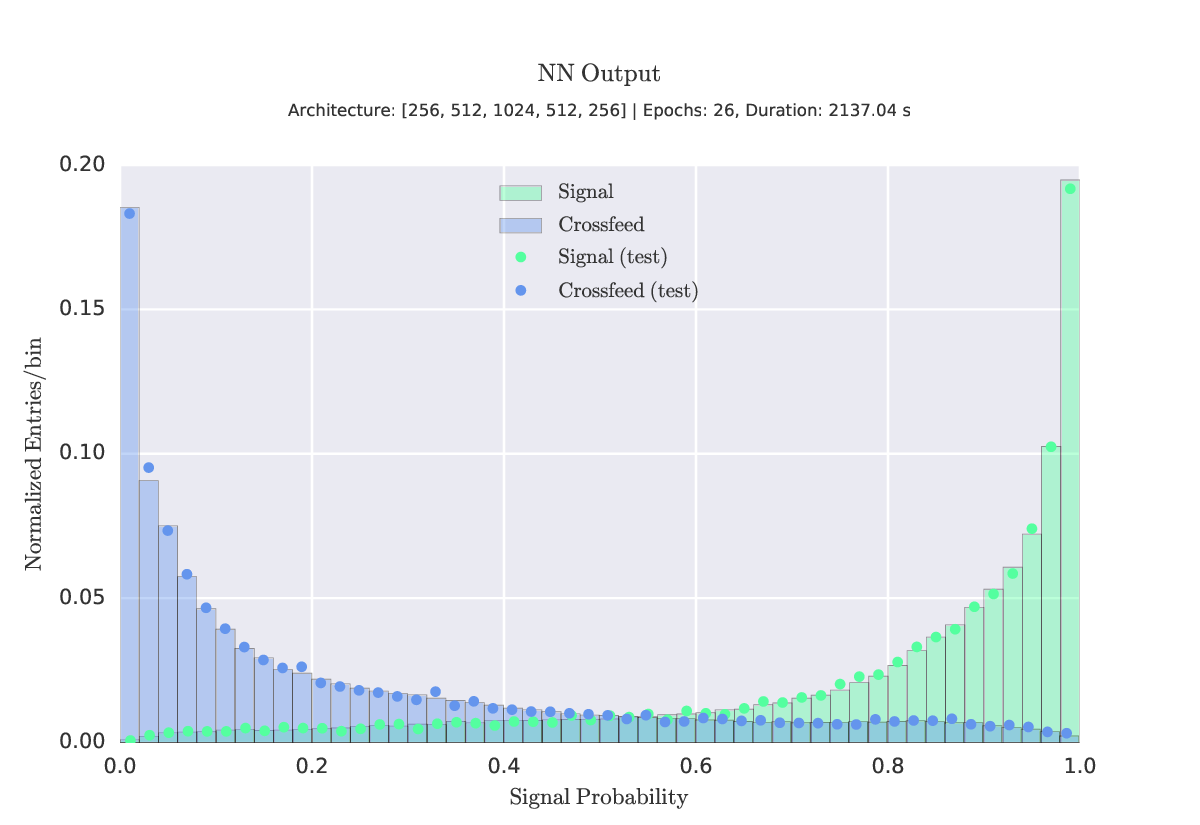

- Deep Neural Networks - TensorFlow, PyTorch

- Recurrent Neural Networks - TensorFlow

- Gradient Boosted Trees - XGBoost, LightGBM

- Hyperparameter Optimization - scikit-learn, Hyperband

- Multi-GPU

- Distributed Training

- Smart Model Ensembles

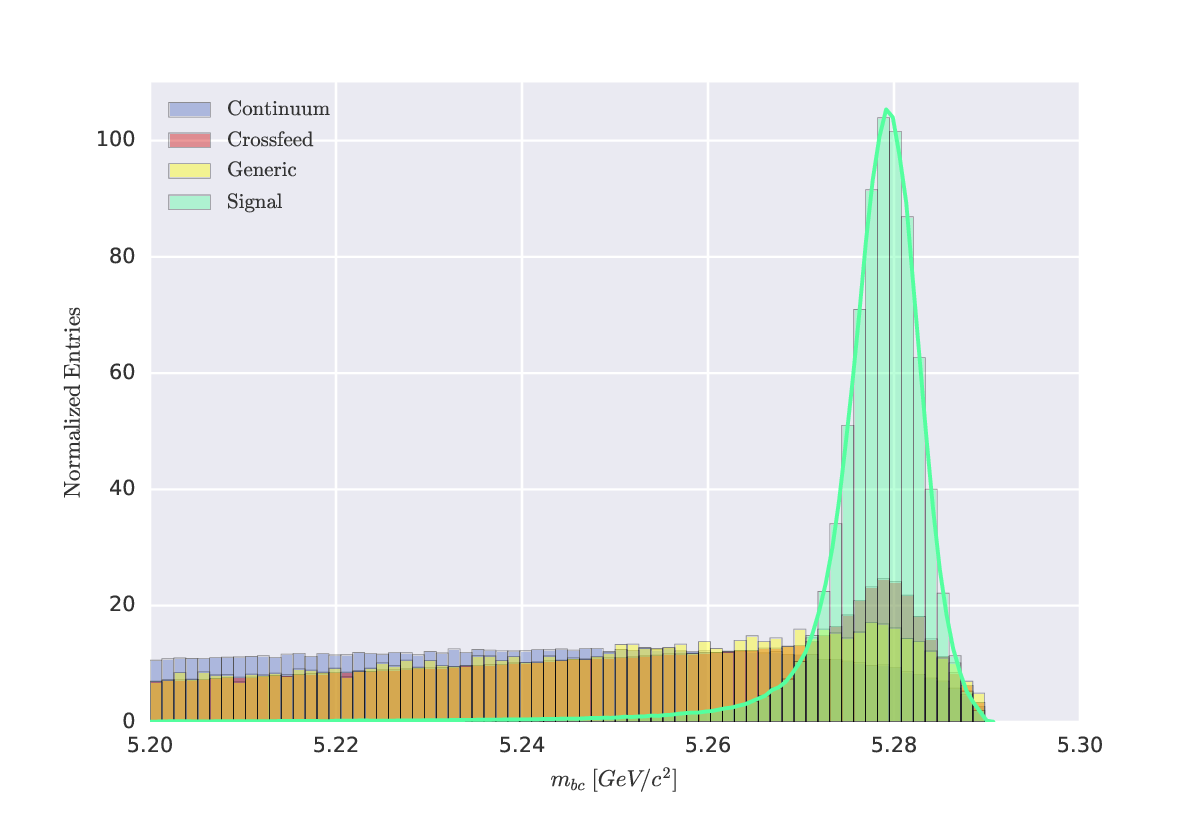

- Signal Yield Extraction

- Maximum Likelihood

- Bayesian Approach

- Precision Branching Fraction / CP Asymmetry Measurements
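As a rough illustration of signal yield extraction by maximum likelihood, the sketch below fits signal and background yields to a toy one-dimensional dataset. It is not the code used in this repo: the observable, the fit range, the fixed Gaussian signal shape, the flat background, and every name (`fit_yields`, `nll`, `MU`, `SIGMA`) are assumptions made for the example. It relies on the fact that, for fixed shapes, the extended likelihood is maximised when the two yields sum to the number of observed events, so only the signal fraction needs to be iterated.

```python
import math
import random

LO, HI = 0.0, 10.0      # fit range of the toy observable (assumed)
MU, SIGMA = 5.0, 0.5    # fixed signal shape parameters (assumed)


def signal_pdf(x):
    """Gaussian signal shape; its tails outside [LO, HI] are negligible here."""
    return math.exp(-0.5 * ((x - MU) / SIGMA) ** 2) / (SIGMA * math.sqrt(2.0 * math.pi))


def background_pdf(x):
    """Flat background shape, normalised on [LO, HI]."""
    return 1.0 / (HI - LO)


def nll(n_sig, n_bkg, data):
    """Extended negative log-likelihood (constant terms dropped)."""
    return (n_sig + n_bkg) - sum(
        math.log(n_sig * signal_pdf(x) + n_bkg * background_pdf(x)) for x in data
    )


def fit_yields(data, n_iter=200):
    """Estimate (n_sig, n_bkg) with EM-style fixed-point updates of the signal fraction."""
    s = [signal_pdf(x) for x in data]
    b = [background_pdf(x) for x in data]
    n = len(data)
    frac = 0.5  # starting guess for the signal fraction
    for _ in range(n_iter):
        # Per-event probability of being signal, given the current fraction
        weights = [frac * si / (frac * si + (1.0 - frac) * bi) for si, bi in zip(s, b)]
        frac = sum(weights) / n
    return frac * n, (1.0 - frac) * n


# Toy dataset: 200 signal-like and 800 background-like events
random.seed(1)
toy = [random.gauss(MU, SIGMA) for _ in range(200)]
toy += [random.uniform(LO, HI) for _ in range(800)]

n_sig, n_bkg = fit_yields(toy)
print(f"fitted signal yield: {n_sig:.1f}, background yield: {n_bkg:.1f}")
```

A real analysis would typically float the shape parameters as well and use a dedicated minimiser to obtain uncertainties; the fixed-point update is used here only to keep the example dependency-free.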

To view the notebooks available in this repo, visit http://nbviewer.jupyter.org/github/Justin-Tan/hep-analysis/tree/master/notebooks and navigate to the relevant section.

Under development.

$ git clone https://github.com/Justin-Tan/hep-analysis.git

$ cd hep-analysis/path/to/project

# Check command line arguments

$ python3 main.py -h

# Run

$ python3 main.py <args>
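The `-h` flag above prints the options a given `main.py` accepts. As a minimal sketch of how such an entry point is commonly put together, the example below uses the standard-library `argparse` module. Whether the scripts in this repo use `argparse` is an assumption, and the option names (`--epochs`, `--learning-rate`, `--gpus`) are hypothetical, chosen only for illustration.

```python
import argparse


def build_parser():
    """Build a parser with hypothetical options; the real scripts may differ."""
    parser = argparse.ArgumentParser(
        description="Train a classifier (illustrative sketch only)"
    )
    # All options below are hypothetical and not taken from the repository
    parser.add_argument("--epochs", type=int, default=10,
                        help="number of training epochs")
    parser.add_argument("--learning-rate", type=float, default=1e-3,
                        help="optimizer step size")
    parser.add_argument("--gpus", type=int, default=1,
                        help="number of GPUs to train on")
    return parser


# Parse an explicit argument list so the example does not depend on sys.argv
args = build_parser().parse_args(["--epochs", "5"])
print(args.epochs, args.learning_rate, args.gpus)
```

Calling `build_parser().parse_args(["-h"])` would print the generated help text and exit, which is the behaviour the `-h` step relies on.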

The easiest way to install most of the required packages is through an Anaconda environment. Download the installer (use wget if working on a remote machine) and run it. We recommend creating a separate environment before installing the necessary dependencies.

$ conda create -n hep-ml python=3.6 anaconda

Install the binaries from here.

Installation instructions. To install in your home directory:

$ pip install --user root_numpy

Installation instructions. We recommend building from source for better performance. If GPU acceleration is required, install the CUDA toolkit and cuDNN. Note: As of TF 1.2.0, your code must be modified explicitly for multi-GPU support. See the multi-GPU implementations for sample usage (tested on a Slurm cluster, but should be fully general).

# using Anaconda

$ conda install pytorch torchvision -c soumith

Clone the repo as detailed here
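After working through the installation steps, it can be useful to confirm which packages are visible from the active environment. The sketch below does this with the standard-library `importlib`. The import names in `PACKAGES` are assumptions inferred from the packages named in this README (for example, scikit-learn is imported as `sklearn` and PyTorch as `torch`), and `check_packages` is a hypothetical helper, not part of the repo.

```python
import importlib.util

# Import names assumed from the packages mentioned in this README
PACKAGES = ["tensorflow", "torch", "xgboost", "lightgbm", "sklearn", "root_numpy"]


def check_packages(names):
    """Return a dict mapping each module name to whether it can be located."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


# Report the status of each package without importing it
for name, found in check_packages(PACKAGES).items():
    print(f"{name:12s} {'ok' if found else 'MISSING'}")
```

Run it inside the environment created above (for instance after activating `hep-ml`) so that the check reflects the interpreter the analysis scripts will use.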

- TensorFlow. Open-source deep learning framework.

- PyTorch. Dynamic graph construction.

- XGBoost. Extreme gradient boosting classifier. Fast.

- root_numpy. Bridge between ROOT and Python.

- Hyperband. Bandit-based approach to hyperparameter optimization.
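To give a feel for the bandit-based idea behind Hyperband, the sketch below implements successive halving, the subroutine that Hyperband runs repeatedly with different trade-offs between the number of configurations and the budget given to each. It is a simplified illustration, not the implementation used in this repo: the full algorithm loops over several such brackets, and the function names, the `learning_rate` key, and the deterministic `toy_loss` are all assumptions standing in for a real training run.

```python
import math
import random


def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Repeatedly keep the best 1/eta of the configurations.

    evaluate(config, budget) must return a loss (lower is better).
    Survivors of each round are re-evaluated with eta times more budget.
    """
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        survivors = ranked[:max(1, len(ranked) // eta)]
        budget *= eta
    return survivors[0]


def toy_loss(config, budget):
    """Hypothetical stand-in for a validation loss after `budget` epochs.

    Best at learning_rate = 1e-2 and improving with budget. A real
    evaluation would be noisy; this one is deterministic for clarity.
    """
    return (math.log10(config["learning_rate"]) + 2.0) ** 2 + 1.0 / budget


# 27 random configurations: rounds keep 27 -> 9 -> 3 -> 1
random.seed(0)
configs = [{"learning_rate": 10 ** random.uniform(-5, 0)} for _ in range(27)]

best = successive_halving(configs, toy_loss)
print("selected learning rate:", best["learning_rate"])
```

The appeal of this scheme is that poor configurations are discarded after only a small budget, so most of the compute goes to the promising ones.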