| Student | Ruslan Popov |
|---|---|
| Organization | JabRef e.V. |
| Primary repository | JabRef/jabref |
| Project name | AI-Powered Summarization and “Interaction” with Academic Papers |
| Project mentors | @koppor, @ThiloteE, @Calixtus |
| Project page | Google Summer of Code Project Page |
| Status | Complete |

During my Google Summer of Code (GSoC) project, I worked on enhancing JabRef with AI features that assist researchers in their work. Given the current popularity and improved usability of AI technologies, my mentors and I aimed to integrate Large Language Models (LLMs) that analyze research papers.

To achieve this, the project introduced three core AI features:

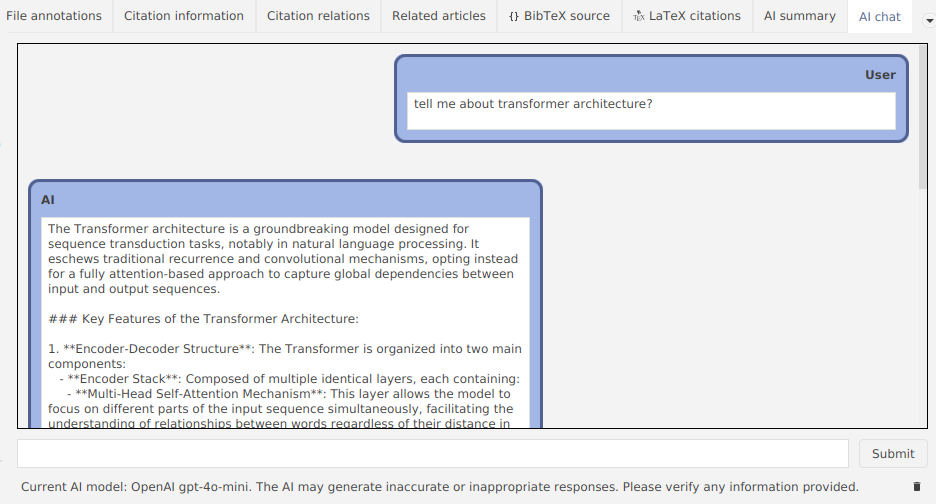

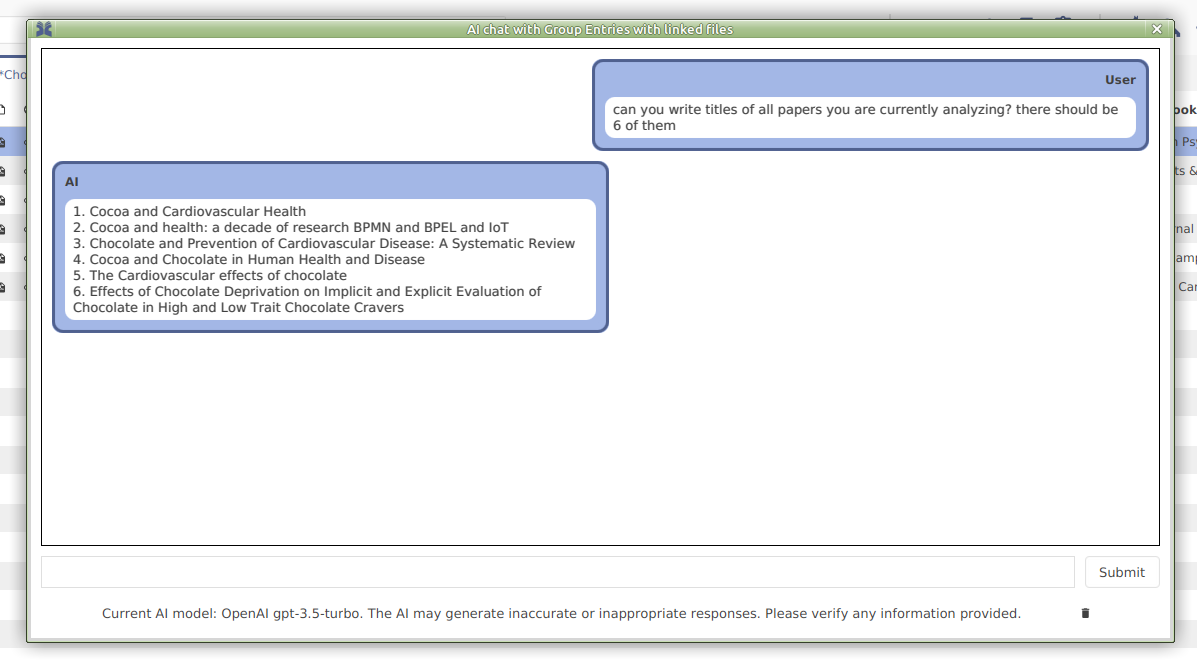

- Chatting with Library Entries. This feature allows users to interact with their library entries through a chat interface. Users can ask questions about specific papers, and the AI will provide relevant answers, making it easier to find and process information without manually searching through the text.

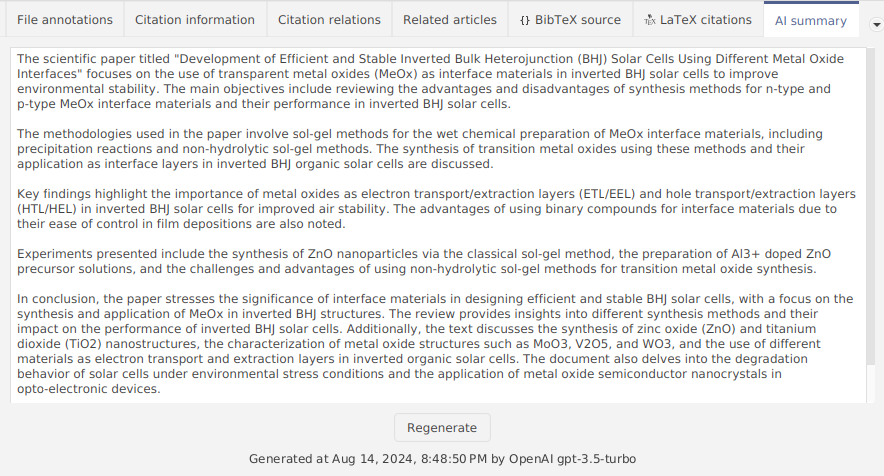

- Generating Summaries. The summarization feature provides a concise overview of research papers. This helps researchers quickly understand the content of a paper before deciding whether to read it in full, saving time and effort.

- Supporting Multiple AI Providers. To ensure flexibility and robustness, I integrated support for multiple AI providers. This allows users to choose the AI model that best suits their needs and preferences.

The implementation was done primarily in Java with JavaFX, using the LangChain4j library (a Java counterpart to the Python-based LangChain). The embedding model part was done with the Java-based Deep Java Library (DJL).

The AI features developed in this project have the potential to significantly improve the workflow of researchers by providing them with an AI assistant capable of answering questions and generating summaries. This not only saves time but also enhances the overall research process.

There were many challenges during the development of this project, and I gained a lot of skills and knowledge by overcoming them:

- LangChain4j was not easily usable in JabRef development because JabRef makes use of the JDK's modularization features. The 0.33 release of LangChain4j solved the problem. Nevertheless, I learned a lot about Java build systems while we were trying to develop JabRef with older versions of LangChain4j.

- At the beginning, it was not possible to debug JabRef on Windows, because the command line generated by Gradle grew too large. This issue is related to a previous one with split packages. Patching modules introduced a lot of CLI arguments, so Windows could not handle them properly. Moreover, the dependency on LangChain4j introduced new modules (which were added to the command line, too). Handling of long command lines with Gradle was "fixed" by gradle-modules-plugin#282.

- In some cases, our application could not shut down, because LangChain4j was running some threads that were not properly closed. That forced us to dive deep into its source code and use `jvm-openai` as the underlying OpenAI client.

- In the end, we also found out that the application size with LangChain4j was very big. The cause was the ONNX library used by LangChain4j: it shipped all possible implementations for various platforms in one package. It also included the debugging symbols for the Windows implementation (a `.pdb` file that weighed 200 MB!). To solve this problem, we partially switched to the `djl` library.

- On the frontend side, there is a long-standing issue in JavaFX that the text of labels should be selectable/copyable. This severely affects how we develop the chat UI: on the one hand, users need a comfortable UI (which can be built with a `Label` or `Text` element), but on the other hand, users will want a copy feature (which is only available in `TextField` or `TextArea` components). We still have many issues with the chat UI and with scrolling down the messages.

All those issues were unexpected at the beginning of the project, and they caused headaches for both me and my mentors. The time lost on these unexpected Java ecosystem issues was time we could not spend on JabRef itself.

Initially, understanding the existing code base and learning best practices was challenging. However, with time and guidance from mentors, I became more proficient. Integrating AI features into the existing application required overcoming numerous bugs and architectural challenges, but most issues were successfully resolved!

Throughout the development process, I gained valuable insights into software engineering. I identified and fixed several small bugs in the original JabRef codebase and discovered several issues in other libraries. These experiences have significantly enhanced my problem-solving skills and, especially, my understanding of integrating AI into software applications.

- We have implemented an interface that allows chatting with an LLM.

- The LLM uses context from the linked files.

- Chat history is persisted on disk.

- Users can delete messages.

- Users can copy messages.

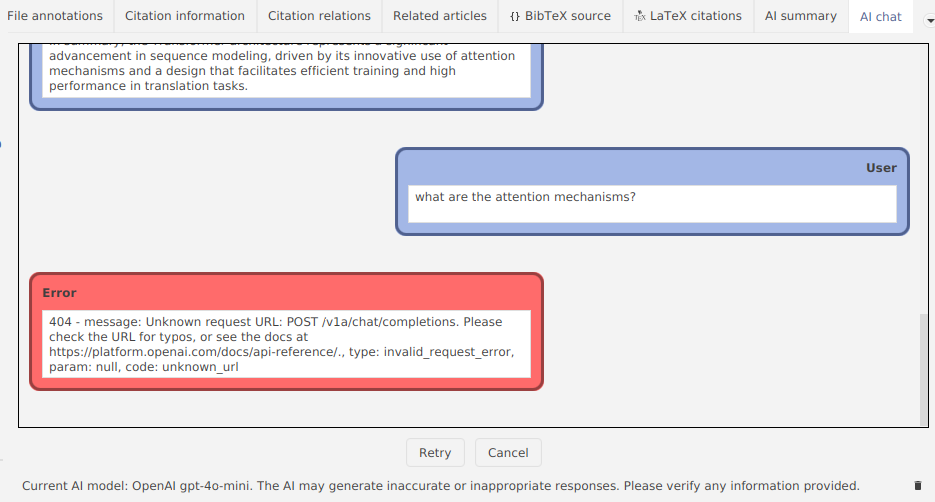

- Errors from the LLM are handled gracefully (retry generation, cancel generation).

- The summary is generated in the background.

- Our algorithm can handle both short and long documents.

- We support models with various context windows.

- JabRef records the time and the model used to generate the summary.

- The summary can be regenerated.
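One common way to summarize documents that exceed a model's context window is to split the text, summarize each part, and then summarize the combined partial summaries. The sketch below illustrates that general idea only; it is an assumption, not necessarily the algorithm JabRef uses. `ChunkedSummarizer`, the `summarize` function (a stand-in for an LLM call), and the character-based `maxChars` limit (a crude stand-in for a token-based context window) are all hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

// Hypothetical sketch: not JabRef's actual summarization code.
final class ChunkedSummarizer {
    // Stand-in for a call to an LLM that returns a summary of its input.
    private final UnaryOperator<String> summarize;
    // Crude stand-in for the model's context window (real models count tokens).
    private final int maxChars;

    ChunkedSummarizer(UnaryOperator<String> summarize, int maxChars) {
        if (maxChars <= 0) {
            throw new IllegalArgumentException("maxChars must be positive");
        }
        this.summarize = summarize;
        this.maxChars = maxChars;
    }

    String summarizeDocument(String text) {
        // Short document: it fits into a single request.
        if (text.length() <= maxChars) {
            return summarize.apply(text);
        }
        // Long document: summarize each chunk separately.
        List<String> partialSummaries = new ArrayList<>();
        for (int start = 0; start < text.length(); start += maxChars) {
            int end = Math.min(text.length(), start + maxChars);
            partialSummaries.add(summarize.apply(text.substring(start, end)));
        }
        String combined = String.join("\n", partialSummaries);
        // Guard against endless recursion if summarizing did not shrink the text.
        if (combined.length() >= text.length()) {
            return combined;
        }
        // The combined summaries may still be too long, so repeat the procedure.
        return summarizeDocument(combined);
    }

    public static void main(String[] args) {
        // Toy "summarizer" that keeps only the first 20 characters of its input.
        ChunkedSummarizer summarizer =
                new ChunkedSummarizer(s -> s.substring(0, Math.min(20, s.length())), 100);
        System.out.println(summarizer.summarizeDocument("lorem ipsum ".repeat(50)));
    }
}
```

Because the limit is a constructor argument, the same procedure adapts to models with smaller or larger context windows.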

- In order to chat with an entry, the embeddings of the file will be generated locally.

- Embeddings are generated in the background.

- We also calculate the estimated time left for the result.
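A simple way to estimate the remaining time of a background job is to extrapolate from the average time per processed item. The following sketch shows that calculation; the class and method names are hypothetical, and JabRef's actual estimate may be computed differently.

```java
import java.time.Duration;

// Hypothetical sketch: linear extrapolation of the remaining time.
final class ProgressEstimator {
    static Duration estimateTimeLeft(Duration elapsed, int processed, int total) {
        if (processed <= 0 || processed > total) {
            throw new IllegalArgumentException("need 0 < processed <= total");
        }
        // Average time spent per processed item so far.
        long nanosPerItem = elapsed.toNanos() / processed;
        // Assume the remaining items take as long on average.
        return Duration.ofNanos(nanosPerItem * (total - processed));
    }

    public static void main(String[] args) {
        // Example: 2 of 5 files were embedded after 10 seconds.
        System.out.println(estimateTimeLeft(Duration.ofSeconds(10), 2, 5));
    }
}
```

In the example, 5 seconds per file and 3 files left give an estimate of 15 seconds. The estimate is only as good as the assumption that the remaining files resemble the processed ones.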

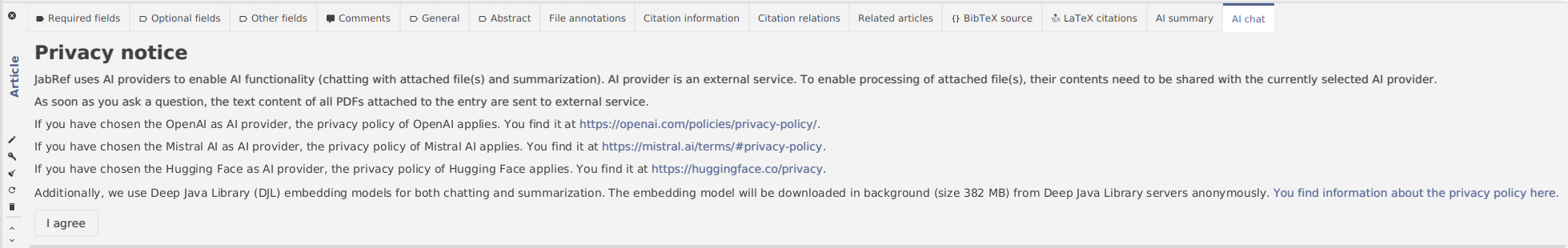

- The notice will explain to users how the LLM features work and how their data is processed by JabRef and an AI provider.

- Users can follow the links to the privacy policies of each AI provider for more details.

- AI features in JabRef are not mandatory and by default they are turned off (user data is private in this case).

- AI features are enabled when the user clicks "I agree".

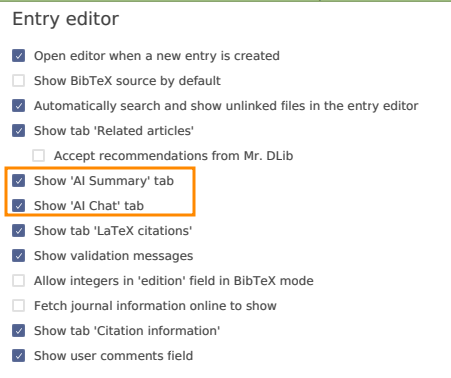

- "AI chat" and "AI summary" tabs can be hidden in the entry editor.

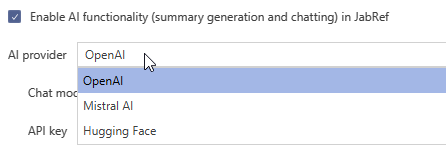

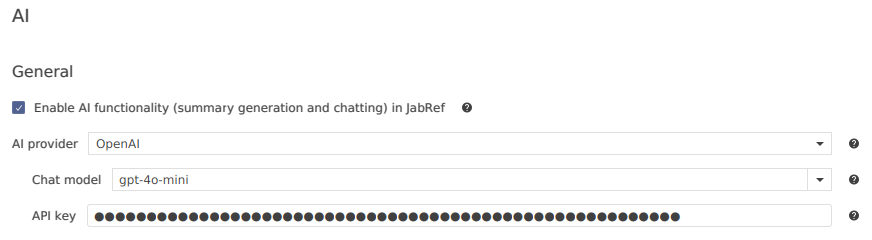

- Users can choose the AI provider they like the most: OpenAI, Mistral AI, Hugging Face.

- Based on the selected AI provider, JabRef will show available chat models.

- For Hugging Face, there is no supplied list of models (because there are too many); instead, users can enter the model name manually.

- The API token is stored securely in the Java keyring.

- The chat model and API token are linked to the selected AI provider. So, when users change the AI provider, the correct model and API token are loaded.

- The embedding model can be chosen.
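Linking the chat model to the selected provider can be pictured as a map keyed by provider. The sketch below is an illustration with hypothetical names (`ProviderSettings`, `AiProvider`) and placeholder model names, not JabRef's preferences code. API tokens are deliberately left out, because the real application keeps them in the keyring rather than in plain fields.

```java
import java.util.EnumMap;
import java.util.Map;
import java.util.Objects;

// Hypothetical sketch: remembers one chat model per AI provider.
final class ProviderSettings {
    enum AiProvider { OPEN_AI, MISTRAL_AI, HUGGING_FACE }

    private final Map<AiProvider, String> chatModels = new EnumMap<>(AiProvider.class);
    private AiProvider selected = AiProvider.OPEN_AI;

    void selectProvider(AiProvider provider) {
        selected = Objects.requireNonNull(provider);
    }

    // Stores the model for the currently selected provider only.
    void setChatModel(String model) {
        chatModels.put(selected, model);
    }

    // Returns the model remembered for the selected provider, or "" if none was set.
    String getChatModel() {
        return chatModels.getOrDefault(selected, "");
    }

    public static void main(String[] args) {
        ProviderSettings settings = new ProviderSettings();
        settings.setChatModel("model-a"); // placeholder name, stored for OPEN_AI
        settings.selectProvider(AiProvider.MISTRAL_AI);
        settings.setChatModel("model-b"); // placeholder name, stored for MISTRAL_AI
        settings.selectProvider(AiProvider.OPEN_AI);
        System.out.println(settings.getChatModel());
    }
}
```

Switching back to a provider restores the model that was chosen for it earlier, which is the behavior described in the list above.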

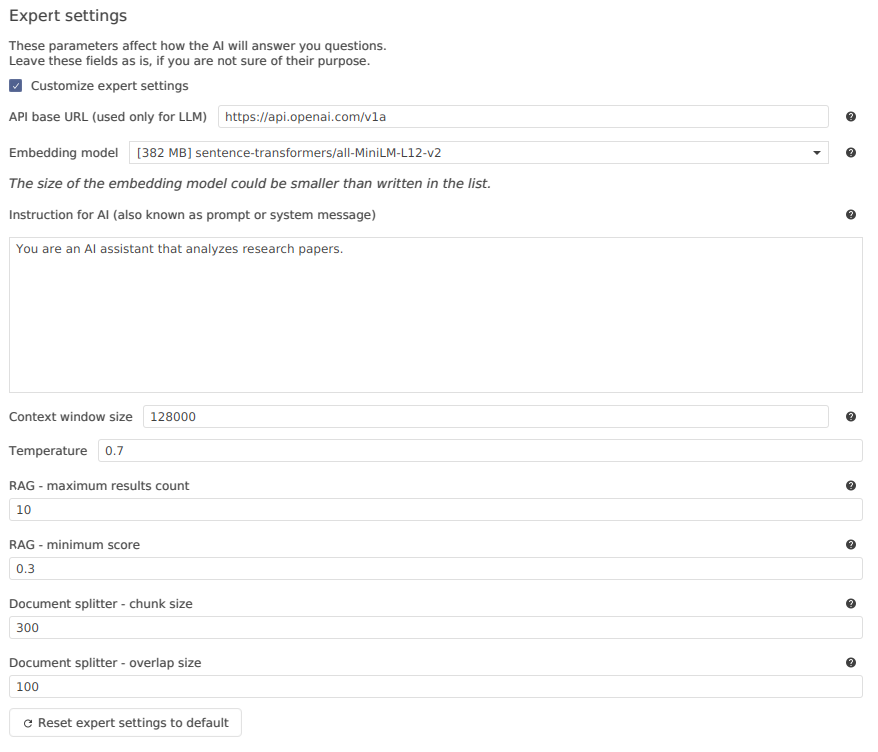

- Users can customize how the AI will respond to their requests.

- The default API base URL can be replaced, so that a local LLM can be used.

- The instruction (also called system prompt) is customizable.

- The temperature can be modified to make the AI generate more probable or more diverse (creative) answers.

- There are also settings to configure the document splitter and retrieval augmented generation (RAG).
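Document splitters typically cut a text into chunks of bounded size that overlap slightly, so that text near a chunk boundary keeps some of its surrounding context. The sketch below shows such a character-based splitter; `OverlappingSplitter`, `chunkSize`, and `overlap` are illustrative names and not necessarily the settings JabRef exposes, and real splitters often work on paragraphs, sentences, or tokens instead of characters.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: fixed-size chunks that share `overlap` characters.
final class OverlappingSplitter {
    static List<String> split(String text, int chunkSize, int overlap) {
        if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
            throw new IllegalArgumentException("need 0 <= overlap < chunkSize");
        }
        List<String> chunks = new ArrayList<>();
        // Each new chunk starts `overlap` characters before the previous one ended.
        int step = chunkSize - overlap;
        for (int start = 0; start < text.length(); start += step) {
            int end = Math.min(text.length(), start + chunkSize);
            chunks.add(text.substring(start, end));
            if (end == text.length()) {
                break; // the last chunk already reaches the end of the text
            }
        }
        return chunks;
    }

    public static void main(String[] args) {
        // Prints [abcd, defg, ghij]
        System.out.println(split("abcdefghij", 4, 1));
    }
}
```

In a RAG setup, each chunk would then be embedded and stored, and the chunks most similar to the user's question would be added to the prompt.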

Future features include:

- Introduce AI-assistant search for papers in the context of a research group sharing their references and PDF files using a BibTeX file.

- Support full offline mode (no external access to network).

- Support for external RAG (all of the workload for generating and storing embeddings, generating AI answer is offloaded to a separate server). This could be implemented using Microsoft Kernel Memory.

- Integrate scholar.ai, or other services.

- Add more AI providers and embedding models.

- AI chatting functionality.

- Add OpenAI privacy policy.

- Add more organizations related to AI features to PRIVACY.md.

- Add explanation of embeddings.

- AI functionality in JabRef.

- Add AI documentation.

- Update AI documentation.

- Add more explanation for localization in Java code and FXML.

- Change branches of `scanLabeledControls`.

- Support .lnk files for TeXworks.

- Move advanced contribution hints.

- Fix help wanted in adding entry PDFs.

- Add more explanation for localization in Java code and FXML.

- Add docusaurus-lunr-search plugin.

- Implement remove methods for InMemoryEmbeddingStore.

- Support relative paths without parent directory.

- Devdocs don't have favicon.

- Number of indexed files grows after reindexing action.

- "Default library mode" combobox is cut off.

- UI progress indication button is not shown, if at start of JabRef it was hidden.

- JabRef resets window size and position when a dialog occurs.

- Black text in Dark mode inside "Citation information".

- Extra step in documentation.

- scanLabeledControl logic issue.

- [FEATURE] Add `logRequests` and `logResponses` to `HuggingFaceChatModel`.

- [FEATURE] Stop document ingestion in the middle of the process.

- [FEATURE] Distribution size of app that uses langchain4j with in-process embedding models.

- [FEATURE] Add context window size and `estimateNumberOfTokens` to `ChatLanguageModel`.

- [FEATURE] Make `DocumentSplitter`s to be `Iterable`s or `Stream`s.

- [FEATURE] Make MessageWindowChatMemory not to remove evicted messages from ChatMemoryStore.

- [FEATURE] Generate new chat IDs automatically as a default parameter.

- [FEATURE] Implement searching on docs website.

- [BUG] FileSystemDocumentLoader cannot handle relative paths without parent directory.

I want to say thank you to these people and companies:

- @koppor for mentoring in the project and for raising me as a real developer.

- @ThiloteE for mentoring in the project and for great knowledge of AI ecosystem.

- @calixtus for mentoring in the project and for reviewing and improving my PR.

- @langchain4j for developing and supporting the `LangChain4j` library.

- @hendrikebbers for raising and fixing the split package problems in `langchain4j`.

- @StefanBratanov for developing the `jvm-openai` library.

- @deepjavalibrary for developing the `djl` library.

- OpenAI, Mistral AI, and Hugging Face for their API and models.