Created by Haoxiang Zhong.

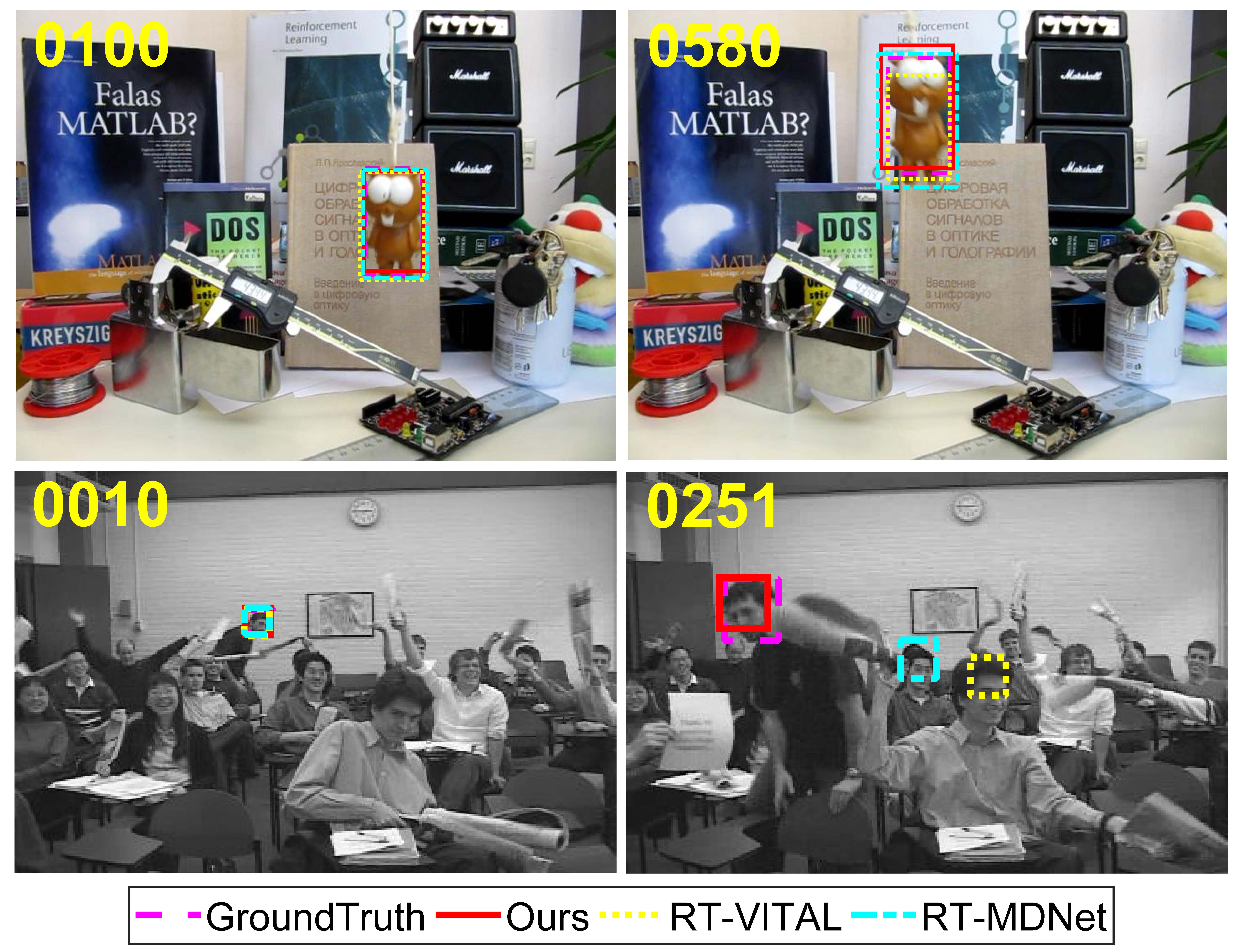

Improved RT-VITAL is a deep-learning-based tracking algorithm. Our work is greatly inspired by RT-MDNet and VITAL.

The pipeline of this tracker is based on RT-MDNet.

This code is tested on 64-bit Linux (Ubuntu 16.04 LTS).

- Python 2.7 (other versions of Python 2 may also work)

- PyTorch (>= 0.2.1)

- For GPU support, a GPU (~2GB memory for test) and CUDA toolkit.

- Training Dataset (ImageNet-Vid) if needed.

- Testing Dataset (e.g. OTB100, GOT-10k, ...)
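The PyTorch requirement above can be checked before running the tracker. The following is a minimal sketch, not part of this repository: the helper names `version_tuple` and `meets_minimum` are hypothetical, and only the leading numeric part of the version string is compared.

```python
import re


def version_tuple(version):
    """Return the leading numeric components, e.g. '0.4.1.post2' -> (0, 4, 1)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError("unrecognised version string: %r" % version)
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(version, minimum="0.2.1"):
    """True if `version` is at least `minimum` (default taken from the list above)."""
    return version_tuple(version) >= version_tuple(minimum)


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
    else:
        ok = meets_minimum(torch.__version__)
        print("PyTorch %s, meets minimum: %s" % (torch.__version__, ok))
        print("CUDA available: %s" % torch.cuda.is_available())
```

Suffixes such as `a0+git` or `.post2` are ignored by this comparison, which is usually sufficient for a coarse minimum-version check.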

Our work only modifies the tracker during online tracking. The pretrained model is provided by RT-MDNet: RT-MDNet-ImageNet-pretrained.

Other crucial models and files from our work are provided here: Baidu Yun (extraction code: en3j).

Of these, rt-mdnet.pth and G_sample_list_2.mat are required; the other files can be generated by running the code.

To save time, you can also download g_model0.003.pth.

Downloading feat_*.npy saves time on feature extraction when pretraining net G.

Please put all the files and models in ./models/.
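As a convenience, the contents of ./models/ can be checked before launching the tracker. This is a minimal sketch that is not part of the repository; the function name `check_models` is hypothetical, and the file names are the ones listed above.

```python
import glob
import os

# File names as listed above; the first two are required.
REQUIRED = ("rt-mdnet.pth", "G_sample_list_2.mat")
OPTIONAL = ("g_model0.003.pth",)


def check_models(model_dir="./models"):
    """Return (missing_required, missing_optional, cached_features)."""
    def missing(names):
        return [n for n in names
                if not os.path.isfile(os.path.join(model_dir, n))]

    # feat_*.npy files are optional cached features for pretraining net G.
    cached = sorted(glob.glob(os.path.join(model_dir, "feat_*.npy")))
    return missing(REQUIRED), missing(OPTIONAL), cached


if __name__ == "__main__":
    required, optional, cached = check_models()
    if required:
        print("Missing required files: %s" % ", ".join(required))
    if optional:
        print("Missing optional files: %s" % ", ".join(optional))
    print("Cached feature files found: %d" % len(cached))
```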

python2 Run.py #Run the tracker; it saves a pickle file in ./result

cd ./result

python save_txt.py #Decode the pickle file into txt files
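For readers who want to see what the decoding step might look like, here is an illustrative sketch only. It does not reproduce save_txt.py. It assumes the pickle holds a dict mapping each sequence name to a list of `[x, y, w, h]` boxes, which this README does not confirm; the function names and the pickle path are hypothetical as well.

```python
import os
import pickle


def save_boxes_as_txt(results, out_dir):
    """Write one '<sequence>.txt' per entry, one 'x,y,w,h' line per frame.

    Assumes `results` maps sequence name -> list of [x, y, w, h] boxes.
    """
    if not os.path.isdir(out_dir):
        os.makedirs(out_dir)
    written = []
    for name, boxes in sorted(results.items()):
        path = os.path.join(out_dir, name + ".txt")
        with open(path, "w") as handle:
            for box in boxes:
                line = ",".join("%.2f" % float(value) for value in box)
                handle.write(line + "\n")
        written.append(path)
    return written


def decode_pickle(pickle_path, out_dir):
    """Load the pickled results and write them out as text files."""
    with open(pickle_path, "rb") as handle:
        results = pickle.load(handle)
    return save_boxes_as_txt(results, out_dir)
```

If the actual pickle layout differs, only `save_boxes_as_txt` would need to change.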

We do not recommend testing our tracker on the VOT benchmark, because these data are used for model learning during initialization.

Please refer to RT-MDNet for more details on training.

If you use this code for a publication, please cite our paper and RT-MDNet:

@INPROCEEDINGS{impr-RT-VITAL,

author={H. {Zhong} and X. {Yan} and Y. {Jiang} and S. {Xia}},

booktitle={ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},

title={Improved Real-Time Visual Tracking via Adversarial Learning},

year={2020},

volume={},

number={},

pages={1853-1857},}

@InProceedings{rtmdnet,

author = {Jung, Ilchae and Son, Jeany and Baek, Mooyeol and Han, Bohyung},

title = {Real-Time MDNet},

booktitle = {European Conference on Computer Vision (ECCV)},

month = {Sept},

year = {2018}

}