🚀 Major upgrade 🚀 : Migration to Pytorch v1 and Python 3.7. The code is now much more generic and easier to install.

This repository contains the source code for the paper 3D-CODED : 3D Correspondences by Deep Deformation. The task is to put 2 meshes in point-wise correspondence. Below, given 2 human scans with holes, the reconstructions are in correspondence (suggested by color).

If you find this work useful in your research, please consider citing:

@inproceedings{groueix2018b,

title = {3D-CODED : 3D Correspondences by Deep Deformation},

author={Groueix, Thibault and Fisher, Matthew and Kim, Vladimir G. and Russell, Bryan and Aubry, Mathieu},

booktitle = {ECCV},

year = {2018}

}

The project page is available at http://imagine.enpc.fr/~groueixt/3D-CODED/

This implementation uses Pytorch.

git clone git@github.com:ThibaultGROUEIX/3D-CODED.git ## Download the repo

conda create --name pytorch-atlasnet python=3.7 ## Create python env

source activate pytorch-atlasnet

pip install pandas visdom trimesh scikit-learn

conda install pytorch torchvision -c pytorch # or from sources if you prefer

# you're done ! Congrats :)

Tested on 11/18 with pytorch 0.4.1 (py37_py36_py35_py27__9.0.176_7.1.2_2) and the latest source.

source activate pytorch-atlasnet

cd 3D-CODED/extension

python setup.py install

The trained models and some corresponding results are also available online :

- The trained models go in trained_models/

Requires 3 GB of GPU memory and takes 17 sec to run (Titan X Pascal).

cd trained_models; ./download_models.sh; cd .. # download the trained models

python inference/correspondences.py

This script takes as input 2 meshes from data and computes correspondences in results. Reconstructions are saved in data.
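To make the idea concrete, here is a minimal sketch of how correspondences can be read off two reconstructions. It assumes that both reconstructions are deformations of the same template, so that row i of each array refers to the same template vertex. The function names are hypothetical and the nearest-neighbor search is brute force, so this is an illustration for small inputs and not the code in inference/correspondences.py.

```python
import numpy as np


def nearest_neighbor(query, reference):
    """Index of the closest reference point for every query point (brute force)."""
    # Pairwise squared distances, shape (len(query), len(reference)).
    d2 = ((query[:, None, :] - reference[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)


def correspondences(points_a, recon_a, recon_b, points_b):
    """Map each point of mesh A to a vertex index of mesh B.

    Assumption: recon_a and recon_b share the template's vertex ordering.
    """
    idx_template = nearest_neighbor(points_a, recon_a)  # A -> template vertex
    via_template = recon_b[idx_template]                # same vertex on B's reconstruction
    return nearest_neighbor(via_template, points_b)     # -> closest vertex of B
```

The brute-force distance matrix grows with the product of the two point counts, so a spatial index is the usual choice for full-resolution meshes.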

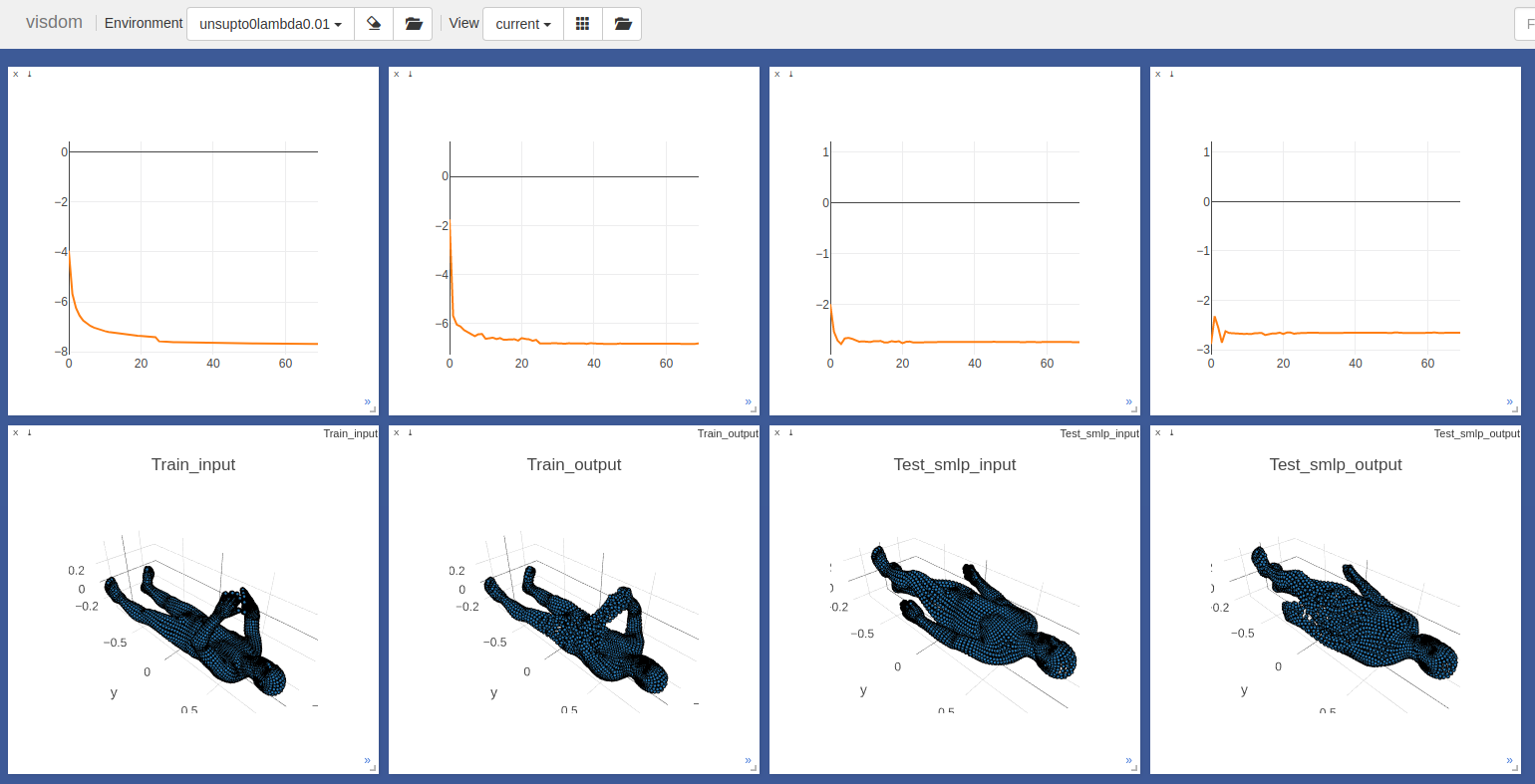

It should look like :

- Initial guesses for example0 and example1:

- Final reconstruction for example0 and example1:

You need to make sure your meshes are preprocessed correctly :

- The meshes are loaded with Trimesh, which should support a bunch of formats, but I only tested .ply files. Good converters include Assimp and Pymesh.

- The trunk axis is the Y axis (visualize your mesh against the mesh in data to make sure they are normalized in the same way).

- The scale should be about 1.7 for a standing human (meaning the unit for the point cloud is the meter). You can automatically scale them with the flag --scale 1
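A quick way to check these conventions before running the script is to look at the bounding box of the vertices. The sketch below is only an illustration: normalization_report and target_height are hypothetical names, and the rescale factor it returns is height-based, which is not the same thing as what the --scale flag does.

```python
import numpy as np


def normalization_report(vertices, target_height=1.7):
    """Compare an (N, 3) point cloud with the expected convention.

    target_height is the expected extent along Y for a standing human.
    """
    extents = vertices.max(axis=0) - vertices.min(axis=0)  # bounding-box size per axis
    height = float(extents[1])                             # extent along the Y axis
    return {
        "longest_axis": "XYZ"[int(extents.argmax())],      # expected: "Y"
        "height": height,                                  # expected: about 1.7
        "rescale_factor": target_height / height,          # height-based, hypothetical
    }
```

If the longest axis is not Y, or the height is far from 1.7 (for example around 170 or 1700), the mesh probably needs to be rotated or rescaled first.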

'--HR', type=int, default=1, help='Use high Resolution template for better precision in the nearest neighbor step ?'

'--nepoch', type=int, default=3000, help='number of epochs to train for during the regression step'

'--model', type=str, default = 'trained_models/sup_human_network_last.pth', help='your path to the trained model'

'--inputA', type=str, default = "data/example_0.ply", help='your path to mesh 0'

'--inputB', type=str, default = "data/example_1.ply", help='your path to mesh 1'

'--num_points', type=int, default = 6890, help='number of points fed to pointnet'

'--num_angles', type=int, default = 100, help='number of angles in the search of optimal reconstruction. Set to 1, if your meshes are already facing the canonical direction as in data/example_1.ply'

'--env', type=str, default="CODED", help='visdom environment'

'--clean', type=int, default=0, help='if 1, remove points that dont belong to any edges'

'--scale', type=int, default=0, help='if 1, scale input mesh to have same volume as the template'

'--project_on_target', type=int, default=0, help='if 1, projects predicted correspondences point on target mesh'
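To illustrate what --num_angles controls, here is a minimal sketch of a rotation search about the Y axis over [-90°, +90°] that keeps the angle with the lowest Chamfer distance. It is an assumption-laden illustration, not the repository's code: reconstruct is a hypothetical callable standing in for the network, the Chamfer distance is a brute-force NumPy version, and using angle 0 when num_angles is 1 is my reading of the help text.

```python
import numpy as np


def rotation_about_y(theta):
    """3x3 rotation matrix for an angle theta (radians) about the Y axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])


def chamfer(a, b):
    """Symmetric Chamfer distance (mean squared nearest-neighbor distance)."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()


def best_angle(points, reconstruct, num_angles=100):
    """Return (angle, loss) of the best rotation among num_angles candidates."""
    if num_angles == 1:
        angles = [0.0]  # assumption: a single angle means "do not rotate"
    else:
        angles = np.linspace(-np.pi / 2, np.pi / 2, num_angles)
    best_theta, best_loss = 0.0, np.inf
    for theta in angles:
        rotated = points @ rotation_about_y(theta).T
        loss = chamfer(rotated, reconstruct(rotated))  # how well this pose is reconstructed
        if loss < best_loss:
            best_theta, best_loss = float(theta), float(loss)
    return best_theta, best_loss
```

Because the candidates only cover half a turn, a mesh facing backwards cannot be brought to the canonical direction by this search.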

- Sometimes the reconstruction is flipped, which breaks the correspondences. In the easiest case where your meshes are registered in the same orientation, you can just fix this angle in reconstruct.py line 86, to avoid the flipping problem. Also note from this line that the angle search only looks in [-90°,+90°].

- Check the presence of lonely outliers that break the Pointnet encoder. You could try to remove them with the --clean flag.
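For reference, removing points that do not belong to any face can be sketched in a few lines of NumPy. This is a hypothetical helper written for illustration, assuming a triangle mesh given as a vertex array and a face index array; it is not the implementation behind --clean.

```python
import numpy as np


def remove_unreferenced_vertices(vertices, faces):
    """Drop vertices that no face uses and re-index the faces accordingly.

    vertices: (N, 3) float array, faces: (M, 3) integer array of vertex indices.
    """
    used = np.zeros(len(vertices), dtype=bool)
    used[faces.ravel()] = True             # mark every vertex that appears in a face
    new_index = np.cumsum(used) - 1        # old index -> new index for kept vertices
    return vertices[used], new_index[faces]
```

Isolated points far from the body shift the bounding box and the input statistics, which is why they hurt the encoder.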

- If you want to use inference/correspondences.py to process a whole dataset, like the FAUST test set, make sure you don't load the same network in memory every time you compute correspondences between two meshes (which will happen with the naive and simplest way of doing it by calling inference/correspondences.py iteratively on all the pairs). An example of bad practice is in ./auxiliary/script.sh, for the FAUST inter challenge. Good luck :-)
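The pattern to aim for is to load the network once and reuse it for every pair. The sketch below only shows that structure: load_model and compute_correspondences are hypothetical callables that you would write around the repository's code, they are not functions it provides.

```python
def process_pairs(pairs, load_model, compute_correspondences):
    """Compute correspondences for many mesh pairs with a single model load.

    pairs: iterable of (path_a, path_b) tuples.
    load_model: hypothetical callable returning the trained network.
    compute_correspondences: hypothetical callable (model, path_a, path_b) -> result.
    """
    model = load_model()  # done once, outside the loop
    results = {}
    for path_a, path_b in pairs:
        results[(path_a, path_b)] = compute_correspondences(model, path_a, path_b)
    return results
```

Calling the script from a shell loop instead pays the model-loading cost once per pair.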

The dataset can't be shared because of copyright issues. Since the generation process of the dataset is quite heavy, it has its own README in data/README.md. Brace yourself :-)

Follow the specific repo instructions here.

Pymesh is my favorite Geometry Processing Library for Python. It is developed by an Adobe researcher : Qingnan Zhou. It can be tricky to set up. Trimesh is a good alternative but requires a few code edits in this case.

'--batchSize', type=int, default=32, help='input batch size'

'--workers', type=int, help='number of data loading workers', default=8

'--nepoch', type=int, default=75, help='number of epochs to train for'

'--model', type=str, default='', help='optional reload model path'

'--env', type=str, default="unsup-symcorrect-ratio", help='visdom environment'

'--laplace', type=int, default=0, help='regularize towards 0 curvature, or template curvature'

- First launch a visdom server :

python -m visdom.server -p 8888

- Launch the training. Check out all the options in ./training/train_sup.py.

export CUDA_VISIBLE_DEVICES=0 #whichever you want

source activate pytorch-atlasnet

git pull

env=3D-CODED

python ./training/train_sup.py --env $env |& tee ${env}.txt

- Monitor your training on http://localhost:8888/

- Timings, results, memory requirements

| Method | Faust euclidean error in cm | GPU memory | Time per epoch⁽²⁾ |
|---|---|---|---|
| train_sup.py | 2.878 | TODO | TODO |
| train_unsup.py | 4.883 | TODO | TODO |

⁽²⁾ This is only an estimate, the code is not optimised.

- The code for the Chamfer Loss was adapted from Fei Xia's repo : PointGan. Many thanks to him !

- The code for the Laplacian regularization comes from Angjoo Kanazawa and Shubham Tulsiani. This was so helpful, thanks !

- Part of the SMPL parameters used in the training data come from Gül Varol's repo : https://github.com/gulvarol/surreal But most of all, thanks for all the advice :)

- The FAUST Team for their prompt reaction in resolving a benchmark issue the week of the deadline, especially Federica Bogo and Jonathan Williams.

- The efficient code to compute geodesic errors comes from https://github.com/zorah/KernelMatching. Thanks!

- The SMAL team, and SCAPE team for their help in generating the training data.

- DeepFunctional Maps authors for their fast reply the week of the rebuttal ! Many thanks.

- Hiroharu Kato for his very clean neural renderer code, that I used for the gifs :-)

- Pytorch developers for making DL code so easy.

- This work was funded by Ecole Doctorale MSTIC. Thanks !

- And last but not least, my great co-authors : Matthew Fisher, Vladimir G. Kim, Bryan C. Russell, and Mathieu Aubry

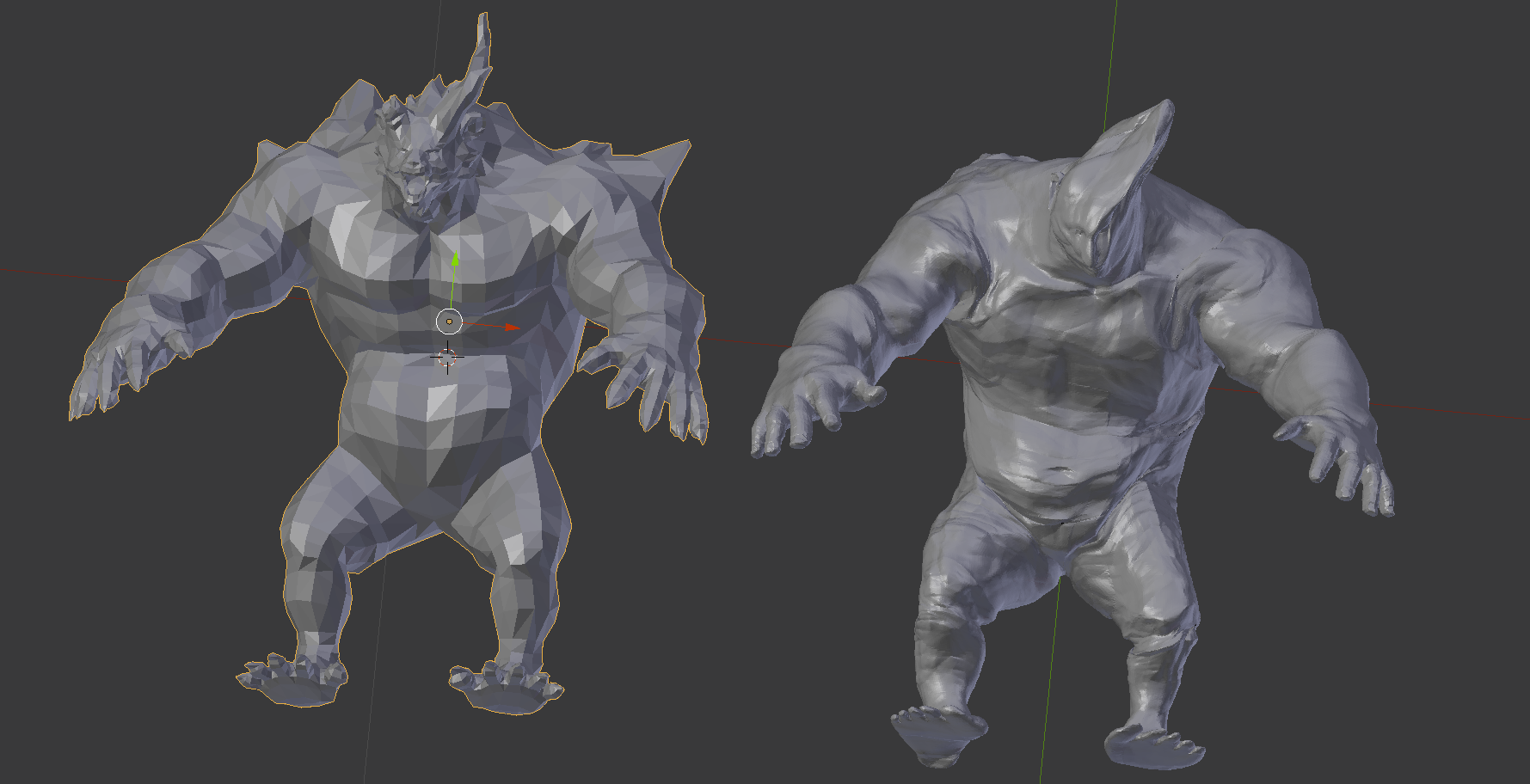

- Zhongshi Jiang applying trained model on a monster model 👹 (left: original , right: reconstruction)