TensorFlow implementation of Ask Me Anything: Dynamic Memory Networks for Natural Language Processing.

- Python 3.6

- TensorFlow 1.8

- hb-config (Singleton Config)

- nltk (tokenizer and BLEU score)

- tqdm (progress bar)

Project initialized with hb-base.

    .
    ├── config                  # Config files (.yml, .json) used with hb-config
    ├── data                    # Dataset path
    ├── notebooks               # Prototyping with NumPy or tf.InteractiveSession
    ├── dynamic_memory          # DMN architecture graphs (from input to output)
    │   ├── __init__.py         # Graph logic
    │   ├── encoder.py          # Encoder
    │   └── episode.py          # Episode and AttentionGate
    ├── data_loader.py          # raw_data -> preprocessed_data -> generate_batch (using Dataset)
    ├── hook.py                 # Training or test hook features (e.g. print_variables)
    ├── main.py                 # Defines experiment_fn
    └── model.py                # Defines EstimatorSpec

Reference: hb-config, Dataset, experiment_fn, EstimatorSpec

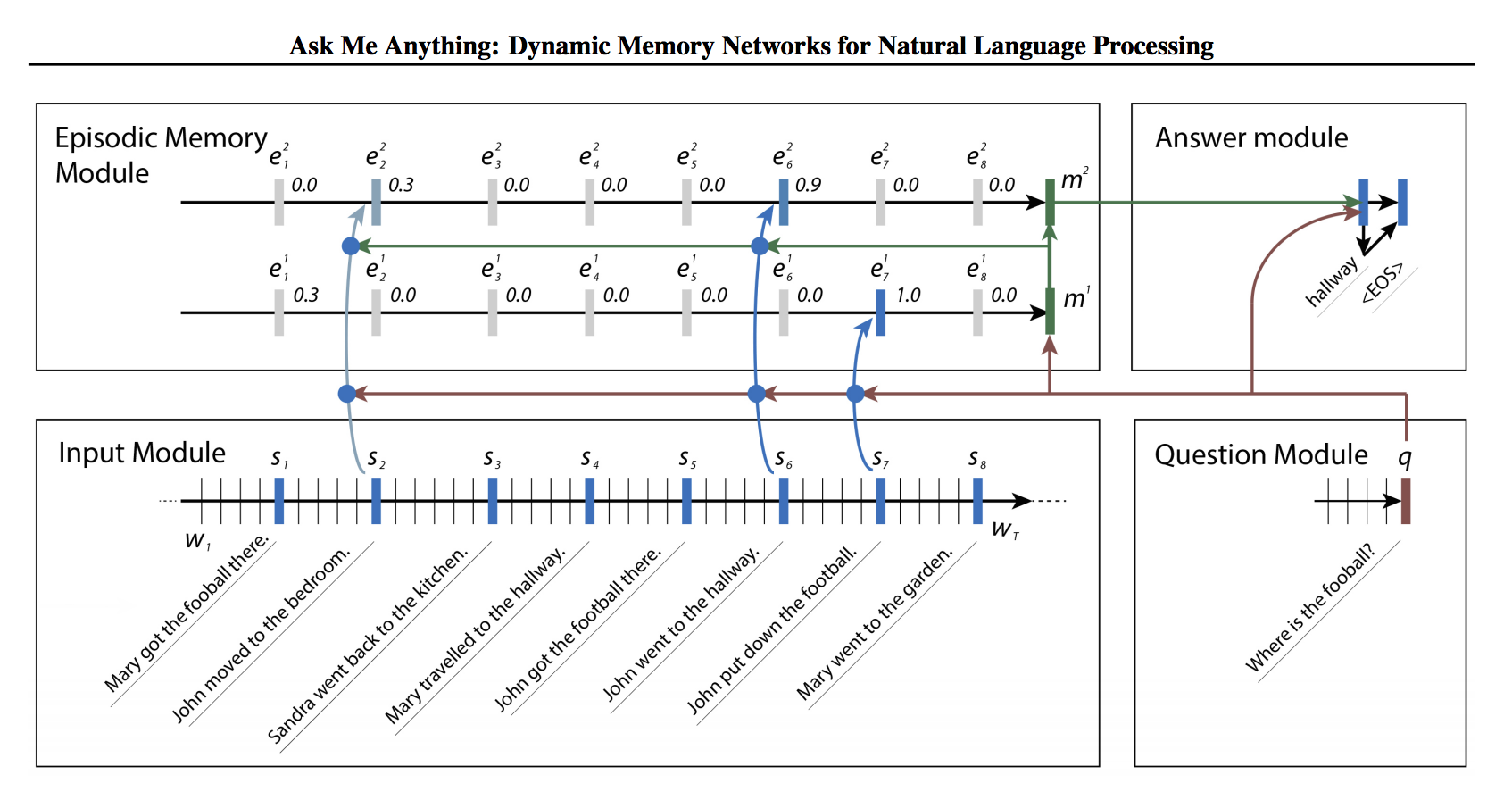

- Implements DMN+ (Dynamic Memory Networks for Visual and Textual Question Answering (2016) by C Xiong)
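To make the attention step concrete, here is a minimal pure-Python sketch of the attention gate as described in the DMN+ paper: each fact is compared with the question and the previous memory through element-wise products and absolute differences, scored by a small two-layer network, and normalized with a softmax over facts. This is not the code in `episode.py`; the function names, the toy vectors, and the weights `w1`, `b1`, `w2`, `b2` are assumptions chosen only for illustration.

```python
import math


def interaction_features(fact, question, memory):
    """Build z = [f*q ; f*m ; |f-q| ; |f-m|] using element-wise operations."""
    fq = [f * q for f, q in zip(fact, question)]
    fm = [f * m for f, m in zip(fact, memory)]
    dq = [abs(f - q) for f, q in zip(fact, question)]
    dm = [abs(f - m) for f, m in zip(fact, memory)]
    return fq + fm + dq + dm


def gate_score(z, w1, b1, w2, b2):
    """Two-layer scorer: w2 . tanh(W1 z + b1) + b2 (weights are hypothetical)."""
    hidden = [
        math.tanh(sum(w * x for w, x in zip(row, z)) + b)
        for row, b in zip(w1, b1)
    ]
    return sum(w * h for w, h in zip(w2, hidden)) + b2


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_gates(facts, question, memory, w1, b1, w2, b2):
    """Return one attention weight per fact; the weights sum to 1."""
    scores = [
        gate_score(interaction_features(f, question, memory), w1, b1, w2, b2)
        for f in facts
    ]
    return softmax(scores)


# Toy example: three 2-dimensional facts, hidden size 3 (all values made up).
facts = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
question = [1.0, 0.0]
memory = [1.0, 0.0]  # the first memory is commonly initialized to the question
w1 = [[0.1] * 8, [-0.2] * 8, [0.3] * 8]
b1 = [0.0, 0.0, 0.0]
w2 = [1.0, -1.0, 0.5]
b2 = 0.0

gates = attention_gates(facts, question, memory, w1, b1, w2, b2)
print(gates)
```

In the full model these gates would drive the episodic memory update over `memory_hob` passes; that part is left out here to keep the sketch short.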

example: bAbi_task1.yml

    data:
      base_path: 'data/'
      task_path: 'en-10k/'
      task_id: 1
      PAD_ID: 0

    model:
      batch_size: 16
      use_pretrained: true  # (true or false)
      embed_dim: 50         # if use_pretrained: only available 50, 100, 200, 300
      encoder_type: uni     # uni, bi
      cell_type: gru        # lstm, gru, layer_norm_lstm, nas
      num_layers: 1
      num_units: 32
      memory_hob: 3
      dropout: 0.0
      reg_scale: 0.001

    train:
      learning_rate: 0.0001
      optimizer: 'Adam'     # Adagrad, Adam, Ftrl, Momentum, RMSProp, SGD
      train_steps: 100000
      model_dir: 'logs/bAbi_task1'
      save_checkpoints_steps: 1000
      check_hook_n_iter: 1000
      min_eval_frequency: 1000
      print_verbose: False
      debug: False

Install requirements.

pip install -r requirements.txt

Then, prepare the dataset and the pre-trained GloVe embeddings.

sh scripts/fetch_babi_data.sh

sh scripts/fetch_glove_data.sh
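For orientation, the sketch below parses the standard bAbI text format, where each line starts with a running index that restarts at 1 for every new story and question lines carry a tab-separated answer and supporting fact ids. It only illustrates the raw data layout; `parse_babi` is a hypothetical helper, and the preprocessing in `data_loader.py` may differ.

```python
def parse_babi(lines):
    """Yield (story, question, answer) triples from bAbI-formatted lines."""
    story = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        index, text = line.split(" ", 1)
        if int(index) == 1:
            story = []  # the line index restarts with each new story
        if "\t" in text:
            # Question line: "<question>\t<answer>\t<supporting fact ids>"
            question, answer = text.split("\t")[:2]
            yield list(story), question.strip(), answer.strip()
        else:
            story.append(text)


sample = [
    "1 Mary moved to the bathroom.",
    "2 John went to the hallway.",
    "3 Where is Mary? \tbathroom\t1",
    "1 Daniel went back to the kitchen.",
    "2 Where is Daniel? \tkitchen\t1",
]

examples = list(parse_babi(sample))
for story, question, answer in examples:
    print(story, question, answer)
```

Each question is paired with a copy of the story seen so far, so later statements in the same story do not leak into earlier examples.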

Finally, train and evaluate the model.

python main.py --config bAbi_task1 --mode train_and_evaluate
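Before launching a long run, it can help to sanity-check the `model` section of the config named by `--config`. The sketch below works on a plain dict and uses only the constraints stated in the comments of the example config (allowed `embed_dim`, `encoder_type`, and `cell_type` values); the dropout range check is an added assumption. `check_model_config` is a hypothetical helper and is not part of hb-config or this repository.

```python
# Allowed values, taken from the comments in the example config.
ALLOWED_GLOVE_DIMS = {50, 100, 200, 300}
ALLOWED_ENCODERS = {"uni", "bi"}
ALLOWED_CELLS = {"lstm", "gru", "layer_norm_lstm", "nas"}


def check_model_config(model):
    """Return a list of human-readable problems found in a `model` config dict."""
    problems = []
    if model.get("use_pretrained") and model.get("embed_dim") not in ALLOWED_GLOVE_DIMS:
        problems.append("embed_dim must be 50, 100, 200 or 300 when use_pretrained is true")
    if model.get("encoder_type") not in ALLOWED_ENCODERS:
        problems.append("encoder_type must be one of: uni, bi")
    if model.get("cell_type") not in ALLOWED_CELLS:
        problems.append("cell_type must be one of: lstm, gru, layer_norm_lstm, nas")
    # Assumption: dropout is a drop probability in [0, 1).
    if not 0.0 <= model.get("dropout", 0.0) < 1.0:
        problems.append("dropout must be in [0, 1)")
    return problems


example_model = {
    "use_pretrained": True,
    "embed_dim": 50,
    "encoder_type": "uni",
    "cell_type": "gru",
    "dropout": 0.0,
}

for problem in check_model_config(example_model):
    print("config problem:", problem)
```

Returning a list rather than raising on the first error lets all problems in a config be reported in one pass.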

✅ : Working

◽ : Not tested yet.

- ✅ evaluate: Evaluate on the evaluation data.
- ◽ extend_train_hooks: Extends the hooks for training.
- ◽ reset_export_strategies: Resets the export strategies with the new_export_strategies.
- ◽ run_std_server: Starts a TensorFlow server and joins the serving thread.
- ◽ test: Tests training, evaluating and exporting the estimator for a single step.
- ✅ train: Fit the estimator using the training data.
- ✅ train_and_evaluate: Interleaves training and evaluation.

tensorboard --logdir logs

- Implementing Dynamic memory networks

- arXiv - Ask Me Anything: Dynamic Memory Networks for Natural Language Processing (2015. 6) by A Kumar

- arXiv - Dynamic Memory Networks for Visual and Textual Question Answering (2016. 3) by C Xiong

Dongjun Lee (humanbrain.djlee@gmail.com)