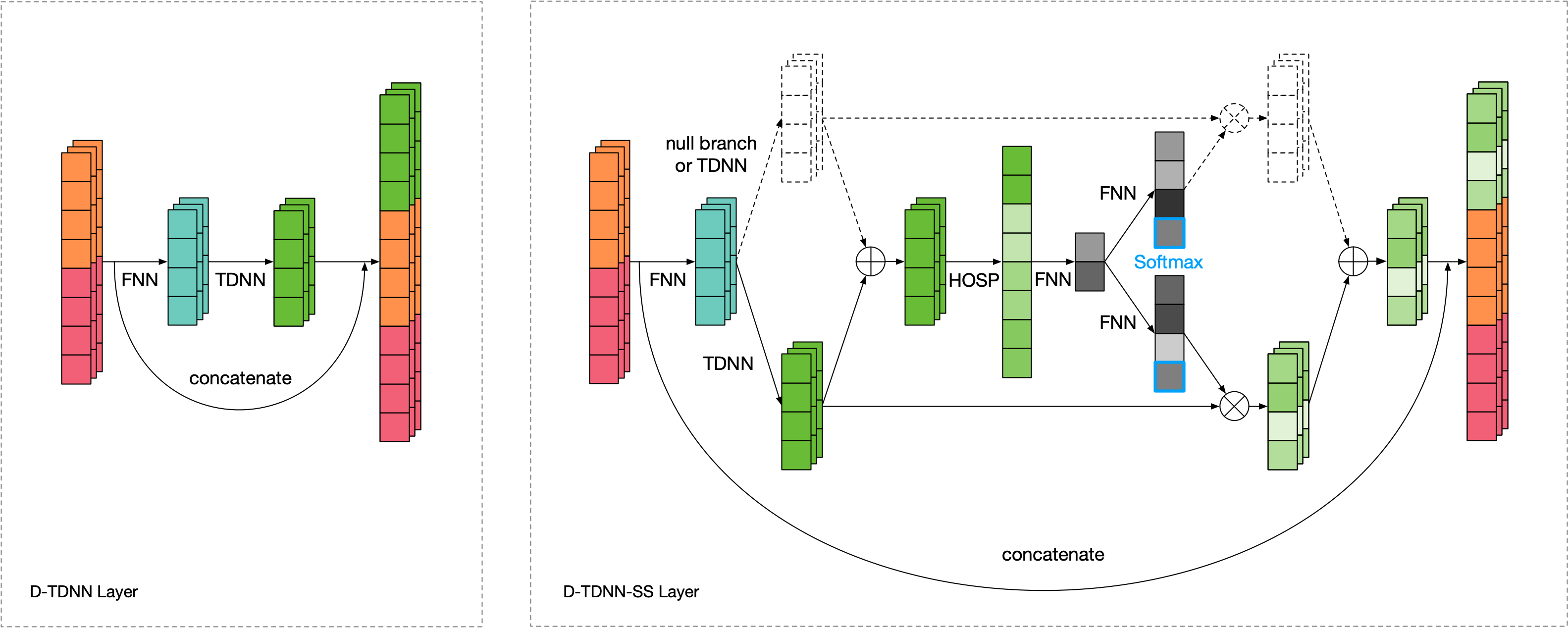

PyTorch implementation of Densely Connected Time Delay Neural Network (D-TDNN) in our paper "Densely Connected Time Delay Neural Network for Speaker Verification" (INTERSPEECH 2020).

We provide pretrained models that can be used in tasks such as:

- Speaker Verification

- Speaker-Dependent Speech Separation

- Multi-Speaker Text-to-Speech

- Voice Conversion

You can either use the Kaldi toolkit:

- Download the VoxCeleb1 test set and unzip it.

- Place `prepare_voxceleb1_test.sh` under `$kaldi_root/egs/voxceleb/v2` and change `$datadir` and `$voxceleb1_root` in it.

- Run `chmod +x prepare_voxceleb1_test.sh && ./prepare_voxceleb1_test.sh` to generate 30-dim MFCCs.

- Place the `trials` under `$datadir/test_no_sil`.

Or check out the `kaldifeat` branch if you do not want to install Kaldi.

- Download the pretrained D-TDNN model and run:

`python evaluate.py --root $datadir/test_no_sil --model D-TDNN --checkpoint dtdnn.pth --device cuda`
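The results table lists a cosine backend for the D-TDNN models. As a rough illustration of what cosine scoring of a trial list involves, here is a minimal sketch in plain Python. It is not the code in `evaluate.py`: the function names, the `(label, enroll_id, test_id)` trial layout, and the dictionary of embeddings are all assumptions made for this example.

```python
import math


def cosine_score(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cannot score a zero-norm embedding")
    return dot / (norm_a * norm_b)


def score_trials(trials, embeddings):
    """Score every trial with cosine similarity.

    trials:     iterable of (label, enroll_id, test_id); this layout is
                hypothetical, not necessarily the repository's trials format.
    embeddings: dict mapping an utterance id to its embedding (list of floats).
    Returns a list of (label, score) pairs in trial order.
    """
    return [
        (label, cosine_score(embeddings[enroll_id], embeddings[test_id]))
        for label, enroll_id, test_id in trials
    ]
```

A higher score means the two utterances are more likely to come from the same speaker; a decision threshold on the score turns it into an accept or reject.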

VoxCeleb1-O

| Model | Emb. | Params (M) | Loss | Backend | EER (%) | DCF_0.01 | DCF_0.001 |
|---|---|---|---|---|---|---|---|
| TDNN | 512 | 4.2 | Softmax | PLDA | 2.34 | 0.28 | 0.38 |
| E-TDNN | 512 | 6.1 | Softmax | PLDA | 2.08 | 0.26 | 0.41 |
| F-TDNN | 512 | 12.4 | Softmax | PLDA | 1.89 | 0.21 | 0.29 |
| D-TDNN | 512 | 2.8 | Softmax | Cosine | 1.81 | 0.20 | 0.28 |
| D-TDNN-SS (0) | 512 | 3.0 | Softmax | Cosine | 1.55 | 0.20 | 0.30 |
| D-TDNN-SS | 512 | 3.5 | Softmax | Cosine | 1.41 | 0.19 | 0.24 |
| D-TDNN-SS | 128 | 3.1 | AAM-Softmax | Cosine | 1.22 | 0.13 | 0.20 |
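For readers unfamiliar with the metrics in the table, the following is a minimal sketch of how an equal error rate (EER) and a normalized minimum detection cost (minDCF) can be computed from trial scores. It is an illustration, not the repository's scoring code. It assumes that `DCF_0.01` and `DCF_0.001` denote minDCF at target priors of 0.01 and 0.001 with unit miss and false-alarm costs; the function names are hypothetical, and tied scores are handled only approximately.

```python
def error_rates(scores, labels):
    """Sweep a threshold over the sorted scores.

    labels: 1 for target (same-speaker) trials, 0 for non-target trials.
    Returns two lists, P_miss and P_fa, with one entry per threshold position.
    """
    pairs = sorted(zip(scores, labels), key=lambda p: p[0])
    n_tar = sum(1 for _, label in pairs if label == 1)
    n_non = len(pairs) - n_tar
    if n_tar == 0 or n_non == 0:
        raise ValueError("need at least one target and one non-target trial")
    # Threshold below every score: all trials accepted.
    p_miss, p_fa = [0.0], [1.0]
    missed, rejected_non = 0, 0
    for _, label in pairs:
        # Raising the threshold past this score rejects this trial.
        if label == 1:
            missed += 1
        else:
            rejected_non += 1
        p_miss.append(missed / n_tar)
        p_fa.append((n_non - rejected_non) / n_non)
    return p_miss, p_fa


def compute_eer(scores, labels):
    """EER as a fraction: the point where P_miss and P_fa are closest."""
    p_miss, p_fa = error_rates(scores, labels)
    i = min(range(len(p_miss)), key=lambda k: abs(p_miss[k] - p_fa[k]))
    return (p_miss[i] + p_fa[i]) / 2.0


def compute_min_dcf(scores, labels, p_target=0.01, c_miss=1.0, c_fa=1.0):
    """Minimum detection cost, normalized by the best trivial system."""
    p_miss, p_fa = error_rates(scores, labels)
    min_cost = min(
        c_miss * pm * p_target + c_fa * pf * (1.0 - p_target)
        for pm, pf in zip(p_miss, p_fa)
    )
    return min_cost / min(c_miss * p_target, c_fa * (1.0 - p_target))
```

Under these assumptions, `compute_eer` returns a fraction, so it would be multiplied by 100 to compare with the EER (%) column, and `compute_min_dcf(..., p_target=0.001)` would correspond to the last column.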

If you find D-TDNN helpful for your research, please cite:

    @inproceedings{DBLP:conf/interspeech/YuL20,
      author    = {Ya-Qi Yu and
                   Wu-Jun Li},
      title     = {Densely Connected Time Delay Neural Network for Speaker Verification},
      booktitle = {Annual Conference of the International Speech Communication Association (INTERSPEECH)},
      pages     = {921--925},
      year      = {2020}
    }