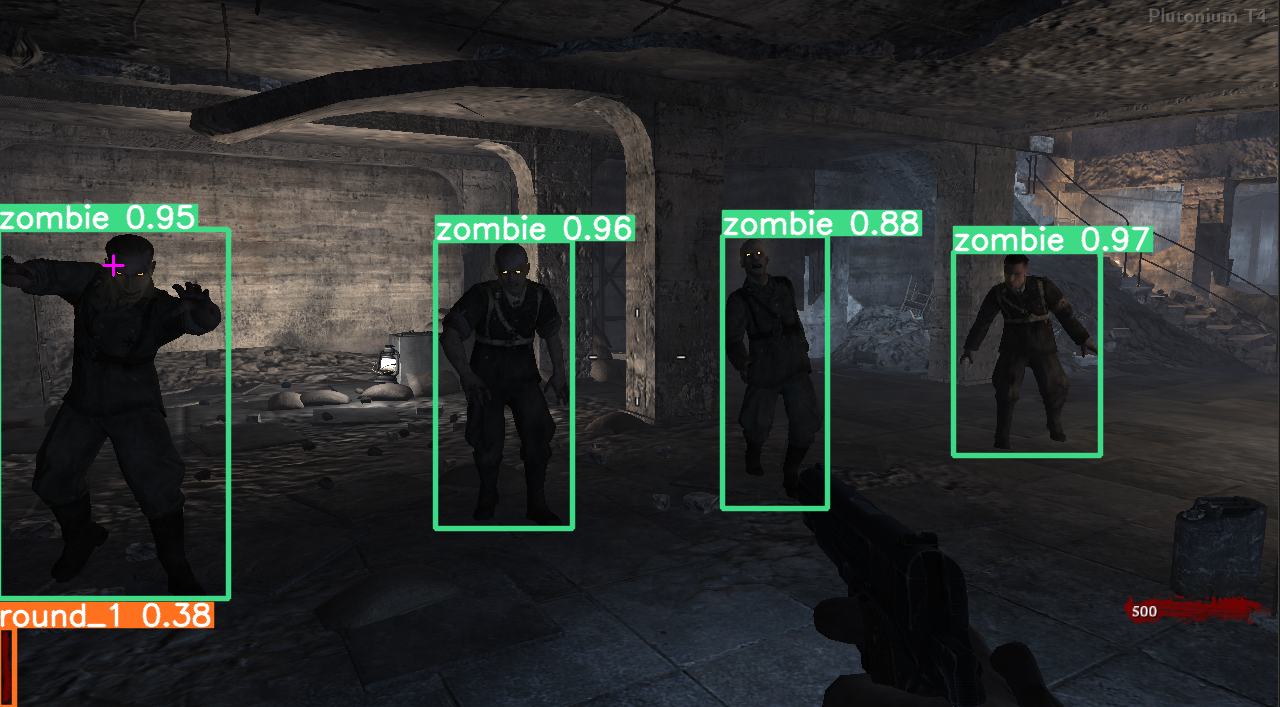

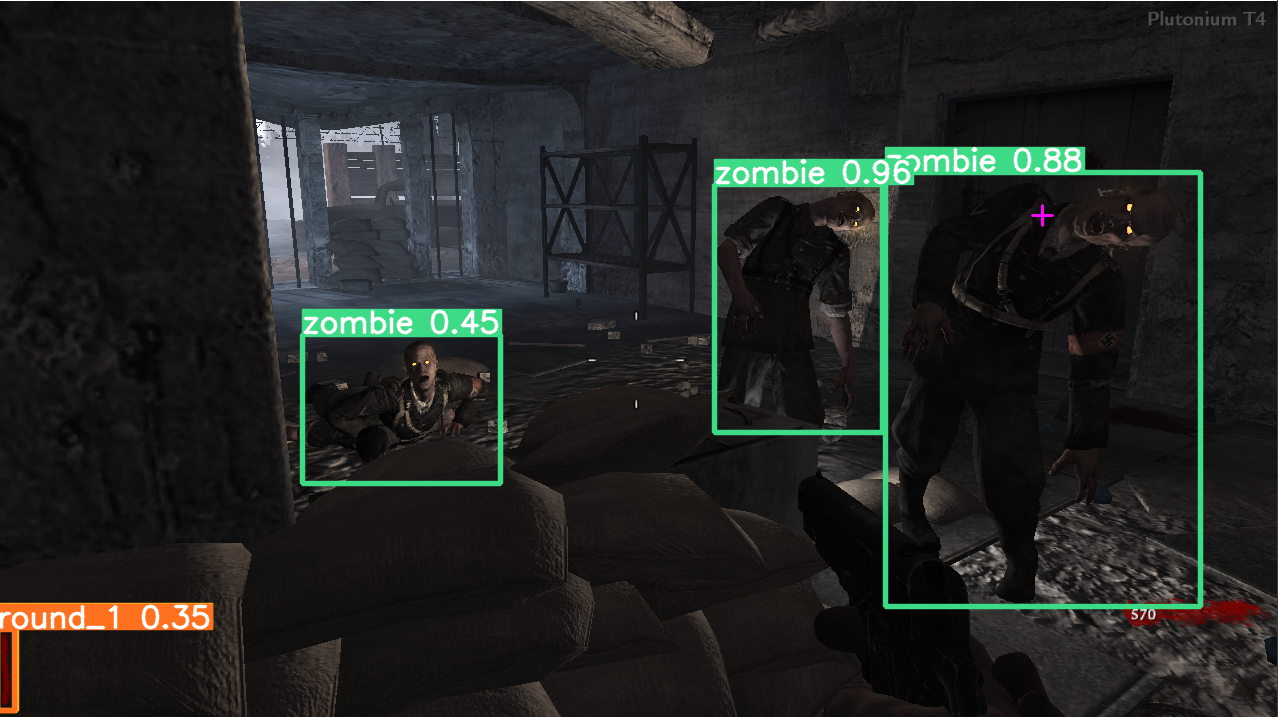

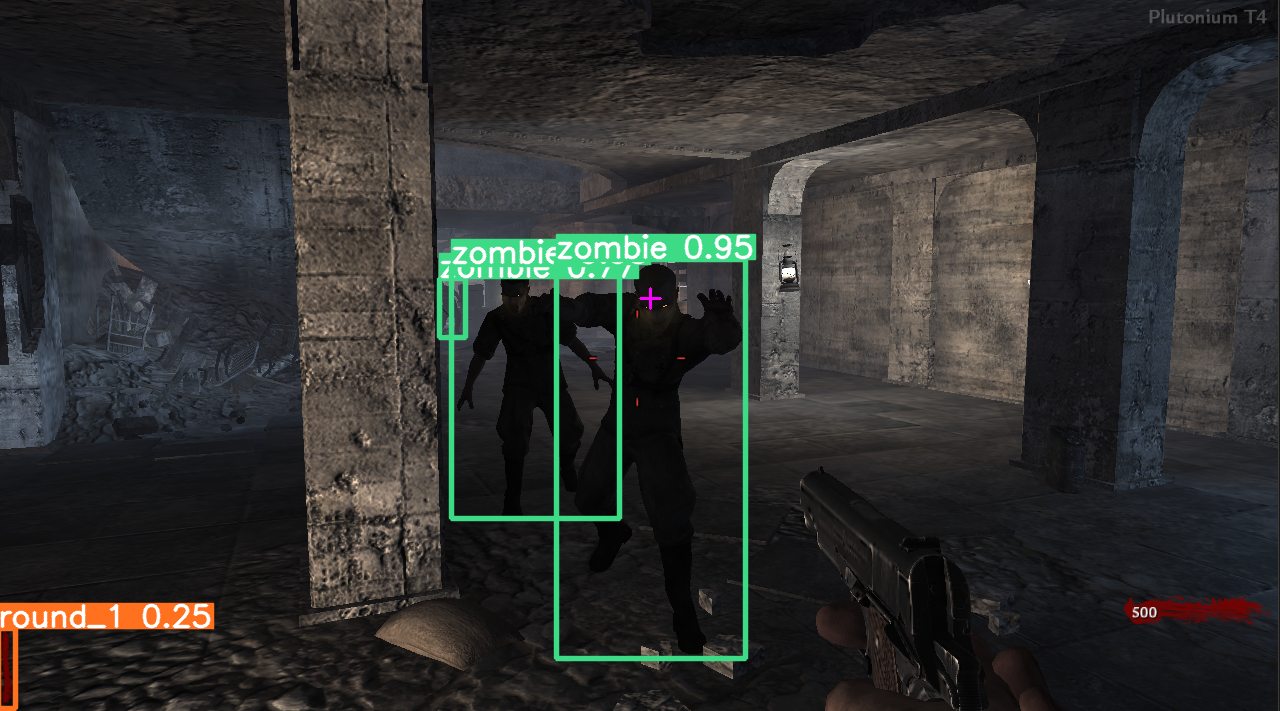

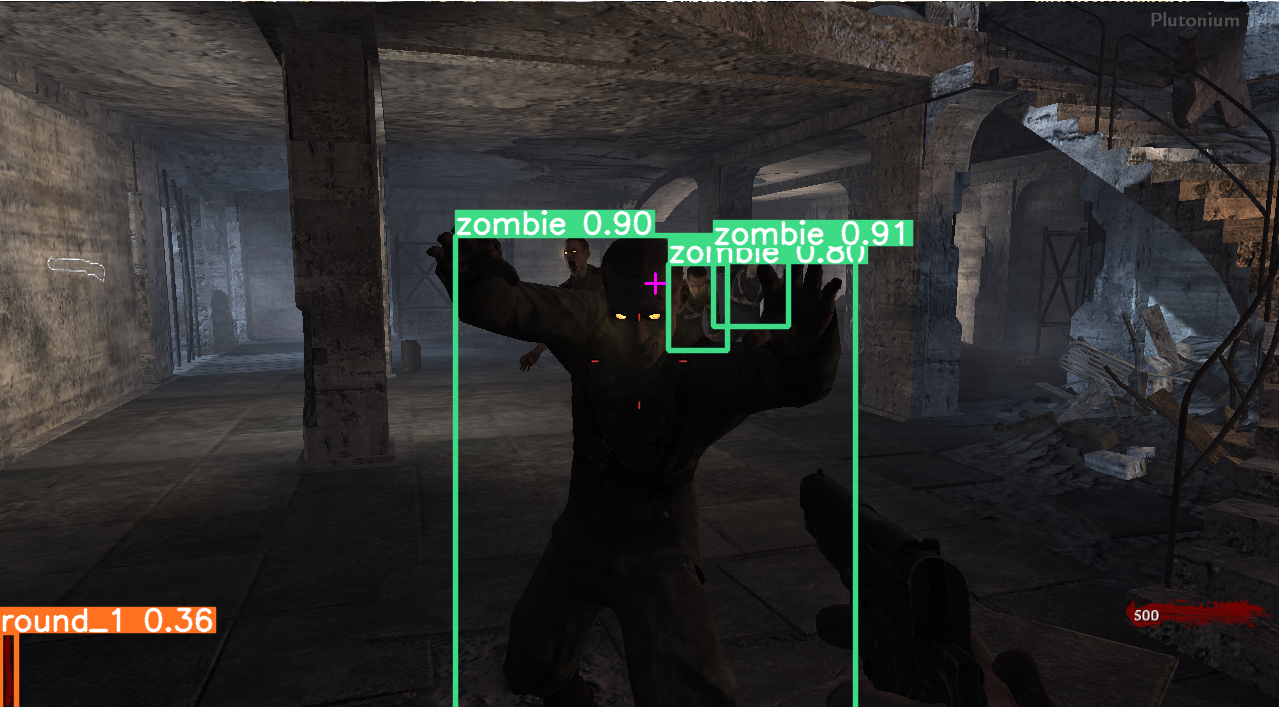

Nazi Zombies AI Object Detection

Experimenting with YOLOv8 object detection to detect objects in Call of Duty: World at War - Nazi Zombies. The current YOLOv8 model was trained only on Nacht der Untoten, with 843 training images and 94 validation images.

Current model runs at about 20 FPS detecting zombies.

Tools used

- YOLOv8 Ultralytics

- PyTorch 2.0.1

- CUDA 11.8

- Win32 API for screenshots

- OBS for screen recording

- ffmpeg for splitting video into images

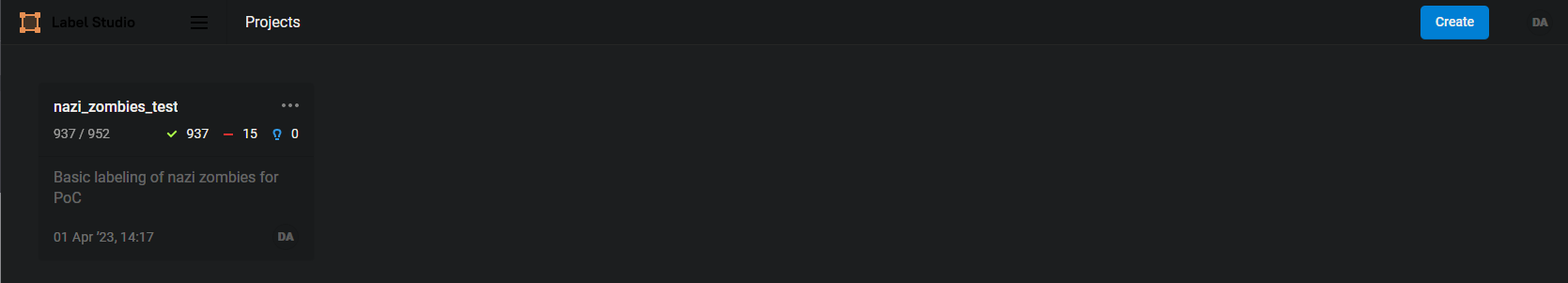

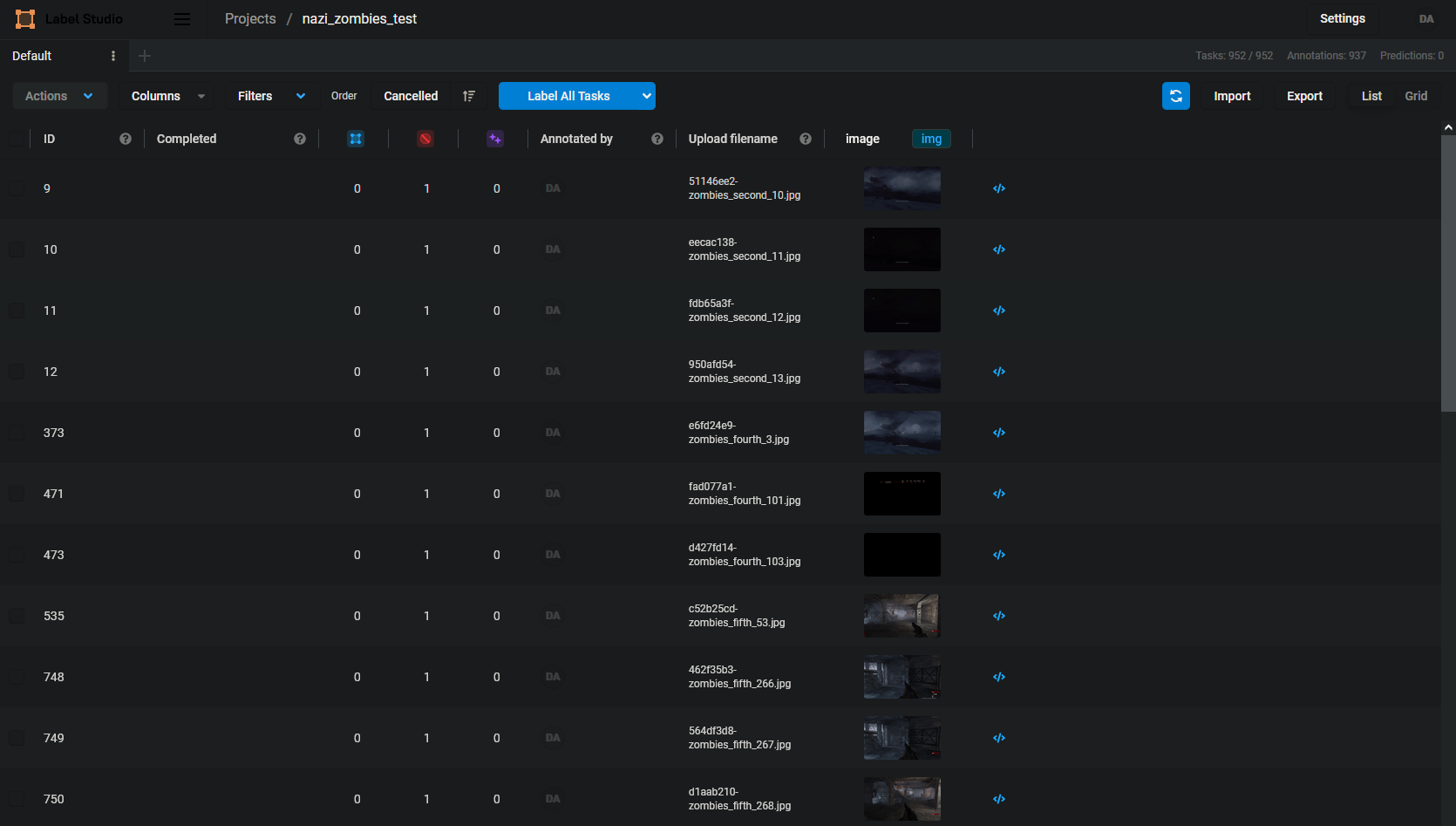

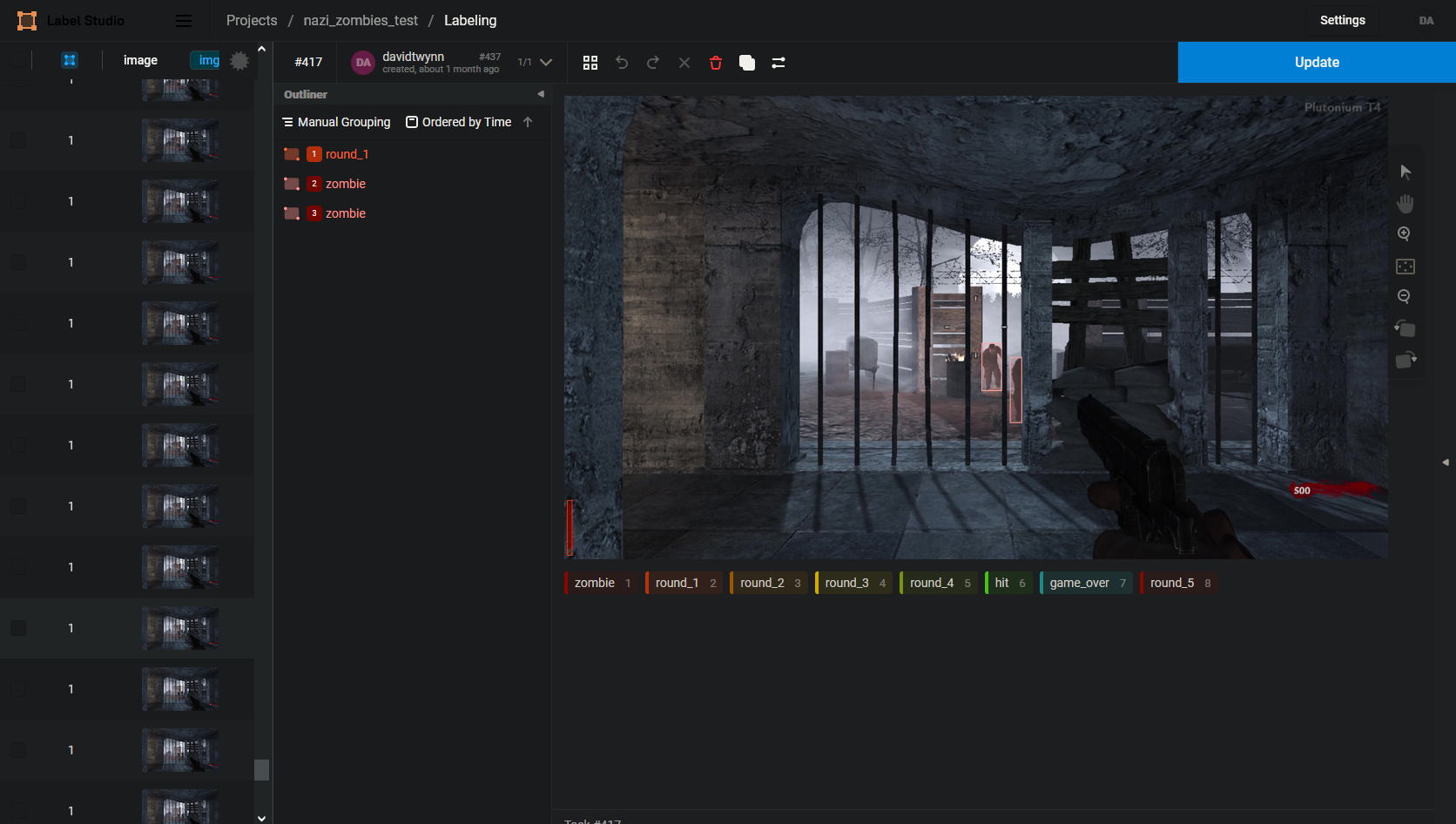

- Label Studio for drawing bounding boxes

PC Specs

- i7 4770k

- GTX 980

- 16GB RAM

First time setup

Create virtual environment

py -3.9 -m venv venv

Activate environment

venv/Scripts/activate

Upgrade pip

py -m pip install --upgrade pip

Install Nvidia CUDA and PyTorch

Gotchas:

- Assumes you have an NVIDIA GPU on Windows that supports CUDA

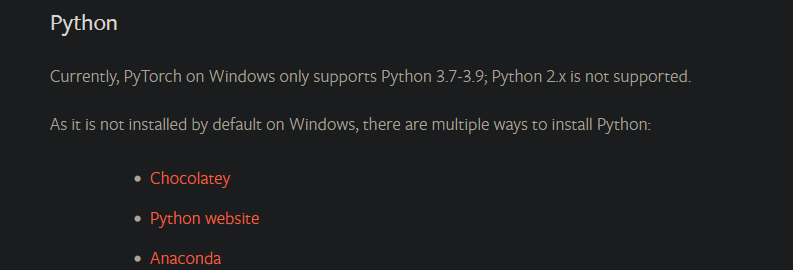

- PyTorch requires a specific version of Python on Windows. In this case only Python 3.9 works (3.11 is currently the newest) - https://pytorch.org/get-started/locally/#windows-python

- PyTorch only supports a specific version of Nvidia CUDA. The getting-started page for PyTorch says use CUDA 11.8. This is not currently the newest.

Steps:

- Check the PyTorch Get Started page and select your OS, Python, and newest CUDA version.

- Install the version of NVIDIA CUDA supported by PyTorch

- Check the PyTorch Get Started page for the version of CUDA to use

This is a 2.6GB install

Use "--no-cache-dir" if a previously cached download is being reused for the install and causing problems.

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118 --no-cache-dir

Install requirements

py -m pip install -r requirements.txt

Validate PyTorch is working with the GPU

python

>>> import torch

>>> torch.cuda.is_available()

True

Run

Open Plutonium T4 - World at War Nazi Zombies

Script looks for this window name - "Plutonium T4 Singleplayer (r3417)"

Run script

python .\zombies_object_detection.py

Detection:

The YOLOv8 model was trained on Plutonium T4 Call of Duty: World at War Nazi Zombies. All training footage was captured on Nacht der Untoten while standing in the initial spawn position and looking around. This was an initial test to detect the AI and have another AI play the game by looking around and shooting. The plan was to go back later, after learning what could be improved, and redo the model from scratch. The model detected zombies better than expected when moving around in other locations.

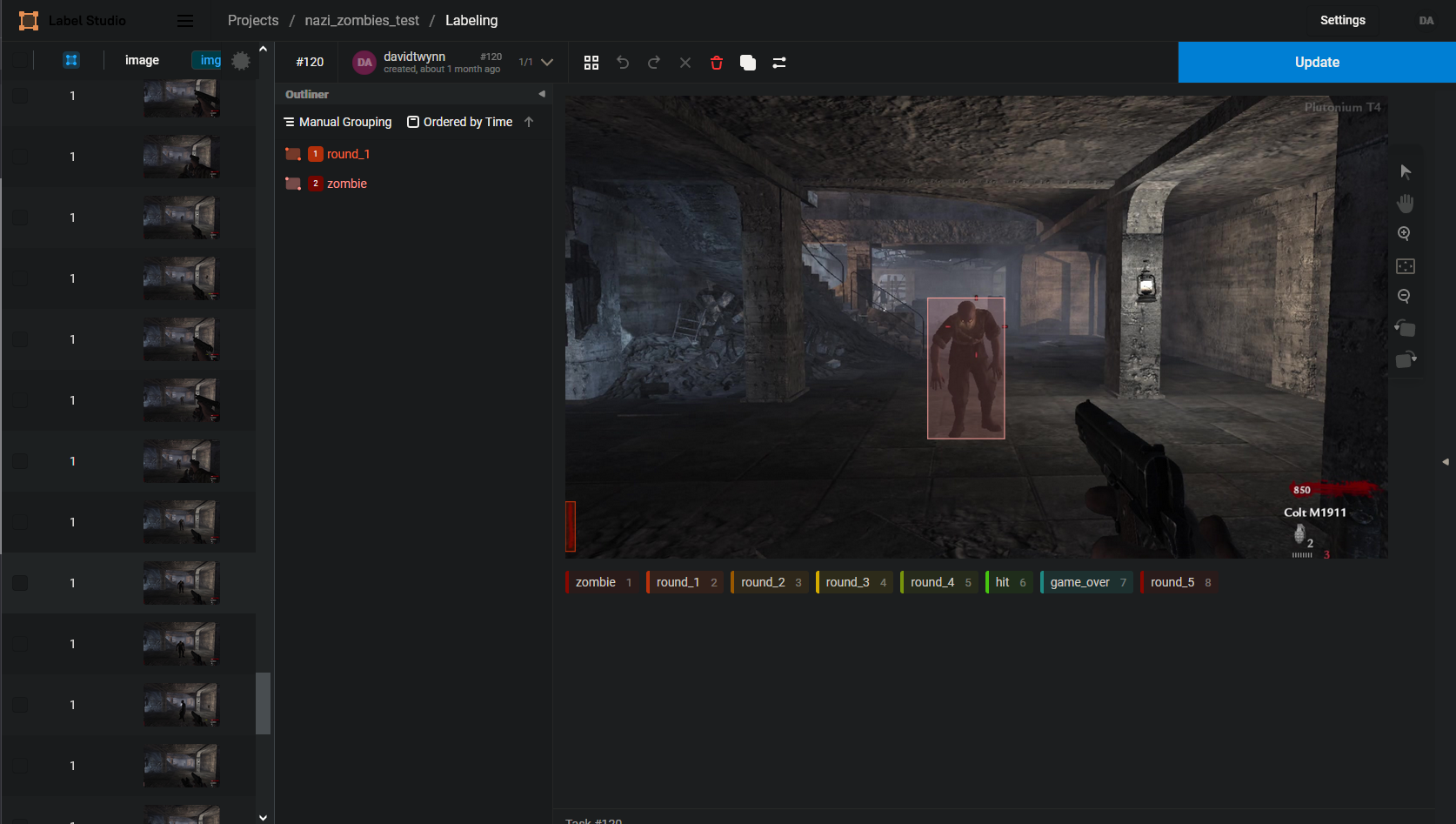

One of the issues was labeling round numbers. The round 1 label sometimes fires on other rounds because the tally marks look the same. I tried logic where the next round had to be the current round + 1 and the round would not switch until 10 seconds had passed. That seemed better, but another way to detect rounds is still needed.

Another issue was hit detection. The model was trained so that a full red screen means a hit, but in practice it detects hundreds of hits. Logic was added to count only one hit when many were detected within 1 second.

I am also not sure how to extend a dataset like this. If the model needed to detect new data, could the new class be trained on just a small subset of the data, or would all the data have to be relabeled? I am thinking it would all have to be relabeled.
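The round and hit filtering described above could look roughly like the sketch below. It is not the code from zombies_object_detection.py: the class names `RoundTracker` and `HitCounter`, the injectable `clock` parameter, and the choice to measure the 1 second window from the most recent hit detection are all assumptions made for illustration.

```python
import time
from typing import Callable, Optional


class RoundTracker:
    """Accept a detected round only if it is current + 1 and the hold time has passed."""

    def __init__(self, hold_seconds: float = 10.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.hold_seconds = hold_seconds
        self.clock = clock
        self.current = 0
        self.last_change: Optional[float] = None

    def update(self, detected_round: int) -> int:
        now = self.clock()
        is_next = detected_round == self.current + 1
        held = (self.last_change is None
                or now - self.last_change >= self.hold_seconds)
        if is_next and held:
            self.current = detected_round
            self.last_change = now
        return self.current


class HitCounter:
    """Collapse a burst of per-frame 'hit' detections into a single hit."""

    def __init__(self, window_seconds: float = 1.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.window_seconds = window_seconds
        self.clock = clock
        self.hits = 0
        self.last_seen: Optional[float] = None

    def update(self, hit_detected: bool) -> int:
        if not hit_detected:
            return self.hits
        now = self.clock()
        # Assumption: a new hit starts only after a gap longer than the window.
        if self.last_seen is None or now - self.last_seen > self.window_seconds:
            self.hits += 1
        self.last_seen = now
        return self.hits
```

Call `update` once per processed frame with the round number or hit flag the model reported. Passing a fake `clock` makes the timing logic easy to test without waiting in real time.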

843 training images and 94 validation images
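The source does not say how the images were divided, but these counts are consistent with a 90/10 split of 937 labeled frames. A minimal sketch of such a split, assuming a seeded random shuffle and hypothetical file names:

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(items: Sequence[str], train_fraction: float = 0.9,
                  seed: int = 0) -> Tuple[List[str], List[str]]:
    """Shuffle the items reproducibly and cut them into train and validation lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Hypothetical frame names; the real names come from the ffmpeg output pattern.
frames = [f"frame_{i}.jpg" for i in range(937)]
train, val = split_dataset(frames)
print(len(train), len(val))  # 843 94
```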

Steps:

- Get the brightness level in game so that if it changes in the future we can go back

  - r_gamma command in Plutonium

  - Gamma cannot be changed when not in full screen, so training just used the default brightness

- Figure out recording of the game

  - Download and install OBS screen recorder - https://obsproject.com/download

  - OBS -> Window Recording > Expand to screen size > settings > 60 fps, indistinguishable quality > output dir, start recording

- Split recording into frames

  - ffmpeg - https://www.gyan.dev/ffmpeg/builds/ full download, put into program files, added to Windows Path User Environment Variable

  - ffmpeg.exe -i .\zombies_recording.mkv -vf fps=1 -qscale:v 1 zombies_third*%d.jpg

  - -vf fps=1 to make sure 1 frame of the video per second goes to an image

  - %d to increase the file name number each time

  - -qscale:v 1 to set the JPEG quality scale to the highest quality, which is 1. The scale runs from 1 (best) to 31 (worst)

- Figure out labeler to use

  - label-studio # newer version of labelImg

  - python -m venv venv/

  - venv/Scripts/activate

  - py -m pip install --upgrade pip

  - pip install label-studio

  - label-studio start

  - 127.0.0.1:8080

-

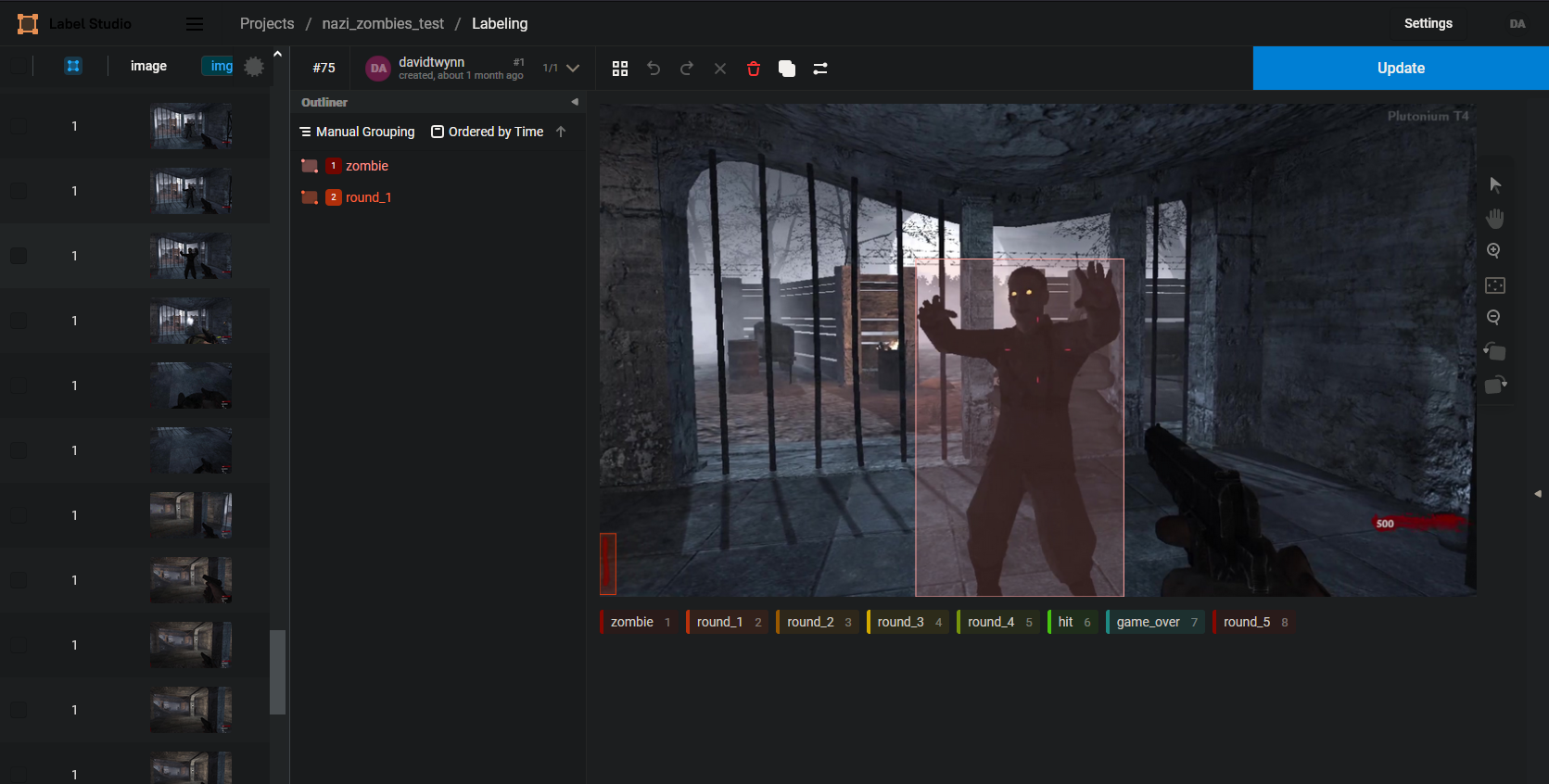

Label zombies, round, hit, and game over

- Train on YOLOv8

  - Had an issue where YOLOv8 would point to YOLOv5. Didn't realize there were AppData settings for Ultralytics

  - PC crashed when installing YOLOv8. Fix was to add --no-cache-dir to the install

  - pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118 --no-cache-dir

  - pip install ultralytics

  - Copy images to folder

  - yolo task=detect mode=train model=yolov8n.pt imgsz=1280 data=zombies_1.yaml epochs=100 batch=4 name=zombies_1_8n

- Figure out running the pretrained YOLOv8 model on screen recording

  - Used the Win32 API to take screenshots of the game and send them to YOLOv8 to process

- Figure out basic controls

  - Initial testing was done with pyautogui to control the mouse. Ran into issues with the new position to move the mouse to. Not sure whether these were scaling issues or related to the mouse always being in the middle. Turning 90, 180, or 360 degrees seemed somewhat deterministic, but there were issues when actually aiming and shooting.

  - Was able to determine where to shoot by looking for the largest zombie bounding box and putting a crosshair at the top middle.

- Look into how the data from the model could be parsed and then sent to pyautogui or NEAT.
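The aiming rule from the basic controls step (largest zombie bounding box, crosshair at the top middle) can be sketched as below. The function name `pick_target` and the `(x1, y1, x2, y2)` pixel box format with the origin at the top-left of the screenshot are assumptions, not taken from the project's script.

```python
from typing import Iterable, Optional, Tuple

# Assumed box format: (x1, y1, x2, y2) in pixels, origin at the top-left corner.
Box = Tuple[float, float, float, float]


def box_area(box: Box) -> float:
    """Area of a box in square pixels."""
    x1, y1, x2, y2 = box
    return (x2 - x1) * (y2 - y1)


def pick_target(boxes: Iterable[Box]) -> Optional[Tuple[float, float]]:
    """Return the top-middle point of the largest box, or None when there are no boxes."""
    best = max(boxes, key=box_area, default=None)
    if best is None:
        return None
    x1, y1, x2, _ = best
    # The smaller y value is the top edge because y grows downward in image coordinates.
    return ((x1 + x2) / 2, y1)
```

Using the largest box as a stand-in for the closest zombie is a heuristic: a nearby zombie usually covers more of the screen, but a partially hidden one may not.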

classes:

- game_over

- hit

- round_1

- round_2

- round_3

- round_4

- round_5

- zombie
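The contents of zombies_1.yaml are not shown in this README. As a rough sketch, an Ultralytics dataset file for these classes could be generated as follows. The `datasets/zombies_1` root, the `images/train` and `images/val` folders, and the class index order (taken from the list above) are assumptions.

```python
from typing import Sequence

# Class order assumed to match the list above; the real indices come from the label export.
CLASS_NAMES = [
    "game_over", "hit", "round_1", "round_2",
    "round_3", "round_4", "round_5", "zombie",
]


def dataset_yaml(root: str, names: Sequence[str]) -> str:
    """Build the text of a dataset YAML with path, train, val, and names entries."""
    lines = [
        f"path: {root}",
        "train: images/train",
        "val: images/val",
        "names:",
    ]
    lines += [f"  {index}: {name}" for index, name in enumerate(names)]
    return "\n".join(lines) + "\n"


# Hypothetical dataset root used only for illustration.
print(dataset_yaml("datasets/zombies_1", CLASS_NAMES))
```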

Detection Examples:

zombies_detection_1.720p.mp4

Labeling

Labeling was done in Label Studio. It seemed to be a nice editor.