Panza is an automated email assistant customized to your writing style and past email history.

Its main features are as follows:

- Panza produces a fine-tuned LLM that matches your writing style, pairing it with a Retrieval-Augmented Generation (RAG) component which helps it produce relevant emails.

- Panza can be trained and run entirely locally. Currently, it requires a single GPU with 16-24 GiB of memory, but we also plan to release a CPU-only version. At no point in training or execution is your data shared with the entities that trained the original LLMs, with LLM distribution services such as Huggingface, or with us.

- Training and execution are also quick: for a dataset on the order of 1000 emails, training Panza takes well under an hour, and generating a new email takes a few seconds at most.

- Your emails, exported to `mbox` format (see tutorial below).

- A computer, preferably with an NVIDIA GPU with at least 24 GiB of memory (alternatively, check out running in Google Colab).

- A Hugging Face account to download the models (free of charge).

- [Optional] A Weights & Biases account to log metrics during training (free of charge).

- Basic Python and Unix knowledge, such as building environments and running Python scripts.

- No prior LLM experience is needed.

For most email clients, it is possible to download a user's past emails in a machine-friendly .mbox format. For example, Gmail allows you to do this via Google Takeout, whereas Thunderbird supports it through various plugins.
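To get a feel for what an .mbox export contains, the following minimal sketch reads one with Python's standard-library `mailbox` module and collects the plain-text body of each message. It is not Panza's own extraction code; the function name `read_plain_text_bodies` is a hypothetical helper introduced here for illustration.

```python
import mailbox


def read_plain_text_bodies(mbox_path):
    """Return (subject, body) tuples for each message that has a text/plain part.

    Hypothetical helper for illustration; not part of Panza.
    """
    results = []
    # create=False makes a missing file raise an error instead of creating it.
    box = mailbox.mbox(mbox_path, create=False)
    try:
        for message in box:
            # walk() yields the message itself and, for multipart mail, every sub-part.
            for part in message.walk():
                if part.get_content_type() != "text/plain":
                    continue
                payload = part.get_payload(decode=True) or b""
                charset = part.get_content_charset() or "utf-8"
                try:
                    body = payload.decode(charset, errors="replace")
                except LookupError:
                    # Unknown charset label: fall back to UTF-8.
                    body = payload.decode("utf-8", errors="replace")
                results.append((message.get("Subject", ""), body.strip()))
                break  # keep only the first text/plain part per message
    finally:
        box.close()
    return results
```

For example, `read_plain_text_bodies("data/Sent.mbox")` would list the subject and body of each exported email, which is a quick way to check that an export looks sensible before going further.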

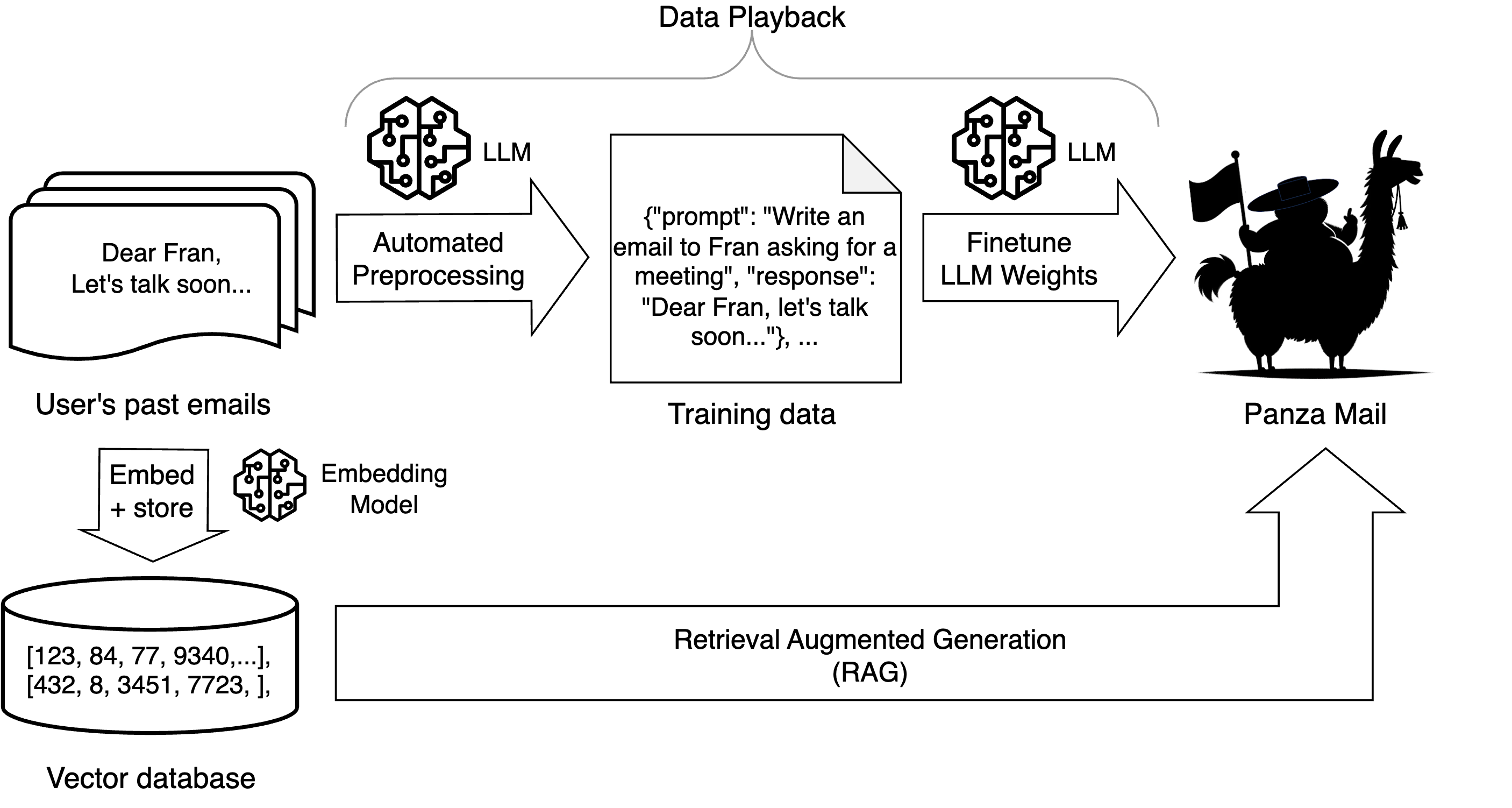

One key part of Panza is a dataset-generation technique we call data playback: Given some of your past emails in .mbox format, we automatically create a training set for Panza by using a pretrained LLM to summarize the emails in instruction form; each email becomes a (synthetic instruction, real email) pair.

We then use these pairs to "play back" your sent emails: the LLM receives only the instruction and has to generate the "ground truth" email, which serves as the training target.

We find that this approach is very useful for the LLM to "learn" the user's writing style.
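The shape of the data playback set can be sketched in a few lines. In the sketch below, `placeholder_summarize` is a hypothetical stand-in for the pretrained LLM that writes the instruction, and the `"email"` field name is an assumption made for illustration (only the `"summary"` field is named in this README).

```python
import json


def placeholder_summarize(email_text):
    """Hypothetical stand-in for the LLM that turns an email into an instruction."""
    lines = email_text.strip().splitlines()
    first_line = lines[0] if lines else ""
    return f"Write an email that starts by saying: {first_line}"


def build_playback_pairs(emails, summarize=placeholder_summarize):
    """Pair each real email with a synthetic instruction describing it."""
    # "email" is an assumed field name; "summary" holds the synthetic instruction.
    return [{"summary": summarize(text), "email": text} for text in emails]


def to_jsonl(pairs):
    """Serialize the pairs as one JSON object per line."""
    return "\n".join(json.dumps(pair, ensure_ascii=False) for pair in pairs)
```

During training, the `"summary"` text would be the model input and the `"email"` text the target, so the model learns to expand a short instruction into an email written the way you would write it.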

We then use parameter-efficient finetuning to train the LLM on this dataset, locally. We found that we get the best results with the RoSA method, which combines low-rank (LoRA) and sparse finetuning. If parameter efficiency is not a concern, that is, you have a more powerful GPU, then regular, full-rank/full-parameter finetuning can also be used. We find that a moderate amount of further training strikes the right balance: it matches the writer's style without memorizing irrelevant details in past emails.
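To see why a low-rank plus sparse update is cheaper than full finetuning, the sketch below counts trainable parameters for a single weight matrix. It assumes the update has the form of a low-rank product plus a sparse matrix; the layer size, rank, and density used in the usage note are illustrative values, not Panza's actual settings.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when every entry of a d_out x d_in matrix is updated."""
    return d_out * d_in


def low_rank_params(d_out, d_in, rank):
    """Trainable parameters of a low-rank update B @ A (B: d_out x rank, A: rank x d_in)."""
    return rank * (d_out + d_in)


def sparse_params(d_out, d_in, density):
    """Trainable parameters of a sparse update touching a `density` fraction of entries."""
    return int(density * d_out * d_in)


def low_rank_plus_sparse_params(d_out, d_in, rank, density):
    """Combined count for a low-rank update plus a sparse update on the same matrix."""
    return low_rank_params(d_out, d_in, rank) + sparse_params(d_out, d_in, density)
```

For a hypothetical 4096 x 4096 layer, full finetuning trains 16,777,216 parameters, while a rank-8 low-rank update trains 65,536; adding a sparse update at 1% density still keeps the total at roughly 1.4% of the full count.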

Once we have a custom user model, Panza can be run locally together with a Retrieval-Augmented Generation (RAG) module. Specifically, this functionality stores past emails in a database and provides a few relevant emails as context for each new query. This allows Panza to better insert specific details, such as a writer's contact information or frequently used Zoom links.
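The retrieval step can be illustrated with a small self-contained sketch. Note the swap: Panza builds a vector database from embeddings, whereas this sketch uses plain bag-of-words cosine similarity so that it runs without extra dependencies. The function names and the prompt layout are hypothetical.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercased token counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, past_emails, k=2):
    """Return the k past emails most similar to the query."""
    query_vec = bag_of_words(query)
    ranked = sorted(
        past_emails,
        key=lambda email: cosine_similarity(query_vec, bag_of_words(email)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(instruction, retrieved):
    """Prepend retrieved emails as context (hypothetical layout, not Panza's preamble)."""
    context = "\n\n".join(f"Previous email:\n{email}" for email in retrieved)
    return f"{context}\n\nInstruction: {instruction}"
```

With past emails that include one containing your usual Zoom link, a query such as "Send the Zoom link for the sync" ranks that email first, so the link ends up in the model's context.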

The overall structure of Panza is as follows:

- Make sure you have a version of conda installed.

- Run `source prepare_env.sh`. This script will create a conda environment named `panza` and install the required packages.

Run the following commands to pull a docker image with all the dependencies installed.

docker pull istdaslab/panzamail

docker run -it --gpus all istdaslab/panzamail /bin/bash

or alternatively, you can build the image yourself:

docker build . -f Dockerfile -t istdaslab/panzamail

docker run -it --gpus all istdaslab/panzamail /bin/bash

Inside the container, you can activate the `panza` environment with:

micromamba activate panza

To quickly get started with building your own personalized email assistant, follow the steps below:


We describe how to do this for Gmail via Google Takeout.

- Go to https://takeout.google.com/.

- Click `Deselect all`.

- Find the `Mail` section (search for the phrase `Messages and attachments in your Gmail account in MBOX format`).

- Select it.

- Click on `All Mail data included` and deselect everything except `Sent`.

- Scroll to the bottom of the page and click `Next step`.

- Click on `Create export`.

- Wait for the download link to arrive in your inbox.

- Download `Sent.mbox` and place it in the `data/` directory.

For Outlook accounts, we suggest doing this via a Thunderbird plugin for exporting a subset of your email in MBOX format, such as this add-on.

At the end of this step you should have the downloaded emails placed inside data/Sent.mbox.

Panza is configured through a set of environment variables defined in `scripts/config.sh` and shared across all scripts.

The LLM prompt is controlled by a set of prompt_preambles that give the model more insight about its role, the user and how to reuse existing emails for Retrieval-Augmented Generation (RAG). See more details in the prompting section.

- Modify the environment variable `PANZA_EMAIL_ADDRESS` inside `scripts/config.sh` with your own email address.

- Modify `prompt_preambles/user_preamble.txt` with your own information. If you choose, this can even be empty.

- Log in to Hugging Face to be able to download pretrained models: `huggingface-cli login`.

- [Optional] Log in to Weights & Biases to log metrics during training: `wandb login`. Then, set `PANZA_WANDB_DISABLED=False` in `scripts/config.sh`.

You are now ready to move to the `scripts` directory.

cd scripts

- Run `./extract_emails.sh`. This extracts your emails in text format to `data/<username>_clean.jsonl`, which you can manually inspect.

- If you wish to eliminate any emails from the training set (e.g. containing certain personal information), you can simply remove the corresponding rows.
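Removing rows by hand works for a few emails; for a larger cleanup, a small filter can help. The sketch below drops every JSONL row whose content mentions any of a list of blocked terms. It searches the whole row rather than a specific field, so it makes no assumption about the field names in the file; `drop_rows_containing` is a hypothetical helper, not a Panza script.

```python
import json


def drop_rows_containing(jsonl_text, blocked_terms):
    """Return the JSONL text without rows that mention any blocked term.

    Hypothetical helper for illustration; matching is case-insensitive.
    """
    blocked = [term.lower() for term in blocked_terms]
    kept = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        row = json.loads(line)  # raises on malformed rows instead of hiding them
        # Re-serialize without ASCII escaping so non-ASCII terms can match too.
        haystack = json.dumps(row, ensure_ascii=False).lower()
        if any(term in haystack for term in blocked):
            continue
        kept.append(line)
    return "\n".join(kept) + ("\n" if kept else "")
```

A typical use would be to read `data/<username>_clean.jsonl`, pass its text through this function with the terms you want to exclude, and write the result back after checking it.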

- Run `./prepare_dataset.sh`. This script takes care of all the prerequisites before training:

  - Creates synthetic prompts for your emails as described in the data playback section. The results are stored in `data/<username>_clean_summarized.jsonl` and you can inspect the `"summary"` field.

  - Splits data into training and test subsets. See `data/train.jsonl` and `data/test.jsonl`.

  - Creates a vector database from the embeddings of the training emails, which will later be used for Retrieval-Augmented Generation (RAG). See `data/<username>.pkl` and `data/<username>.faiss`.
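The train/test split mentioned above can be pictured with a short sketch. This is not the code in `prepare_dataset.sh`; the 20% test fraction and the seed are assumptions chosen for illustration.

```python
import random


def split_rows(rows, test_fraction=0.2, seed=42):
    """Shuffle rows reproducibly and split them into (train, test) lists.

    test_fraction and seed are illustrative defaults, not Panza's settings.
    """
    shuffled = list(rows)
    # A dedicated Random instance keeps the shuffle independent of global state.
    random.Random(seed).shuffle(shuffled)
    if len(shuffled) < 2:
        return shuffled, []  # nothing sensible to hold out
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]
```

Fixing the seed means the same emails land in the test set on every run, which keeps comparisons between differently trained models fair. Building the retrieval database from the training rows only, as described above, also keeps held-out emails from leaking into the context at evaluation time.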


We currently support LLaMA3-8B-Instruct and Mistral-Instruct-v0.2 LLMs as base models; the former is the default, but we obtained good results with either model.

- [Recommended] For parameter-efficient fine-tuning, run `./train_rosa.sh`. If a larger GPU is available and full-parameter fine-tuning is possible, run `./train_fft.sh`.

- We have prepopulated the training scripts with parameter values that worked best for us. We recommend you try those first, but you can also experiment with different hyper-parameters by passing extra arguments to the training script, such as `LR`, `LORA_LR`, `NUM_EPOCHS`. All the trained models are saved in the `checkpoints` directory.

Examples:

./train_rosa.sh # Will use the default parameters.

./train_rosa.sh LR=1e-6 LORA_LR=1e-6 NUM_EPOCHS=7 # Will override LR, LORA_LR, and NUM_EPOCHS.

- Run `./run_panza_gui.sh MODEL=<path-to-your-trained-model>` to serve the trained model in a friendly GUI. Alternatively, if you prefer using the CLI to interact with Panza, run `./run_panza_cli.sh` instead.

You can experiment with the following arguments:

- If `MODEL` is not specified, it will use a pretrained `Meta-Llama-3-8B-Instruct` model by default, although Panza also works with `Mistral-7B-Instruct-v2`. Try it out to compare the style difference!

- To disable RAG, run with `PANZA_DISABLE_RAG_INFERENCE=1`.

Example:

./run_panza_gui.sh \

MODEL=/local/path/to/this/repo/checkpoints/models/panza-rosa_1e-6-seed42_7908 \

PANZA_DISABLE_RAG_INFERENCE=0 # this is the default behaviour, so you can omit it

📧 Have fun with your new email writing assistant! 📧

Panza was conceived by Nir Shavit and Dan Alistarh and built by the Distributed Algorithms and Systems group at IST Austria. The contributors are (in alphabetical order):

Dan Alistarh, Eugenia Iofinova, Eldar Kurtic, Ilya Markov, Armand Nicolicioiu, Mahdi Nikdan, Andrei Panferov, and Nir Shavit.

Contact: dan.alistarh@ist.ac.at

We thank our collaborators Michael Goin and Tony Wang at NeuralMagic and MIT for their helpful testing and feedback.