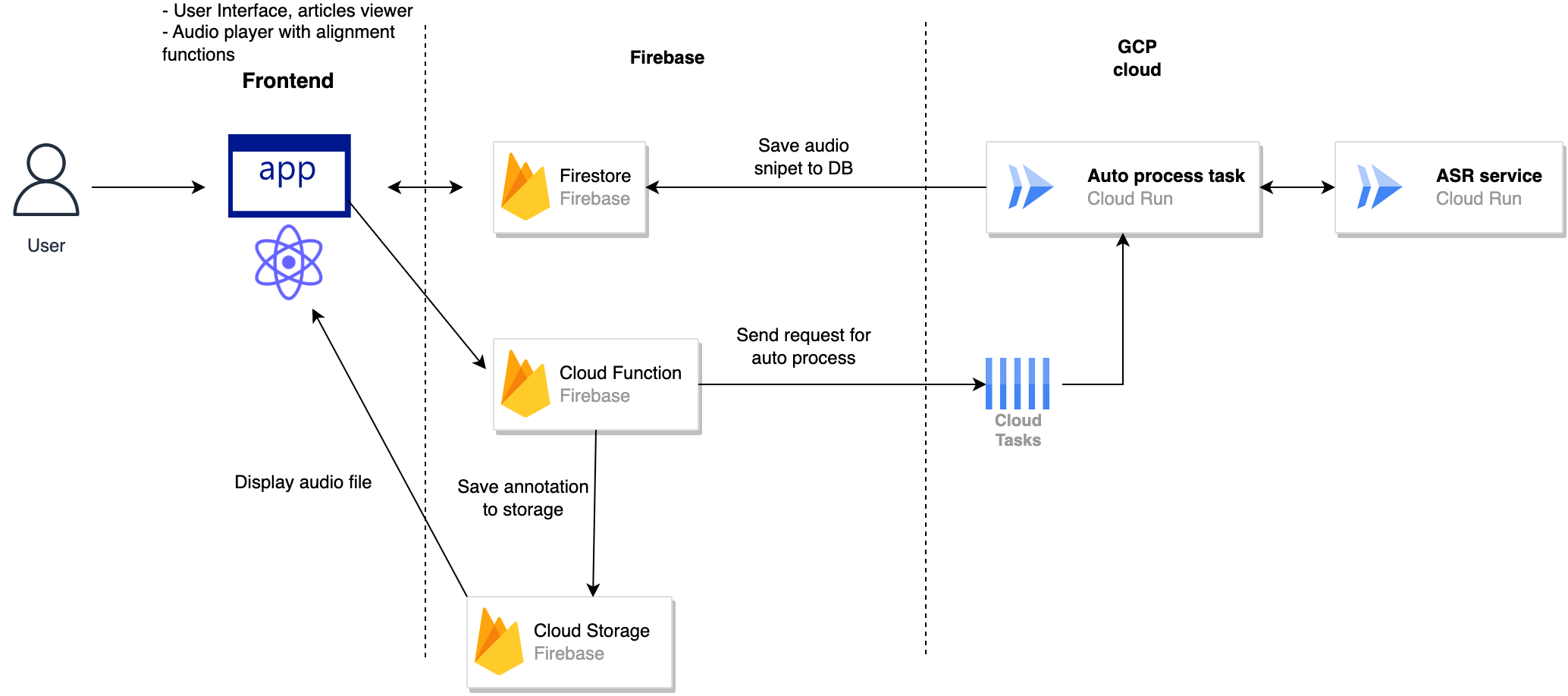

This is a tool for annotating text-to-speech (TTS) data for training. It makes your text - audio alignment easier by providing a simple interface to upload the audio and then create audio - text pair. Below is the workflow:

- Upload the audio file

- Input the long text

- System will process audio and split into small chunks

- An ASR (automatic speech recognition) service will assign suitable text to each chunk

- User can edit the text and align audio to fine tune the annotation

- The result will be saved in Firebase storage with the same name audio - text pair

The project is built using ReactJS and Firebase. The app is deployed on Firebase hosting and the data is stored in Firebase storage. Beside that the project uses Firebase Cloud Function for some services and Google Cloud Platform for ASR service.

To run the project you need to set up a Firebase project and Firebase storage. Please refer to the Firebase docs for more information.

- Create a Firebase project

- Create a Firebase storage

- Create a Firebase Cloud Function

To run the project you need to set up a Google Cloud Platform project.

-

Deploy the ASR service to Cloud Run

-

Obtain any ASR model from Hugging Face, in this case we use a wav2vec2 model. You can also use other models if you want.

-

Package the model and put it to a folder

modelinservices/audio-processingfolder -

Deploy the service to Cloud Run

-

Obtain the URL of the service. It will be used in the next step

-

Create a Cloud task queue in GCP

-

Create a Service account for Cloud task queue and give it permission to access Cloud Tasks Queue

-

Put

task-cert.jsonfile inservices/audio-processing-taskfolder -

Add the task

QUEUE_NAMEandPROJECT_IDtoservices/audio-processing-task/.envfile -

Add Firebase service account key to

services/audio-processing-taskfolder so that the service can access Firestore DB and Firebase storage -

Deploy the service to Cloud Run

-

Obtain the URL of the service. It will be used in the next step

- Reference to the Firebase docs for more information.

- Add Firebase service account key to

web-ui/functionsfolder so that the service can access Firestore DB and Firebase storage - Add URL obtained from last step to the cloud function ENV

functions.config().preprocesssourceaudio.processingurl - Deploy the cloud function to Firebase and get the URL of the service. Run

firebase deploy --only functions - Get

CREATE_SNIPPET_URLfrom the functions putservices/audio-processing-task/.envthen redeploy the ASR tasks to Cloud Run - Build and deploy the web app to Firebase hosting

web-ui/web. See the README inweb-ui/webfor more details

Check out the video here

And the screenshot folder for more details

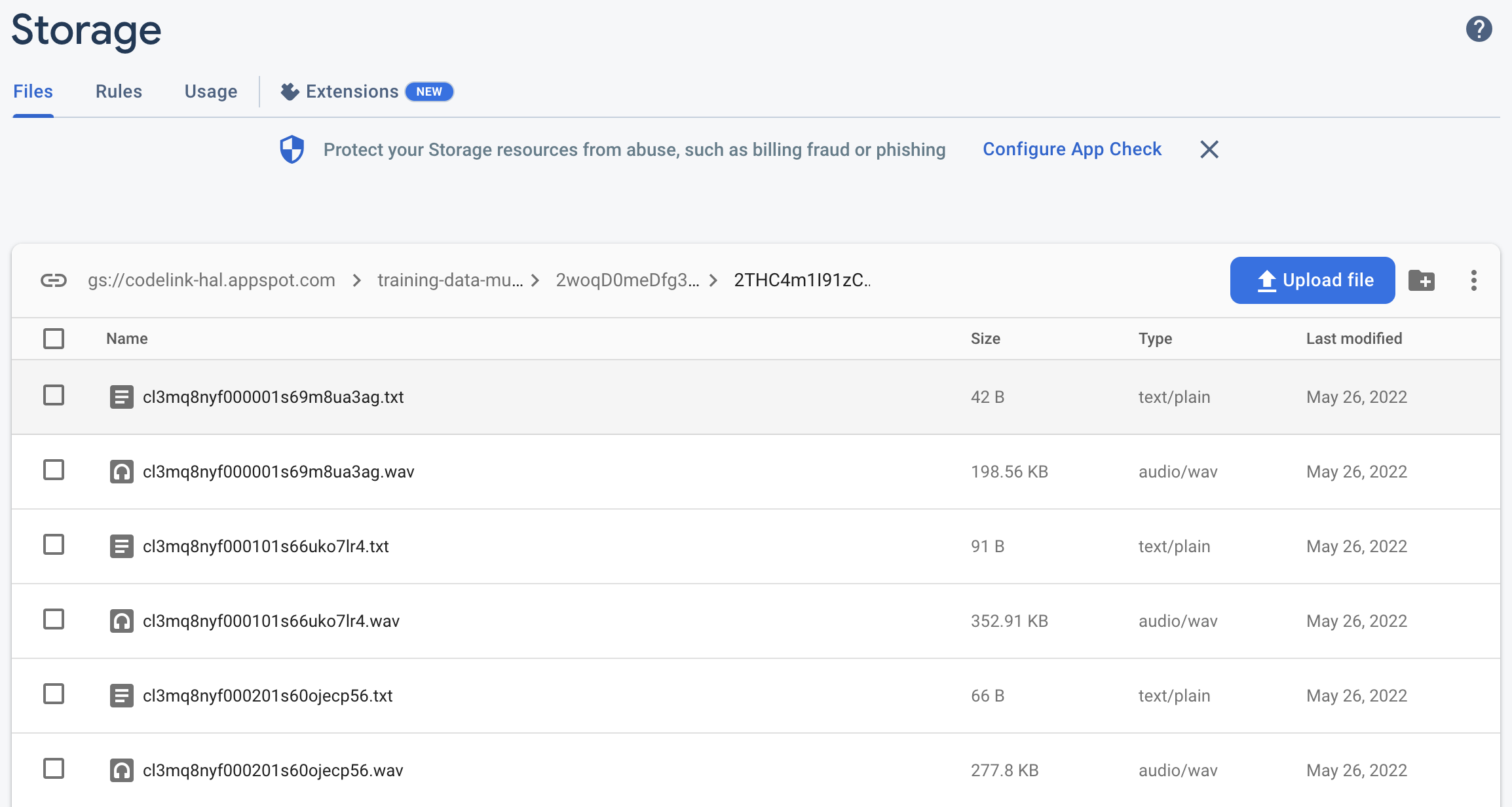

After processing the audio, the result after reading will be like this:

- Audio

- Text: "thế là sáng hôm sau, cái tin tôi về đến cổng còn hỏi thăm đường đã lan ra khắp xóm"

You can also see the result in the app and in Firebase storage.

We have released a Vietnamese dataset using this tool. You can find it here This is a dataset for Vietnamese TTS. It contains 10000 audio - text pairs. The audio is recorded by a professional voice actor. The text is collected from news articles from the internet. The dataset is used for training a TTS model for Vietnamese language.

We have trained a Vietnamese TTS model using with the help of this tool for preparing the dataset. This model is used at https://baonoi.ai to generate voice for news articles. It is available here