Implementing a reinforcement learning environment and algorithms for networking from scratch is a difficult task. Inspired by the work of ns3-gym, we developed RL4Net (Reinforcement Learning for Networking) to facilitate research on, and simulation of, reinforcement learning for networking.

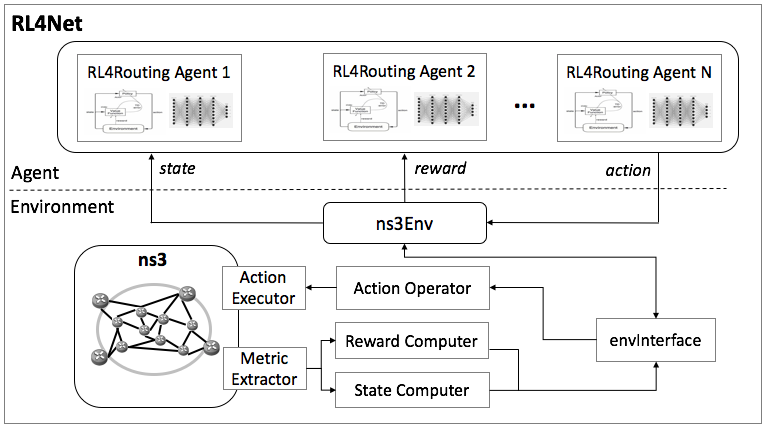

The figure above shows the architecture of RL4Net.

RL4Net is composed of two functional blocks:

- Environment: The environment is built on the widely used ns3 network simulator. We extend ns3 with six components:
  - Metric Extractor for computing quality metrics such as delay and loss from ns3;
  - Computers for translating quality metrics into DRL state and reward;
  - Action Operator for getting action commands from the agent;
  - Action Executor for performing ns3 operations according to actions;
  - ns3Env for transforming the ns3 object into a DRL environment;
  - envInterface for translating between ns3 data and DRL factors.
- Agent: The agent is a container for a DRL-based cognitive routing algorithm. An agent can be built on various deep learning frameworks such as PyTorch and TensorFlow.
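
The interaction between the two blocks can be pictured as a standard agent-environment loop. The following is only a minimal sketch under stated assumptions: `LinkMetrics`, `compute_state_and_reward`, `ToyEnv`, and `run_episode` are hypothetical names invented for illustration, the reward formula is an arbitrary choice, and no ns3 simulation is involved. It does not reflect the actual RL4Net API.

```python
import random
from dataclasses import dataclass


@dataclass
class LinkMetrics:
    """Quality metrics of the kind a Metric Extractor reports (hypothetical fields)."""
    delay_ms: float
    loss_rate: float


def compute_state_and_reward(metrics):
    """Stand-in for the Computers block: map metrics to a DRL state and a scalar reward."""
    state = [metrics.delay_ms, metrics.loss_rate]
    # Assumed reward shape: penalize delay, and penalize loss more heavily.
    reward = -(metrics.delay_ms / 100.0) - 10.0 * metrics.loss_rate
    return state, reward


class ToyEnv:
    """Stand-in for ns3Env; a real environment would drive an ns3 simulation."""

    def __init__(self, seed=0):
        self._rng = random.Random(seed)
        self._steps = 0

    def _measure(self, action):
        # Pretend action 1 selects a faster but lossier path than action 0.
        base_delay, base_loss = ((40.0, 0.01), (20.0, 0.05))[action]
        return LinkMetrics(base_delay + self._rng.uniform(0, 5), base_loss)

    def reset(self):
        self._steps = 0
        state, _ = compute_state_and_reward(self._measure(0))
        return state

    def step(self, action):
        self._steps += 1
        state, reward = compute_state_and_reward(self._measure(action))
        done = self._steps >= 5  # fixed-length toy episode
        return state, reward, done


def run_episode(env, policy):
    """Run one episode and return the accumulated reward."""
    state = env.reset()
    total, done = 0.0, False
    while not done:
        action = policy(state)
        state, reward, done = env.step(action)
        total += reward
    return total


if __name__ == "__main__":
    # A trivial policy that always picks action 0.
    print(run_episode(ToyEnv(), policy=lambda state: 0))
```

In this sketch the policy is a plain function from state to action; in practice the agent would be a learned model built with a framework such as PyTorch.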

- ./ns3-addon: Files to be copied into the ns3 source folder to extend it. It includes:
  - ns3-src/action-executor: code for Action Executor
  - ns3-src/metric-extractor: code for Metric Extractor
  - rapidjson: an open source JSON parser and generator
  - ns3-scratch: several examples of experiments on RL4Net
- ./ns3-env: Files for the ns3Env block. It includes:
  - env-interface: code for envInterface
  - ns3-python-connector: code for connecting Python and ns3 C++
- ./RL4Net-lib: Library files developed by us
- ./TE-trainer: Files for training agents
- ./RLAgent: Files of agents

Since RL4Net is based on ns-3, you need to install ns-3 before using RL4Net.

An introduction to ns-3 and installation instructions can be found on the official ns-3 website.

As a recommendation, you can:

- Install dependencies

  You can install the ns-3 dependencies by following the official guide.

- Use git to install ns-3-dev

  See: downloading-ns-3-using-git

  You can also install a specific version of ns-3, such as ns-3.30, but we prefer ns-3-dev.

Another possible guide is the ns-3 wiki, see: wiki of installation

Now, assuming you have successfully installed ns-3-dev, you can start to install RL4Net.

- Install zmqbridge and protobuf

  ns3-env needs ZMQ and libprotoc. You can install them as follows:

      # to install protobuf-3.6 on ubuntu 16.04:
      sudo add-apt-repository ppa:maarten-fonville/protobuf
      sudo apt-get update
      apt-get install libzmq5 libzmq5-dev
      apt-get install libprotobuf-dev
      apt-get install protobuf-compiler

- Install addon files

  Now that the dependency libraries are installed, you can install the addon files with:

      python ns3_setup.py --wafdir=YOUR_WAFPATH

  YOUR_WAFPATH is the directory from the ns-3 installation in which you can execute `./waf build`, typically `ns-3-allinone/ns-3-dev`. Remember to use an absolute path. The default value of wafdir is `/ns-3-dev` (notice that it is a subdirectory of '/'). As an alternative, you can copy the folder into `/ns-3-dev`, then run `python ns3_setup.py`.
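
Because the setup step depends on an absolute path to the directory that contains `waf`, it can help to check the path before running the script. The helper below is a hypothetical sketch, not part of `ns3_setup.py`; the function name `resolve_wafdir` and its checks are assumptions made for illustration.

```python
import os
import tempfile


def resolve_wafdir(wafdir="/ns-3-dev"):
    """Return wafdir if it is an absolute path to a directory containing `waf`.

    Hypothetical helper for illustration; not part of ns3_setup.py.
    """
    if not os.path.isabs(wafdir):
        raise ValueError(f"wafdir must be an absolute path, got {wafdir!r}")
    if not os.path.isfile(os.path.join(wafdir, "waf")):
        raise FileNotFoundError(f"no 'waf' script found in {wafdir!r}")
    return wafdir


if __name__ == "__main__":
    # Demonstrate with a throwaway directory that mimics an ns-3 checkout.
    with tempfile.TemporaryDirectory() as fake_ns3:
        open(os.path.join(fake_ns3, "waf"), "w").close()
        print(resolve_wafdir(fake_ns3))
```

A relative path such as `ns-3-allinone/ns-3-dev` raises `ValueError` in this sketch, which mirrors the advice above to pass an absolute path.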

pyns3 is the Python module that connects Python and ns3. Use pip (or pip3) to install this module in your Python environment (for example, conda):

    pip install ns3-env/ns3-python-connector

wjwgym is a lab that helps build reinforcement learning algorithms. See: Github

Install it with pip in your Python environment:

    pip install RL4Net-lib/wjwgym-home

The lab needs numpy, torch and tensorboard. You can pre-install them, especially PyTorch, for which you can choose pip or conda.
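
To see which of these dependencies are still missing from the active Python environment, a small check along the following lines may be useful. It is a sketch only: `missing_modules` is a hypothetical helper, and it assumes the import names are `numpy`, `torch`, and `tensorboard`.

```python
import importlib.util


def missing_modules(names=("numpy", "torch", "tensorboard")):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules are available.")
```

The check only looks the modules up without importing them, so it stays fast even when a large package such as PyTorch is installed.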

TBD.

Jun Liu (liujun@bupt.edu.cn), Beijing University of Posts and Telecommunications, China