TODO

- Save model in ONNX

- Sampling code

- Inference code

The directory structure of the project looks like this:

├── bash <- Bash scripts

│ ├── setup_conda.sh <- Setup conda environment

│ └── schedule.sh <- Schedule execution of many runs

│

├── configs <- Hydra configuration files

│ ├── callbacks <- Callbacks configs

│ ├── datamodule <- Datamodule configs

│ ├── experiment <- Experiment configs

│ ├── hparams_search <- Hyperparameter search configs

│ ├── hydra <- Hydra related configs

│ ├── logger <- Logger configs

│ ├── model <- Model configs

│ ├── trainer <- Trainer configs

│ │

│ └── config.yaml <- Main project configuration file

│

├── data <- Project data

│

├── logs <- Logs generated by Hydra and PyTorch Lightning loggers

│

├── notebooks <- Jupyter notebooks. Naming convention is a number (for ordering),

│ the creator's initials, and a short `-` delimited description, e.g.

│ `1.0-jqp-initial-data-exploration.ipynb`.

│

├── tests <- Tests of any kind

│ ├── helpers <- A couple of testing utilities

│ ├── shell <- Shell/command based tests

│ └── unit <- Unit tests

│

├── src

│ ├── callbacks <- Lightning callbacks

│ ├── datamodules <- Lightning datamodules

│ ├── models <- Lightning models

│ ├── utils <- Utility scripts

│ │

│ └── train.py <- Training pipeline

│

├── run.py <- Run pipeline with chosen experiment configuration

│

├── .env.example <- Template of the file for storing private environment variables

├── .gitignore <- List of files/folders ignored by git

├── .pre-commit-config.yaml <- Configuration of automatic code formatting

├── setup.cfg <- Configurations of linters and pytest

├── Dockerfile <- File for building docker container

├── requirements.txt <- File for installing python dependencies

├── LICENSE

└── README.md

# clone project

git clone https://github.com/YourGithubName/your-repo-name

cd your-repo-name

# [OPTIONAL] create conda environment

bash bash/setup_conda.sh

# install requirements

pip install -r requirements.txtThis code contains example with DeepForest object detection .

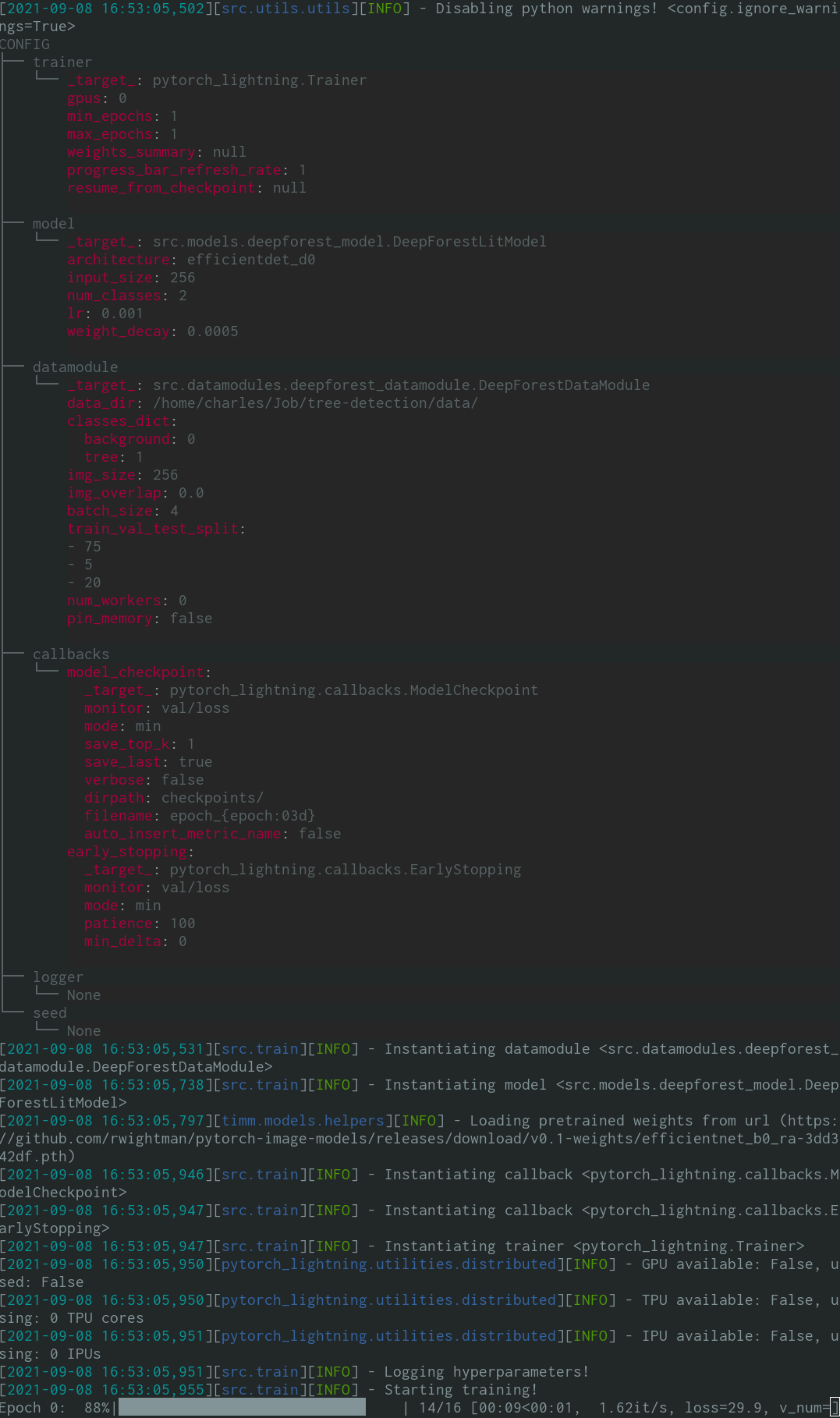

When running python run.py you should see something like this:

Train model with default configuration

# default train

python run.py

# train on CPU

python run.py trainer.gpus=0

# train on GPU

python run.py trainer.gpus=1

Train model with chosen experiment configuration from configs/experiment/

python run.py experiment=experiment_name

You can override any parameter from command line like this:

python run.py trainer.max_epochs=20 datamodule.batch_size=64

- First, you should probably get familiar with PyTorch Lightning

- Next, go through Hydra quick start guide and basic Hydra tutorial

By design, every run is initialized by run.py file. All PyTorch Lightning modules are dynamically instantiated from module paths specified in config. Example model config: TODO

Using this config we can instantiate the object with the following line:

model = hydra.utils.instantiate(config.model)

This allows you to easily iterate over new models!

Every time you create a new one, just specify its module path and parameters in the appropriate config file.

The whole pipeline managing the instantiation logic is placed in src/train.py.
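To make the mechanism concrete, here is a minimal standard-library sketch of what instantiating an object from a module path involves. It is a simplified stand-in, not Hydra's implementation: the helper name instantiate_from_config is hypothetical, and it assumes the config is a plain dict whose _target_ key holds a dotted import path, with the remaining keys passed as keyword arguments.

```python
import importlib


def instantiate_from_config(config: dict, **overrides):
    """Import the class named by ``_target_`` and call it with the other keys."""
    params = {key: value for key, value in config.items() if key != "_target_"}
    params.update(overrides)  # keyword arguments passed at call time win
    module_path, _, attr_name = config["_target_"].rpartition(".")
    target = getattr(importlib.import_module(module_path), attr_name)
    return target(**params)


# Hypothetical config; a real one would point at a class under src/models.
model_config = {"_target_": "fractions.Fraction", "numerator": 3, "denominator": 4}
model = instantiate_from_config(model_config)
```

Swapping in a different class then only means changing the _target_ string and its parameters, which is the property the template relies on.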

Location: configs/config.yaml

Main project config contains default training configuration.

It determines how config is composed when simply executing command python run.py.

It also specifies everything that shouldn't be managed by experiment configurations.

Show main project configuration

# specify here default training configuration

defaults:

- trainer: default.yaml

- model: deepforest_model.yaml

- datamodule: deepforest_datamodule.yaml

- callbacks: default.yaml # set this to null if you don't want to use callbacks

- logger: null # set logger here or use command line (e.g. `python run.py logger=wandb`)

- hydra: default.yaml

- experiment: null

- hparams_search: null

# path to original working directory

# hydra hijacks working directory by changing it to the current log directory,

# so it's useful to have this path as a special variable

# learn more here: https://hydra.cc/docs/next/tutorials/basic/running_your_app/working_directory

work_dir: ${hydra:runtime.cwd}

# path to folder with data

data_dir: ${work_dir}/data/

# pretty print config at the start of the run using Rich library

print_config: True

# disable python warnings if they annoy you

ignore_warnings: True

Location: configs/experiment

You should store all your experiment configurations in this folder.

Experiment configurations allow you to overwrite parameters from main project configuration.
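The override behaviour can be pictured as a recursive dictionary merge. The sketch below uses plain dicts and a hypothetical merge_configs helper rather than Hydra's own composition logic, and the keys and values are made up for illustration.

```python
import copy


def merge_configs(base: dict, override: dict) -> dict:
    """Return a copy of ``base`` with values from ``override`` layered on top."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_configs(merged[key], value)  # merge nested sections
        else:
            merged[key] = copy.deepcopy(value)  # scalars and new keys replace
    return merged


# Illustrative values only, not the template's real defaults.
main_config = {"trainer": {"gpus": 0, "max_epochs": 10}, "datamodule": {"batch_size": 32}}
experiment = {"trainer": {"max_epochs": 20}, "datamodule": {"batch_size": 64}}
config = merge_configs(main_config, experiment)
```

Keys the experiment does not mention (here trainer.gpus) keep their value from the main configuration.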

TODO

TODO Change the name mnist in the future.

- Write your PyTorch Lightning model (see mnist_model.py for example)

- Write your PyTorch Lightning datamodule (see mnist_datamodule.py for example)

- Write your experiment config, containing paths to your model and datamodule

- Run training with chosen experiment config:

python run.py experiment=experiment_name

Hydra creates new working directory for every executed run.

By default, logs have the following structure:

│

├── logs

│ ├── runs # Folder for logs generated from single runs

│ │ ├── 2021-02-15 # Date of executing run

│ │ │ ├── 16-50-49 # Hour of executing run

│ │ │ │ ├── .hydra # Hydra logs

│ │ │ │ ├── wandb # Weights&Biases logs

│ │ │ │ ├── checkpoints # Training checkpoints

│ │ │ │ └── ... # Any other thing saved during training

│ │ │ ├── ...

│ │ │ └── ...

│ │ ├── ...

│ │ └── ...

│ │

│ └── multiruns # Folder for logs generated from multiruns (sweeps)

│ ├── 2021-02-15_16-50-49 # Date and hour of executing sweep

│ │ ├── 0 # Job number

│ │ │ ├── .hydra # Hydra logs

│ │ │ ├── wandb # Weights&Biases logs

│ │ │ ├── checkpoints # Training checkpoints

│ │ │ └── ... # Any other thing saved during training

│ │ ├── 1

│ │ ├── 2

│ │ └── ...

│ ├── ...

│ └── ...

│

You can change this structure by modifying paths in hydra configuration.
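As a rough illustration of the layout above, the following sketch builds the same directory names from a start time. The function names are hypothetical and the paths are hard-coded here; in the template they come from the Hydra configuration.

```python
from datetime import datetime
from pathlib import PurePosixPath


def run_dir(start: datetime, root: str = "logs") -> PurePosixPath:
    """Directory for a single run: <root>/runs/<date>/<time>."""
    return PurePosixPath(root, "runs", start.strftime("%Y-%m-%d"), start.strftime("%H-%M-%S"))


def multirun_job_dir(start: datetime, job_number: int, root: str = "logs") -> PurePosixPath:
    """Directory for one job of a sweep: <root>/multiruns/<date>_<time>/<job>."""
    return PurePosixPath(root, "multiruns", start.strftime("%Y-%m-%d_%H-%M-%S"), str(job_number))


started = datetime(2021, 2, 15, 16, 50, 49)
single = run_dir(started)
job = multirun_job_dir(started, 0)
```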

PyTorch Lightning supports the most popular logging frameworks:

Weights&Biases · Neptune · Comet · MLFlow · Aim · Tensorboard

These tools help you keep track of hyperparameters and output metrics and allow you to compare and visualize results. To use one of them simply complete its configuration in configs/logger and run:

python run.py logger=logger_name

You can use many of them at once (see configs/logger/many_loggers.yaml for example).

You can also write your own logger.

Lightning provides a convenient method for logging custom metrics from inside a LightningModule.

Read the docs here or take a look at the DeepForest example.

Defining hyperparameter optimization is as easy as adding a new config file to configs/hparams_search.

Show example

defaults:

- override /hydra/sweeper: optuna

# choose metric which will be optimized by Optuna

optimized_metric: "val/acc"

hydra:

# here we define Optuna hyperparameter search

# it optimizes for value returned from function with @hydra.main decorator

# learn more here: https://hydra.cc/docs/next/plugins/optuna_sweeper

sweeper:

_target_: hydra_plugins.hydra_optuna_sweeper.optuna_sweeper.OptunaSweeper

storage: null

study_name: null

n_jobs: 1

# 'minimize' or 'maximize' the objective

direction: maximize

# number of experiments that will be executed

n_trials: 20

# choose Optuna hyperparameter sampler

# learn more here: https://optuna.readthedocs.io/en/stable/reference/samplers.html

sampler:

_target_: optuna.samplers.TPESampler

seed: 12345

consider_prior: true

prior_weight: 1.0

consider_magic_clip: true

consider_endpoints: false

n_startup_trials: 10

n_ei_candidates: 24

multivariate: false

warn_independent_sampling: true

# define range of hyperparameters

search_space:

datamodule.batch_size:

type: categorical

choices: [32, 64, 128]

model.lr:

type: float

low: 0.0001

high: 0.2

model.lin1_size:

type: categorical

choices: [32, 64, 128, 256, 512]

model.lin2_size:

type: categorical

choices: [32, 64, 128, 256, 512]

model.lin3_size:

type: categorical

choices: [32, 64, 128, 256, 512]

Next, you can execute it with: TODO change mnist

python run.py -m hparams_search=mnist_optuna

Using this approach doesn't require you to add any boilerplate into your pipeline, everything is defined in a single config file. You can use different optimization frameworks integrated with Hydra, like Optuna, Ax or Nevergrad.
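For intuition about what a sweeper does with such a search space, the following sketch draws trial configurations with plain random sampling. This is deliberately not Optuna's TPE sampler, just a simpler technique swapped in for illustration; sample_trial and the dict layout are hypothetical and only mirror the categorical and float entries from the config above.

```python
import random

# Mirrors part of the search space above as a plain dict (illustrative layout).
SEARCH_SPACE = {
    "datamodule.batch_size": {"type": "categorical", "choices": [32, 64, 128]},
    "model.lr": {"type": "float", "low": 0.0001, "high": 0.2},
}


def sample_trial(space: dict, rng: random.Random) -> dict:
    """Draw one value per hyperparameter, uniformly at random."""
    trial = {}
    for name, spec in space.items():
        if spec["type"] == "categorical":
            trial[name] = rng.choice(spec["choices"])
        elif spec["type"] == "float":
            trial[name] = rng.uniform(spec["low"], spec["high"])
        else:
            raise ValueError(f"unsupported parameter type: {spec['type']}")
    return trial


rng = random.Random(12345)  # fixed seed so the draws are repeatable
trials = [sample_trial(SEARCH_SPACE, rng) for _ in range(20)]
```

Each sampled dict corresponds to one set of command-line overrides that a sweep would pass to a single run.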

The following is an example of loading a model from a checkpoint and running predictions.

TODO

Template comes with example tests implemented with pytest library.

To execute them simply run:

# run all tests

pytest

# run tests from specific file

pytest tests/shell/test_basic_commands.py

# run all tests except the ones marked as slow

pytest -k "not slow"

I often find myself running into bugs that come out only in edge cases or on some specific hardware/environment. To speed up development, I constantly execute tests that run a couple of quick 1 epoch experiments, like overfitting to 10 batches, training on 25% of data, etc. These kinds of tests don't check for any specific output - they exist simply to verify that executing some commands doesn't end up throwing exceptions. You can find them implemented in the tests/shell folder.

You can easily modify the commands in the scripts for your use case. If even 1 epoch is too much for your model, then you can make it run for a couple of batches instead (by using the right trainer flags).
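A smoke test of this kind can be as small as the sketch below. It assumes nothing about the template's actual helpers: run_command is a hypothetical function, and the command shown simply starts the Python interpreter so that the example runs anywhere. In a real test it would be something like a one-epoch python run.py invocation.

```python
import subprocess
import sys


def run_command(command: list) -> int:
    """Run a command and return its exit code (0 means it did not crash)."""
    completed = subprocess.run(command, capture_output=True, text=True)
    return completed.returncode


def test_command_does_not_crash():
    # Placeholder command; swap in e.g. ["python", "run.py", "trainer.max_epochs=1"].
    assert run_command([sys.executable, "-c", "print('ok')"]) == 0
```

The test asserts only on the exit code, which matches the goal of catching exceptions rather than checking outputs.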

Template contains example callbacks enabling better Weights&Biases integration, which you can use as a reference for writing your own callbacks (see wandb_callbacks.py).

To support reproducibility:

- WatchModel

- UploadCodeAsArtifact

- UploadCheckpointsAsArtifact

To provide examples of logging custom visualisations with callbacks only:

- LogConfusionMatrix

- LogF1PrecRecHeatmap

- LogImagePredictions

To see the result of all the callbacks attached, take a look at this experiment dashboard.

Lightning supports multiple ways of doing distributed training.

The most common one is DDP, which spawns a separate process for each GPU and averages gradients between them. To learn about other approaches, read the Lightning docs.
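The averaging step can be illustrated with plain Python lists. This is only a toy sketch with a hypothetical average_gradients function; real DDP performs the equivalent reduction on tensors across processes.

```python
def average_gradients(per_process_grads):
    """Average gradients element-wise across processes."""
    num_processes = len(per_process_grads)
    return [sum(values) / num_processes for values in zip(*per_process_grads)]


# Two processes, each holding gradients for the same two parameters.
grads = average_gradients([[1.0, 2.0], [3.0, 4.0]])
```

After averaging, every process applies the same update, so the model replicas stay in sync.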

You can run DDP on mnist example with 4 GPUs like this:

python run.py trainer.gpus=4 +trainer.accelerator="ddp"

TODO (see template for info)

List of extra utilities available in the template:

- loading environment variables from .env file

- pretty printing config with Rich library

- disabling python warnings

- debug mode

You can easily remove any of those by modifying run.py and src/train.py.

Use Miniconda for GPU environments

Use miniconda for your python environments (it's usually unnecessary to install full anaconda environment, miniconda should be enough).

It makes it easier to install some dependencies, like cudatoolkit for GPU support.

Example installation:

wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh

bash Miniconda3-latest-Linux-x86_64.sh

Create environment using bash script provided in the template:

bash bash/setup_conda.sh

Use automatic code formatting

Use pre-commit hooks to standardize code formatting of your project and save mental energy.

Simply install pre-commit package with:

pip install pre-commit

Next, install hooks from .pre-commit-config.yaml:

pre-commit install

After that your code will be automatically reformatted on every new commit.

Currently the template contains configurations of black (python code formatting), isort (python import sorting), flake8 (python code analysis) and prettier (yaml formatting).

To reformat all files in the project use command:

pre-commit run -a

Set private environment variables in .env file

System specific variables (e.g. absolute paths to datasets) should not be under version control or it will result in conflict between different users. Your private keys also shouldn't be versioned since you don't want them to be leaked.

Template contains .env.example file, which serves as an example. Create a new file called .env (this name is excluded from version control in .gitignore).

You should use it for storing environment variables like this:

MY_VAR=/home/user/my_system_path

All variables from .env are loaded in run.py automatically.
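The loading step amounts to reading KEY=VALUE lines into the process environment. The sketch below is a simplified, standard-library stand-in: how run.py actually loads the file is not shown here, and load_env_file is a hypothetical helper that ignores quoting and other edge cases.

```python
import os
import tempfile


def load_env_file(path: str) -> dict:
    """Read KEY=VALUE lines from ``path`` into os.environ and return them."""
    loaded = {}
    with open(path, encoding="utf-8") as handle:
        for raw_line in handle:
            line = raw_line.strip()
            if not line or line.startswith("#") or "=" not in line:
                continue  # skip blanks, comments, and malformed lines
            key, _, value = line.partition("=")
            loaded[key.strip()] = value.strip()
    os.environ.update(loaded)
    return loaded


# Demonstrate with a throwaway file instead of a real .env.
with tempfile.TemporaryDirectory() as tmp_dir:
    env_path = os.path.join(tmp_dir, ".env")
    with open(env_path, "w", encoding="utf-8") as handle:
        handle.write("# private settings\nMY_VAR=/home/user/my_system_path\n")
    variables = load_env_file(env_path)
```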

Hydra allows you to reference any env variable in .yaml configs like this:

path_to_data: ${oc.env:MY_VAR}

Name metrics using '/' character

Depending on which logger you're using, it's often useful to define metric name with / character:

self.log("train/loss", loss)

This way loggers will treat your metrics as belonging to different sections, which helps to get them organised in UI.
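The effect of the naming scheme can be sketched without any logger. The function below is hypothetical and only shows the grouping idea; how a particular logger displays sections is up to that logger.

```python
from collections import defaultdict


def group_by_section(metrics: dict) -> dict:
    """Group metric values by the part of the name before the first '/'."""
    sections = defaultdict(dict)
    for name, value in metrics.items():
        section, _, short_name = name.partition("/")
        sections[section][short_name] = value
    return dict(sections)


grouped = group_by_section({"train/loss": 0.42, "train/acc": 0.81, "val/acc": 0.78})
```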

Use torchmetrics

Use official torchmetrics library to ensure proper calculation of metrics. This is especially important for multi-GPU training!

For example, instead of calculating accuracy by yourself, you should use the provided Accuracy class like this:

from pytorch_lightning import LightningModule
from torchmetrics.classification.accuracy import Accuracy

class LitModel(LightningModule):
    def __init__(self):
        super().__init__()
        # one metric instance per step type
        # (newer torchmetrics releases also expect a task argument here)
        self.train_acc = Accuracy()
        self.val_acc = Accuracy()

    def training_step(self, batch, batch_idx):
        ...
        acc = self.train_acc(predictions, targets)
        self.log("train/acc", acc)
        ...

    def validation_step(self, batch, batch_idx):
        ...
        acc = self.val_acc(predictions, targets)
        self.log("val/acc", acc)
        ...

Make sure to use a different metric instance for each step to ensure proper value reduction over all GPU processes.

Torchmetrics provides metrics for most use cases, like F1 score or confusion matrix. Read documentation for more.
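One reason to rely on a metric object rather than hand-rolled averaging: the mean of per-batch values is not the same as the metric computed over all samples when batches differ in size. The class below is a hypothetical, pure-Python illustration of accumulating state; it is not how torchmetrics is implemented.

```python
class RunningAccuracy:
    """Accumulate correct/total counts so the final value is exact."""

    def __init__(self):
        self.correct = 0
        self.total = 0

    def update(self, predictions, targets):
        self.correct += sum(p == t for p, t in zip(predictions, targets))
        self.total += len(targets)

    def compute(self):
        return self.correct / self.total


batches = [([1, 0], [1, 0]), ([1, 1, 1, 1], [1, 0, 0, 0])]  # (predictions, targets)

metric = RunningAccuracy()
per_batch = []
for predictions, targets in batches:
    metric.update(predictions, targets)
    per_batch.append(sum(p == t for p, t in zip(predictions, targets)) / len(targets))

naive = sum(per_batch) / len(per_batch)  # 0.625: biased by unequal batch sizes
exact = metric.compute()  # 0.5: 3 correct out of 6 samples
```

The same concern applies across GPU processes, where each process sees a different slice of the data.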

Follow PyTorch Lightning style guide

The style guide is available here.

- Be explicit in your init. Try to define all the relevant defaults so that the user doesn’t have to guess. Provide type hints. This way your module is reusable across projects!

class LitModel(LightningModule):
    def __init__(self, layer_size: int = 256, lr: float = 0.001):

- Preserve the recommended method order.

class LitModel(LightningModule):
    def __init__(): ...
    def forward(): ...
    def training_step(): ...
    def training_step_end(): ...
    def training_epoch_end(): ...
    def validation_step(): ...
    def validation_step_end(): ...
    def validation_epoch_end(): ...
    def test_step(): ...
    def test_step_end(): ...
    def test_epoch_end(): ...
    def configure_optimizers(): ...
    def any_extra_hook(): ...

Version control your data and models with DVC

Use DVC to version control big files, like your data or trained ML models.

To initialize the dvc repository:

dvc init

To start tracking a file or directory, use dvc add:

dvc add data/MNIST

DVC stores information about the added file (or a directory) in a special .dvc file named data/MNIST.dvc, a small text file with a human-readable format. This file can be easily versioned like source code with Git, as a placeholder for the original data:

git add data/MNIST.dvc data/.gitignore

git commit -m "Add raw data"

Support installing project as a package

It allows other people to easily use your modules in their own projects.

Change name of the src folder to your project name and add setup.py file:

from setuptools import find_packages, setup

setup(

name="src", # you should change "src" to your project name

version="0.0.0",

description="Describe Your Cool Project",

author="",

author_email="",

# replace with your own github project link

url="https://github.com/ashleve/lightning-hydra-template",

install_requires=["pytorch-lightning>=1.2.0", "hydra-core>=1.0.6"],

packages=find_packages(),

)

Now your project can be installed from local files:

pip install -e .

Or directly from git repository:

pip install git+https://github.com/YourGithubName/your-repo-name.git --upgrade

So any file can be easily imported into any other file like so:

from project_name.models.mnist_model import MNISTLitModel

from project_name.datamodules.mnist_datamodule import MNISTDataModule

Automatic activation of virtual environment and tab completion when entering folder

Create a new file called .autoenv (this name is excluded from version control in .gitignore).

You can use it to automatically execute shell commands when entering folder.

To set up this automation for bash, execute the following line:

echo "autoenv() { if [ -x .autoenv ]; then source .autoenv ; echo '.autoenv executed' ; fi } ; cd() { builtin cd \"\$@\" ; autoenv ; } ; autoenv" >> ~/.bashrc

Now you can add any commands to your .autoenv file, e.g. activation of virtual environment and hydra tab completion:

# activate conda environment

conda activate myenv

# activate hydra tab completion for bash

eval "$(python run.py -sc install=bash)"

# enable aliases for debugging

alias test='pytest'

alias debug1='python run.py debug=true'

alias debug2='python run.py trainer.gpus=1 trainer.max_epochs=1'

alias debug3='python run.py trainer.gpus=1 trainer.max_epochs=1 +trainer.limit_train_batches=0.1'

alias debug_wandb='python run.py trainer.gpus=1 trainer.max_epochs=1 logger=wandb logger.wandb.project=tests'

(these commands will be executed whenever you open a terminal in, or switch to, the folder containing the .autoenv file)

Lastly, add execution privileges to your .autoenv file:

chmod +x .autoenv

Explanation

The line above appends two commands to your .bashrc file:

- autoenv() { if [ -x .autoenv ]; then source .autoenv ; echo '.autoenv executed' ; fi } - this declares the autoenv() function, which executes the .autoenv file if it exists in the current working directory and has execution privileges

- cd() { builtin cd \"\$@\" ; autoenv ; } ; autoenv - this extends the behaviour of the cd command so that it executes the autoenv() function each time you change folder in the terminal or open a new terminal

Accessing datamodule attributes in model

The simplest way is to pass datamodule attribute directly to model on initialization:

datamodule = hydra.utils.instantiate(config.datamodule)

model = hydra.utils.instantiate(config.model, some_param=datamodule.some_param)

This is not a robust solution, since it assumes all your datamodules have the some_param attribute available (otherwise the run will crash).

A better solution is to add an OmegaConf resolver to your datamodule:

from omegaconf import OmegaConf

# you can place this snippet in your datamodule __init__()

resolver_name = "datamodule"

OmegaConf.register_new_resolver(

resolver_name,

lambda name: getattr(self, name),

use_cache=False

)

This way you can reference any datamodule attribute from your config like this:

# this will get 'datamodule.some_param' field

some_parameter: ${datamodule: some_param}

When later accessing this field, say in your Lightning model, it will be resolved automatically based on all registered resolvers. Remember not to access this field before the datamodule is initialized. You also need to set resolve to false in the print_config() call in run.py, or it will throw errors!

utils.print_config(config, resolve=False)

Inspirations

This template was inspired by: PyTorchLightning/deep-learning-project-template, drivendata/cookiecutter-data-science, tchaton/lightning-hydra-seed, Erlemar/pytorch_tempest, lucmos/nn-template.

Useful repositories

- pytorch/hydra-torch - resources for configuring PyTorch classes with Hydra,

- romesco/hydra-lightning - resources for configuring PyTorch Lightning classes with Hydra

- lucmos/nn-template - similar template

- PyTorchLightning/lightning-transformers - official Lightning Transformers repo built with Hydra

First you will need to install Nvidia Container Toolkit to enable GPU support.

To build the container from provided Dockerfile use:

docker build -t <project_name> .

To mount the project to the container use:

docker run -v $(pwd):/workspace/project --gpus all -it --rm <project_name>

Uncomment Apex in Dockerfile for mixed-precision support.

From the template.

Why you should use it: it allows you to rapidly iterate over new models/datasets and scale your projects from small single experiments to hyperparameter searches on computing clusters, without writing any boilerplate code. To my knowledge, it's one of the most convenient all-in-one technology stacks for Deep Learning research. It is a good starting point for reproducing papers, Kaggle competitions or small-team research projects. It's also a collection of best practices for efficient workflow and reproducibility.

Why you shouldn't use it: Lightning and Hydra are not yet mature, which means you might run into some bugs sooner or later. Also, even though Lightning is very flexible, it's not well suited for every possible deep learning task.

PyTorch Lightning is a lightweight PyTorch wrapper for high-performance AI research. It makes your code neatly organized and provides lots of useful features, like ability to run model on CPU, GPU, multi-GPU cluster and TPU.

Hydra is an open-source Python framework that simplifies the development of research and other complex applications. The key feature is the ability to dynamically create a hierarchical configuration by composition and override it through config files and the command line. It allows you to conveniently manage experiments and provides many useful plugins, like Optuna Sweeper for hyperparameter search, or Ray Launcher for running jobs on a cluster.