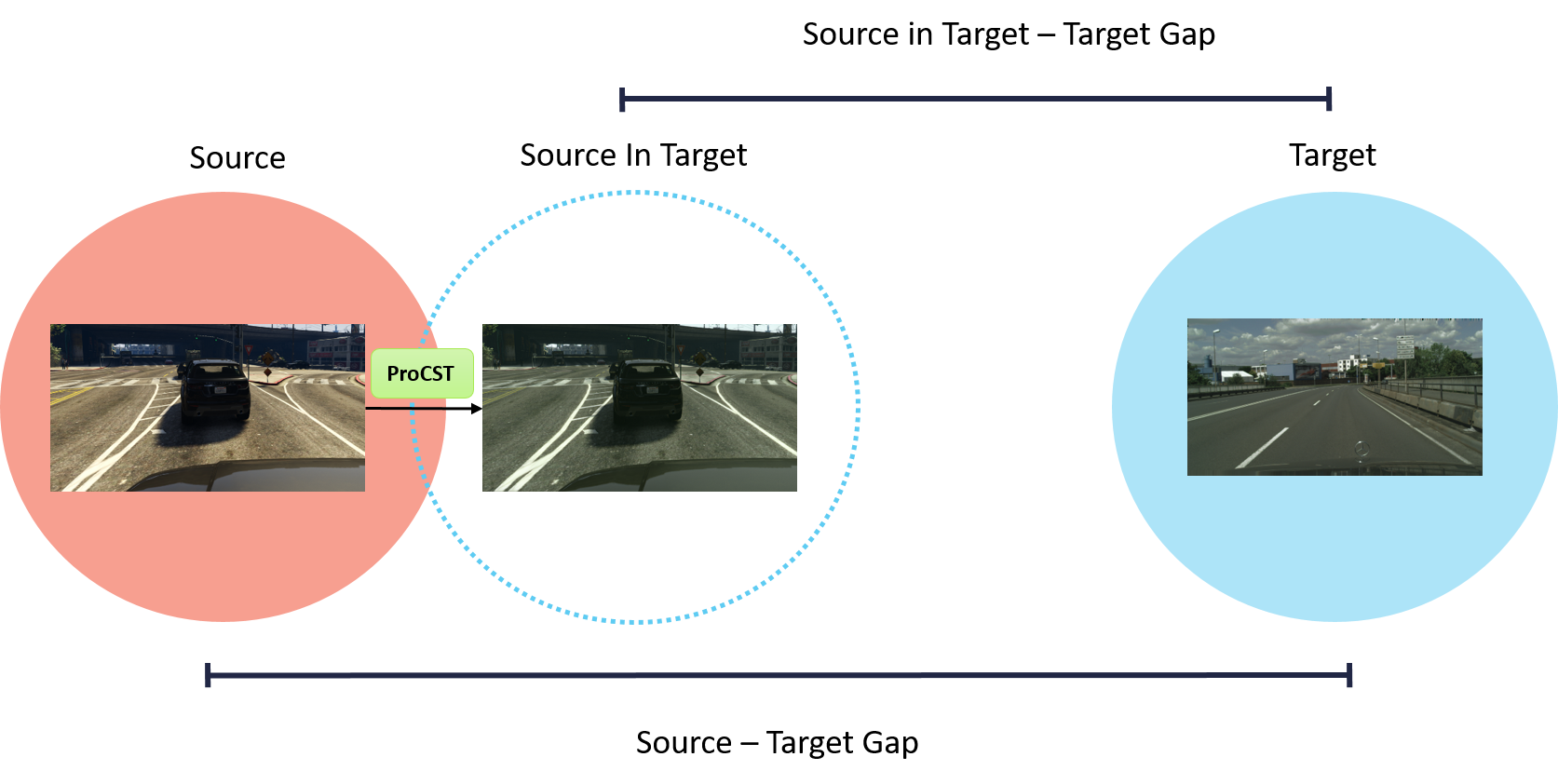

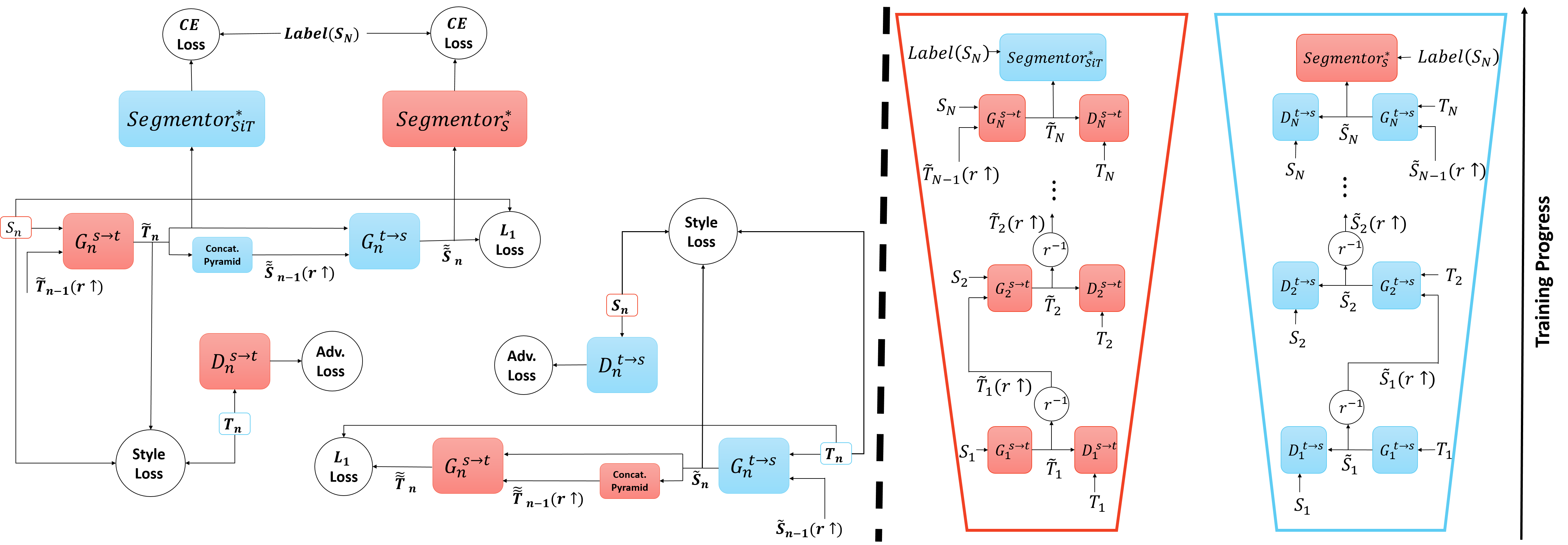

In this work, we propose a novel two-stage framework for improving unsupervised domain adaptation (UDA) techniques.

In the first stage, we progressively train a multi-scale neural network to perform an initial translation from the source data to the target data. We denote the transformed data as "Source in Target" (SiT).

Then, we use the generated SiT data as the input to any standard UDA approach. The SiT data has a smaller domain gap to the desired target domain than the original source data, and the applied UDA approach closes the gap further.

We demonstrate the improvement achieved by our framework with two state-of-the-art methods for semantic segmentation, DAFormer and ProDA, on two UDA tasks: GTA5 to Cityscapes and Synthia to Cityscapes.

Checkpoints of ProCST+DAFormer are provided at the bottom of this page.

NEW: we also report improved results for the new state-of-the-art UDA method HRDA, currently on the GTA5 to Cityscapes task only. The Synthia to Cityscapes experiment is still in progress.

Results summary using our runs:

GTA5 → Cityscapes

| Method | Source [mIoU] | SiT [mIoU] | Relative |
|---|---|---|---|
| ProDA | 57.5% | 58.6% | +1.1% |
| DAFormer | 67.9% | 69.4% | +1.5% |
| HRDA | 72.8% | 74.0% | +1.2% |

Synthia → Cityscapes

| Method | Source [mIoU] | SiT [mIoU] | Relative |
|---|---|---|---|
| ProDA | 55.5% | 56.1% | +0.6% |
| DAFormer | 60.5% | 61.6% | +1.1% |
| HRDA | WIP | WIP | WIP |
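The "Relative" column appears to be the difference between the SiT and Source mIoU columns, expressed in percentage points. This is an interpretation of the tables rather than a statement from the authors; the short sketch below simply recomputes that difference from the reported numbers (the `RESULTS` dictionary and `miou_gain` helper are illustrative names, not part of ProCST).

```python
# Reported mIoU values (in %), copied from the tables above as
# (trained on original source, trained on SiT). HRDA on Synthia is omitted (WIP).
RESULTS = {
    "GTA5 -> Cityscapes": {
        "ProDA": (57.5, 58.6),
        "DAFormer": (67.9, 69.4),
        "HRDA": (72.8, 74.0),
    },
    "Synthia -> Cityscapes": {
        "ProDA": (55.5, 56.1),
        "DAFormer": (60.5, 61.6),
    },
}


def miou_gain(source_miou: float, sit_miou: float) -> float:
    """Absolute gain in mIoU percentage points, rounded to one decimal."""
    return round(sit_miou - source_miou, 1)


for task, methods in RESULTS.items():
    for method, (source_miou, sit_miou) in methods.items():
        gain = miou_gain(source_miou, sit_miou)
        print(f"{task:22s} {method:9s} +{gain:.1f}")
```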

Translation GIF. Top row: GTA5 → Cityscapes translation, bottom row: Synthia → Cityscapes translation


Please make sure that Python >= 3.8 is installed. First, set up a new virtual environment:

python -m venv ~/venv/procst

source ~/venv/procst/bin/activate

Clone the ProCST repository and install requirements:

git clone https://github.com/shahaf1313/ProCST

cd ProCST

pip install -r requirements.txt -f https://download.pytorch.org/whl/torch_stable.html

Download the pretrained semantic segmentation networks trained on the source domain. Please create a ./pretrained folder and save the pretrained networks there.

Cityscapes: Please download leftImg8bit_trainvaltest.zip, leftImg8bit_trainextra.zip and gt_trainvaltest.zip from here and extract them to data/cityscapes. Be sure to delete the corrupted image 'troisdorf_000000_000073_leftImg8bit.png' from the dataset, as the link suggests.

GTA: Please download all image and label packages from here and extract them to data/gta.

Synthia: Please download SYNTHIA-RAND-CITYSCAPES from here and extract it to data/synthia.
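The Cityscapes step above asks for one corrupted image to be deleted. A minimal sketch of doing this from Python is shown below; it searches the whole extracted folder for the file name given above, so it does not assume which split the image lives in. The function name `remove_corrupted_image` is illustrative and not part of the ProCST code base.

```python
from pathlib import Path

# File name taken from the instructions above.
CORRUPTED_NAME = "troisdorf_000000_000073_leftImg8bit.png"


def remove_corrupted_image(cityscapes_root: str) -> list:
    """Delete every copy of the corrupted image found under cityscapes_root.

    Returns the list of deleted paths (empty if nothing was found).
    """
    root = Path(cityscapes_root)
    if not root.is_dir():
        return []
    # Collect matches first so the directory is not modified while it is scanned.
    matches = sorted(root.rglob(CORRUPTED_NAME))
    for path in matches:
        path.unlink()
    return matches


if __name__ == "__main__":
    for removed in remove_corrupted_image("data/cityscapes"):
        print(f"removed {removed}")
```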

The final folder structure should look like this:

ProCST
├── ...
├── data
│   ├── cityscapes
│   │   ├── leftImg8bit
│   │   │   ├── train
│   │   │   ├── val
│   │   ├── gtFine
│   │   │   ├── train
│   │   │   ├── val
│   ├── gta
│   │   ├── images
│   │   ├── labels
│   ├── synthia
│   │   ├── RGB
│   │   ├── GT
│   │   │   ├── LABELS
├── pretrained
│   ├── pretrained_semseg_on_gta5.pth
│   ├── pretrained_semseg_on_synthia.pth
├── ...
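Before training, it can be useful to confirm that the layout above is in place. The following is a small sketch that checks only the entries listed in the tree; the `EXPECTED_PATHS` list and the `missing_paths` helper are illustrative names, not part of the ProCST repository.

```python
from pathlib import Path

# Entries copied from the folder tree above, relative to the ProCST repository root.
EXPECTED_PATHS = [
    "data/cityscapes/leftImg8bit/train",
    "data/cityscapes/leftImg8bit/val",
    "data/cityscapes/gtFine/train",
    "data/cityscapes/gtFine/val",
    "data/gta/images",
    "data/gta/labels",
    "data/synthia/RGB",
    "data/synthia/GT/LABELS",
    "pretrained/pretrained_semseg_on_gta5.pth",
    "pretrained/pretrained_semseg_on_synthia.pth",
]


def missing_paths(repo_root: str = ".") -> list:
    """Return the expected entries that do not exist under repo_root."""
    root = Path(repo_root)
    return [entry for entry in EXPECTED_PATHS if not (root / entry).exists()]


if __name__ == "__main__":
    missing = missing_paths(".")
    if missing:
        print("Missing entries:")
        for entry in missing:
            print(f"  {entry}")
    else:
        print("Folder structure looks complete.")
```

Run it from the repository root; if only one source dataset is used (for example GTA5 only), the entries for the other dataset will be reported as missing and can be ignored.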

Model is trained using one NVIDIA A6000 GPU with 48GB of memory.

The --gpus flag is mandatory. On the command line, replace gpu_id with the GPU number. For example, to train on GPU number 3, enter --gpus 3.

python ./train_procst.py --batch_size_list 32 16 3 --gpus gpu_id

python ./train_procst.py --source=synthia --src_data_dir=data/synthia --batch_size_list 32 16 3 --gpus gpu_id

After training a full ProCST model, we are ready to boost the performance of UDA methods. For your convenience, we attach ProCST pretrained models:

First, we will generate the SiT dataset using the pretrained models. Please enter the path to the pretrained model in the --trained_procst_path flag, and choose the output images folder using the --sit_output_path flag.

python ./create_sit_dataset.py --batch_size=1 --trained_procst_path=path/to/pretrained_sit_model --sit_output_path=path/to/save/output_images --gpus gpu_id

python ./create_sit_dataset.py --source=synthia --src_data_dir=data/synthia --batch_size=1 --trained_procst_path=path/to/pretrained_sit_model --sit_output_path=path/to/save/output_images --gpus gpu_id

After successfully generating the SiT dataset, we can now replace the original source images with the resulting SiT images. Segmentation maps remain unchanged due to the content preservation property of ProCST.
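One way to perform the image replacement without touching the original dataset is to build a new dataset root that links to the SiT images and to the unchanged label maps. The sketch below is only an illustration under two assumptions that are not confirmed by the text above: that the SiT output folder is flat and keeps the original image file names, and that the downstream UDA method expects `images` and `labels` subfolders (as in data/gta). The helper name `build_sit_root` and all paths are hypothetical.

```python
import os
from pathlib import Path


def build_sit_root(sit_images_dir: str, labels_dir: str, out_root: str) -> list:
    """Create out_root/images and out_root/labels as symlinks.

    images points to the SiT images, labels to the original segmentation maps.
    Returns the file names present in only one of the two folders, so an empty
    list means every SiT image has a label and vice versa (assuming matching
    file names, which is an assumption of this sketch).
    """
    sit_images = Path(sit_images_dir).resolve()
    labels = Path(labels_dir).resolve()
    out = Path(out_root)
    out.mkdir(parents=True, exist_ok=True)

    image_names = {p.name for p in sit_images.iterdir() if p.is_file()}
    label_names = {p.name for p in labels.iterdir() if p.is_file()}

    for link_name, target in (("images", sit_images), ("labels", labels)):
        link = out / link_name
        # Skip links that already exist (including dangling ones).
        if not (link.exists() or link.is_symlink()):
            os.symlink(target, link, target_is_directory=True)

    return sorted(image_names ^ label_names)


if __name__ == "__main__":
    # Hypothetical paths; adjust to the folders used with --sit_output_path.
    sit_dir, label_dir = "path/to/save/output_images", "data/gta/labels"
    if Path(sit_dir).is_dir() and Path(label_dir).is_dir():
        unmatched = build_sit_root(sit_dir, label_dir, "data/gta_sit")
        print(f"{len(unmatched)} unmatched file names")
```

Symlinks keep the label maps in one place, which fits the point above that the segmentation maps are reused unchanged; copying the files would work equally well.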

We tested our SiT datasets on two state-of-the-art UDA methods: DAFormer and ProDA. Both methods improved when trained on our SiT dataset; detailed results are given in the paper.

We provide checkpoints of the combined ProCST+DAFormer, trained on GTA5→Cityscapes and Synthia→Cityscapes. The results can be evaluated on the Cityscapes validation set.

- GTA5→Cityscapes checkpoint: 69.5% mIoU

- Synthia→Cityscapes checkpoint: 62.4% mIoU
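For readers unfamiliar with the metric, mIoU (mean intersection over union) averages the per-class IoU, where the IoU of a class is TP / (TP + FP + FN) over all pixels. The following is a minimal pure-Python sketch of this standard definition computed from a confusion matrix; it is not the evaluation code of the DAFormer repository, which may differ in details such as how ignored labels are handled.

```python
def mean_iou(confusion):
    """Mean IoU from a square confusion matrix.

    confusion[i][j] is the number of pixels whose ground-truth class is i
    and whose predicted class is j.
    """
    num_classes = len(confusion)
    ious = []
    for c in range(num_classes):
        true_pos = confusion[c][c]
        false_neg = sum(confusion[c]) - true_pos
        false_pos = sum(confusion[r][c] for r in range(num_classes)) - true_pos
        denom = true_pos + false_pos + false_neg
        if denom > 0:  # skip classes absent from both ground truth and prediction
            ious.append(true_pos / denom)
    if not ious:
        raise ValueError("confusion matrix contains no pixels")
    return sum(ious) / len(ious)


# Toy two-class example: IoU is 8/11 for class 0 and 9/12 for class 1.
toy_confusion = [
    [8, 2],
    [1, 9],
]
print(f"mIoU = {100 * mean_iou(toy_confusion):.1f}%")
```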

To evaluate the above checkpoints, please refer to the original DAFormer repository. After setting up the required environment, pass the given checkpoints as input to the test shell script:

cd path/to/DAFormer/directory

cd work_dirs # if this directory does not exist, please create it.

# download checkpoint .zip file and unzip it in this directory.

chmod +w path/to/checkpoint_directory

cd path/to/DAFormer/directory

sh test.sh path/to/checkpoint_directory

This project builds on the following open-source projects. We thank their authors for making the source code publicly available.