Big Data Analytic Toolkit (BDTK) is a set of acceleration libraries that aim to optimize big data analytic frameworks. It targets several groups of users:

- Data engineers who want Intel architecture-based optimizations

- End users of big data analytic frameworks who are looking for performance acceleration

- Database developers who are seeking reusable building blocks

- Data scientists who are looking for heterogeneous execution

With this library, the query performance of frontend SQL engines such as Prestodb and Spark can be significantly improved.

Users can reuse the implemented operators and functions to build a full-featured SQL engine. Currently, this library offers a highly optimized compiler that JIT-compiles functions for execution.

Building blocks that use compression codecs (based on IAA and QAT) can be used directly in Hadoop/Spark for compression acceleration.

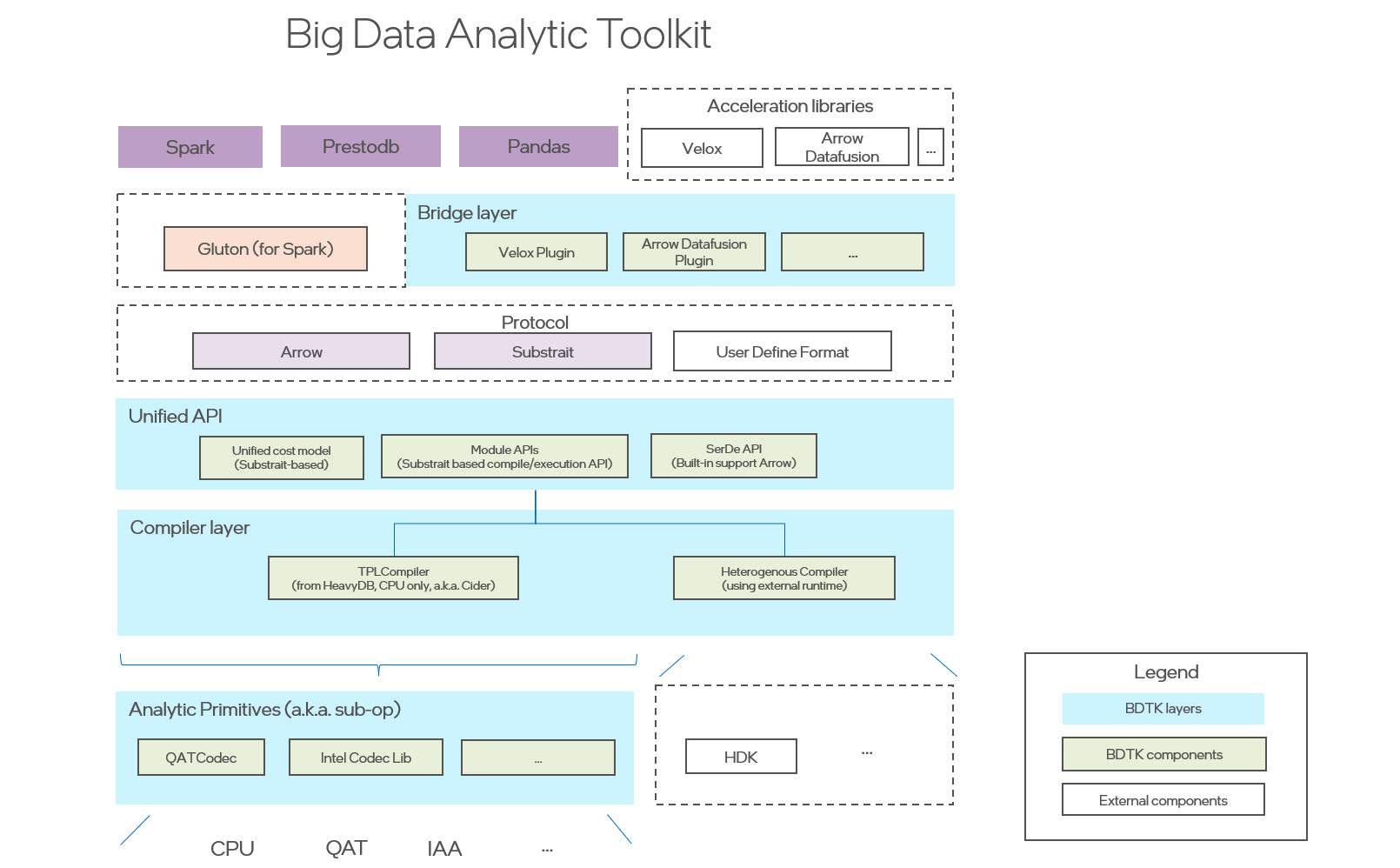

The following diagram shows the design architecture. Currently, it offers a few building blocks, including a lightweight LLVM-based SQL compiler on top of the Arrow data format; ICL, a compression codec leveraging the state-of-the-art Intel IAA accelerator; and QATCodec, a compression codec wrapper based on the Intel QAT accelerator.

- A modularized and general-purpose Just-In-Time (JIT) compiler for data analytic query engines. It employs Substrait as a protocol, which allows it to support multiple front-end engines. Currently it provides an LLVM-based implementation based on HeavyDB.

- A Velox-plugin that acts as a bridge to enable Big Data Analytic Toolkit on Velox. It introduces a hybrid execution mode that combines compilation with the vectorization that already exists in Velox. It works as a plugin to Velox without changing Velox code.

- Intel Codec Library for BigData, which provides a compression and decompression library for Apache Hadoop/Spark that makes use of acceleration hardware for compression/decompression.

Currently supported features are listed on the Project Page. Features newly supported in release 0.9 are listed on the release page.

git clone --recursive https://github.com/intel/BDTK.git

cd BDTK

# if you are updating an existing checkout

git submodule sync --recursive

git submodule update --init --recursive

We provide a Dockerfile to help developers set up and install BDTK dependencies.

- Build an image from a Dockerfile

$ cd ${path_to_source_of_bdtk}/ci/docker

$ docker build -t ${image_name} .

- Start a docker container for development

$ docker run -d --name ${container_name} --privileged=true -v ${path_to_source_of_bdtk}:/workspace/bdtk ${image_name} /usr/sbin/init

Once you have set up the Docker build environment for BDTK and obtained the source, you can enter the BDTK container and build as follows:

Run `make` in the root directory to compile the sources. For development, use `make debug` to build a non-optimized debug version, or `make release` to build an optimized version. Use `make test-debug` or `make test-release` to run tests.
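The make targets described above can be wrapped in a small shell helper. This is only a minimal sketch and not part of BDTK: the function name `bdtk_make_cmd` is hypothetical, and the assumption that a build should be followed by the tests for the same build type is ours. Only the target names `debug`, `release`, `test-debug`, and `test-release` come from the text above.

```shell
#!/bin/sh
# Hypothetical helper (not part of BDTK): print the make invocation for a
# given build type, followed by the matching test target.
bdtk_make_cmd() {
  build_type="${1:-release}"   # default to the optimized build
  case "$build_type" in
    debug|release)
      # Build first, then run the tests for the same build type.
      echo "make $build_type && make test-$build_type"
      ;;
    *)
      echo "unknown build type: $build_type (expected debug or release)" >&2
      return 1
      ;;
  esac
}
```

From the root of the source tree, `eval "$(bdtk_make_cmd debug)"` would run the debug build and then its tests. Printing the command instead of running it directly makes it easy to inspect before executing it inside the container.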

To use it with Prestodb, the Intel version of Prestodb is required, together with the Intel version of Velox. Detailed steps are available in the installation guide.

For the coming release, the following work items are prioritized:

- Better test coverage for the entire library

- Better robustness, and more implemented features enabled in Prestodb as the pilot SQL engine, by improving the offloading framework

- Better extensibility at multiple levels (incl. relational algebra operators, expression functions, data formats), by adopting a state-of-the-art multi-level compiler design

- Complete Arrow format migration

- Next-gen codegen framework

- Support for large-volume data processing

- Advanced features development

Big Data Analytic Toolkit's Code of Conduct can be found here.

You can find all the Big Data Analytic Toolkit documents on the project web page.

Big Data Analytic Toolkit is licensed under the Apache 2.0 License. A copy of the license can be found here.