Hi, this is the code for our KDD 2022 paper: Bilateral Dependency Optimization: Defending Against Model-inversion Attacks.

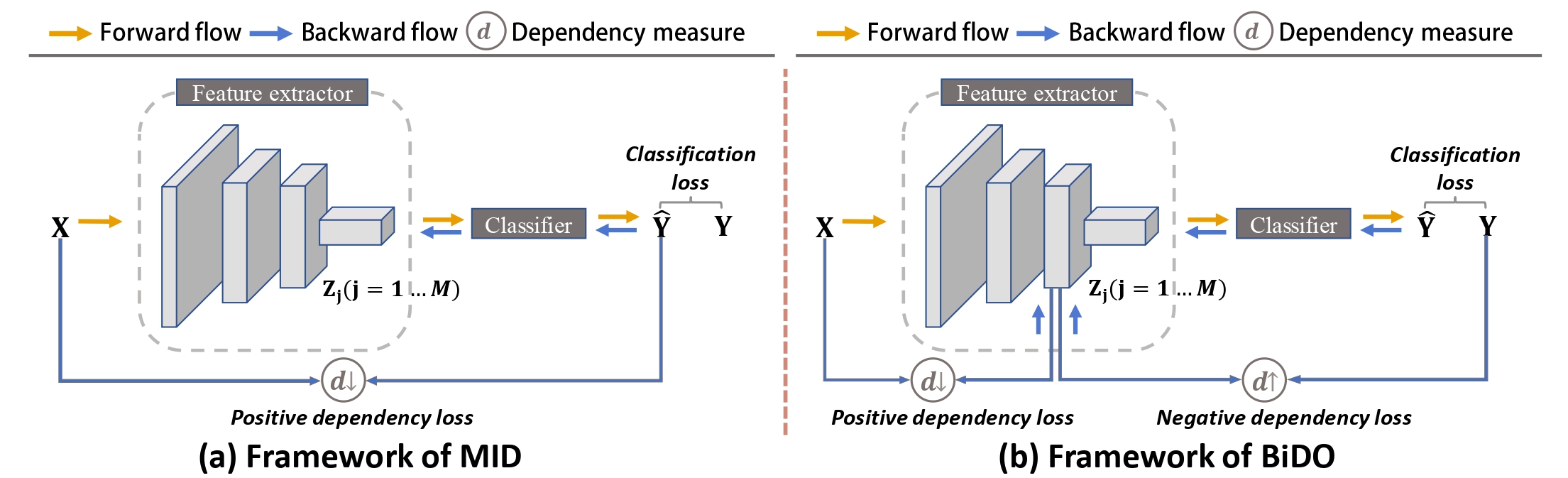

Overview of MID framework vs. bilateral dependency optimization (BiDO) framework. BiDO forces DNNs to learn robust latent representations by minimizing


This code has been tested on Ubuntu 16.04/18.04 with Python 3.7, PyTorch 1.7, and CUDA 10.2/11.0.

Download relevant datasets: CelebA, MNIST.

- CelebA

- MNIST

python prepare_data.py

The directory of datasets is organized as follows:

    ./attack_dataset
    ├── MNIST
    │   ├── *.txt
    │   └── Img
    │       └── *.png
    └── CelebA
        ├── *.txt
        └── Img
            └── *.png
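Before training or attacking, it can help to confirm that the layout above is in place. The following is a minimal sketch, not part of the repository: the function name `check_dataset_layout` is hypothetical, and the root path `./attack_dataset` is taken from the tree above.

```python
from pathlib import Path


def check_dataset_layout(root, names=("MNIST", "CelebA")):
    """Return a list of problems found; an empty list means the layout looks complete."""
    problems = []
    root = Path(root)
    for name in names:
        base = root / name
        if not base.is_dir():
            problems.append(f"missing directory: {base}")
            continue
        # Each dataset folder is expected to hold at least one *.txt file.
        if not any(base.glob("*.txt")):
            problems.append(f"no *.txt files in {base}")
        # Images are expected under <dataset>/Img as *.png files.
        img = base / "Img"
        if not img.is_dir():
            problems.append(f"missing directory: {img}")
        elif not any(img.glob("*.png")):
            problems.append(f"no *.png files in {img}")
    return problems


if __name__ == "__main__":
    # "./attack_dataset" is the root shown in the tree above; adjust if yours differs.
    for line in check_dataset_layout("./attack_dataset") or ["layout looks complete"]:
        print(line)
```

The check only looks at directory names and file extensions; it does not validate the contents of the files.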

You can also skip to the next section for defending against MI attacks with well-trained defense models.

- For GMI and KED-MI

  For KED-MI, if you trained a defense model yourself, you must also train an attack model (generative model) specific to that defense model.

      # dataset: celeba, mnist, cifar
      # measure: COCO, HSIC
      # balancing hyper-parameters: tune them in train_HSIC.py
      python train_HSIC.py --measure=HSIC --dataset=celeba
      # put your hyper-parameters in k+1_gan_HSIC.py first
      python k+1_gan_HSIC.py --dataset=celeba --defense=HSIC

- For VMI

  Please refer to the section Defending against MI attacks - VMI - Train with BiDO.
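The `--measure=HSIC` option refers to the Hilbert-Schmidt Independence Criterion, a kernel-based dependency measure. As a rough illustration of what such a measure computes, the sketch below implements the standard biased empirical estimator, tr(KHLH)/(n-1)^2, with Gaussian kernels in pure Python. It is not the code in `train_HSIC.py`; the function names and the bandwidth defaults are assumptions made for this example.

```python
import math


def gaussian_gram(xs, sigma):
    """Gram matrix with K[i][j] = exp(-||x_i - x_j||^2 / (2 * sigma^2))."""
    n = len(xs)
    return [
        [
            math.exp(-sum((a - b) ** 2 for a, b in zip(xs[i], xs[j])) / (2.0 * sigma ** 2))
            for j in range(n)
        ]
        for i in range(n)
    ]


def center(k):
    """Double-center a Gram matrix, i.e. compute H K H with H = I - (1/n) 1 1^T."""
    n = len(k)
    row = [sum(r) / n for r in k]
    col = [sum(k[i][j] for i in range(n)) / n for j in range(n)]
    total = sum(row) / n
    return [[k[i][j] - row[i] - col[j] + total for j in range(n)] for i in range(n)]


def hsic(xs, ys, sigma_x=1.0, sigma_y=1.0):
    """Biased empirical HSIC between paired samples xs and ys (lists of vectors)."""
    n = len(xs)
    kc = center(gaussian_gram(xs, sigma_x))
    lc = center(gaussian_gram(ys, sigma_y))
    # tr(Kc Lc) equals the sum over i, j of Kc[i][j] * Lc[j][i].
    trace = sum(kc[i][j] * lc[j][i] for i in range(n) for j in range(n))
    return trace / (n - 1) ** 2


if __name__ == "__main__":
    xs = [[0.0], [1.0], [2.0], [3.0]]
    constant = [[5.0]] * 4
    print(hsic(xs, xs))        # positive: xs depends on itself
    print(hsic(xs, constant))  # zero up to rounding: a constant carries no information
```

A larger value indicates stronger statistical dependence between the two sets of samples; the estimate is sensitive to the kernel bandwidths, which in practice are tuned per dataset.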

Here, we only provide the weight files of the well-trained defense models that achieve the best trade-off between model robustness and utility; these are the models highlighted in the experimental results.

- GMI

  - Weights file (defense model / eval model / GAN):
    - Place pretrained VGG16 in `BiDO/target_model/`
    - Place defense model in `BiDO/target_model/celeba/HSIC/`
    - Place evaluation classifier in `GMI/eval_model/`
    - Place GAN in `GMI/result/models_celeba_gan/`

  - Launch attack

        # balancing hyper-parameters: (0.05, 0.5)
        python attack.py --dataset=celeba --defense=HSIC

  - Calculate FID

        # sample real images from training set
        cd attack_res/celeba/pytorch-fid && python private_domain.py
        # calculate FID between fake and real images
        python fid_score.py ../celeba/trainset/ ../celeba/HSIC/all/

- KED-MI

  - Weights file (defense model / eval model / GAN):
    - Place defense model in `BiDO/target_model/mnist/COCO/`
    - Place evaluation classifier in `DMI/eval_model/`
    - Place improved GAN for celeba in `DMI/improvedGAN/celeba/HSIC/`
    - Place improved GAN for mnist in `DMI/improvedGAN/mnist/COCO/`

  - Launch attack

        # balancing hyper-parameters: (0.05, 0.5)
        python recovery.py --dataset=celeba --defense=HSIC
        # balancing hyper-parameters: (1, 50)
        python recovery.py --dataset=mnist --defense=COCO

  - Calculate FID

        # celeba
        cd attack_res/celeba/pytorch-fid && python private_domain.py
        python fid_score.py ../celeba/trainset/ ../celeba/HSIC/all/ --dataset=celeba
        # mnist
        cd attack_res/mnist/pytorch-fid && python private_domain.py
        python fid_score.py ../mnist/trainset/ ../mnist/COCO/all/ --dataset=mnist

- VMI

  To run this code, you need ~38 GB of memory for data loading. Attacking 20 identities takes ~20 hours on a TITAN V GPU (12 GB).

  - Data (CelebA)

        # create a link to CelebA
        cd VMI/data && ln -s ../../attack_data/CelebA/Img img_align_celeba
        python celeba.py

  - Weights file (defense model / eval model / GAN):
    - Place defense model in `VMI/clf_results/celeba/hsic_0.1&2/`
    - Place ir_se50.pth in `VMI/3rd_party/InsightFace_Pytorch/work_space/save/`; place evaluation classifier in `VMI/pretrained/eval_clf/celeba/`
    - Place StyleGAN in `VMI/pretrained/stylegan/neurips2021-celeba-stylegan/`

  - Launch attack

        # balancing hyper-parameters: (0.1, 2)
        cd VMI
        # x.sh (1st) path/to/attack_results (2nd) config_file (3rd) batch_size
        ./run_scripts/neurips2021-celeba-stylegan-flow.sh 'hsic_0.1&2' 'hsic_0.1&2.yml' 32

  - Train with BiDO

        # quote the output path: an unquoted & would send the command to the background
        python classify_mnist.py --epochs=100 --dataset=celeba --output_dir='./clf_results/celeba/hsic_0.1&2' --model=ResNetClsH --measure=hsic --a1=0.1 --a2=2
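Directory names such as `hsic_0.1&2` contain `&`, which a POSIX shell treats as a control operator unless the argument is quoted. The sketch below shows one way to build the VMI training command safely from Python. The helper name `build_train_command` is hypothetical, and the `hsic_{a1}&{a2}` naming pattern is inferred from the example path above rather than confirmed by the repository; the flags themselves are the ones shown in the training command.

```python
import shlex


def build_train_command(a1, a2, dataset="celeba", epochs=100):
    """Return (argument list, shell-safe string) for the BiDO training command."""
    # Assumed naming pattern, inferred from the example directory 'hsic_0.1&2'.
    run_name = f"hsic_{a1}&{a2}"
    args = [
        "python",
        "classify_mnist.py",
        f"--epochs={epochs}",
        f"--dataset={dataset}",
        f"--output_dir=./clf_results/{dataset}/{run_name}",
        "--model=ResNetClsH",
        "--measure=hsic",
        f"--a1={a1}",
        f"--a2={a2}",
    ]
    # shlex.quote wraps any argument containing shell metacharacters in single quotes.
    shell_line = " ".join(shlex.quote(a) for a in args)
    return args, shell_line


if __name__ == "__main__":
    args, shell_line = build_train_command(0.1, 2)
    print(shell_line)
```

Passing the argument list to `subprocess.run(args, cwd="VMI")` avoids the shell entirely, so no quoting is needed in that case; the quoted string is only for pasting into a terminal.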

If you find this code helpful in your research, please consider citing:

    @inproceedings{peng2022BiDO,
      title={Bilateral Dependency Optimization: Defending Against Model-inversion Attacks},
      author={Peng, Xiong and Liu, Feng and Zhang, Jingfeng and Lan, Long and Ye, Junjie and Liu, Tongliang and Han, Bo},
      booktitle={KDD},
      year={2022}
    }

Some of our implementations rely on other repos. We want to thank the authors (MID, GMI, KED-MI, VMI) for making their code publicly available. 😄