RLDS stands for Reinforcement Learning Datasets, and it is an ecosystem of tools to store, retrieve and manipulate episodic data in the context of Sequential Decision Making, including Reinforcement Learning (RL), Learning from Demonstrations, Offline RL and Imitation Learning.

This repository includes a library for manipulating RLDS-compliant datasets. For other parts of the pipeline, please refer to:

- EnvLogger to create synthetic datasets

- RLDS Creator to create datasets where a human interacts with an environment.

- TFDS for existing RL datasets.

See how to use RLDS in this tutorial.

You can find more examples in the following colabs:

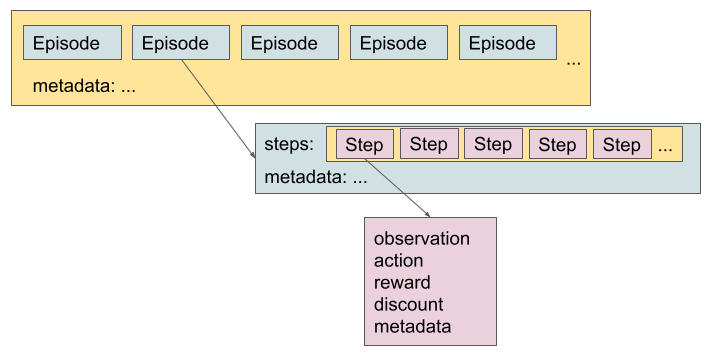

The dataset is retrieved as a `tf.data.Dataset` of Episodes, where each episode contains a `tf.data.Dataset` of Steps.

- Episode: dictionary that contains a `tf.data.Dataset` of Steps, and metadata.

- Step: dictionary that contains:

  - Mandatory fields:
    - `is_first`: whether this is the first step of an episode, which contains the initial state.
    - `is_last`: whether this is the last step of an episode, which contains the last observation. When true, `action`, `reward`, `discount`, and other custom fields subsequent to the observation are considered invalid.

  - Optional fields:
    - `observation`: current observation.
    - `action`: action taken in the current observation.
    - `reward`: reward obtained after applying the action to the current observation.
    - `is_terminal`: whether this is a terminal step.
    - `discount`: discount factor at this step.
    - extra metadata.

When `is_terminal = True`, the `observation` corresponds to a final state, so `reward`, `discount` and `action` are meaningless. Depending on the environment, the final `observation` may also be meaningless.

If an episode ends in a step where `is_terminal = False`, it means that this episode has been truncated. In this case, depending on the environment, the action, reward and discount might be empty as well.

Note: Although some fields of the steps are optional, all the steps in the same dataset are required to have the same fields.
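To make these rules concrete, here is a minimal pure-Python sketch that models an episode as a dictionary holding a list of step dictionaries, and checks the structural constraints described above. It does not use the `rlds` or TensorFlow APIs: `make_step`, `check_episode` and the `episode_id` metadata key are hypothetical names chosen for illustration, and the plain string keys simply mirror the field names listed above.

```python
from typing import Any, Dict, List

Step = Dict[str, Any]


def make_step(observation: Any, action: Any = None, reward: Any = None,
              discount: Any = None, *, is_first: bool = False,
              is_last: bool = False, is_terminal: bool = False) -> Step:
    """Builds one step dictionary with the fields described above."""
    return {
        "observation": observation,
        "action": action,
        "reward": reward,
        "discount": discount,
        "is_first": is_first,
        "is_last": is_last,
        "is_terminal": is_terminal,
    }


def check_episode(steps: List[Step]) -> str:
    """Checks the structural rules above and reports how the episode ended.

    Returns "terminal" if the last step has is_terminal=True and "truncated"
    otherwise. Raises ValueError if a rule is violated. (The same-fields rule
    applies to the whole dataset; this sketch only checks a single episode.)
    """
    if not steps:
        raise ValueError("an episode needs at least one step")
    fields = set(steps[0])
    for i, step in enumerate(steps):
        for key in ("is_first", "is_last"):  # mandatory fields
            if key not in step:
                raise ValueError(f"step {i} is missing mandatory field {key!r}")
        if set(step) != fields:
            raise ValueError(f"step {i} has different fields than step 0")
        if step["is_first"] != (i == 0):
            raise ValueError(f"is_first must be true only on step 0 (step {i})")
        if step["is_last"] != (i == len(steps) - 1):
            raise ValueError(f"is_last must be true only on the final step (step {i})")
    return "terminal" if steps[-1].get("is_terminal", False) else "truncated"


# An episode: its steps plus (hypothetical) episode-level metadata.
episode = {
    "steps": [
        make_step(0, action=1, reward=0.0, discount=1.0, is_first=True),
        make_step(1, action=0, reward=1.0, discount=1.0),
        # Last step: action, reward and discount are invalid, so left as None.
        make_step(2, is_last=True, is_terminal=True),
    ],
    "episode_id": 0,
}
print(check_episode(episode["steps"]))  # terminal
```

An episode whose last step has `is_terminal=False` is reported as `"truncated"`, matching the distinction drawn above.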


Although you can read datasets in the RLDS format even if they were not created with our tools (for example, by adding them to TFDS), we recommend using EnvLogger and the RLDS Creator, as they ensure that the data is stored losslessly and in an RLDS-compatible format.

EnvLogger provides a wrapper for a dm_env `Environment` that records interactions between a real environment and an agent.

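```python
import envlogger

# `environment` is an existing dm_env.Environment instance.
# Every interaction with the wrapped `env` is recorded to `data_directory`.
env = envlogger.EnvLogger(
    environment,
    data_directory='/tmp/mydataset')
```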

In addition, two callbacks can be passed to the EnvLogger constructor to store per-step and per-episode metadata. See the EnvLogger documentation for more details.

Note that per-session metadata can be stored but is currently ignored when loading the dataset.

Note that EnvLogger follows the dm_env convention. Consider the following notation:

- `o_i`: observation at step `i`
- `a_i`: action applied to `o_i`
- `r_i`: reward obtained when applying `a_i` in `o_i`
- `d_i`: discount for reward `r_i`
- `m_i`: metadata for step `i`

Data is generated and stored as:

(o_0, _, _, _, m_0) → (o_1, a_0, r_0, d_0, m_1) → (o_2, a_1, r_1, d_1, m_2) ⇢ ...

But loaded with RLDS as:

(o_0, a_0, r_0, d_0, m_0) → (o_1, a_1, r_1, d_1, m_1) → (o_2, a_2, r_2, d_2, m_2) ⇢ ...
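The realignment from the stored layout to the RLDS layout can be sketched in plain Python. This is an illustrative re-implementation of the notation above, not the code RLDS or EnvLogger use internally; `Record` and `to_rlds_steps` are hypothetical names. Each RLDS step `i` takes the observation and metadata from stored record `i`, and the action, reward and discount from record `i+1`. The final step has no following record, so its action, reward and discount are left as `None`, consistent with those fields being invalid when `is_last` is true.

```python
from typing import Any, List, NamedTuple, Optional


class Record(NamedTuple):
    """One stored record in dm_env order: (o_i, a_{i-1}, r_{i-1}, d_{i-1}, m_i)."""
    observation: Any
    prev_action: Optional[Any]
    prev_reward: Optional[Any]
    prev_discount: Optional[Any]
    metadata: Any


def to_rlds_steps(records: List[Record]) -> List[dict]:
    """Realigns stored records into RLDS steps (o_i, a_i, r_i, d_i, m_i)."""
    steps = []
    for i, rec in enumerate(records):
        nxt = records[i + 1] if i + 1 < len(records) else None
        steps.append({
            "observation": rec.observation,
            "metadata": rec.metadata,
            # a_i, r_i and d_i are stored alongside the *next* observation.
            "action": nxt.prev_action if nxt is not None else None,
            "reward": nxt.prev_reward if nxt is not None else None,
            "discount": nxt.prev_discount if nxt is not None else None,
            "is_first": i == 0,
            "is_last": i == len(records) - 1,
        })
    return steps


records = [
    Record("o0", None, None, None, "m0"),
    Record("o1", "a0", "r0", "d0", "m1"),
    Record("o2", "a1", "r1", "d1", "m2"),
]
for step in to_rlds_steps(records):
    print(step["observation"], step["action"], step["reward"],
          step["discount"], step["metadata"])
# o0 a0 r0 d0 m0
# o1 a1 r1 d1 m1
# o2 None None None m2
```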

If you want to collect data generated by a human interacting with an environment, check the RLDS Creator.

RL datasets can be loaded with TFDS, and they are retrieved in the canonical RLDS dataset format.

See this section for instructions on how to add an RLDS dataset to TFDS.

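These datasets can be loaded directly with:

```python
import tensorflow_datasets as tfds

# Returns the 'train' split as a tf.data.Dataset of RLDS episodes.
dataset = tfds.load('dataset_name', split='train')
```

This is how we load the datasets in the tutorial.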

See the full documentation and the catalog on the TFDS site.

Datasets can be implemented with TFDS both inside and outside of the TFDS repository. See examples here.

Adding a dataset to TFDS involves two steps:

- Implement a Python class that provides a dataset builder with the specs of the data (e.g., the shape of the observations, actions, etc.) and how to read your dataset files.

- Run a `download_and_prepare` pipeline that converts the data to the TFDS intermediate format.

You can add your dataset directly to TFDS following the instructions at https://www.tensorflow.org/datasets.

- If your data has been generated with EnvLogger or the RLDS Creator, you can just use the RLDS helpers in TFDS (see an example here).

- Otherwise, make sure your `_generate_examples` implementation provides the same structure and keys as RLDS loaders if you want your dataset to be compatible with RLDS pipelines (example).
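As an illustration of the second case, the following sketch shows the kind of `(key, example)` pairs a `_generate_examples` method might yield so that each example is one episode with an RLDS-style `steps` field. It is written in plain Python so that it runs without TFDS installed. The raw input format (`observations`, `actions`, `rewards`, `terminal`), the zero placeholders on the last step and the constant discount of 1.0 are all assumptions made for this example, not part of RLDS or TFDS. In an actual builder, this logic would live in `_generate_examples` of a `tfds.core.GeneratorBasedBuilder` subclass, and the features declared in `_info()` would need to match these keys and types.

```python
from typing import Any, Dict, Iterator, List, Tuple


def generate_examples(
    raw_episodes: List[Dict[str, Any]],
) -> Iterator[Tuple[str, Dict[str, Any]]]:
    """Yields (key, example) pairs, one RLDS-style episode per example.

    Hypothetical input format, one dict per episode:
      'observations': n + 1 observations (including the last one),
      'actions':      n actions, actions[i] taken at observations[i],
      'rewards':      n rewards, rewards[i] obtained after actions[i],
      'terminal':     True if the episode ended in a terminal state.
    """
    for ep_idx, ep in enumerate(raw_episodes):
        obs, actions, rewards = ep["observations"], ep["actions"], ep["rewards"]
        if len(obs) != len(actions) + 1 or len(actions) != len(rewards):
            raise ValueError(f"episode {ep_idx} has inconsistent lengths")
        steps = []
        for i in range(len(obs)):
            last = i == len(obs) - 1
            steps.append({
                "observation": obs[i],
                # Zero placeholders on the last step, where action and reward
                # are invalid (assumes scalar numeric actions and rewards).
                "action": 0 if last else actions[i],
                "reward": 0.0 if last else rewards[i],
                "discount": 1.0,  # assumption: undiscounted source data
                "is_first": i == 0,
                "is_last": last,
                "is_terminal": last and bool(ep["terminal"]),
            })
        yield f"episode_{ep_idx}", {"steps": steps}


raw = [{"observations": [10, 11, 12], "actions": [1, 0],
        "rewards": [0.0, 1.0], "terminal": True}]
for key, example in generate_examples(raw):
    print(key, len(example["steps"]))  # episode_0 3
```

Keeping one example per episode, with all steps nested under `steps`, is what lets the loaded dataset come back as a dataset of episodes, each containing a dataset of steps.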

Note that you can follow the same steps to add the data to your own repository (see more details in the TFDS documentation).

Because RLDS exposes RL datasets as TensorFlow `tf.data` datasets, many of TensorFlow's `tf.data` performance tips apply to RLDS as well. Note, however, that RLDS datasets have a specific nested structure (episodes that contain datasets of steps), so not all general speed-up methods work out of the box, and generic performance advice might not produce the expected results. To better understand how to use RLDS datasets effectively, we recommend going through this colab.

While the order of episodes in RLDS datasets is randomized by default and there is no need to shuffle them again when loading the dataset, some algorithms operate on steps or n-step transitions and therefore need steps from different episodes to be mixed. There are different ways to interleave steps across multiple episodes, for example:

- Shuffle steps using `tf.data.Dataset.shuffle`. Note that obtaining perfect shuffling this way requires a `buffer_size` that can accommodate the entire dataset, which can result in high memory usage for big datasets.

- Interleave `N` copies of the dataset using `tf.data.Dataset.interleave`:

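```python
import rlds
import tensorflow as tf
import tensorflow_datasets as tfds


def ds_loader(_):
  # `interleave` calls this once per element of the range dataset; the
  # element itself is unused. Enabling file shuffling when loading (e.g.
  # `shuffle_files=True`) lets each copy read partitions in a different order.
  episode_dataset = tfds.load(...)
  # Flatten the dataset of episodes into a dataset of steps.
  step_dataset = episode_dataset.flat_map(lambda x: x[rlds.STEPS])
  return step_dataset

# Dataset.range(N) yields N elements, so N copies are interleaved.
dataset = tf.data.Dataset.range(N).interleave(
    ds_loader, cycle_length=..., block_length=...)
```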

Each copy of the dataset shuffles input partitions independently, so consecutive steps returned by the resulting dataset come from unrelated episodes. It is important to note, however, that this way each step will be loaded N times. To avoid duplicates, it is possible to construct each dataset using disjoint splits.
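One way to obtain disjoint splits is TFDS's split-slicing syntax (for example `'train[0%:50%]'`), which selects a percentage range of the examples in a split; for RLDS datasets each example is an episode. The helper below is a hypothetical, pure-Python sketch that builds `n` contiguous, non-overlapping percentage slices covering the whole split. Each resulting string could then be passed as the `split` argument of `tfds.load` for one copy of the dataset. TFDS also provides `tfds.even_splits` for a similar purpose. With whole-percent boundaries, some slices of a very small dataset may contain no episodes.

```python
from typing import List


def disjoint_splits(split: str, n: int) -> List[str]:
    """Returns n contiguous, non-overlapping percent slices of `split`.

    Integer division keeps the bounds whole percentages; adjacent slices
    share a boundary, so together they cover 0%..100% without overlap.
    """
    if not 1 <= n <= 100:
        raise ValueError("n must be between 1 and 100")
    bounds = [100 * i // n for i in range(n + 1)]
    return [f"{split}[{lo}%:{hi}%]" for lo, hi in zip(bounds, bounds[1:])]


print(disjoint_splits("train", 3))
# ['train[0%:33%]', 'train[33%:66%]', 'train[66%:100%]']
```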

To improve loading throughput, TFDS loads multiple partitions of the dataset in parallel by default. For datasets with big episodes, this can result in high memory usage. If you run into high memory usage problems, it is worth experimenting with the `read_config` argument of `tfds.load`.

If you use RLDS, please cite the RLDS paper as:

```bibtex
@misc{ramos2021rlds,
  title={RLDS: an Ecosystem to Generate, Share and Use Datasets in Reinforcement Learning},
  author={Sabela Ramos and Sertan Girgin and Léonard Hussenot and Damien Vincent and Hanna Yakubovich and Daniel Toyama and Anita Gergely and Piotr Stanczyk and Raphael Marinier and Jeremiah Harmsen and Olivier Pietquin and Nikola Momchev},
  year={2021},
  eprint={2111.02767},
  archivePrefix={arXiv},
  primaryClass={cs.LG}
}
```

We greatly appreciate all the support from the TF-Agents team in setting up the building and testing of EnvLogger.

This is not an officially supported Google product.