Official implementation of the paper "Distilling the Knowledge from Conditional Normalizing Flows" (ICML Workshop, 2021) by Dmitry Baranchuk, Vladimir Aliev, and Artem Babenko.

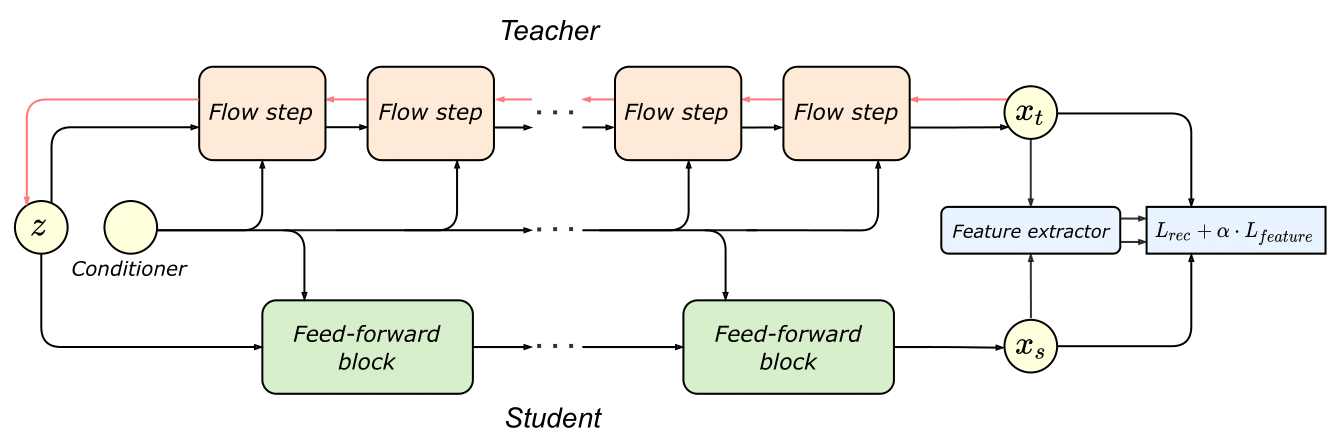

The approach transfers knowledge from normalizing flows (NF) to efficient feed-forward models, speeding up inference and reducing model size. Its effectiveness is demonstrated on two state-of-the-art conditional flow-based models: SRFlow for image super-resolution and WaveGlow for speech synthesis.
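As a rough illustration of the distillation idea, the sketch below trains a feed-forward student to reproduce the outputs of a flow teacher for the same latent `z` and condition `c`. Everything in it is an assumption made for illustration: the toy affine `teacher_flow`, the linear `Student`, the mean absolute error objective, and the hyperparameters are hypothetical and are not taken from this repository or from SRFlow/WaveGlow.

```python
import random


def teacher_flow(z, c):
    # Hypothetical toy teacher: a conditional affine flow, invertible in z
    # for any condition c. A real teacher would be SRFlow or WaveGlow.
    return 2.0 * c + 1.0 + 0.5 * z


class Student:
    """Hypothetical feed-forward student: one linear layer over (z, c)."""

    def __init__(self):
        self.w_c = 0.0
        self.w_z = 0.0
        self.b = 0.0

    def __call__(self, z, c):
        return self.w_c * c + self.w_z * z + self.b


def sample_batch(rng, n):
    # Latent z ~ N(0, 1) as in a flow prior; condition c ~ U(-1, 1).
    return [(rng.gauss(0.0, 1.0), rng.uniform(-1.0, 1.0)) for _ in range(n)]


def l1_loss(student, batch):
    # Mean absolute error between student and teacher outputs on the same (z, c).
    total = sum(abs(student(z, c) - teacher_flow(z, c)) for z, c in batch)
    return total / len(batch)


def distill(steps=2000, batch_size=32, lr=0.05, seed=0):
    rng = random.Random(seed)
    student = Student()
    for step in range(steps):
        step_lr = lr * (1.0 - step / steps)  # linearly decayed learning rate
        g_c = g_z = g_b = 0.0
        for z, c in sample_batch(rng, batch_size):
            diff = student(z, c) - teacher_flow(z, c)
            sign = (diff > 0) - (diff < 0)  # subgradient of |diff|
            g_c += sign * c
            g_z += sign * z
            g_b += sign
        # Subgradient step on the batch-averaged L1 loss.
        student.w_c -= step_lr * g_c / batch_size
        student.w_z -= step_lr * g_z / batch_size
        student.b -= step_lr * g_b / batch_size
    return student


if __name__ == "__main__":
    held_out = sample_batch(random.Random(1), 256)
    print("untrained student L1:", l1_loss(Student(), held_out))
    print("distilled student L1:", l1_loss(distill(), held_out))
```

The student never inverts the flow or evaluates a likelihood. It only needs teacher outputs paired with the latents and conditions that produced them, which is why it is free to use a cheaper, non-invertible architecture.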

Detailed descriptions of the super-resolution and speech synthesis applications are presented in the paper:

    @inproceedings{baranchuk2021distilling,
      title={Distilling the Knowledge from Conditional Normalizing Flows},
      author={Dmitry Baranchuk and Vladimir Aliev and Artem Babenko},
      booktitle={ICML Workshop on Invertible Neural Networks, Normalizing Flows, and Explicit Likelihood Models},
      year={2021},
      url={https://openreview.net/forum?id=fEPhiuZS9TV}
    }