Paper | Project Page | Code

GaussianFormer: Scene as Gaussians for Vision-Based 3D Semantic Occupancy Prediction

Yuanhui Huang, Wenzhao Zheng$\dagger$, Yunpeng Zhang, Jie Zhou, Jiwen Lu$\ddagger$

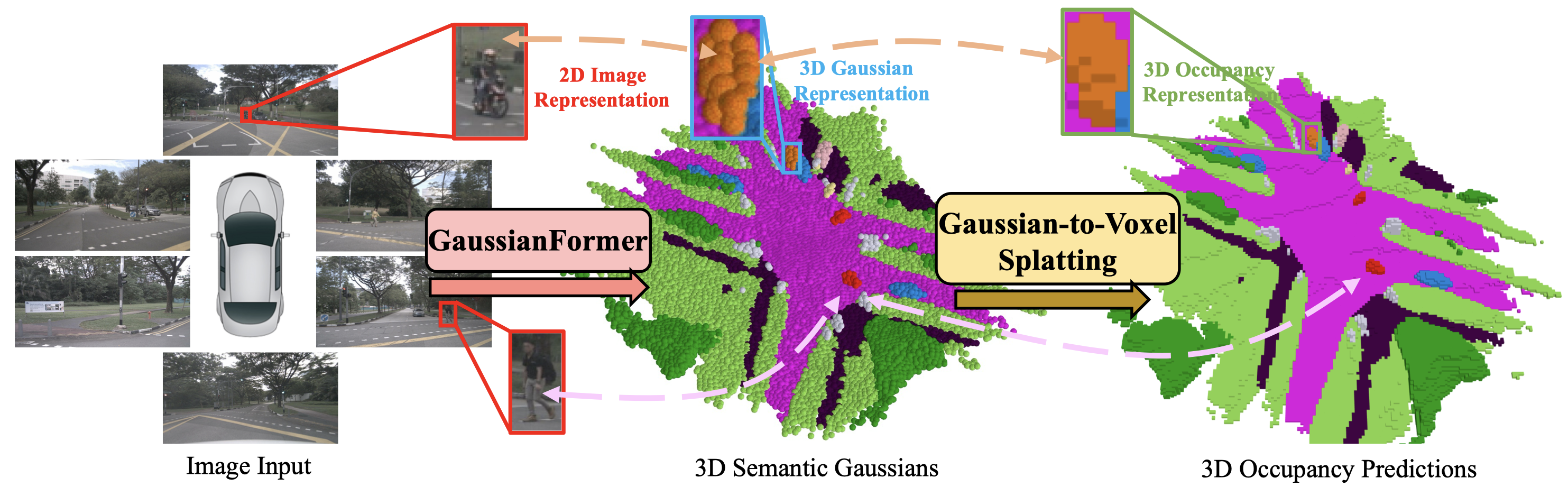

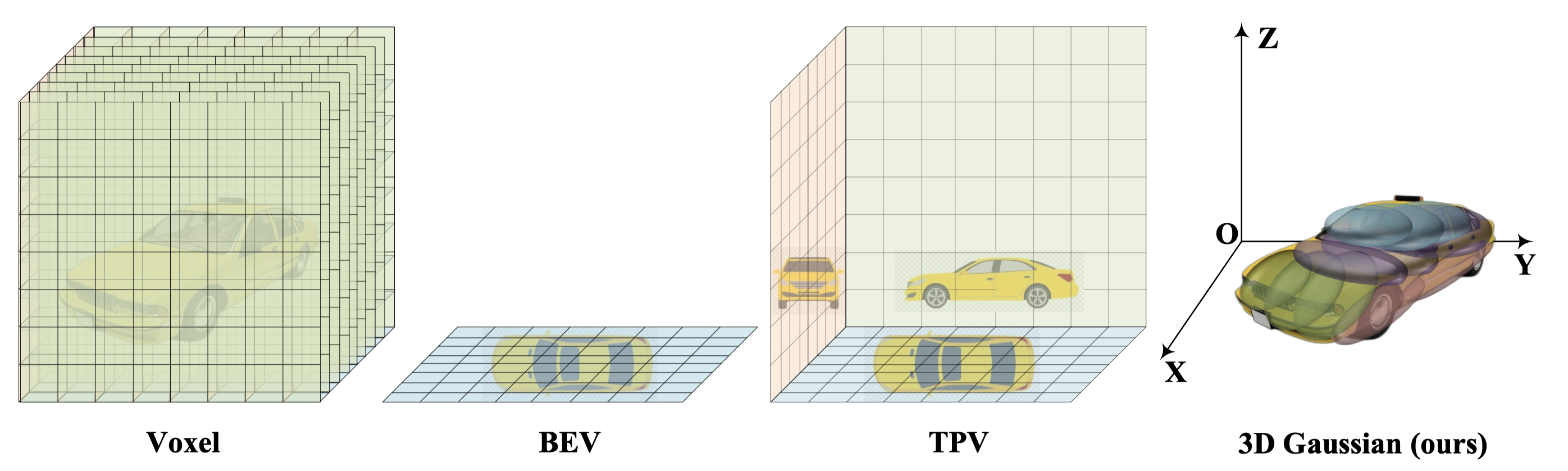

GaussianFormer proposes 3D semantic Gaussians as an object-centric representation for driving scenes that is more efficient than 3D occupancy.

- [2024/05/28] Paper released on arXiv.

- [2024/05/28] Demo released.

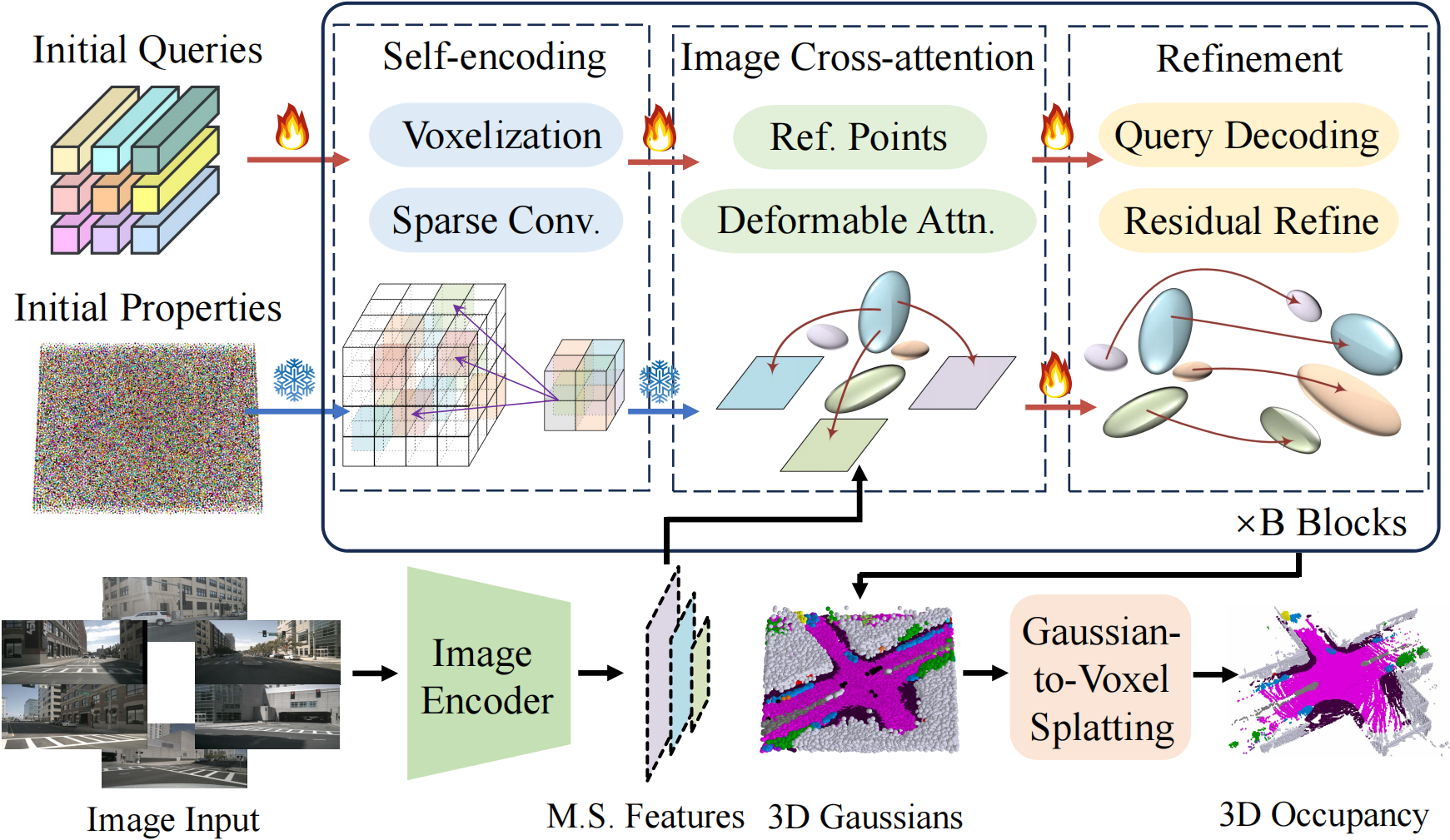

Considering the universal approximating ability of Gaussian mixtures, we propose an object-centric 3D semantic Gaussian representation that describes the fine-grained structure of 3D scenes without using dense grids. We further propose GaussianFormer, a model consisting of sparse convolution and cross-attention that efficiently transforms 2D images into 3D Gaussian representations. To generate dense 3D occupancy, we design a Gaussian-to-voxel splatting module that can be efficiently implemented with CUDA. With comparable performance, GaussianFormer reduces the memory consumption of existing 3D occupancy prediction methods by 75.2% to 82.2%.
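To make the Gaussian-to-voxel splatting idea concrete, here is a minimal plain-Python sketch, not the CUDA module from this repo. It assumes each Gaussian carries a mean, per-axis standard deviations (an axis-aligned simplification), and per-class semantic weights. The function names, dictionary keys, and the `cutoff` parameter are hypothetical and chosen only for illustration.

```python
import math


def splat_gaussians_to_voxels(gaussians, grid_shape, voxel_size,
                              origin=(0.0, 0.0, 0.0), cutoff=3.0):
    """Accumulate per-class scores on a voxel grid from a list of Gaussians.

    Each Gaussian is a dict with hypothetical keys:
      'mean'      -> 3 floats, the center in world coordinates
      'scale'     -> 3 positive floats, per-axis standard deviations
      'semantics' -> list of per-class weights
    Returns a dict mapping voxel index (i, j, k) to a list of class scores.
    Only voxels near at least one Gaussian appear in the result.
    """
    num_classes = len(gaussians[0]["semantics"]) if gaussians else 0
    scores = {}
    for g in gaussians:
        mean, scale, sem = g["mean"], g["scale"], g["semantics"]
        # Bounding box of voxel indices around the Gaussian
        # (roughly `cutoff` standard deviations per axis, clipped to the grid).
        ranges = []
        for a in range(3):
            lo = math.floor((mean[a] - cutoff * scale[a] - origin[a]) / voxel_size)
            hi = math.ceil((mean[a] + cutoff * scale[a] - origin[a]) / voxel_size)
            ranges.append(range(max(lo, 0), min(hi, grid_shape[a])))
        for i in ranges[0]:
            for j in ranges[1]:
                for k in ranges[2]:
                    # Evaluate the Gaussian at the voxel center.
                    center = [origin[a] + (idx + 0.5) * voxel_size
                              for a, idx in enumerate((i, j, k))]
                    d2 = sum(((center[a] - mean[a]) / scale[a]) ** 2
                             for a in range(3))
                    weight = math.exp(-0.5 * d2)
                    cell = scores.setdefault((i, j, k), [0.0] * num_classes)
                    for c in range(num_classes):
                        cell[c] += weight * sem[c]
    return scores


def voxel_labels(scores):
    """Pick the highest-scoring class index for every touched voxel."""
    return {idx: max(range(len(s)), key=s.__getitem__)
            for idx, s in scores.items()}
```

Because each Gaussian only touches voxels in its neighborhood, the cost scales with the number of Gaussians and their extent rather than with the full grid. That locality is the reason a sparse set of Gaussians can stand in for a dense grid.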

Code is available at: GaussianFormer

Our work is inspired by these excellent open-sourced repos: TPVFormer, PointOcc, SelfOcc, SurroundOcc, OccFormer, and BEVFormer.

If you find this project helpful, please consider citing the following paper:

    @article{huang2024gaussian,
      title={GaussianFormer: Scene as Gaussians for Vision-Based 3D Semantic Occupancy Prediction},
      author={Huang, Yuanhui and Zheng, Wenzhao and Zhang, Yunpeng and Zhou, Jie and Lu, Jiwen},
      journal={arXiv preprint arXiv:2405.17429},
      year={2024}
    }