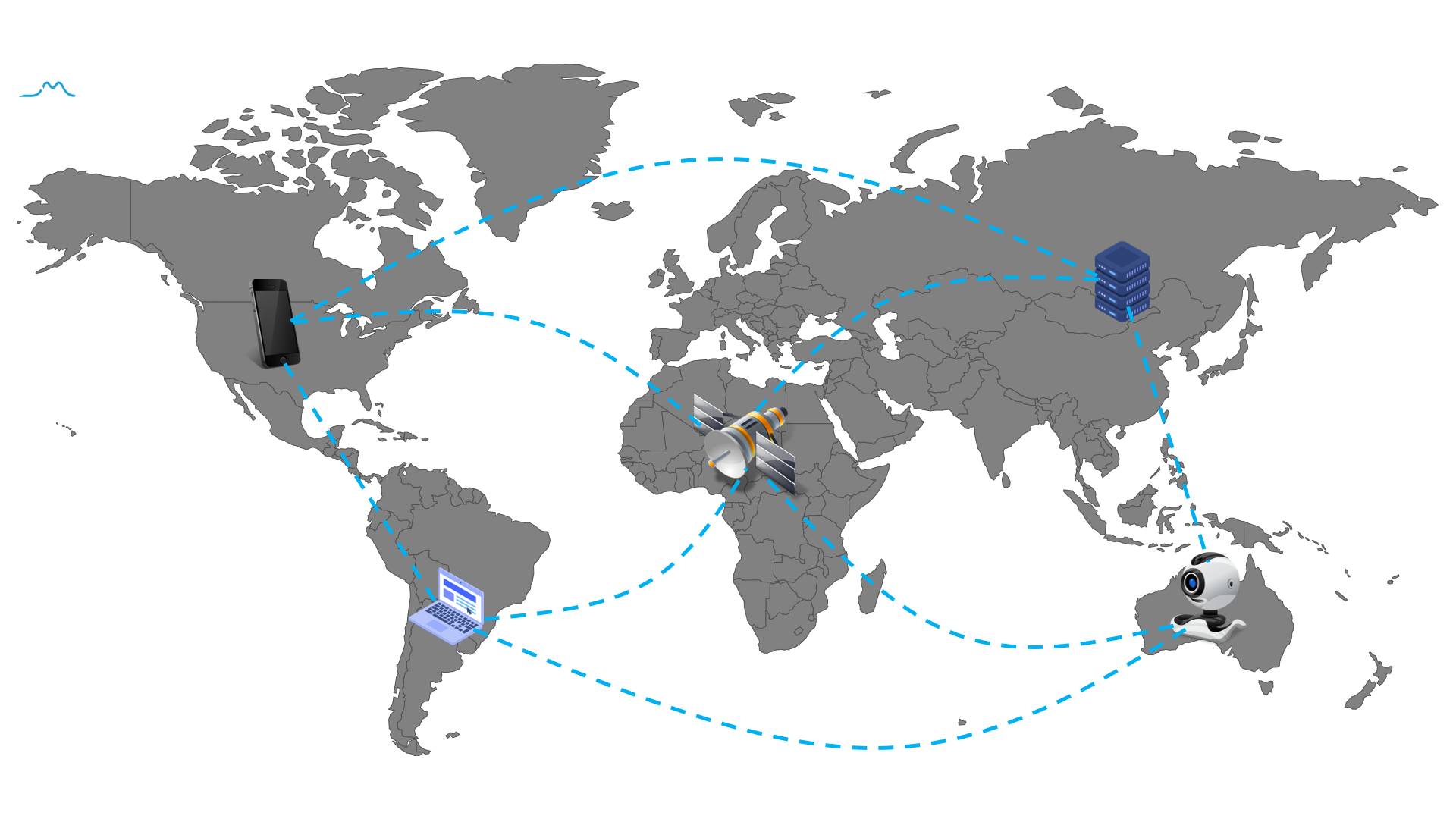

Federated Learning (FL) is a machine learning framework that enables multiple devices to collaboratively train a shared model without compromising data privacy and security.

This repository tracks the latest research advances in federated learning, including but not limited to research papers, books, code, tutorials, and videos.

- Top Machine Learning Conferences

- Top Computer Vision Conferences

- Top Artificial Intelligence and Data Mining Conferences

- Books

- Papers (Research directions)

- Google FL Workshops

- Videos and Lectures

- Tutorials and Blogs

- Open-Sources

In this section, we summarize federated learning papers accepted by top machine learning conferences, including NeurIPS, ICML, and ICLR.

In this section, we summarize federated learning papers accepted by top computer vision conferences, including CVPR, ICCV, and ECCV.

| Venue | Title | Affiliation | Materials |
| --- | --- | --- | --- |
| ICCV 2021 | Federated Learning for Non-IID Data via Unified Feature Learning and Optimization Objective Alignment | Peking University | |
| ICCV 2021 | Ensemble Attention Distillation for Privacy-Preserving Federated Learning | University at Buffalo | |
| ICCV 2021 | Collaborative Unsupervised Visual Representation Learning from Decentralized Data | Nanyang Technological University; SenseTime | |

In this section, we summarize federated learning papers accepted by top artificial intelligence and data mining conferences, including AAAI, AISTATS, and KDD.

- 联邦学习 (Federated Learning)

- 联邦学习实战 (Practicing Federated Learning)

- Federated Learning - A Comprehensive Overview of Methods and Applications

Model aggregation (or model fusion) refers to how local models are combined into a shared global model.
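As a rough illustration of aggregation, the sketch below averages client parameters weighted by local dataset size, in the style of FedAvg. It is only one possible aggregation rule, not the method of any particular paper listed here. The function name `federated_average` and the dictionary-of-lists parameter layout are assumptions made for this example.

```python
from typing import Dict, List, Sequence

# Hypothetical layout: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def federated_average(client_weights: Sequence[Weights],
                      client_sizes: Sequence[int]) -> Weights:
    """Average client parameters, weighting each client by its sample count."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one positive sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total number of samples must be positive")

    aggregated: Weights = {}
    for name in client_weights[0]:
        length = len(client_weights[0][name])
        aggregated[name] = [
            sum(w[name][i] * n for w, n in zip(client_weights, client_sizes)) / total
            for i in range(length)
        ]
    return aggregated


# Two toy clients; the second holds three times as much data.
global_model = federated_average(
    [{"w": [1.0, 2.0]}, {"w": [3.0, 4.0]}],
    [1, 3],
)
print(global_model)
```

Weighting by sample count pulls the global model toward clients with more data; uniform weights are an equally simple alternative when sample counts should not be revealed.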

Personalized federated learning refers to training a model for each client, based on the client’s own dataset and the datasets of other clients. There are two major motivations for personalized federated learning:

- Due to statistical heterogeneity across clients, a single global model would not be a good choice for all clients. Sometimes, the local models trained solely on their private data perform better than the global shared model.

- Different clients need models customized to their own environment. As an example of model heterogeneity, consider the sentence “I live in .....”: a next-word prediction task applied to this sentence must predict a different answer for each user. In other words, different clients may assign different labels to the same data.
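One simple way to see the idea is local fine-tuning: each client starts from the shared global model and takes a few gradient steps on its own data. The sketch below uses a one-parameter linear model `y = w * x` purely for illustration. Fine-tuning is a common baseline, not necessarily the approach of the papers listed here, and `fine_tune_locally` and its defaults are hypothetical.

```python
from typing import List, Tuple


def fine_tune_locally(global_w: float,
                      data: List[Tuple[float, float]],
                      lr: float = 0.1,
                      steps: int = 50) -> float:
    """Personalize a 1-D linear model y = w * x by gradient descent on local data."""
    if not data:
        raise ValueError("client has no local data")
    w = global_w
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2.0 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


# Two clients label the same inputs differently, so they end up with different models.
client_a = [(1.0, 2.0), (2.0, 4.0)]    # follows y = 2x
client_b = [(1.0, -1.0), (2.0, -2.0)]  # follows y = -x
print(fine_tune_locally(0.0, client_a), fine_tune_locally(0.0, client_b))
```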

Personalized federated learning survey papers:

- Three Approaches for Personalization with Applications to Federated Learning

- Personalized Federated Learning for Intelligent IoT Applications: A Cloud-Edge based Framework

Recommender systems (RecSys) are widely used to address information overload. In general, the more data a RecSys uses, the better its recommendation performance.

Traditionally, a RecSys requires data distributed across multiple devices to be uploaded to a central database for model training. However, due to privacy and security concerns, strategies that share user data directly are no longer appropriate.

Combining federated learning with RecSys is a promising approach that can reduce the risk of privacy leakage.

More federated recommendation papers can be found in this repository: FedRecPapers

- Communication-Based: Improving efficiency by reducing the amount of model parameters transmitted.

- Hardware-Based: Improving efficiency through hardware acceleration (GPU, FPGA, etc.).

- Algorithm-Based: Improving efficiency by accelerating model convergence (local training, model aggregation, client selection, etc.).
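To make the communication-based direction concrete, the sketch below applies top-k sparsification: a client sends only the k largest-magnitude entries of its update, and the server fills the rest with zeros. This is one well-known compression technique chosen for illustration, not something prescribed by this list. The helper names `top_k_sparsify` and `densify` are hypothetical.

```python
from typing import Dict, List


def top_k_sparsify(update: List[float], k: int) -> Dict[int, float]:
    """Keep only the k largest-magnitude entries, as an index -> value map."""
    if k < 0:
        raise ValueError("k must be non-negative")
    k = min(k, len(update))
    largest = sorted(range(len(update)),
                     key=lambda i: abs(update[i]),
                     reverse=True)[:k]
    return {i: update[i] for i in sorted(largest)}


def densify(sparse: Dict[int, float], length: int) -> List[float]:
    """Rebuild a dense update on the server; dropped entries become zero."""
    dense = [0.0] * length
    for index, value in sparse.items():
        dense[index] = value
    return dense


update = [0.1, -2.0, 0.05, 1.5]
compressed = top_k_sparsify(update, k=2)   # only 2 of 4 values are transmitted
print(compressed, densify(compressed, len(update)))
```

The trade-off is accuracy for bandwidth: dropped entries are lost unless the client accumulates them locally and adds them to a later update.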

| Papers | Application Scenarios | Conferences/Affiliations | Materials |
| --- | --- | --- | --- |
| Federated Composite Optimization | Loss function contains a non-smooth regularizer | ICML 2021 (Google) | code |
| Federated Deep AUC Maximization for Heterogeneous Data with a Constant Communication Complexity | Federated deep AUC maximization | ICML 2021 (The University of Iowa) | video |
| Bias-Variance Reduced Local SGD for Less Heterogeneous Federated Learning | Non-convex objective optimization | ICML 2021 (The University of Tokyo) | video |
| From Local SGD to Local Fixed-Point Methods for Federated Learning | Fixed-point algorithm optimization | ICML 2020 (Moscow Institute of Physics and Technology; KAUST) | Slide Video |
| Federated Learning Based on Dynamic Regularization | In each round, the objective function for each device dynamically updates its regularizer | ICLR 2021 (Boston University; ARM) | |
| Federated Learning via Posterior Averaging: A New Perspective and Practical Algorithms | Formulates federated learning optimization as a posterior inference problem | ICLR 2021 (CMU; Google) | code |
| Adaptive Federated Optimization | Federated versions of adaptive optimizers | ICLR 2021 (Google) | code |
| FedBN: Federated Learning on Non-IID Features via Local Batch Normalization | Uses local batch normalization to alleviate feature shift before averaging models | ICLR 2021 (Princeton University) | code |
| Federated Semi-Supervised Learning with Inter-Client Consistency & Disjoint Learning | Federated versions of semi-supervised learning | ICLR 2021 (KAIST) | code |
| Sageflow: Robust Federated Learning against Both Stragglers and Adversaries | Handles both stragglers (slow devices) and adversaries simultaneously | NeurIPS 2021 (KAIST) | HomePage |
| STEM: A Stochastic Two-Sided Momentum Algorithm Achieving Near-Optimal Sample and Communication Complexities for Federated Learning | Distributed stochastic non-convex optimization | University of Minnesota | HomePage |
| Secure Bilevel Asynchronous Vertical Federated Learning with Backward Updating | Vertical federated learning optimization | AAAI 2021 (Xidian University; JD Tech) | video |

| Category | Papers | Conferences/Affiliations | Materials |
| --- | --- | --- | --- |
| Tree-Based Boosting | Practical Federated Gradient Boosting Decision Trees | AAAI 2020 (NUS) | code |
| Tree-Based Boosting | SecureBoost: A Lossless Federated Learning Framework | IEEE Intelligent Systems 2021 (WeBank; HKUST) | |
| Tree-Based Boosting | Large-scale Secure XGB for Vertical Federated Learning | CIKM 2021 (Ant Group) | video |

Typically, an incentive mechanism consists of the following two steps:

- Evaluating the contribution of each participant (for example, with the Shapley value)

- Allocating profits based on those contributions
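The two steps above can be sketched as follows: an exact Shapley value computation over all participant orderings, followed by a proportional payout. The `utility` callback is a placeholder for whatever coalition score is used in practice (for example, the validation accuracy of a model trained on a coalition's data). The function names and the proportional allocation rule are assumptions made for this example.

```python
from itertools import permutations
from math import factorial
from typing import Callable, Dict, FrozenSet, Sequence


def shapley_values(players: Sequence[str],
                   utility: Callable[[FrozenSet[str]], float]) -> Dict[str, float]:
    """Exact Shapley values: average marginal contribution over all orderings."""
    values = {p: 0.0 for p in players}
    for order in permutations(players):
        coalition: FrozenSet[str] = frozenset()
        previous = utility(coalition)
        for p in order:
            coalition = coalition | {p}
            current = utility(coalition)
            values[p] += current - previous
            previous = current
    n_orders = factorial(len(players))
    return {p: v / n_orders for p, v in values.items()}


def allocate_profit(values: Dict[str, float], total_profit: float) -> Dict[str, float]:
    """Split the profit in proportion to contributions (assumes a positive sum)."""
    total = sum(values.values())
    if total <= 0:
        raise ValueError("contributions must sum to a positive number")
    return {p: total_profit * v / total for p, v in values.items()}


# Toy utility (hypothetical): a coalition is worth the sum of its members' data sizes.
data_sizes = {"A": 1.0, "B": 3.0}
contributions = shapley_values(list(data_sizes),
                               lambda c: sum(data_sizes[p] for p in c))
print(allocate_profit(contributions, 100.0))
```

The exact computation enumerates every ordering, so its cost grows factorially with the number of participants; larger federations typically need sampling-based approximations.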

| Category | Papers | Conferences/Affiliations | Materials |
| --- | --- | --- | --- |
| Clustering | Heterogeneity for the Win: One-Shot Federated Clustering | ICML 2021 (CMU) | video |
| Representation Learning | Exploiting Shared Representations for Personalized Federated Learning | ICML 2021 (University of Texas at Austin; University of Pennsylvania) | code video |
| Representation Learning | Towards Federated Unsupervised Representation Learning | EdgeSys '20 (Eindhoven University of Technology) | |
| Representation Learning | Federated Unsupervised Representation Learning | Zhejiang University | |
| Representation Learning | Collaborative Unsupervised Visual Representation Learning from Decentralized Data | ICCV 2021 (Nanyang Technological University; SenseTime) | |
| Representation Learning | Divergence-aware Federated Self-Supervised Learning | ICLR 2022 (Nanyang Technological University) | |
| Representation Learning | Orchestra: Unsupervised Federated Learning via Globally Consistent Clustering | ICML 2022 (University of Michigan; University of Cambridge) | code |

Privacy, utility, and efficiency are the three key concepts of trustworthy federated learning. We point out that no security mechanism can be optimal with respect to privacy leakage, utility loss, and efficiency loss simultaneously.

- No Free Lunch Theorem for Security and Utility in Federated Learning

- Trading Off Privacy, Utility and Efficiency in Federated Learning

- Probably Approximately Correct Federated Learning

- A Game-theoretic Framework for Federated Learning

- Google Workshop on Federated Learning and Analytics 2021

- Google Workshop on Federated Learning and Analytics 2020

- Google Federated Learning workshop 2019

- TensorFlow Federated (TFF): Machine Learning on Decentralized Data - Google, TF Dev Summit '19 2019

- Federated Learning: Machine Learning on Decentralized Data - Google, Google I/O 2019

- Federated Learning - Cloudera Fast Forward Labs, DataWorks Summit 2019

- GDPR, Data Shortage and AI - Qiang Yang, AAAI 2019 Invited Talk

- Code Tutorial: From Centralized to Federated - Flower Summit 2021

- What is Federated Learning - Nvidia 2019

- Online Federated Learning Comic - Google 2019

- Federated Learning: Collaborative Machine Learning without Centralized Training Data - Google AI Blog 2017

- Go Federated with OpenFL - Intel 2021

Developing a federated learning framework from scratch is very time-consuming, especially in industry. A good FL framework helps engineers and researchers train, study, and deploy FL models in practice. In this section, we summarize some commonly used open-source FL frameworks from both industry and academia.