In some cases, video does not play after the ontrack event fires on the client

kolserdav opened this issue

Hello! I moved my project from wrtc to werift because wrtc gives different results on the first and subsequent server launches. Apparently wrtc has dependencies that keep process-bound state in memory, so a server built on wrtc is not stable; the same happens if you use webdriver through puppeteer or playwright. werift is stable in this respect, but I ran into a problem with reconnection in werift.

Description of the problem

When two or more room clients connect to the server at the same time, the video plays. But if one user connects first and then reconnects, that user's video never plays. The problem also occurs when about 5 users are connected and several of them reload the page at the same time, but that case is harder to track. So, for now, I made the client disconnect when the button that closes the full-screen video is clicked; a listener then reconnects the user, who is disconnected but still active in the room.

Problem reproduction

In my project you can confirm that a single reconnect works with wrtc but not with werift.

I have checked every option I could think of, but everything suggests the problem may lie in code below my application. That's why I'm opening this issue: perhaps my project will help you track down a hard-to-find problem.

Result of reconnecting with wrtc:

Result of reconnecting with werift:

To reproduce these examples:

- Install the `master` branch (werift) or the `wrtc` branch (wrtc) of https://github.com/kolserdav/werift-sfu-react by following https://github.com/kolserdav/werift-sfu-react/blob/master/docs/CONTRIBUTING.md.
- Create a room and click the copy button to copy the URL with the room id and a unique user id:
- Open the room as a new user in a new tab, and split the tabs into separate windows.
- Click the second video and, while the full-screen video is open, click the close button:

Add-ons

I don't see any difference between normal and non-playable video streams:

Perhaps you will have some ideas.

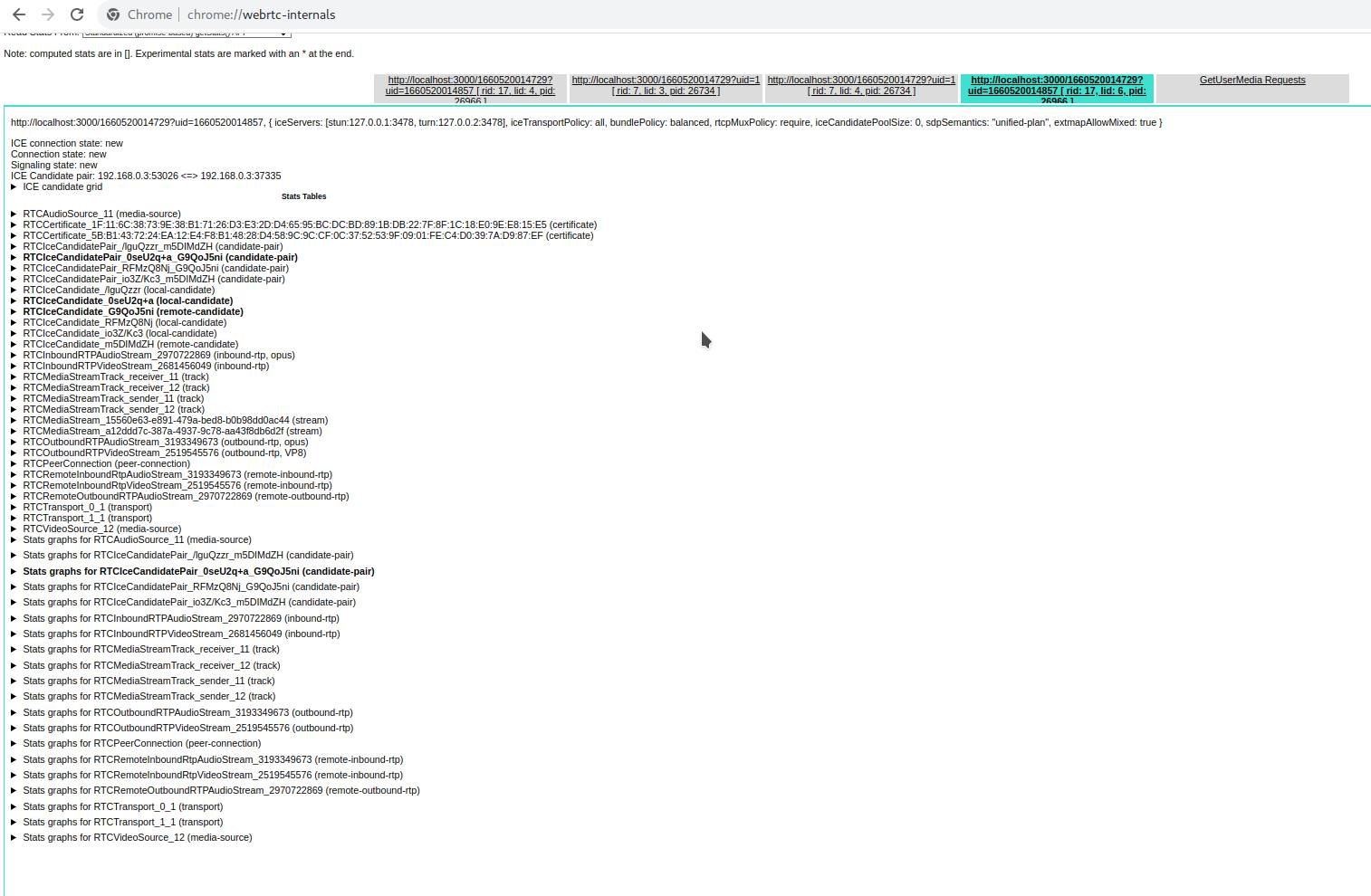

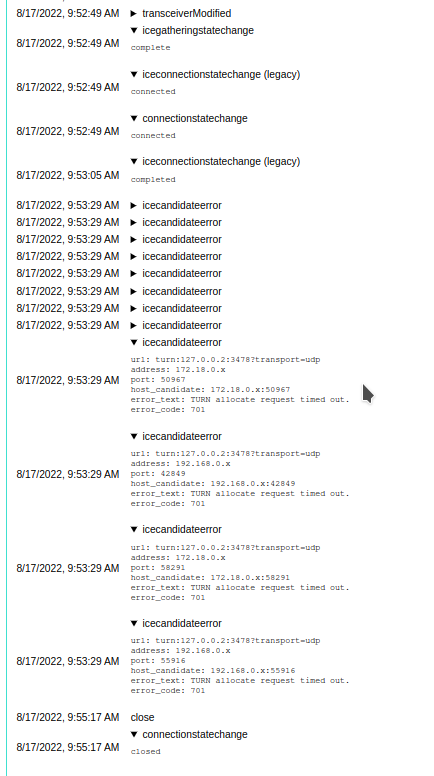

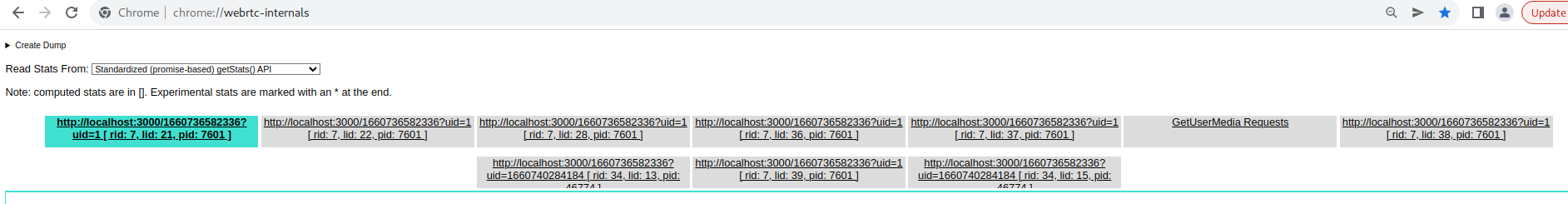

I carefully studied chrome://webrtc-internals/ and noticed that the bad connections report `icecandidateerror` errors.

I assumed this was because the server defaults to Google's `iceServers`, so I set the same servers on the client too, but it did not help.

Next, I tried setting my own `iceServers` (coturn) on both the server and the client:

```ts
iceServers: [
  {
    urls: process.env.STUN_SERVER as string,
  },
  {
    urls: process.env.TURN_SERVER as string,
    username: process.env.TURN_SERVER_USER,
    credential: process.env.TURN_SERVER_PASSWORD,
  },
],
```

Sample `.env` file:

```
STUN_SERVER=stun:127.0.0.1:3478
TURN_SERVER=turn:127.0.0.2:3478
TURN_SERVER_USER=username
TURN_SERVER_PASSWORD=password
```

But every time I get the following error:

```
[0] [1] TransactionTimeout [Error]
[0] [1] at Timeout.Transaction.retry (/home/kol/Projects/group-call/node_modules/werift/lib/ice/src/stun/transaction.js:47:39)
[0] [1] at listOnTimeout (node:internal/timers:559:17)
[0] [1] at processTimers (node:internal/timers:502:7)
[0] [1] error Error set remote description {
[0] [1] e: "Cannot read properties of undefined (reading 'getAttributeValue')",
[0] [1] stack: "TypeError: Cannot read properties of undefined (reading 'getAttributeValue')\n" +
[0] [1] ' at TurnClient.connect (/home/kol/Projects/group-call/node_modules/werift/lib/ice/src/turn/protocol.js:146:26)\n' +
[0] [1] ' at runMicrotasks (<anonymous>)\n' +
[0] [1] ' at runNextTicks (node:internal/process/task_queues:61:5)\n' +
[0] [1] ' at listOnTimeout (node:internal/timers:528:9)\n' +
[0] [1] ' at processTimers (node:internal/timers:502:7)\n' +
[0] [1] ' at async createTurnEndpoint (/home/kol/Projects/group-call/node_modules/werift/lib/ice/src/turn/protocol.js:233:5)\n' +
[0] [1] ' at async Connection.getComponentCandidates (/home/kol/Projects/group-call/node_modules/werift/lib/ice/src/ice.js:281:30)\n' +
[0] [1] ' at async Connection.gatherCandidates (/home/kol/Projects/group-call/node_modules/werift/lib/ice/src/ice.js:229:36)\n' +
[0] [1] ' at async RTCIceGatherer.gather (/home/kol/Projects/group-call/node_modules/werift/lib/webrtc/src/transport/ice.js:107:13)\n' +
[0] [1] ' at async /home/kol/Projects/group-call/node_modules/werift/lib/webrtc/src/peerConnection.js:673:13',
```

Your server code doesn't take keyframes into account, so I think that's why.

Please put the following code in `packages/server/src/core/rtc.ts` at L159:

```ts
// Inside the ontrack handler: ask the sender for a new keyframe (PLI) every 3 s
setInterval(() => {
  e.transceiver.receiver.sendRtcpPLI(e.track.ssrc);
}, 3000);
```

Thank you very much for your support. I tried your recommendation, but it did not work, for a clear reason: at line 179 the tracks are not sent to the client. They are stored in memory in `this.streams[peerId]`, which means this is the client's main connection to the server, over which it only sends its own tracks. This connection has `target = 0`.

```ts
this.peerConnectionsServer[peerId]!.ontrack = (e) => {
  const peer = peerId.split(delimiter);
  const isRoom = peer[2] === '0';
  const stream = e.streams[0];
  const isNew = !this.streams[peerId]?.length;
  log('info', 'ontrack', {
    peerId,
    isRoom,
    si: stream.id,
    isNew,
    userId,
    target,
    tracks: stream.getTracks().map((item) => item.kind),
  });
  // target == 0: the user's own (publishing) connection
  if (isRoom) {
    // only store the tracks in memory, in this.streams[peerId]
    if (!this.streams[peerId]) {
      this.streams[peerId] = [];
    }
    this.streams[peerId].push(stream.getTracks()[0]);
    const room = rooms[roomId];
    if (room && isNew) {
      // this did not work
      setInterval(() => {
        e.transceiver.receiver.sendRtcpPLI(e.track.ssrc);
      }, 3000);
      // notify every room member that a new unit was added
      setTimeout(() => {
        room.forEach((id) => {
          ws.sendMessage({
            type: MessageType.SET_CHANGE_UNIT,
            id,
            data: {
              target: userId,
              eventName: 'add',
              roomLenght: rooms[roomId]?.length || 0,
              muteds: this.muteds[roomId],
            },
            connId,
          });
        });
      }, 0);
    } else if (!room) {
      log('warn', 'Room missing in memory', { roomId });
    }
  }
};

};
```

Then, when the client learns that there are more users in the room, it creates a separate connection for each guest with `target = userId`. When the signaling state of such a connection becomes `have-remote-offer`, the server adds the previously saved tracks of the target user to that connection.
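The connection layout above can be modeled with a composite peer id. Judging from the `addTracks` log further down, ids look like `roomId_userId_target_connId` (for example `1660736582336_1_0_2f4d889f-2f7e-4c70-8659-2b72d83667f9`), and `ontrack` treats `target === '0'` as the user's own publishing connection. The sketch below is a hypothetical reimplementation assuming `_` as the delimiter; `makePeerId`, `parsePeerId`, `isPublishingConnection`, and `publisherPeerId` are illustrative names, not the project's real `getPeerId`.

```typescript
// Hypothetical helpers modeled on the ids seen in the addTracks log.
// Assumes "_" as the delimiter and field order roomId_userId_target_connId.
const DELIMITER = '_';

interface PeerKey {
  roomId: string;
  userId: string;
  target: string; // '0' = the user's own publishing connection
  connId: string;
}

function makePeerId({ roomId, userId, target, connId }: PeerKey): string {
  return [roomId, userId, target, connId].join(DELIMITER);
}

function parsePeerId(peerId: string): PeerKey {
  // connId is a UUID containing only '-', so a valid id splits into 4 parts
  const parts = peerId.split(DELIMITER);
  if (parts.length !== 4) {
    throw new Error(`Unexpected peer id: ${peerId}`);
  }
  const [roomId, userId, target, connId] = parts;
  return { roomId, userId, target, connId };
}

// Mirrors `isRoom = peer[2] === '0'` in the ontrack handler
function isPublishingConnection(peerId: string): boolean {
  return parsePeerId(peerId).target === '0';
}

// Id of the target's own connection (target '0'), where its tracks are stored
function publisherPeerId(roomId: string, target: string, streamConnId: string): string {
  return makePeerId({ roomId, userId: target, target: '0', connId: streamConnId });
}
```

Keeping id construction and parsing in one place makes it harder for the `ontrack` check and the `addTracks` lookup to drift apart.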

This is done at line 141:

```ts
this.peerConnectionsServer[peerId]!.onsignalingstatechange =
  function handleSignalingStateChangeEvent() {
    if (!core.peerConnectionsServer[peerId]) {
      log('warn', 'On signalling state change without peer connection', { peerId });
      return;
    }
    const state = peerConnectionsServer[peerId].signalingState;
    log('log', 'On connection state change', { peerId, state, target });
    // A guest connection (target != 0) received an offer: attach the target's stored tracks
    if (state === 'have-remote-offer' && target.toString() !== '0') {
      addTracks({ roomId, userId, target, connId }, () => {
        //
      });
    }
    log(
      'info',
      '! WebRTC signaling state changed to:',
      core.peerConnectionsServer[peerId]!.signalingState
    );
    switch (core.peerConnectionsServer[peerId]!.signalingState) {
      case 'closed':
        core.onClosedCall({ roomId, userId, target, connId });
        break;
      default:
    }
  };
```

I have also applied your recommendation in the `addTracks` method, but that did not work either. I noticed that `track.ssrc` is always the same for each kind of track. Perhaps I need to somehow refresh the keyframes of the tracks stored in memory? I don't fully understand how `tranceiver.receiver.sendRtcpPLI(track.ssrc)` works, so I have described my application logic above in the hope that you can point me in the right direction.

```ts
public addTracks: RTCInterface['addTracks'] = ({ roomId, connId, userId, target }) => {
  const _connId = this.getStreamConnId(target);
  const _connId1 = this.getPeerConnId(userId, target);
  // Connection of the viewer (userId) that should receive target's tracks
  const peerId = this.getPeerId(roomId, userId, target, _connId1);
  // Target's own publishing connection (target = 0), whose tracks are stored in this.streams
  const _peerId = this.getPeerId(roomId, target, 0, _connId);
  const tracks = this.streams[_peerId];
  const streams = Object.keys(this.streams);
  const opts = {
    roomId,
    userId,
    target,
    connId,
    _peerId,
    peerId,
    tracksL: tracks?.length,
    tracks: tracks?.map((item) => item.kind),
    peers: Object.keys(this.peerConnectionsServer),
    ssL: streams.length,
    ss: streams,
    cS: this.peerConnectionsServer[peerId]?.connectionState,
    sS: this.peerConnectionsServer[peerId]?.signalingState,
    iS: this.peerConnectionsServer[peerId]?.iceConnectionState,
  };
  if (!tracks || tracks.length === 0) {
    log('warn', 'Skiping add track', opts);
    return;
  }
  if (this.peerConnectionsServer[peerId]) {
    log('warn', 'Add tracks', opts);
    tracks.forEach((track) => {
      this.peerConnectionsServer[peerId]!.addTrack(track);
      // it is also not working
      // (note: this transceiver belongs to the viewer's connection `peerId`,
      // not to the `_peerId` connection on which `track` was received)
      const tranceiver = this.peerConnectionsServer[peerId]?.transceivers.find(
        (item) => item.kind === track.kind
      );
      if (tranceiver) {
        tranceiver.receiver.sendRtcpPLI(track.ssrc);
      } else {
        log('warn', 'Tranciever not found', { ...opts });
      }
    });
  } else {
    log('error', 'Can not add tracks', { opts });
  }
};
```

Example of the `addTracks` log:

```
[0] [1] warn Add tracks {
[0] [1] roomId: '1660736582336',
[0] [1] userId: '1660740284184',
[0] [1] target: '1',
[0] [1] connId: '80bf1be8-2771-410b-b5f5-c68213ddf8b1',
// peerId for this.streams[_peerId]
[0] [1] _peerId: '1660736582336_1_0_2f4d889f-2f7e-4c70-8659-2b72d83667f9',
// peerId for current peer connection
[0] [1] peerId: '1660736582336_1660740284184_1_80bf1be8-2771-410b-b5f5-c68213ddf8b1',
[0] [1] tracksL: 2,
[0] [1] tracks: [ 'audio', 'video' ],
[0] [1] peers: [
[0] [1] '1660736582336_1_0_2f4d889f-2f7e-4c70-8659-2b72d83667f9',
[0] [1] '1660736582336_1660740284184_0_80bf1be8-2771-410b-b5f5-c68213ddf8b1',
[0] [1] '1660736582336_1_1660740284184_80bf1be8-2771-410b-b5f5-c68213ddf8b1',
[0] [1] '1660736582336_1660740284184_1_80bf1be8-2771-410b-b5f5-c68213ddf8b1'
[0] [1] ],
[0] [1] ssL: 2,
[0] [1] ss: [
[0] [1] '1660736582336_1_0_2f4d889f-2f7e-4c70-8659-2b72d83667f9',
[0] [1] '1660736582336_1660740284184_0_80bf1be8-2771-410b-b5f5-c68213ddf8b1'
[0] [1] ],
[0] [1] cS: 'new',
[0] [1] sS: 'have-remote-offer',
[0] [1] iS: 'new'
[0] [1] }
```
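On how `sendRtcpPLI(track.ssrc)` works: a Picture Loss Indication (PLI) is an RTCP payload-specific feedback message defined in RFC 4585 (section 6.3.1). A receiver sends it to the *sender* of the given media SSRC to request a new keyframe; a viewer that starts receiving a video mid-stream generally cannot render it until a keyframe arrives. Because the message goes to the remote peer of the connection it is sent on, it only reaches the publisher when sent on the connection that receives the track, which is likely why calling it from `addTracks`, on the viewer's connection, had no visible effect. The sketch below only assembles the 12-byte PLI to show what goes on the wire; `buildPliPacket` and `toHex` are hypothetical helpers, not werift's implementation.

```typescript
// Builds an RTCP Picture Loss Indication packet (RFC 4585, section 6.3.1).
// Layout (12 bytes, big-endian):
//   byte 0     : V=2 (2 bits) | P=0 (1 bit) | FMT=1 (5 bits)  -> 0x81
//   byte 1     : PT=206, payload-specific feedback (PSFB)    -> 0xce
//   bytes 2-3  : length in 32-bit words minus one            -> 2
//   bytes 4-7  : SSRC of the packet sender (the media receiver)
//   bytes 8-11 : SSRC of the media source asked for a keyframe
// A PLI carries no feedback control information (FCI).
function buildPliPacket(senderSsrc: number, mediaSsrc: number): Uint8Array {
  const buf = new ArrayBuffer(12);
  const view = new DataView(buf);
  const version = 2;
  const fmt = 1; // FMT 1 = PLI
  view.setUint8(0, (version << 6) | fmt);
  view.setUint8(1, 206);
  view.setUint16(2, 12 / 4 - 1);
  view.setUint32(4, senderSsrc >>> 0); // force unsigned 32-bit
  view.setUint32(8, mediaSsrc >>> 0);
  return new Uint8Array(buf);
}

function toHex(bytes: Uint8Array): string {
  return Array.from(bytes, (b) => b.toString(16).padStart(2, '0')).join('');
}
```

For example, `buildPliPacket(1, 0x12345678)` produces the bytes `81 ce 00 02 00 00 00 01 12 34 56 78`.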

I fixed the TURN error myself: coturn was configured incorrectly, and the client was trying to connect to the wrong TURN address. This did not solve the main problem, but it shows that on reconnection the client tries to set up the connection through the TURN server, whereas during the initial connection (where video plays normally) there are no attempts to connect through TURN. This may also help you find the cause of the problem.
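Since the TURN failure came down to coturn settings and a wrong TURN address, it can help to validate the environment before building `iceServers`, so that an obvious misconfiguration fails at startup instead of surfacing as a `TransactionTimeout` deep inside ICE gathering. A minimal sketch, assuming the variable names from the sample `.env` above; `IceServer`, `buildIceServers`, and `required` are hypothetical helpers, not part of werift. The result has the `{ urls, username, credential }` shape used in the config above.

```typescript
// Hypothetical shape matching the iceServers entries in the config above.
interface IceServer {
  urls: string;
  username?: string;
  credential?: string;
}

type Env = Record<string, string | undefined>;

// Returns a trimmed, non-empty env value or throws naming the missing variable.
function required(env: Env, name: string): string {
  const value = env[name]?.trim();
  if (!value) {
    throw new Error(`Missing environment variable ${name}`);
  }
  return value;
}

// Builds iceServers from env vars, failing fast on obvious mistakes.
function buildIceServers(env: Env): IceServer[] {
  const stun = required(env, 'STUN_SERVER');
  const turn = required(env, 'TURN_SERVER');
  if (!stun.startsWith('stun:') && !stun.startsWith('stuns:')) {
    throw new Error(`STUN_SERVER must start with "stun:" or "stuns:": ${stun}`);
  }
  if (!turn.startsWith('turn:') && !turn.startsWith('turns:')) {
    throw new Error(`TURN_SERVER must start with "turn:" or "turns:": ${turn}`);
  }
  return [
    { urls: stun },
    {
      urls: turn,
      // TURN requires credentials; STUN does not.
      username: required(env, 'TURN_SERVER_USER'),
      credential: required(env, 'TURN_SERVER_PASSWORD'),
    },
  ];
}
```

On the server this would be called as `buildIceServers(process.env)`. It cannot catch a TURN address that is well-formed but wrong; that still has to be checked against the coturn configuration.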


Your recommendation worked for me. Alongside the `ontrack` handler, I added this listener:

```ts
// For each remote transceiver on this connection, request a keyframe (PLI)
// from the publisher every second.
this.peerConnectionsServer[peerId]!.onRemoteTransceiverAdded.subscribe(async (transceiver) => {
  // Wait until this transceiver's track arrives
  const [track] = await transceiver.onTrack.asPromise();
  setInterval(() => {
    transceiver.receiver.sendRtcpPLI(track.ssrc);
  }, 1000);
});
```

The reconnection problem is solved. Thank you so much, and all the best to you!
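The listener above starts an interval per remote transceiver, and nothing in the snippet ever clears it, so the timers keep firing after a client disconnects; reconnects are exactly the scenario being exercised here. A minimal sketch of a per-SSRC keyframe requester with cleanup, assuming `sendPli` wraps something like `transceiver.receiver.sendRtcpPLI`; `KeyframeRequester` is a hypothetical helper, not part of werift. It also sends one PLI immediately on start, so a new viewer does not wait a full interval for its first keyframe; that is a design choice, not something tested in this thread.

```typescript
// Hypothetical helper: periodically asks the publisher for a keyframe for
// each SSRC and lets the caller stop the timers when the connection closes.
type SendPli = (mediaSsrc: number) => void;

class KeyframeRequester {
  private readonly timers = new Map<number, ReturnType<typeof setInterval>>();
  private readonly sendPli: SendPli;
  private readonly intervalMs: number;

  constructor(sendPli: SendPli, intervalMs = 1000) {
    this.sendPli = sendPli;
    this.intervalMs = intervalMs;
  }

  // Starts requests for one SSRC; calling it again for the same SSRC is a no-op.
  start(mediaSsrc: number): void {
    if (this.timers.has(mediaSsrc)) return;
    this.sendPli(mediaSsrc); // first keyframe request right away
    const timer = setInterval(() => this.sendPli(mediaSsrc), this.intervalMs);
    this.timers.set(mediaSsrc, timer);
  }

  // Stops requests for one SSRC, if any are running.
  stop(mediaSsrc: number): void {
    const timer = this.timers.get(mediaSsrc);
    if (timer !== undefined) {
      clearInterval(timer);
      this.timers.delete(mediaSsrc);
    }
  }

  // Intended to be called when the peer connection closes.
  stopAll(): void {
    this.timers.forEach((timer) => clearInterval(timer));
    this.timers.clear();
  }

  get activeCount(): number {
    return this.timers.size;
  }
}
```

Wired into the listener above, this would roughly mean calling `start(track.ssrc)` once the track arrives and `stopAll()` in the `'closed'` branch of the signaling-state handler. The interval is a trade-off: more frequent keyframes make joins and reconnects faster but cost the publisher extra bandwidth.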