📘Documentation | 🛠️Installation | 🚀Model Zoo | 👀Awesome Mixup | 🔍Awesome MIM | 🆕News

The main branch works with PyTorch 1.8 (required by some self-supervised methods) or higher (we recommend PyTorch 1.12). You can still use PyTorch 1.6 for supervised classification methods.

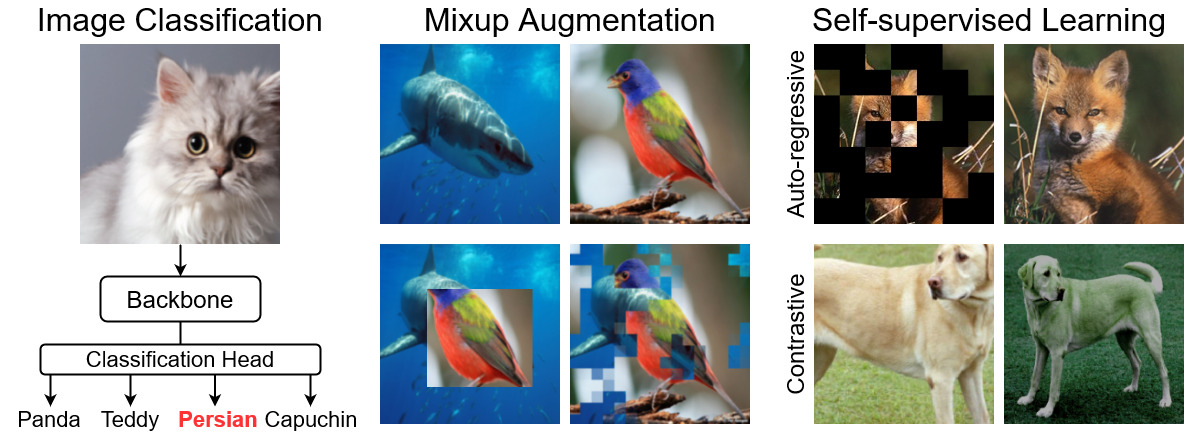

OpenMixup is an open-source, PyTorch-based toolbox for supervised, self-supervised, and semi-supervised visual representation learning, with a particular focus on mixup-related methods.

Major Features

- Modular Design. OpenMixup follows a code architecture similar to that of OpenMMLab projects, decomposing the framework into components so that users can easily build a customized model by combining different modules. OpenMixup is also portable to OpenMMLab projects (e.g., MMSelfSup).

- All in One. OpenMixup provides popular backbones, mixup methods, and semi-supervised and self-supervised algorithms. Users can perform image classification (CNN & Transformer) and self-supervised pre-training (contrastive and autoregressive) under the same framework.

- Standard Benchmarks. OpenMixup supports standard benchmarks for image classification, mixup classification, and self-supervised evaluation, and provides smooth evaluation on downstream tasks with open-source projects (e.g., object detection and segmentation with Detectron2 and MMSegmentation).

- State-of-the-art Methods. OpenMixup provides awesome lists of popular mixup and self-supervised methods, and is being updated to support more state-of-the-art image classification and self-supervised methods.
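The modular design described above, where a model is assembled from named components listed in a config, can be illustrated with a minimal registry sketch. This is not the actual OpenMixup or OpenMMLab API: the `Registry` class, its `register` and `build` methods, and the components `ToyBackbone` and `ToyHead` are hypothetical names chosen only for illustration.

```python
class Registry:
    """Hypothetical minimal registry mapping string names to classes."""

    def __init__(self, name):
        self.name = name
        self._modules = {}

    def register(self, cls):
        # Used as a class decorator: stores the class under its own name.
        self._modules[cls.__name__] = cls
        return cls

    def build(self, cfg):
        # Copy first so the caller's config dict is not mutated.
        args = dict(cfg)
        cls = self._modules[args.pop("type")]
        return cls(**args)


BACKBONES = Registry("backbone")
HEADS = Registry("head")


@BACKBONES.register
class ToyBackbone:
    def __init__(self, depth):
        self.depth = depth


@HEADS.register
class ToyHead:
    def __init__(self, num_classes):
        self.num_classes = num_classes


# A config names the components to combine and the arguments for each one.
model_cfg = dict(
    backbone=dict(type="ToyBackbone", depth=50),
    head=dict(type="ToyHead", num_classes=1000),
)

backbone = BACKBONES.build(model_cfg["backbone"])
head = HEADS.build(model_cfg["head"])
```

Swapping a component then only requires changing the `type` string (and its arguments) in the config, which is the main appeal of this style of design.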

[2022-08-19] Weights and logs for mixup benchmarks are released.

[2022-07-30] OpenMixup v0.2.5 is released (issue #10).

Here are quick installation steps for development:

conda create -n openmixup python=3.8 pytorch=1.12 cudatoolkit=11.3 torchvision -c pytorch -y

conda activate openmixup

pip install openmim

mim install mmcv-full

git clone https://github.com/Westlake-AI/openmixup.git

cd openmixup

python setup.py develop

Please refer to install.md for more detailed installation and dataset preparation.

Please see get_started.md for the basic usage of OpenMixup. You can start multi-GPU training with a given CONFIG_FILE using the following script, for example:

bash tools/dist_train.sh ${CONFIG_FILE} ${GPUS} [optional arguments]

Then, please see the Tutorials for more technical details:

- config files

- add new dataset

- data pipeline

- add new modules

- customize schedules

- customize runtime

- benchmarks

Please refer to the Model Zoo for various backbones, mixup methods, and self-supervised algorithms. We also provide paper lists in Awesome Mixup for your reference. Checkpoints and training logs will be updated soon!

- Backbone architectures for supervised image classification on ImageNet.

Currently supported backbones

- VGG (ICLR'2015) [config]

- ResNet (CVPR'2016) [config]

- ResNeXt (CVPR'2017) [config]

- SE-ResNet (CVPR'2018) [config]

- SE-ResNeXt (CVPR'2018) [config]

- ShuffleNetV2 (ECCV'2018) [config]

- MobileNetV2 (CVPR'2018) [config]

- MobileNetV3 (ICCV'2019)

- EfficientNet (ICML'2019) [config]

- Swin-Transformer (ICCV'2021) [config]

- RepVGG (CVPR'2021)

- Vision-Transformer (ICLR'2021) [config]

- MLP-Mixer (NIPS'2021) [config]

- DeiT (ICML'2021) [config]

- ConvMixer (Openreview'2021) [config]

- UniFormer (ICLR'2022) [config]

- PoolFormer (CVPR'2022) [config]

- ConvNeXt (CVPR'2022) [config]

- VAN (ArXiv'2022) [config]

- LITv2 (ArXiv'2022) [config]

- Mixup methods for supervised image classification.

Currently supported mixup methods

- Mixup (ICLR'2018)

- CutMix (ICCV'2019)

- ManifoldMix (ICML'2019)

- FMix (ArXiv'2020)

- AttentiveMix (ICASSP'2020)

- SmoothMix (CVPRW'2020)

- SaliencyMix (ICLR'2021)

- PuzzleMix (ICML'2020)

- GridMix (Pattern Recognition'2021)

- ResizeMix (ArXiv'2020)

- AutoMix (ECCV'2022) [config]

- SAMix (ArXiv'2021) [config]
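For orientation, the simplest entry in the list above, vanilla Mixup, can be sketched in a few lines of plain Python. This is an illustrative sketch of the general Mixup formulation (a convex combination of two samples and their one-hot labels, with the weight drawn from a Beta(alpha, alpha) distribution), not OpenMixup's implementation. The function name `mixup_pair` and its parameters are hypothetical.

```python
import random


def mixup_pair(x_a, y_a, x_b, y_b, alpha=1.0, rng=random):
    """Mix two (input, one-hot label) pairs with a Beta(alpha, alpha) weight.

    Inputs and labels are flat lists of floats here; a real pipeline would
    apply the same arithmetic to image tensors (an assumption of this sketch).
    """
    lam = rng.betavariate(alpha, alpha)  # mixing ratio in [0, 1]
    x_mix = [lam * a + (1.0 - lam) * b for a, b in zip(x_a, x_b)]
    y_mix = [lam * a + (1.0 - lam) * b for a, b in zip(y_a, y_b)]
    return x_mix, y_mix, lam


# Usage: mix a sample of class 0 with a sample of class 1.
rng = random.Random(0)  # seeded generator for reproducibility
x_mix, y_mix, lam = mixup_pair(
    [0.0, 1.0], [1.0, 0.0],  # sample A and its one-hot label
    [1.0, 0.0], [0.0, 1.0],  # sample B and its one-hot label
    alpha=1.0,
    rng=rng,
)
```

The mixed label stays a valid probability vector because both inputs and labels share the same ratio `lam`. The other listed methods mainly differ in how the inputs are combined, for example by pasting patches rather than blending pixels.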

Currently supported datasets for mixups

- ImageNet [download] [config]

- CIFAR-10 [download] [config]

- CIFAR-100 [download] [config]

- Tiny-ImageNet [download] [config]

- CUB-200-2011 [download] [config]

- FGVC-Aircraft [download] [config]

- StanfordCars [download]

- Places205 [download] [config]

- iNaturalist-2017 [download] [config]

- iNaturalist-2018 [download] [config]

- Self-supervised algorithms for visual representation learning.

Currently supported self-supervised algorithms

- Relative Location (ICCV'2015) [config]

- Rotation Prediction (ICLR'2018) [config]

- DeepCluster (ECCV'2018) [config]

- NPID (CVPR'2018) [config]

- ODC (CVPR'2020) [config]

- MoCoV1 (CVPR'2020) [config]

- SimCLR (ICML'2020) [config]

- MoCoV2 (ArXiv'2020) [config]

- BYOL (NIPS'2020) [config]

- SwAV (NIPS'2020) [config]

- DenseCL (CVPR'2021) [config]

- SimSiam (CVPR'2021) [config]

- Barlow Twins (ICML'2021) [config]

- MoCoV3 (ICCV'2021) [config]

- MAE (CVPR'2022) [config]

- SimMIM (CVPR'2022) [config]

- CAE (ArXiv'2022)

- A2MIM (ArXiv'2022) [config]

Please refer to changelog.md for details and release history.

This project is released under the Apache 2.0 license.

- OpenMixup is an open-source project for mixup methods created by researchers in CAIRI AI Lab. We encourage researchers interested in visual representation learning and mixup methods to contribute to OpenMixup!

- This repo borrows the architecture design and part of the code from MMSelfSup and MMClassification.

If you find this project useful in your research, please consider citing our repo:

@misc{2022openmixup,

title={{OpenMixup}: Open Mixup Toolbox and Benchmark for Visual Representation Learning},

author={Li, Siyuan and Liu, Zicheng and Wu, Di and Stan Z. Li},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/Westlake-AI/openmixup}},

year={2022}

}

For now, the direct contributors include: Siyuan Li (@Lupin1998), Zicheng Liu (@pone7), Zedong Wang (@Jacky1128), and Di Wu (@wudi-bu). We thank contributors from MMSelfSup and MMClassification.

This repo is currently maintained by Siyuan Li (lisiyuan@westlake.edu.cn) and Zicheng Liu (liuzicheng@westlake.edu.cn).