This project moves data from a relational database management system (RDBMS) to the Hadoop Distributed File System (HDFS). It uses the following technology stack.

- PostgreSQL

- Apache Sqoop

- Apache Hive

- Apache Spark

- To create a modified `dockerfile` based on your use cases.

- To know how to set up the components with `docker` in both traditional and distributed environments.

- To craft a data pipeline for moving data from the traditional system to the distributed system.

- To learn how to communicate between containers in the same environment.

- To build data quality checkers in the intermediate step of the data pipeline.

- To be familiar with the basic usage of `sqoop`, `hive`, and `spark`.

- Docker desktop

- Docker compose

- `linux/amd64`

I provide a docker-compose file so that you can run the whole application with the following command.

docker-compose up -d

Then you can access the Airflow webserver UI on port 8080.

Feel free to switch on the DAG toggle for `hands_on_test`.

The DAG sets `start_date` to `days_ago(1)` and is scheduled to run daily.

Once the pipeline has run to completion, you can verify the results on each component as follows.

# show table in database

docker exec postgres-db psql -U postgres -d lineman_wongnai -c \\dt

# describe table

docker exec postgres-db psql -U postgres -d lineman_wongnai -c "
SELECT table_name, column_name, data_type
FROM information_schema.columns
WHERE table_name = '<<TARGET TABLE>>';
"

# change <<TARGET TABLE>> to your table name e.g., 'order_detail', 'restaurant_detail'

# sample data

docker exec postgres-db psql -U postgres -d lineman_wongnai -c "SELECT * FROM <<TARGET TABLE>> LIMIT 5;"

# change <<TARGET TABLE>> to your table name e.g., 'order_detail', 'restaurant_detail'

# show tables

docker exec hive-server beeline -u jdbc:hive2://localhost:10000/default -e "SHOW TABLES;"

# describe table

docker exec hive-server beeline -u jdbc:hive2://localhost:10000/default -e "SHOW CREATE TABLE <<TARGET TABLE>>;"

# change <<TARGET TABLE>> to your table name e.g., 'order_detail', 'restaurant_detail'

# sample data

docker exec hive-server beeline -u jdbc:hive2://localhost:10000/default -e "SELECT * FROM <<TARGET TABLE>> LIMIT 5;"

# change <<TARGET TABLE>> to your table name e.g., 'order_detail', 'restaurant_detail'

# check partitioned parquet

docker exec hive-server hdfs dfs -ls /user/spark/transformed_order_detail

docker exec hive-server hdfs dfs -ls /user/spark/transformed_restaurant_detail

# check the source of external table in ./airflow/scripts/hql script.

For the SQL requirements, the CSV files are placed in `./sql_result` when the DAG completes.

After you finish testing, you can shut down the whole application with

docker-compose down -v

- Cannot spin up docker-compose because a port is already in use.

  - Check the host port mapping in the `docker-compose.yaml` file and change it to another open port, e.g., `'10000:10000'` to `'10001:10000'`.

- If you run this project on the `arm64` architecture, the `import_sqoop` task will fail. Please switch to the `linux/amd64` architecture.
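Before editing the port mapping, it can help to confirm which host port is actually taken. The snippet below is a minimal sketch, not part of the project: `port_in_use` is a hypothetical helper, and the two ports probed are simply the ones mentioned in this README (8080 for the Airflow UI and 10000 for Hive).

```python
import socket


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """Return True when something is already listening on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        probe.settimeout(1.0)
        # connect_ex returns 0 only when the TCP connection succeeds.
        return probe.connect_ex((host, port)) == 0


# Ports taken from this README; extend the tuple with any other mapped ports.
for candidate in (8080, 10000):
    status = "in use" if port_in_use(candidate) else "free"
    print(f"port {candidate}: {status}")
```

If a port is reported as in use before you start the stack, remap only the host side of that entry in `docker-compose.yaml`.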

- Create two tables in the postgres database with the column types given above.

  - order_detail table using `order_detail.csv`

  - restaurant_detail table using `restaurant_detail.csv`

  - Check the `COPY` command in `airflow/dags/script/sql_queries.py`

- Once we have these two tables in the postgres DB, ETL the same tables to Hive with the same names and corresponding Hive data types using the guidelines below.

  - Both tables should be `external table`s

  - Both tables should use the `parquet` file format

  - restaurant_detail table should be partitioned by a column named `dt` (type string) with a static value `latest`

  - order_detail table should be partitioned by a column named `dt` (type string) extracted from `order_created_timestamp` in the format `YYYYMMDD`

  - Check the `DESCRIBE TABLE` and `SAMPLE DATA` commands in the previous HIVE section.

- After creating the above tables in Hive, create two new tables order_detail_new and restaurant_detail_new with their respective columns and partitions, and add one new column to each table as explained below.

  - `discount_no_null` - replace all the NULL values of the discount column with 0

  - `cooking_bin` - using the `esimated_cooking_time` column and the logic below

    | estimated_cooking_time | cooking_bin |
    | --- | --- |
    | 10-40 | 1 |
    | 41-80 | 2 |
    | 81-120 | 3 |
    | > 120 | 4 |

    - I saw that there is a gap for estimated cooking times below 10 minutes, so I set the default value for anything outside the provided logic to `null`.

  - Check the `SAMPLE DATA` commands in the previous HIVE section. You can edit the query to test a requirement, such as `SELECT COUNT(*) FROM order_detail_new WHERE discount_no_null IS NULL`. The expected result is 0.

- Final column count of each table (including partition column):

  - order_detail = 9

  - restaurant_detail = 7

  - order_detail_new = 10

  - restaurant_detail_new = 8

  - Check the `DESCRIBE TABLE` command in the previous HIVE section.

- SQL requirements & CSV output requirements

  - Get the average discount for each category

    - I'm not sure whether you need the average of the `discount` or the `discount_no_null` column, so I calculate both. The two can lead to different business interpretations.

  - Row count per each cooking_bin

  - Check the results in `./sql_result` for `discount.csv` and `cooking.csv`
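The column rules in the requirements above can be pictured with a small plain-Python sketch. This is not the project's Spark or Hive code: the function names are hypothetical, the timestamp layout is an assumption (adjust `ASSUMED_TIMESTAMP_FORMAT` to the real data), and the sample discounts are made up. The last part shows why averaging `discount` and `discount_no_null` can give different numbers: SQL `AVG` skips NULLs, while the `discount_no_null` column counts each NULL as 0.

```python
from datetime import datetime
from statistics import fmean
from typing import Optional

# Assumption: timestamps look like "2019-06-08 17:31:57"; adjust if they differ.
ASSUMED_TIMESTAMP_FORMAT = "%Y-%m-%d %H:%M:%S"


def order_partition_dt(order_created_timestamp: str) -> str:
    """dt partition for order_detail: the timestamp's date as YYYYMMDD."""
    parsed = datetime.strptime(order_created_timestamp, ASSUMED_TIMESTAMP_FORMAT)
    return parsed.strftime("%Y%m%d")


def discount_no_null(discount: Optional[float]) -> float:
    """Replace a NULL (None) discount with 0."""
    return 0.0 if discount is None else discount


def cooking_bin(esimated_cooking_time: Optional[float]) -> Optional[int]:
    """Map minutes to bins 1-4; missing values or values below 10 stay None."""
    if esimated_cooking_time is None or esimated_cooking_time < 10:
        return None
    if esimated_cooking_time <= 40:
        return 1
    if esimated_cooking_time <= 80:
        return 2
    if esimated_cooking_time <= 120:
        return 3
    return 4


# Made-up discounts for one category; None stands in for SQL NULL.
discounts = [10.0, None, 20.0]

# Averaging `discount` the way SQL AVG does: NULLs are skipped.
avg_discount = fmean([d for d in discounts if d is not None])
# Averaging `discount_no_null`: every NULL counts as a 0 discount.
avg_discount_no_null = fmean([discount_no_null(d) for d in discounts])

print(order_partition_dt("2019-06-08 17:31:57"))  # 20190608
print(cooking_bin(5), cooking_bin(40), cooking_bin(121))  # None 1 4
print(avg_discount, avg_discount_no_null)  # 15.0 10.0
```

The sketch treats a non-integer value such as 40.5 minutes as belonging to the next bin up; the requirement table does not say how such values should be handled, so that choice is an assumption.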

- Use Apache Spark, Apache Sqoop or any other big data frameworks

- Use a scheduler tool to run the pipeline daily. Airflow is preferred

- Include a README file that explains how we can deploy your code

- (bonus) Use Docker or Kubernetes for up-and-running program

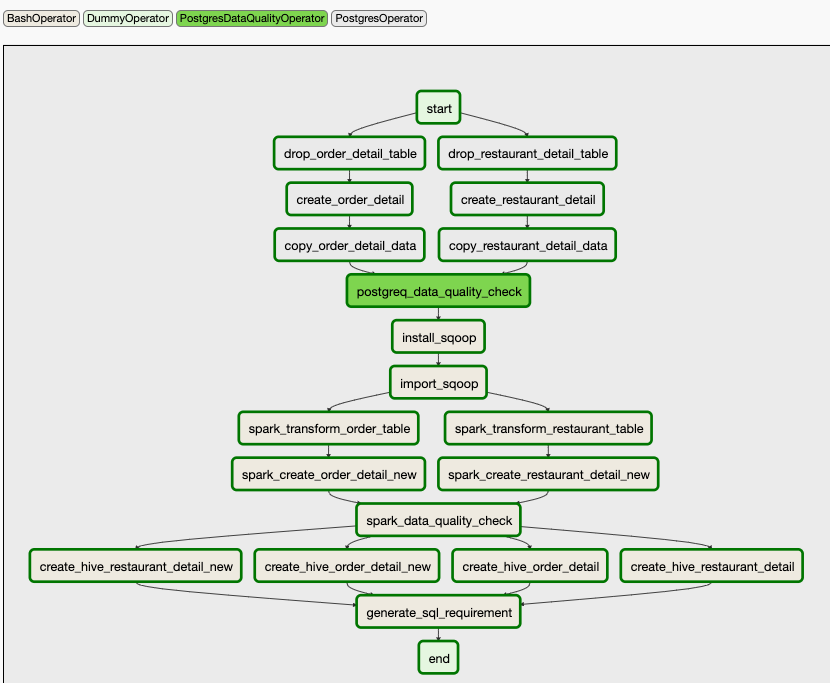

- We orchestrate and schedule this project with `airflow`. It runs on a daily basis. Each task is retried once on failure.

- For the postgres part, we use the `PostgresOperator` to submit the SQL script provided in `airflow/scripts/sql_queries.py` to create the postgres tables.

- We provide a custom `PostgresDataQualityOperator` to check that the number of rows in each table matches the source data in the `./data` folder.

- For the second part of this pipeline, we ingest data from the RDBMS to HDFS with `sqoop`. Unfortunately, to the best of my understanding of the Hadoop ecosystem, I couldn't set up a separate `sqoop` container to isolate this operation. As a workaround, I installed the `sqoop` component in `hive-server` and use the `BashOperator` to ingest the data from a shell script. The data is placed in the `hive-server` HDFS filesystem under the `/user/sqoop/` folder. The source code and required components for sqoop are in `./airflow/othres/sqoop`.

- For the hive and spark components, I had trouble using the `SparkSubmitOperator` and `HiveOperator` from the `airflow` containers. As a workaround, I modified the airflow docker image so that the `docker` command can be used within the container. You can find the script to build that image in the `dockerfile` and `requirements.txt` files. I have already pushed the modified image to Docker Hub, so you don't need to build it yourself.

- With the docker command available within the airflow container, I run the spark and hive steps through the `docker exec` command, using the `BashOperator` to run those scripts. The spark source code is in `./airflow/dags/scripts/spark`, and the hive source code is in `./airflow/dags/scripts/hive`.

- For both `Postgres` and `Hive`, I use the `drop-create` style. For the spark output, the default mode is `overwrite`.

- For the SQL requirements, the CSV files are placed in the `./sql_result` folder when the DAG completes.
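The idea behind the row-count check can be sketched in a few lines of plain Python. This is only an illustration, not the actual `PostgresDataQualityOperator` code: `count_csv_rows` and `check_row_count` are hypothetical helpers, the sample CSV content is made up, and the sketch assumes the source files have a header row. In the real pipeline the table count would come from a `SELECT COUNT(*)` against Postgres.

```python
import csv
import io


def count_csv_rows(csv_file, has_header: bool = True) -> int:
    """Count data rows in an open CSV file, optionally skipping a header row."""
    total = sum(1 for _ in csv.reader(csv_file))
    return max(total - 1, 0) if has_header else total


def check_row_count(table: str, table_rows: int, source_rows: int) -> None:
    """Raise ValueError when the loaded table does not match its source file."""
    if table_rows != source_rows:
        raise ValueError(
            f"{table}: expected {source_rows} rows from source, found {table_rows}"
        )


# Made-up CSV standing in for a file from ./data (header plus two data rows).
sample = io.StringIO("order_id,discount\n1,10\n2,\n")
source_rows = count_csv_rows(sample)

# table_rows=2 stands in for the result of SELECT COUNT(*) on the loaded table.
check_row_count("order_detail", table_rows=2, source_rows=source_rows)
print("row counts match")
```

Raising an exception on a mismatch fits how Airflow treats tasks: a task that raises is marked as failed, so downstream steps do not run on incomplete data.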

- Source code

- Docker, docker-compose, kubernetes files if possible.

- README of how to test / run