This is the PyTorch implementation of XoFTR: Cross-modal Feature Matching Transformer, a CVPR 2024 Image Matching Workshop paper.

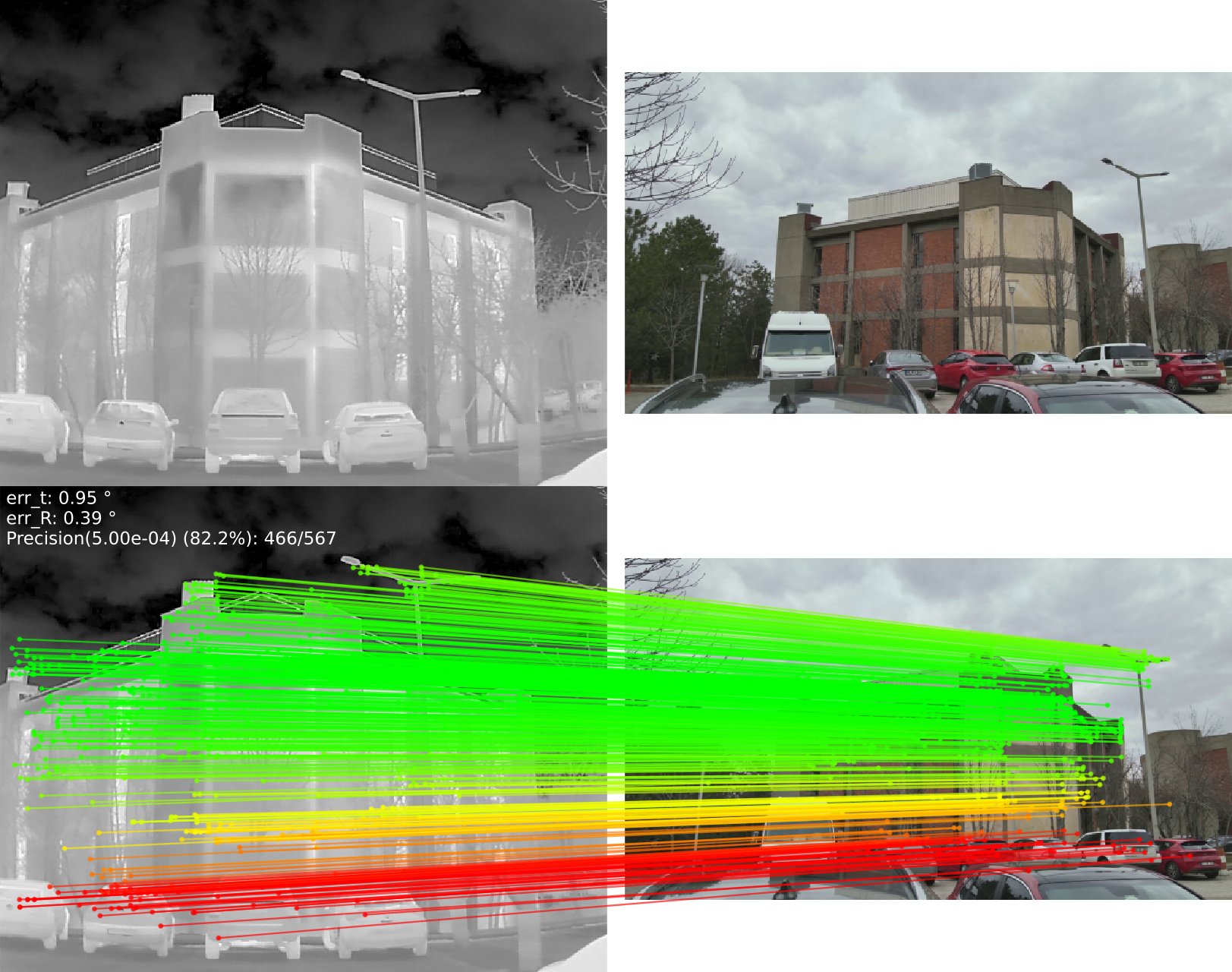

XoFTR is a cross-modal, cross-view method for local feature matching between thermal infrared (TIR) and visible images.

To run XoFTR with custom image pairs without configuring your own GPU environment, you can use the Colab demo.

To set up a local conda environment instead:

conda env create -f environment.yaml

conda activate xoftr

Download links for:

- Pretrained model weights: two versions are available, trained at 640 and 840 resolutions.

- METU-VisTIR dataset

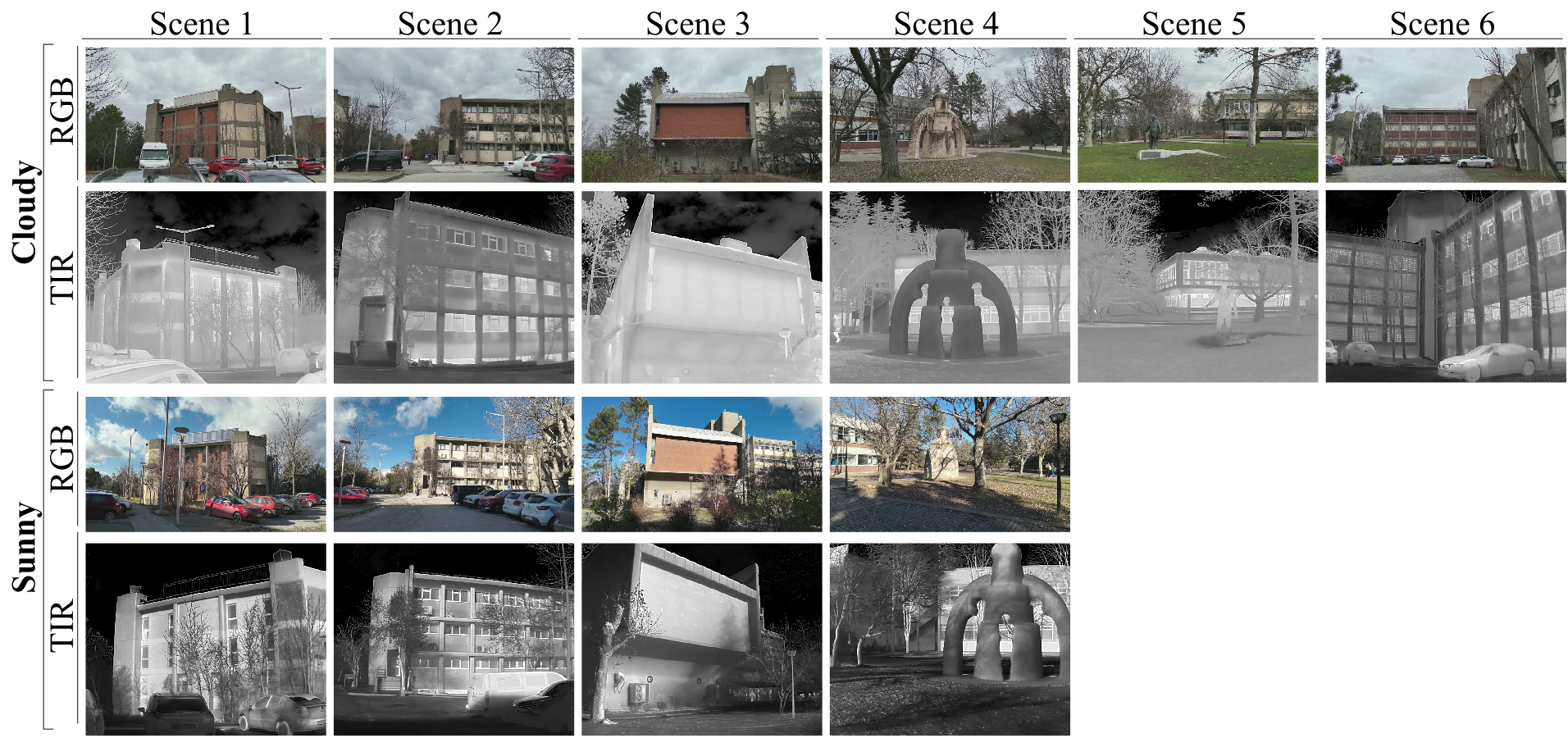

This dataset includes thermal and visible images captured across six diverse scenes with ground-truth camera poses. Four of the scenes contain images captured under both cloudy and sunny conditions, while the remaining two contain only cloudy conditions. Because the cameras are auto-focus, the ground-truth camera parameters may contain slight imperfections. For more information about the dataset, please refer to our paper.

License of the dataset:

The METU-VisTIR dataset is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0).

The dataset is organized into folders according to scenarios. The organization format is as follows:

METU-VisTIR/
├── index/
│   ├── scene_info_test/
│   │   ├── cloudy_cloudy_scene_1.npz  # scene info with test pairs
│   │   └── ...
│   ├── scene_info_val/
│   │   ├── cloudy_cloudy_scene_1.npz  # scene info with val pairs
│   │   └── ...
│   └── val_test_list/
│       ├── test_list.txt              # test scenes list
│       └── val_list.txt               # val scenes list
├── cloudy/                            # cloudy scenes
│   ├── scene_1/
│   │   ├── thermal/
│   │   │   └── images/                # thermal images
│   │   └── visible/
│   │       └── images/                # visible images
│   └── ...
└── sunny/                             # sunny scenes
    └── ...
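After unzipping, a quick way to confirm that the folder layout above is complete is to count the files in each images/ folder. The sketch below assumes only the layout shown in the tree; the function name `count_images` and the example root path are illustrative and not part of the XoFTR code base.

```python
from pathlib import Path


def count_images(root):
    """Count files under <condition>/<scene>/<modality>/images/ for each scene."""
    root = Path(root)
    counts = {}
    for condition in ("cloudy", "sunny"):
        cond_dir = root / condition
        if not cond_dir.is_dir():
            continue  # condition folder missing, e.g. a partial download
        for scene_dir in sorted(p for p in cond_dir.iterdir() if p.is_dir()):
            for modality in ("thermal", "visible"):
                img_dir = scene_dir / modality / "images"
                if img_dir.is_dir():
                    n = sum(1 for f in img_dir.iterdir() if f.is_file())
                else:
                    n = 0  # modality folder missing for this scene
                counts[(condition, scene_dir.name, modality)] = n
    return counts


if __name__ == "__main__":
    # Hypothetical location; point this at wherever the dataset was unzipped.
    for (condition, scene, modality), n in count_images("data/METU_VisTIR").items():
        print(condition, scene, modality, n)
```

A scene that reports zero files for one modality usually points to an incomplete unzip rather than a problem with the dataset itself.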

The cloudy_cloudy_scene_*.npz and cloudy_sunny_scene_*.npz files contain the ground-truth camera poses and the image pairs.
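The key names inside these .npz files are not listed here, so the simplest way to see what a scene-info file holds is to load it with NumPy and print each stored array's name, dtype and shape. This is a minimal sketch: `summarize_npz` is a hypothetical helper, the example path is a guess based on the layout above, and `allow_pickle=True` is an assumption that the files may store object arrays.

```python
from pathlib import Path

import numpy as np


def summarize_npz(path):
    """Return {key: (dtype, shape)} for every array stored in an .npz archive."""
    # allow_pickle=True is an assumption (object arrays may be present);
    # only enable it for files from a source you trust.
    with np.load(path, allow_pickle=True) as data:
        summary = {}
        for key in data.files:
            arr = data[key]
            summary[key] = (str(arr.dtype), arr.shape)
        return summary


if __name__ == "__main__":
    # Hypothetical path; adjust to where the dataset lives on your machine.
    example = Path("data/METU_VisTIR/index/scene_info_test/cloudy_cloudy_scene_1.npz")
    if example.is_file():
        for key, (dtype, shape) in summarize_npz(example).items():
            print(key, dtype, shape)
```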

A demo notebook for XoFTR on a single pair of images is given in notebooks/xoftr_demo.ipynb.

First download the METU-VisTIR dataset and unzip the required files. Then create a symlink to the dataset inside the data folder.

unzip downloaded-file.zip

# set up symlinks

ln -s /path/to/METU_VisTIR/ /path/to/XoFTR/data/

conda activate xoftr

python test_relative_pose.py xoftr --ckpt weights/weights_xoftr_640.ckpt

# with visualization

python test_relative_pose.py xoftr --ckpt weights/weights_xoftr_640.ckpt --save_figs

The results and figures are saved to results_relative_pose/.
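Before launching the evaluation, a small pre-flight check can catch a missing checkpoint or a dangling dataset symlink early. The sketch below is not part of the repository: `preflight` is a hypothetical helper, and its default paths simply mirror the checkpoint and data locations used in the commands above.

```python
from pathlib import Path


def preflight(repo_root, ckpt="weights/weights_xoftr_640.ckpt", data_dir="data"):
    """Return a list of problems found; an empty list means all checks passed."""
    repo_root = Path(repo_root)
    problems = []

    ckpt_path = repo_root / ckpt
    if not ckpt_path.is_file():
        problems.append(f"checkpoint not found: {ckpt_path}")

    data_path = repo_root / data_dir
    if not data_path.is_dir():
        problems.append(f"data folder not found: {data_path}")
    else:
        for entry in data_path.iterdir():
            # exists() follows symlinks, so a link with a missing target fails it.
            if entry.is_symlink() and not entry.exists():
                problems.append(f"dangling symlink: {entry}")
    return problems


if __name__ == "__main__":
    # Run from the repository root; prints nothing when everything is in place.
    for problem in preflight("."):
        print(problem)
```

Returning a list instead of raising on the first failure lets all problems be reported in a single run.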

See Training XoFTR for more details.

If you find this code useful for your research, please use the following BibTeX entry.

@inproceedings{tuzcuouglu2024xoftr,
  title={XoFTR: Cross-modal Feature Matching Transformer},
  author={Tuzcuo{\u{g}}lu, {\"O}nder and K{\"o}ksal, Aybora and Sofu, Bu{\u{g}}ra and Kalkan, Sinan and Alatan, A Aydin},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={4275--4286},
  year={2024}
}

This code is derived from LoFTR. We are grateful to the authors for their contribution of the source code.