Mehmet Özgür Türkoğlu, Eric Brachmann, Konrad Schindler, Gabriel J. Brostow, Áron Monszpart - 3DV 2021.

[Paper on arXiv] [Paper on IEEE Xplore] [Presentation (long)] [Presentation (short)] [Poster]

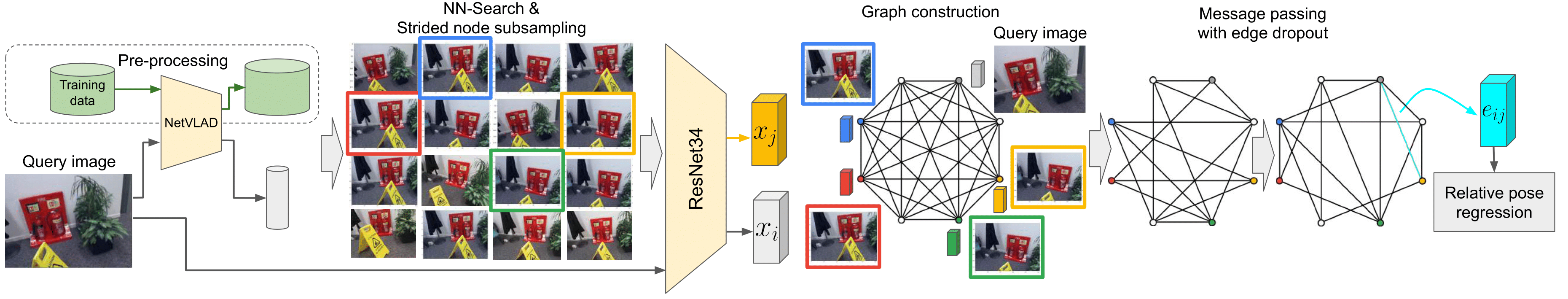

Relative pose regression. We combine the efficiency of image retrieval methods with the ability of graph neural networks to selectively and iteratively refine estimates, in order to solve the challenging relative pose regression problem. Given a query image, we first find similar images using NetVLAD, a differentiable image retrieval method. We preserve the diversity of neighbors by strided subsampling before building a fully connected Graph Neural Network (GNN). Node representations $x_i$ are initialized from ResNet34 and are combined by MLPs into edge features $e_{ij}$. Finally, the relative pose regression layer maps the refined edge representations to relative poses between image pairs. Edge dropout is applied only at training time.

| Scene | Predicted poses (trained on 7 training sets) |
|---|---|
| Chess | `relpose_gnn__multi_39_chess_0.09_2.9.npz` |
| Fire | `relpose_gnn__multi_39_fire_0.23_7.4.npz` |
| Heads | `relpose_gnn__multi_39_heads_0.13_8.5.npz` |
| Office | `relpose_gnn__multi_39_office_0.15_4.1.npz` |
| Pumpkin | `relpose_gnn__multi_39_pumpkin_0.17_3.3.npz` |
| Kitchen | `relpose_gnn__multi_39_redkitchen_0.20_3.6.npz` |
| Stairs | `relpose_gnn__multi_39_stairs_0.23_6.4.npz` |
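The predicted-pose files are NumPy `.npz` archives, i.e. zip files holding one `.npy` file per array. The array names are not documented here, so a quick first step after downloading one is to list its contents. A minimal sketch, using a hypothetical helper name and only Python's standard `zipfile` module so that NumPy is not required:

```shell
# Hypothetical helper (not part of the repository): list the arrays stored in
# an .npz file. An .npz is a zip archive of .npy files, so stripping the
# ".npy" suffix from each member name gives the array names.
list_npz_arrays() {
  python3 -c 'import sys, zipfile; print("\n".join(n[:-4] if n.endswith(".npy") else n for n in zipfile.ZipFile(sys.argv[1]).namelist()))' "$1"
}
```

For example, `list_npz_arrays relpose_gnn__multi_39_chess_0.09_2.9.npz`. With NumPy installed, `numpy.load(path).files` returns the same names and gives access to the arrays themselves.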

If you find our work useful or interesting, please cite our paper:

```bibtex
@inproceedings{turkoglu2021visual,
  title={{Visual Camera Re-Localization Using Graph Neural Networks and Relative Pose Supervision}},
  author={T{\"{u}}rko\u{g}lu, Mehmet {\"{O}}zg{\"{u}}r and
          Brachmann, Eric and
          Schindler, Konrad and
          Brostow, Gabriel and
          Monszpart, \'{A}ron},
  booktitle={International Conference on 3D Vision ({3DV})},
  year={2021},
  organization={IEEE}
}
```

Clone the repository:

```shell
export RELPOSEGNN="${HOME}/relpose_gnn"
git clone --recurse-submodules --depth 1 https://github.com/nianticlabs/relpose-gnn.git "${RELPOSEGNN}"
cd "${RELPOSEGNN}"  # later commands use paths relative to the repository root
```

We use a Conda environment that makes it easy to install all dependencies. Our code has been tested on Ubuntu 20.04 with PyTorch 1.8.2 and CUDA 11.1.

- Install Miniconda with Python 3.8.

- Create the conda environment:

  ```shell
  conda env create -f environment-cu111.yml
  ```

- Activate and verify the environment:

  ```shell
  conda activate relpose_gnn
  python -c 'import torch; \
  print(f"torch.version: {torch.__version__}"); \
  print(f"torch.cuda.is_available(): {torch.cuda.is_available()}"); \
  import torch_scatter; \
  print(f"torch_scatter: {torch_scatter.__version__}")'
  ```

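Set the paths used throughout this README, adjusting the data locations to your machine:

```shell
export SEVENSCENES="/mnt/disks/data-7scenes/7scenes"
export DATADIR="/mnt/disks/data"
export SEVENSCENESRW="${DATADIR}/7scenes-rw"
export PYTHONPATH="${RELPOSEGNN}:${RELPOSEGNN}/python:${PYTHONPATH}"
```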
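Every later command assumes these variables, together with `RELPOSEGNN` from the clone step, are exported in the current shell. A small check, written as a hypothetical helper that is not part of the repository, can catch a forgotten `export` before a long download or training run:

```shell
# Hypothetical helper: report any of the variables used in this README that
# are not exported or are empty. Returns non-zero if anything is missing.
check_relpose_env() {
  missing=0
  for VAR in RELPOSEGNN SEVENSCENES DATADIR SEVENSCENESRW; do
    # printenv only sees exported variables, which is what child processes need.
    if [ -z "$(printenv "${VAR}")" ]; then
      echo "not set: ${VAR}" >&2
      missing=1
    fi
  done
  return "${missing}"
}
```

Usage: `check_relpose_env && echo "environment OK"`.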

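Download and extract the 7-Scenes dataset:

- Download:

  ```shell
  # Fall back to sudo if the parent directory is not writable.
  mkdir -p "${SEVENSCENES}" || (sudo mkdir -p "${SEVENSCENES}" && sudo chmod go+w -R "${SEVENSCENES}")
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    # Skip scenes whose archive is already present; download the rest in parallel.
    test -f "${SEVENSCENES}/${SCENE}.zip" || \
      wget -c "http://download.microsoft.com/download/2/8/5/28564B23-0828-408F-8631-23B1EFF1DAC8/${SCENE}.zip" -O "${SEVENSCENES}/${SCENE}.zip" &
  done
  wait  # block until all background downloads have finished
  ```

- Extract (first the scene archives, then the per-sequence archives they contain):

  ```shell
  find "${SEVENSCENES}" -maxdepth 1 -name "*.zip" | xargs -P 7 -I fileName sh -c 'unzip -o -d "$(dirname "fileName")" "fileName"'
  find "${SEVENSCENES}" -mindepth 2 -name "*.zip" | xargs -P 7 -I fileName sh -c 'unzip -o -d "$(dirname "fileName")" "fileName"'
  ```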
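After both extraction passes, each scene directory should contain its unpacked `seq-XX` folders. The helper below is hypothetical and assumes that layout (`${SEVENSCENES}/<scene>/seq-XX/`); it prints one line per scene with the number of sequence folders found, which makes a partially failed download or unzip easy to spot:

```shell
# Hypothetical helper: count the seq-* folders per 7-Scenes scene.
# ASSUMPTION: each scene unpacks into ${root}/<scene>/seq-XX/.
count_7scenes_seqs() {
  root="${1:-${SEVENSCENES}}"
  for SCENE in chess fire heads office pumpkin redkitchen stairs; do
    # Missing scene directories are reported as 0 instead of an error.
    n=$(find "${root}/${SCENE}" -mindepth 1 -maxdepth 1 -type d -name 'seq-*' 2>/dev/null | wc -l | tr -d ' ')
    echo "${SCENE} ${n}"
  done
}
```

A scene reporting `0` most likely failed to download or extract.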

For graph construction we incorporate the NetVLAD image retrieval CNN model.

It is based on this repository: https://github.com/sfu-gruvi-3dv/sanet_relocal_demo.

You'll need preprocessed .bin files (train_frames.bin, test_frames.bin) for each scene.

Coming soon...

```shell
for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
  python python/external/sanet_relocal_demo/seq_data/seven_scenes/scenes2seq.py \
    "${SEVENSCENES}/${SCENE}" \
    --dst-dir "${SEVENSCENESRW}/${SCENE}"
done
```

Before training the model, generate the train and test graphs in advance. This speeds up the data loaders and avoids running nearest-neighbor search during training.

- Download:

  - Test graphs:

    ```shell
    # Fall back to sudo if the parent directory is not writable.
    mkdir -p "${SEVENSCENESRW}" || (sudo mkdir -p "${SEVENSCENESRW}" && sudo chmod go+w -R "${SEVENSCENESRW}")
    for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
      wget -c "https://storage.googleapis.com/niantic-lon-static/research/relpose-gnn/data/${SCENE}_fc8_sp5_test.tar" \
        -O "${SEVENSCENESRW}/${SCENE}_fc8_sp5_test.tar"
    done
    ```

  - Train graphs:

    ```shell
    for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
      wget -c "https://storage.googleapis.com/niantic-lon-static/research/relpose-gnn/data/${SCENE}_fc8_sp5_train.tar" \
        -O "${SEVENSCENESRW}/${SCENE}_fc8_sp5_train.tar"
    done
    ```

- Extract (requires `pigz`):

  ```shell
  (cd "${SEVENSCENESRW}"; \
    find "${SEVENSCENESRW}" -mindepth 1 -maxdepth 1 -name "*.tar" | xargs -P 7 -I fileName sh -c 'tar -I pigz -xvf "fileName"')
  ```
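The graph archives follow a fixed naming scheme (`<scene>_fc8_sp5_<split>.tar`), so a missing or empty download can be detected before (re-)running the extraction step. A hypothetical helper, not part of the repository, that prints every expected archive that is absent or has zero size:

```shell
# Hypothetical helper: list expected graph archives that are missing or empty.
# File names mirror the wget commands above.
missing_graph_archives() {
  root="${1:-${SEVENSCENESRW}}"
  for SCENE in chess fire heads office pumpkin redkitchen stairs; do
    for SPLIT in test train; do
      f="${root}/${SCENE}_fc8_sp5_${SPLIT}.tar"
      # [ -s ] is true only for an existing file with non-zero size.
      [ -s "${f}" ] || echo "${f}"
    done
  done
}
```

Empty output means all fourteen archives are present. Note that this does not detect a download that stopped partway through with a non-zero size; re-running the `wget -c` commands resumes those.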

Alternatively, generate the graphs locally:

- For testing a model:

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python python/niantic/datasets/dataset_7Scenes_multi.py \
      "${SCENE}" \
      "test" \
      --data-path "${SEVENSCENES}" \
      --graph-data-path "${SEVENSCENESRW}" \
      --seq-len 8 \
      --sampling-period 5 \
      --gpu 0
  done
  ```

- For training a multi-scene model (Table 1 in the paper):

  ```shell
  python python/niantic/datasets/dataset_7Scenes_multi.py \
    multi \
    "train" \
    --data-path "${SEVENSCENES}" \
    --graph-data-path "${SEVENSCENESRW}" \
    --seq-len 8 \
    --sampling-period 5 \
    --gpu 0
  ```

- For training a single-scene model (Table 1 in the supplementary material):

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python python/niantic/datasets/dataset_7Scenes_multi.py \
      "${SCENE}" \
      train \
      --data-path "${SEVENSCENES}" \
      --graph-data-path "${SEVENSCENESRW}" \
      --seq-len 8 \
      --sampling-period 5 \
      --gpu 0
  done
  ```
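The figure caption mentions strided subsampling of the retrieved neighbors, and the graph files and flags above use `fc8_sp5` and `--seq-len 8 --sampling-period 5`. Reading these as "8 graph nodes, stride 5" is an assumption. The sketch below only illustrates the general idea of strided subsampling on a ranked retrieval list; it is not the repository's implementation, and the helper name is hypothetical:

```shell
# Illustration only: keep every STRIDE-th item of a ranked list, up to NODES items.
# ASSUMPTION: NODES and STRIDE stand in for --seq-len and --sampling-period.
strided_subsample() {
  nodes="$1"; stride="$2"; shift 2
  i=0; kept=0; out=""
  for id in "$@"; do
    if [ $((i % stride)) -eq 0 ] && [ "${kept}" -lt "${nodes}" ]; then
      out="${out:+${out} }${id}"   # append with a single separating space
      kept=$((kept + 1))
    fi
    i=$((i + 1))
  done
  echo "${out}"
}
```

For example, `strided_subsample 8 5 $(seq 1 40)` prints `1 6 11 16 21 26 31 36`. Taking every fifth ranked neighbor instead of the first eight spreads the graph over less redundant views, which is the diversity argument made in the caption.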

- Download the pre-trained model trained on all seven 7-Scenes training scenes (Table 1 in the paper):

  ```shell
  wget \
    -c "https://storage.googleapis.com/niantic-lon-static/research/relpose-gnn/models/relpose_gnn__multi_39.pth.tar" \
    -O "${DATADIR}/relpose_gnn__multi_39.pth.tar"
  ```

- Evaluate it on each 7-Scenes test scene:

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python -u "${RELPOSEGNN}/python/niantic/testing/test.py" \
      --dataset-dir "${SEVENSCENES}" \
      --test-data-dir "${SEVENSCENESRW}" \
      --weights "${DATADIR}/relpose_gnn__multi_39.pth.tar" \
      --save-dir "${DATADIR}" \
      --gpu 0 \
      --test-scene "${SCENE}"
  done
  ```

- Download the pre-trained models trained on six of the 7-Scenes training scenes, one model per held-out scene (Table 2 in the paper):

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    wget \
      -c "https://storage.googleapis.com/niantic-lon-static/research/relpose-gnn/models/6Scenes_${SCENE}_epoch_039.pth.tar" \
      -O "${DATADIR}/6Scenes_${SCENE}_epoch_039.pth.tar"
  done
  ```

- Evaluate each model on its held-out scene:

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python -u "${RELPOSEGNN}/python/niantic/testing/test.py" \
      --dataset-dir "${SEVENSCENES}" \
      --test-data-dir "${SEVENSCENESRW}" \
      --weights "${DATADIR}/6Scenes_${SCENE}_epoch_039.pth.tar" \
      --save-dir "${DATADIR}" \
      --gpu 0 \
      --test-scene "${SCENE}"
  done
  ```

- Download the pre-trained models trained on a single 7-Scenes training scene (Table 1 in the supplementary material):

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    wget \
      -c "https://storage.googleapis.com/niantic-lon-static/research/relpose-gnn/models/1Scenes_${SCENE}_epoch_039.pth.tar" \
      -O "${DATADIR}/1Scenes_${SCENE}_epoch_039.pth.tar"
  done
  ```

- Evaluate each model on the scene it was trained on:

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python -u "${RELPOSEGNN}/python/niantic/testing/test.py" \
      --dataset-dir "${SEVENSCENES}" \
      --test-data-dir "${SEVENSCENESRW}" \
      --weights "${DATADIR}/1Scenes_${SCENE}_epoch_039.pth.tar" \
      --save-dir "${DATADIR}" \
      --gpu 0 \
      --test-scene "${SCENE}"
  done
  ```
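The three evaluation loops differ only in the checkpoint passed to `--weights`. A hypothetical helper (not in the repository) that maps a training setting and a scene to the checkpoint names used by the downloads above:

```shell
# Hypothetical helper: print the checkpoint path for a setting and scene.
#   7 -> model trained on all 7 scenes (the scene argument is ignored)
#   6 -> model trained on the other 6 scenes, evaluated on SCENE
#   1 -> model trained on SCENE alone
relpose_weights() {
  case "$1" in
    7) echo "${DATADIR}/relpose_gnn__multi_39.pth.tar" ;;
    6) echo "${DATADIR}/6Scenes_$2_epoch_039.pth.tar" ;;
    1) echo "${DATADIR}/1Scenes_$2_epoch_039.pth.tar" ;;
    *) echo "unknown setting: $1" >&2; return 1 ;;
  esac
}
```

Inside any of the evaluation loops, `--weights "$(relpose_weights 6 "${SCENE}")"` then selects the matching checkpoint.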

- Training on all 7 scenes (Table 1 in the paper):

  ```shell
  python -u "${RELPOSEGNN}/python/niantic/training/train.py" \
    --dataset-dir "${SEVENSCENES}" \
    --train-data-dir "${SEVENSCENESRW}" \
    --test-data-dir "${SEVENSCENESRW}" \
    --save-dir "${DATADIR}" \
    --gpu 0 \
    --experiment 0 \
    --test-scene multi
  ```

- Training on 6 scenes, holding out one test scene per run (Table 2 in the paper):

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python -u "${RELPOSEGNN}/python/niantic/training/train.py" \
      --dataset-dir "${SEVENSCENES}" \
      --train-data-dir "${SEVENSCENESRW}" \
      --test-data-dir "${SEVENSCENESRW}" \
      --save-dir "${DATADIR}" \
      --gpu 0 \
      --experiment 1 \
      --test-scene "${SCENE}"
  done
  ```

- Single-scene training (Table 1 in the supplementary material):

  ```shell
  for SCENE in "chess" "fire" "heads" "office" "pumpkin" "redkitchen" "stairs"; do
    python -u "${RELPOSEGNN}/python/niantic/training/train.py" \
      --dataset-dir "${SEVENSCENES}" \
      --train-data-dir "${SEVENSCENESRW}" \
      --test-data-dir "${SEVENSCENESRW}" \
      --save-dir "${DATADIR}" \
      --gpu 0 \
      --experiment 2 \
      --train-scene "${SCENE}" \
      --test-scene "${SCENE}" \
      --max-epoch 100
  done
  ```

We would like to thank Galen Han for his extensive help with this project.

We also thank Qunjie Zhou, Luwei Yang, Dominik Winkelbauer, Torsten Sattler, and Soham Saha for their help and advice with baselines.

Copyright © Niantic, Inc. 2021. Patent Pending. All rights reserved. Please see the license file for terms.