This Capstone project is part of the Udacity Azure ML Nanodegree. In this project, I used a Loan Application Prediction dataset from Kaggle to build a loan application prediction classifier. The classification goal is to predict whether a loan application will be approved or denied, given the applicant's credit history and other socio-economic demographic data.

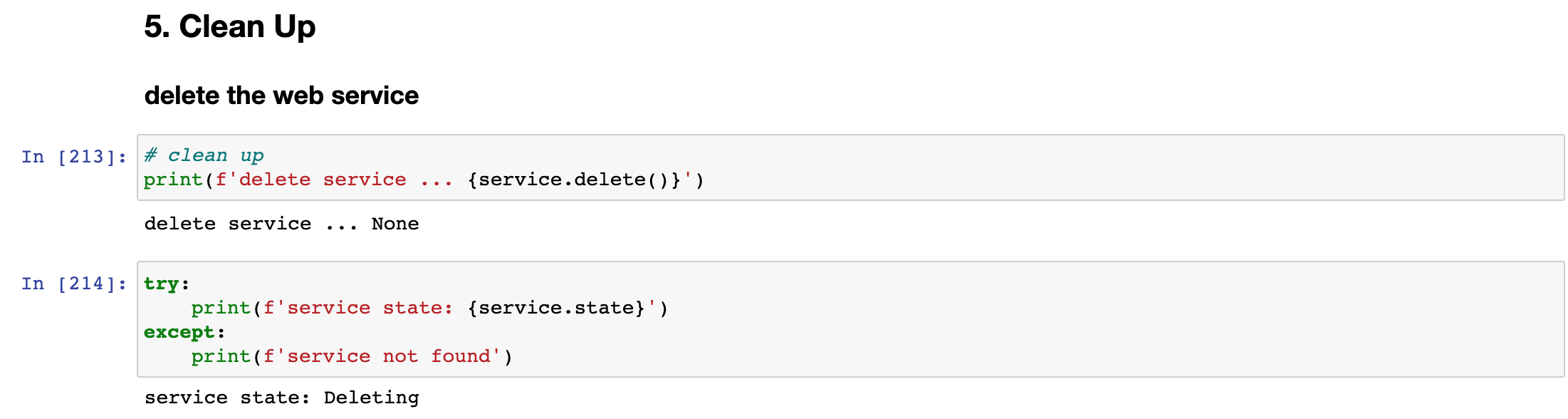

I built two models: one using AutoML and one custom model. AutoML trains and selects the best model on its own, while the custom model uses HyperDrive to tune its training hyperparameters. The better-performing model from the AutoML and HyperDrive experiment runs was selected for deployment, and scoring requests were then sent to the deployment endpoint to test the deployed model.

The diagram below provides an overview of the workflow:

To set up this project, you will need the following 5 items:

- an Azure Machine Learning workspace with the Python SDK installed
- the two project notebooks, named `automl` and `hyperparameter_tuning`
- the Python script file named `train.py`
- the conda environment YAML file `conda_env.yml`
- the scoring script `score.py`

To run the project:

- upload all 5 items to a Jupyter notebook compute instance in the AML workspace and place them in the same folder
- open the `automl` notebook and run each code cell in turn from Section 1 through 3, stopping right before Section 4 Model Deployment
- open the `hyperparameter_tuning` notebook and run each code cell in turn from Section 1 through 3, stopping right before Section 4 Model Deployment
- compare the best model accuracy in the `automl` and `hyperparameter_tuning` notebooks, then run Section 4 Model Deployment from the notebook that has the best-performing model

The external dataset is the `train_u6lujuX_CVtuZ9i.csv` file from the Kaggle Loan Prediction Problem Dataset, which I downloaded and staged in this GitHub repo.

The task is to train a loan prediction classifier using the dataset. The classification goal is to predict if a loan application will be approved or denied.

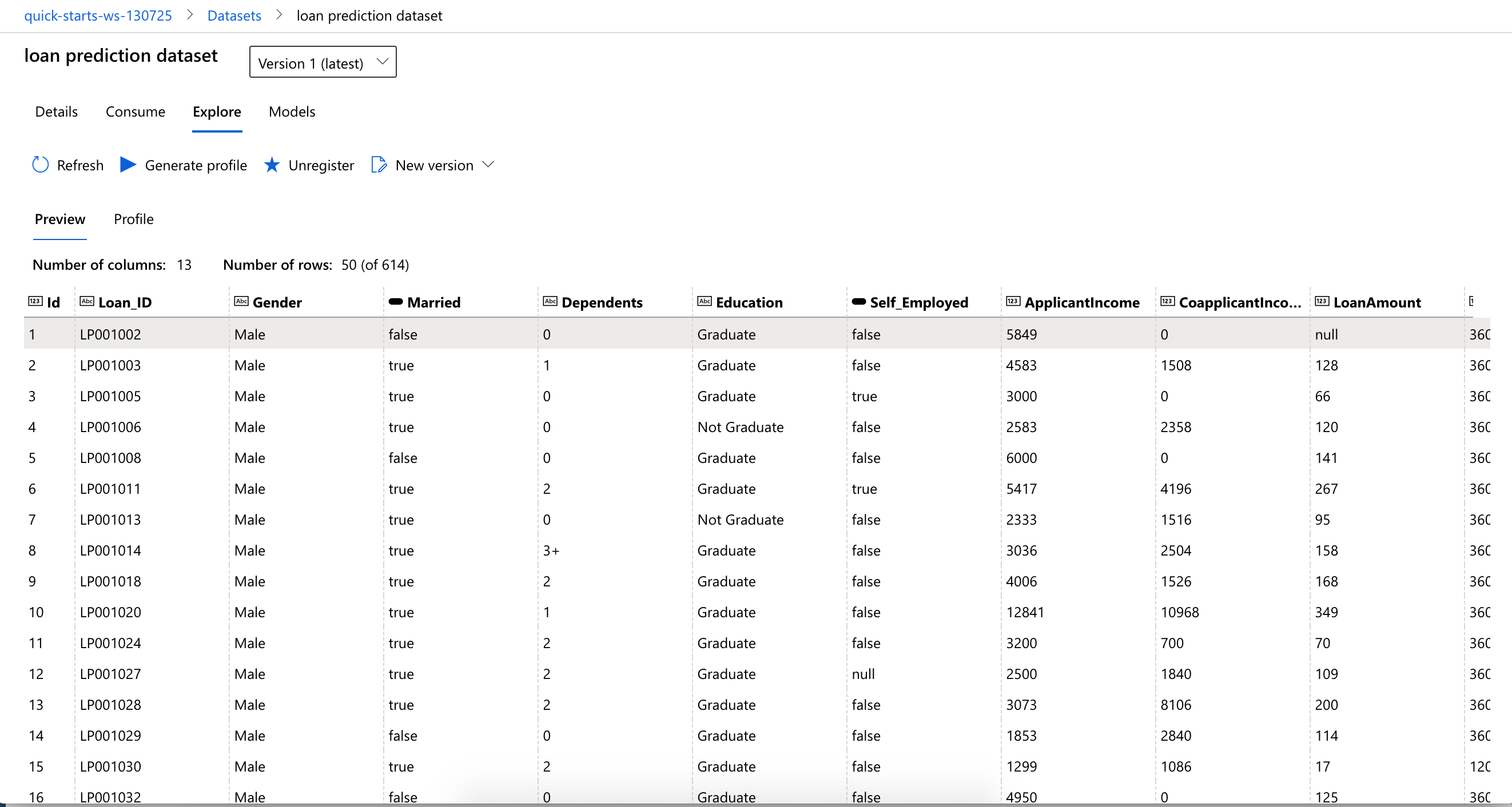

The dataset has 613 records and 13 columns. The input variables are the columns carrying the applicants' credit history and other socio-economic demographics. The output variable, the Loan Status column, indicates whether a loan application is approved or denied, i.e. True (1) or False (0).

The dataset was downloaded from this GitHub repo, where I staged it for direct download into the AML workspace using the SDK.

Once the dataset was downloaded, the SDK was again used to clean it and split it into training and validation datasets. These were kept in memory as pandas DataFrames for quick data exploration and querying. They were also registered as AML TabularDatasets in the workspace so that the AutoML experiment running on a remote compute cluster could access them.
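The clean-and-split step can be sketched with the Python standard library alone. This is a minimal sketch, not the notebook's code. The following are all assumptions for illustration: the cleaning rule (drop rows with any missing value), the `Loan_ID`/`Loan_Status` column names, the `Y`/`N` to 1/0 label mapping into a column named `y`, the 80/20 split, and the helper names `clean_rows` and `train_valid_split`.

```python
import csv
import io
import random

def clean_rows(rows):
    """Drop incomplete records, drop the ID column and encode Loan_Status as y (1/0).

    Hypothetical cleaning rules for illustration; the notebook's actual steps may differ.
    """
    cleaned = []
    for row in rows:
        # skip any record with a missing (empty) field
        if any(value is None or value.strip() == "" for value in row.values()):
            continue
        record = {k: v for k, v in row.items() if k not in ("Loan_ID", "Loan_Status")}
        record["y"] = 1 if row["Loan_Status"] == "Y" else 0  # approved -> 1, denied -> 0
        cleaned.append(record)
    return cleaned

def train_valid_split(records, valid_fraction=0.2, seed=42):
    """Shuffle a copy of the records and split them into training and validation lists."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_valid = int(len(shuffled) * valid_fraction)
    return shuffled[n_valid:], shuffled[:n_valid]

# tiny made-up sample shaped like a few columns of the Kaggle CSV
sample_csv = """Loan_ID,Credit_History,ApplicantIncome,Loan_Status
LP001,1,5849,Y
LP002,0,4583,N
LP003,,3000,Y
LP004,1,2583,Y
LP005,1,6000,Y
LP006,0,5417,N
"""
rows = list(csv.DictReader(io.StringIO(sample_csv)))
train, valid = train_valid_split(clean_rows(rows))
print(len(train), len(valid))
```

Seeding the shuffle keeps the split reproducible between notebook sessions. In the notebook, the resulting frames would then be registered as TabularDatasets.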

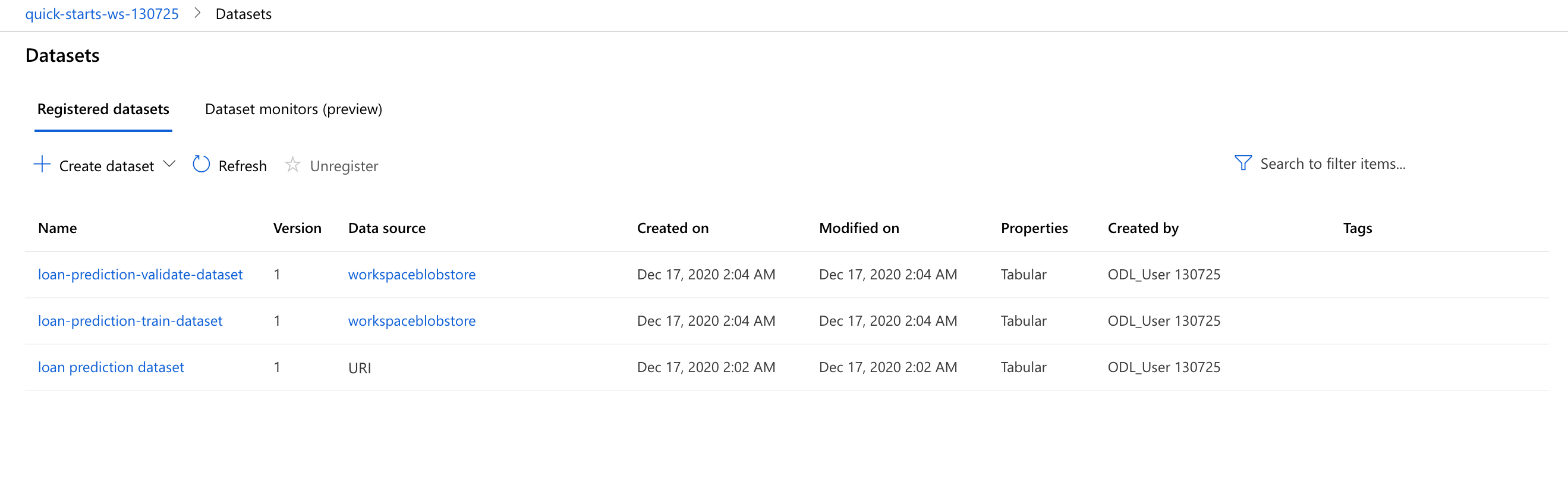

After being downloaded and registered in the workspace, the dataset looks like this:

Datasets in the workspace after the cleaning, splitting and registration steps:

The AutoML experiment run was executed with these AutoMLConfig settings:

```python
from azureml.train.automl import AutoMLConfig

# compute_cluster, train_ds and valid_ds are created earlier in the automl notebook
automl_settings = {
    "experiment_timeout_minutes": 30,
    "max_concurrent_iterations": 4,
    "primary_metric": 'accuracy',
}

automl_config = AutoMLConfig(
    task='classification',
    max_cores_per_iteration=-1,
    featurization='auto',
    iterations=30,
    enable_early_stopping=True,
    compute_target=compute_cluster,
    debug_log='automl_errors.log',
    training_data=train_ds,
    validation_data=valid_ds,
    label_column_name='y',
    **automl_settings)
```

This runs a classification task with automatic featurization, early stopping, up to 4 concurrent iterations, a 30-minute experiment timeout and accuracy as the primary metric. It runs for up to 30 iterations, using a training and a validation TabularDataset, with label_column_name set to y (i.e. Loan Status).

The settings and experiment configuration were chosen with the following considerations in mind:

- classification is the task best suited to the dataset, since the label is binary (approved or denied)
- accuracy as the primary metric, for an apples-to-apples comparison with the best model trained by HyperDrive
- early stopping, to end the experiment when the score stops improving and so improve computational efficiency
- 30 iterations (the total number of different algorithm and parameter combinations to test during an automated ML experiment), so that the experiment can fit within the chosen 30-minute experiment timeout
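The reasoning in the last bullet can be checked with simple arithmetic. This is a back-of-the-envelope sketch that assumes iterations run in equal-length waves of `max_concurrent_iterations` with no scheduling overhead, which real AutoML runs do not strictly follow. `per_iteration_budget` is a hypothetical helper.

```python
import math

def per_iteration_budget(timeout_minutes, iterations, max_concurrent):
    """Return (number of waves, minutes available per wave).

    Assumes iterations are scheduled in full waves of `max_concurrent`
    and that every iteration in a wave takes the same time.
    """
    waves = math.ceil(iterations / max_concurrent)
    return waves, timeout_minutes / waves

waves, minutes_per_wave = per_iteration_budget(30, 30, 4)
print(waves, minutes_per_wave)  # 8 3.75
```

Under these assumptions, each wave of 4 iterations has to finish in under 4 minutes for all 30 iterations to complete before the timeout.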

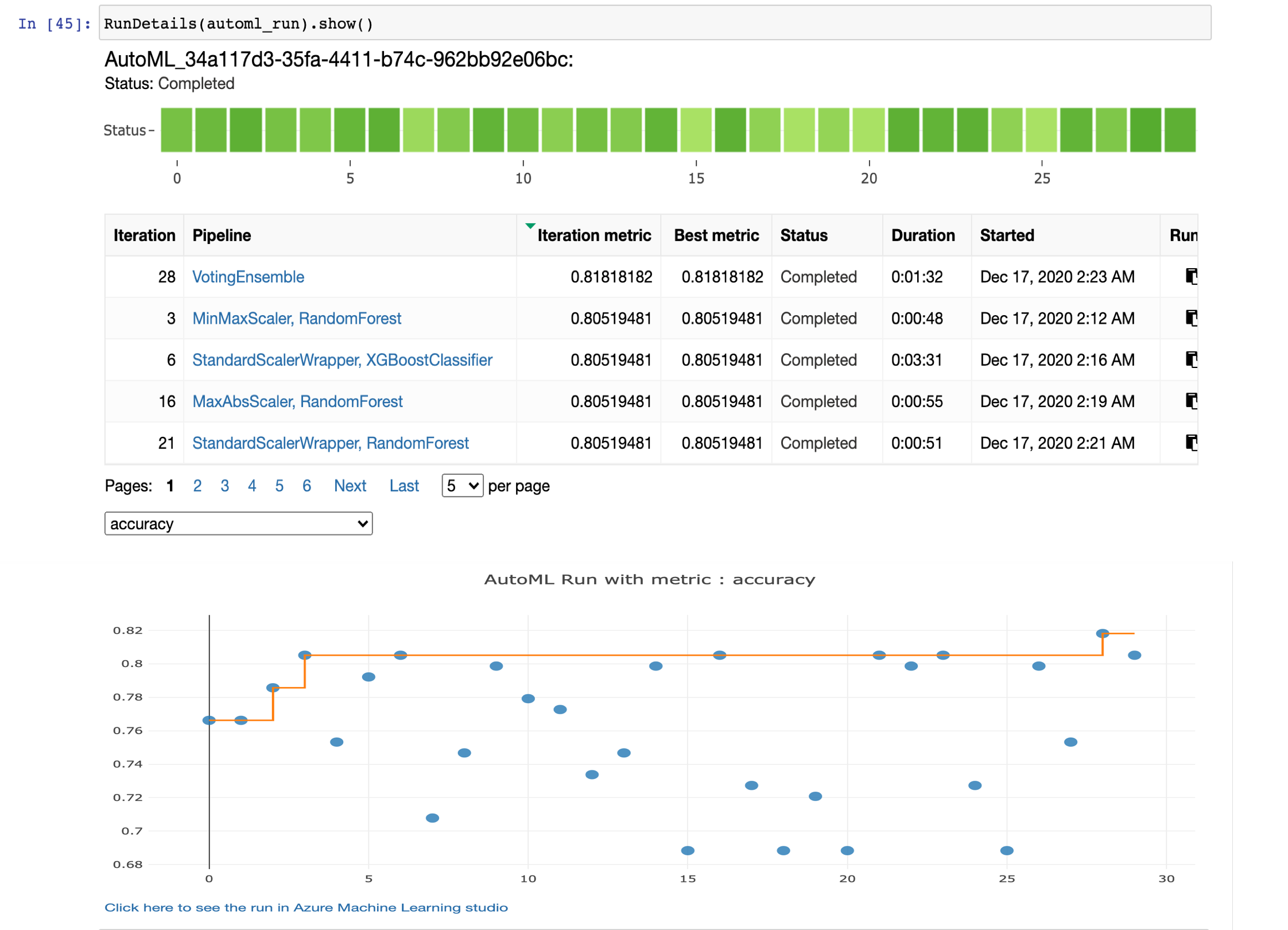

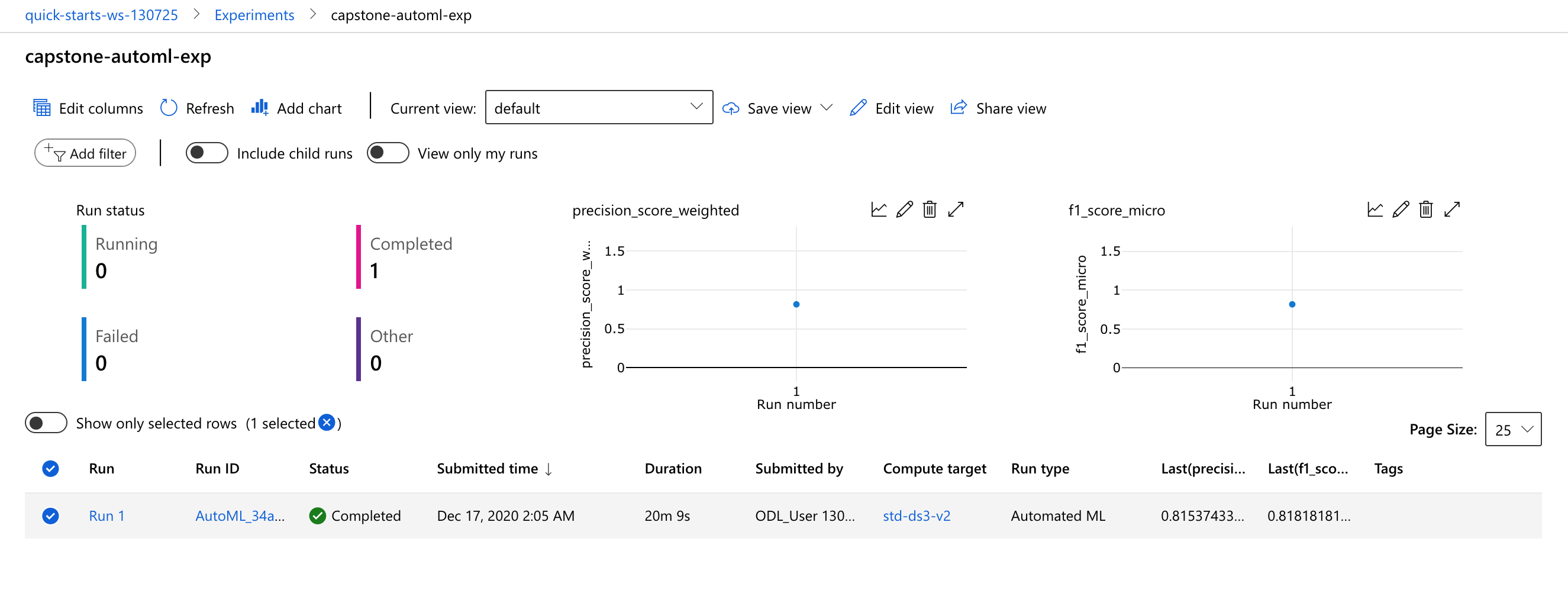

The experiment ran on a remote compute cluster, with its progress tracked in real time using the RunDetails widget as shown below:

The experiment run took slightly over 21 minutes to finish:

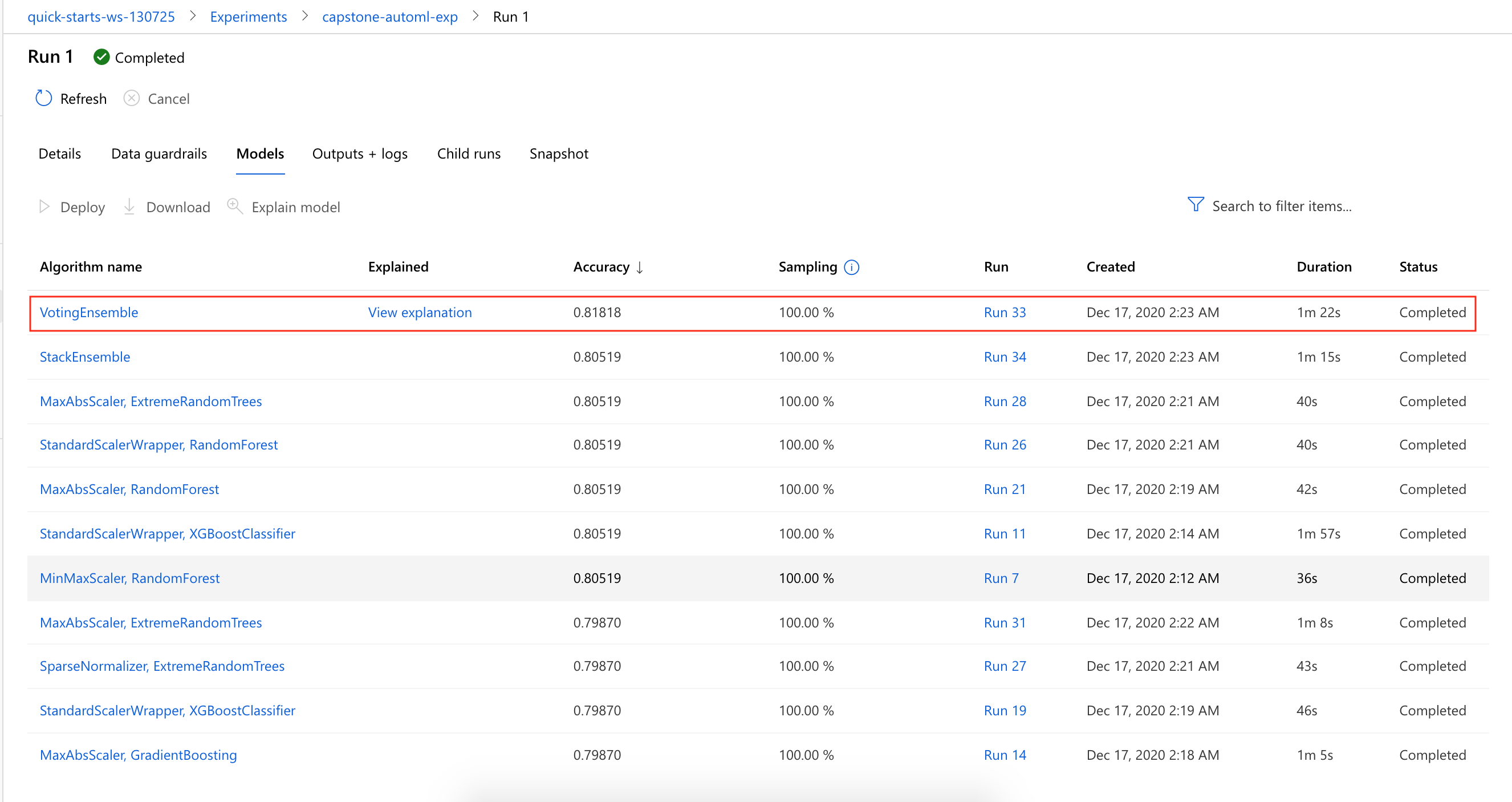

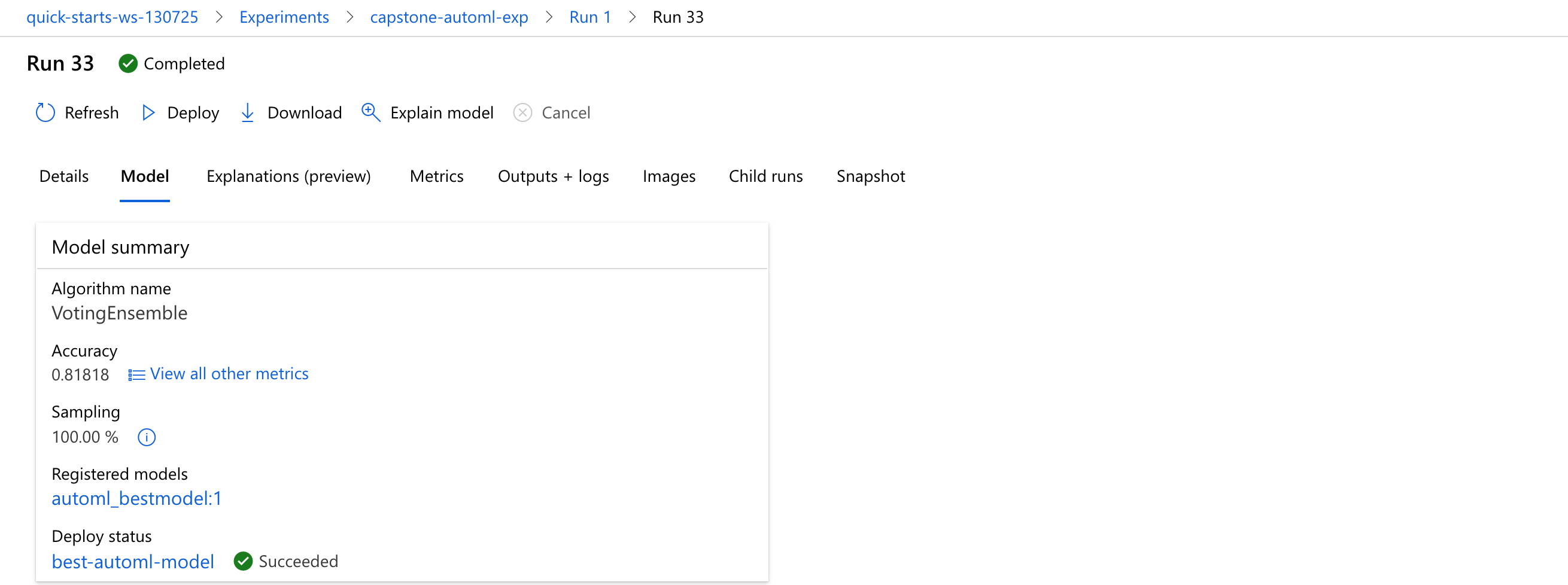

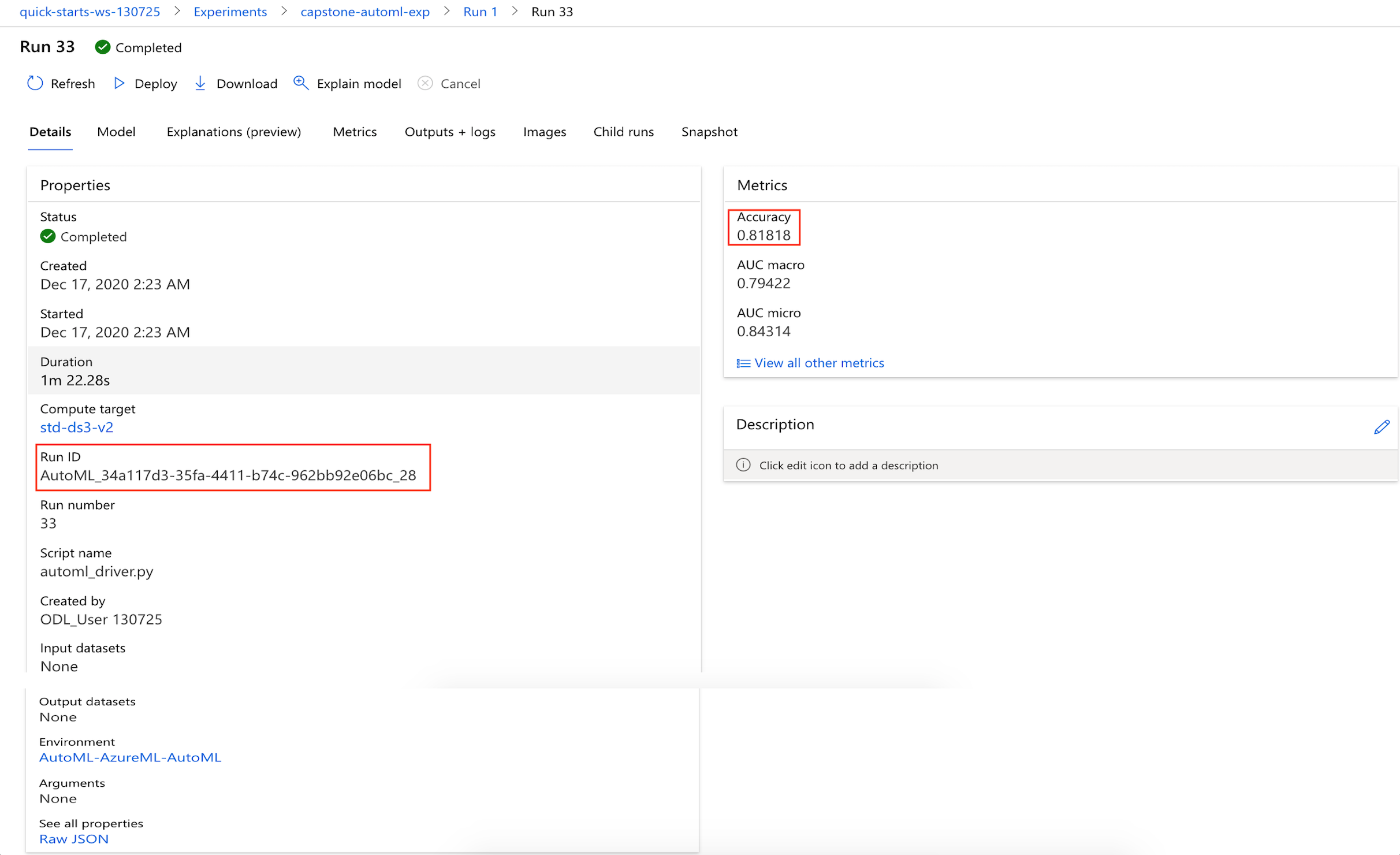

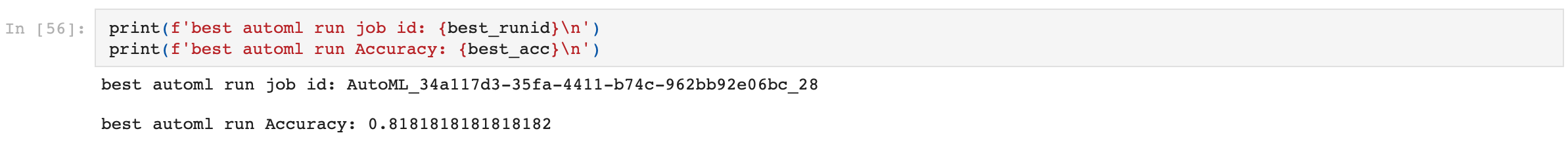

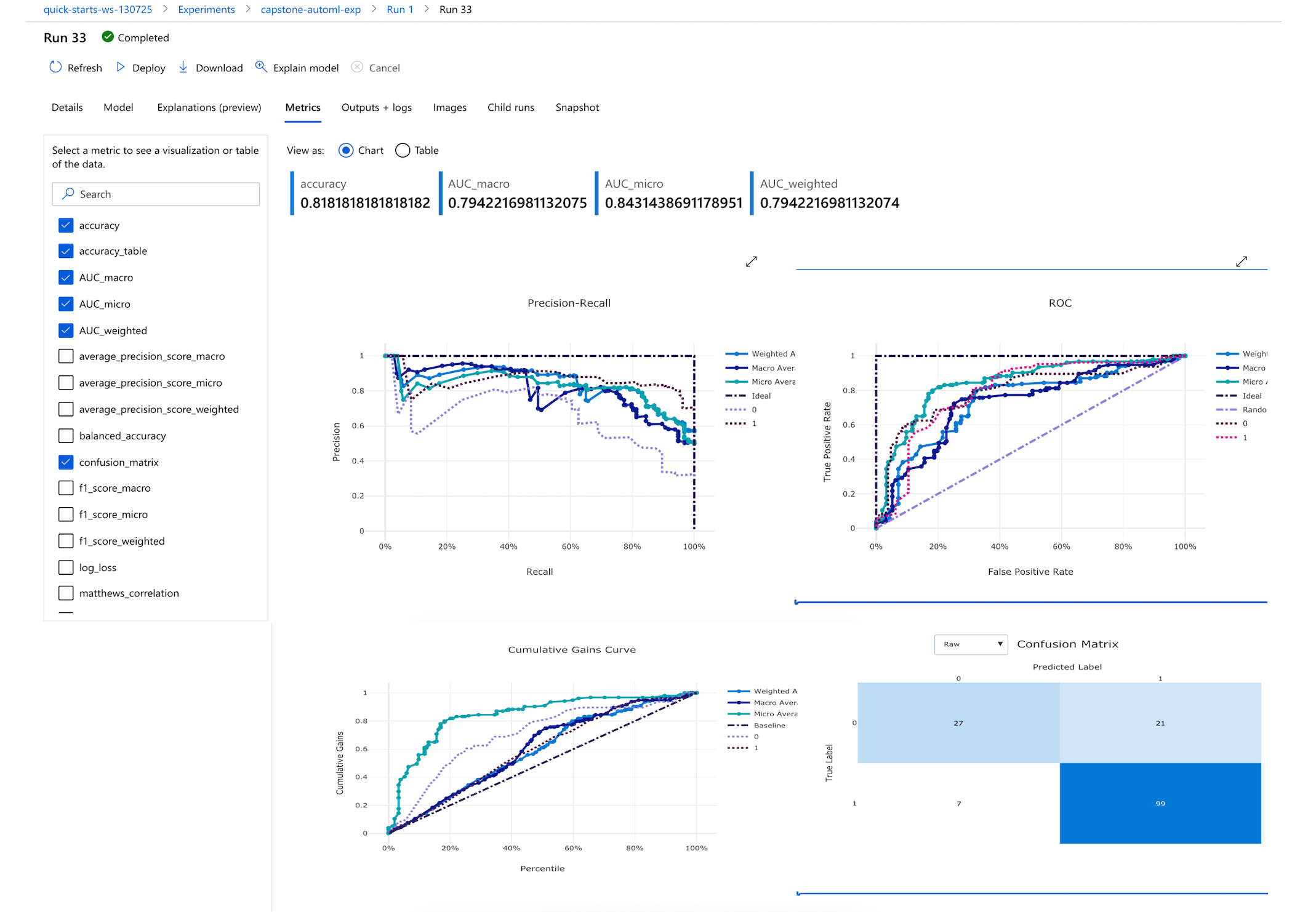

The best model generated by the AutoML experiment run was the VotingEnsemble model:

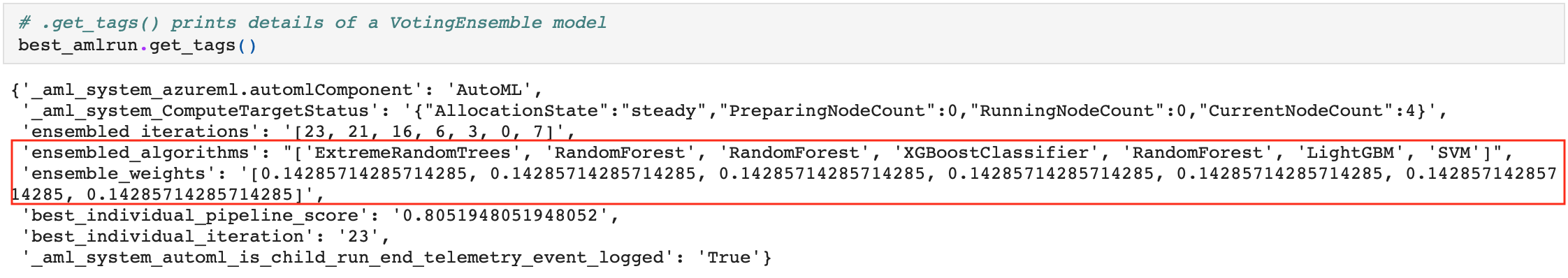

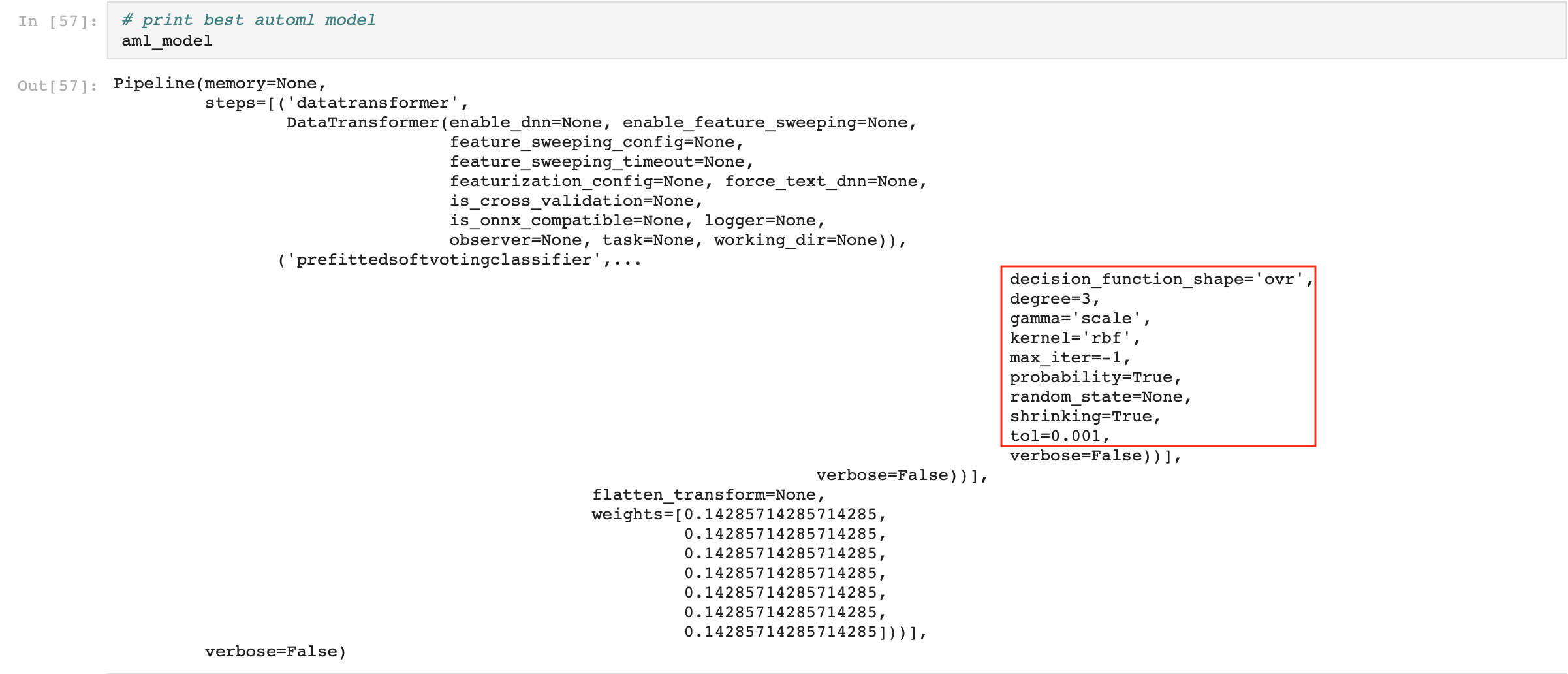

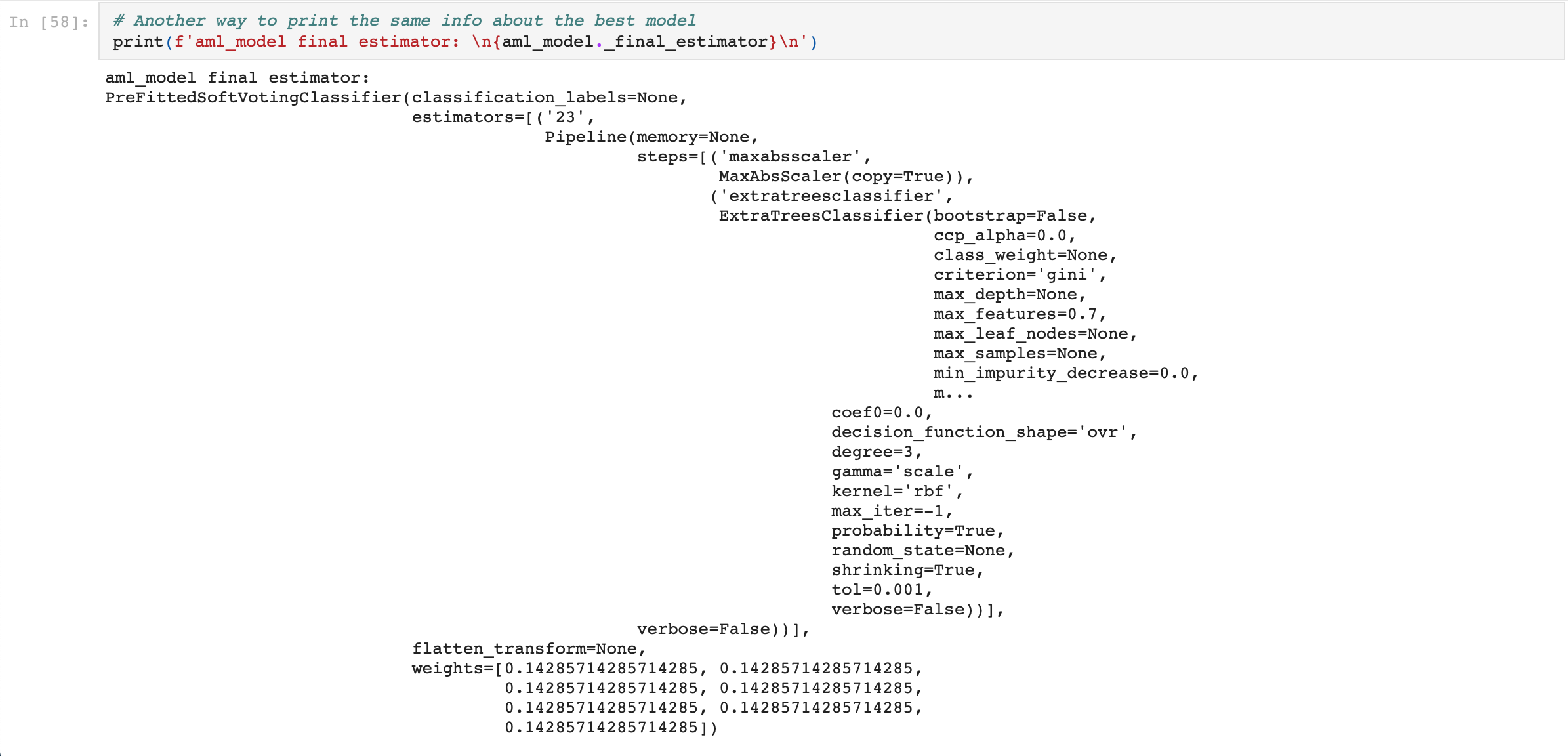

The VotingEnsemble model, with an accuracy of 81.82%, combined the weighted predictions of 7 classifiers, as shown here:

The key parameters of the VotingEnsemble model:

Details and metrics of the VotingEnsemble model:

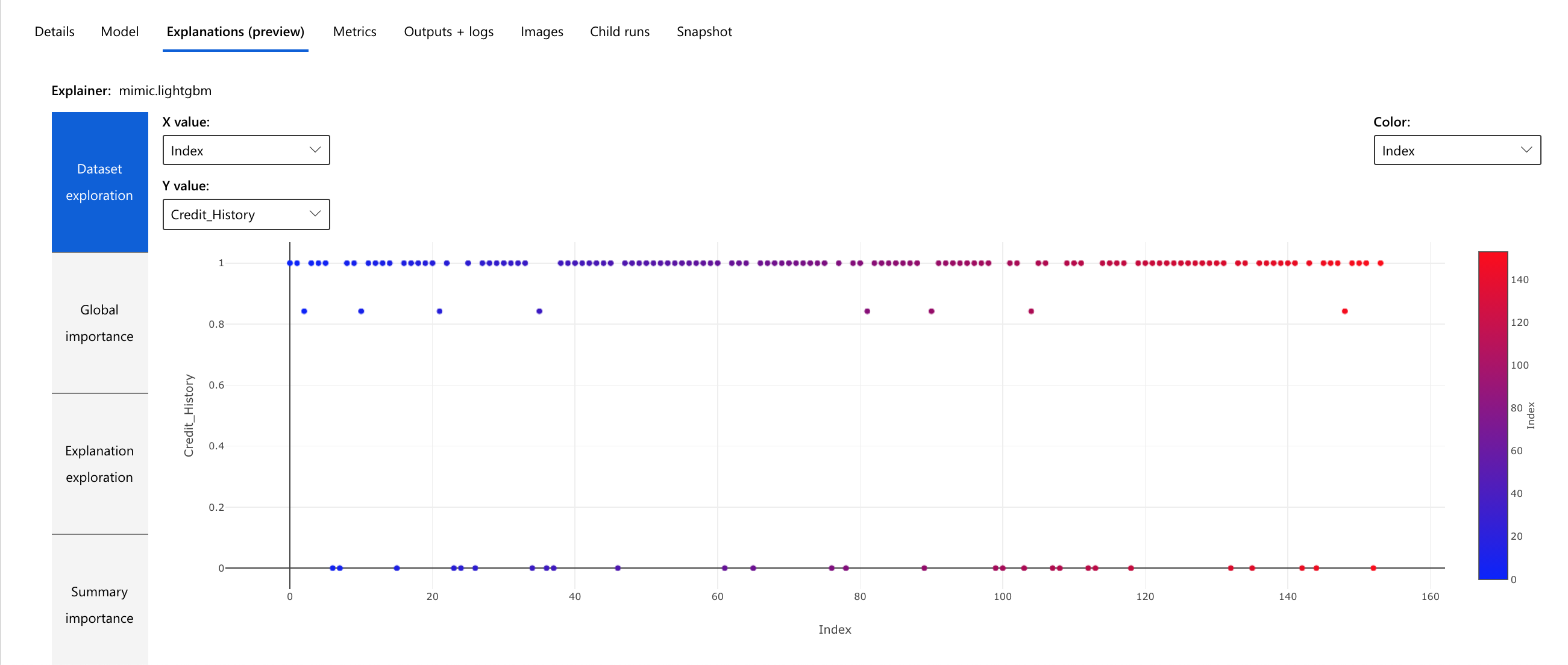

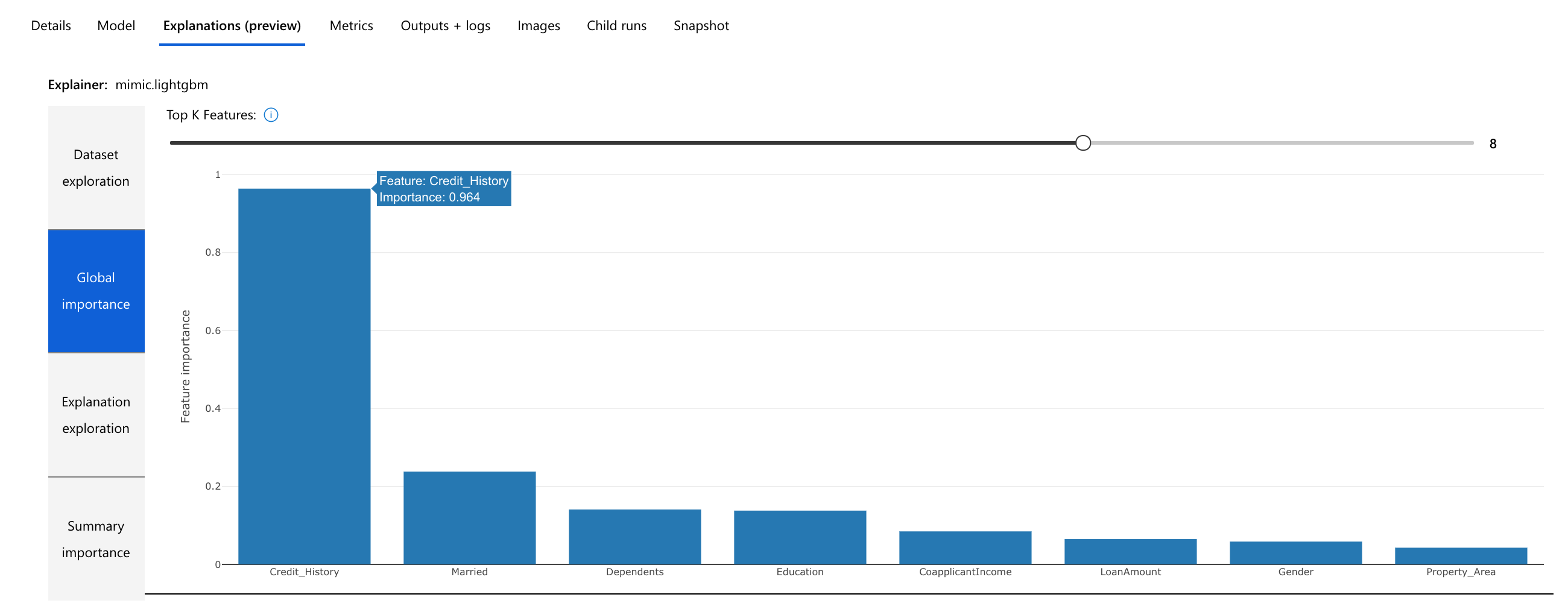

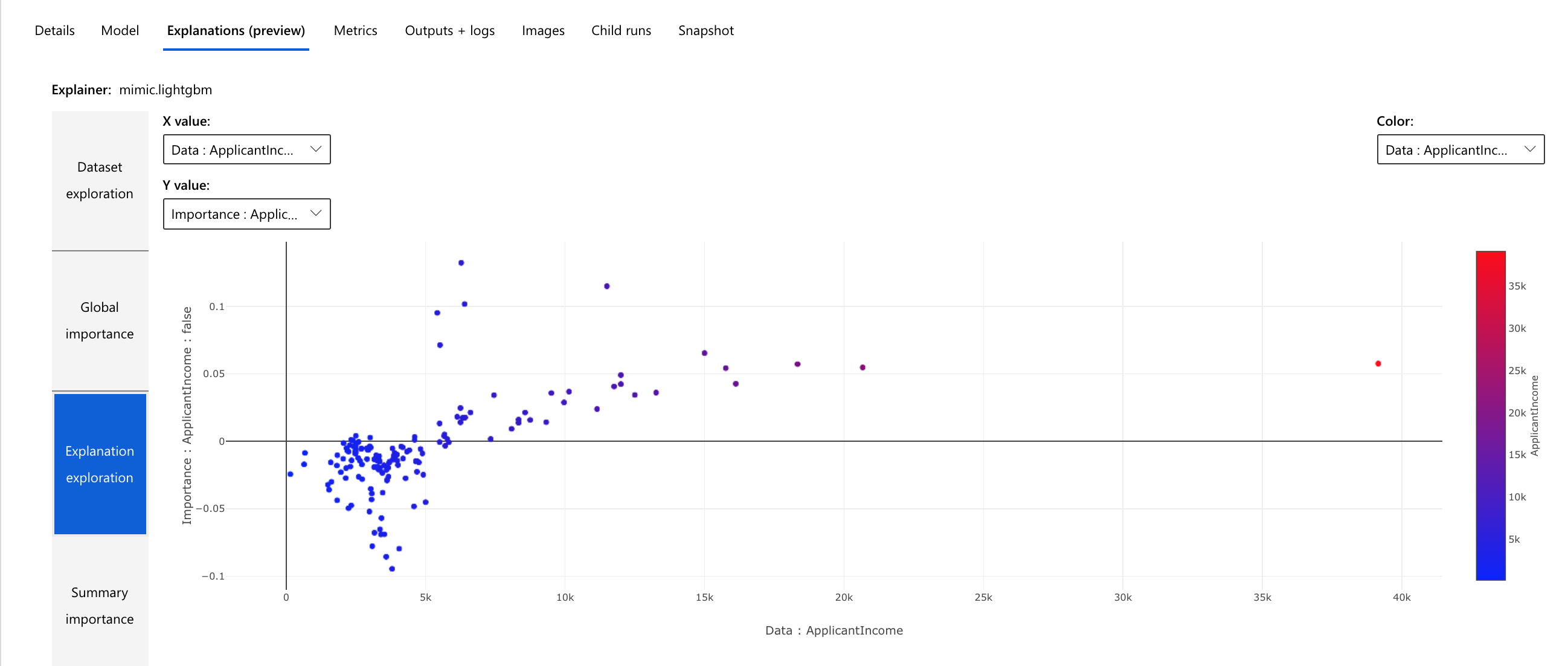

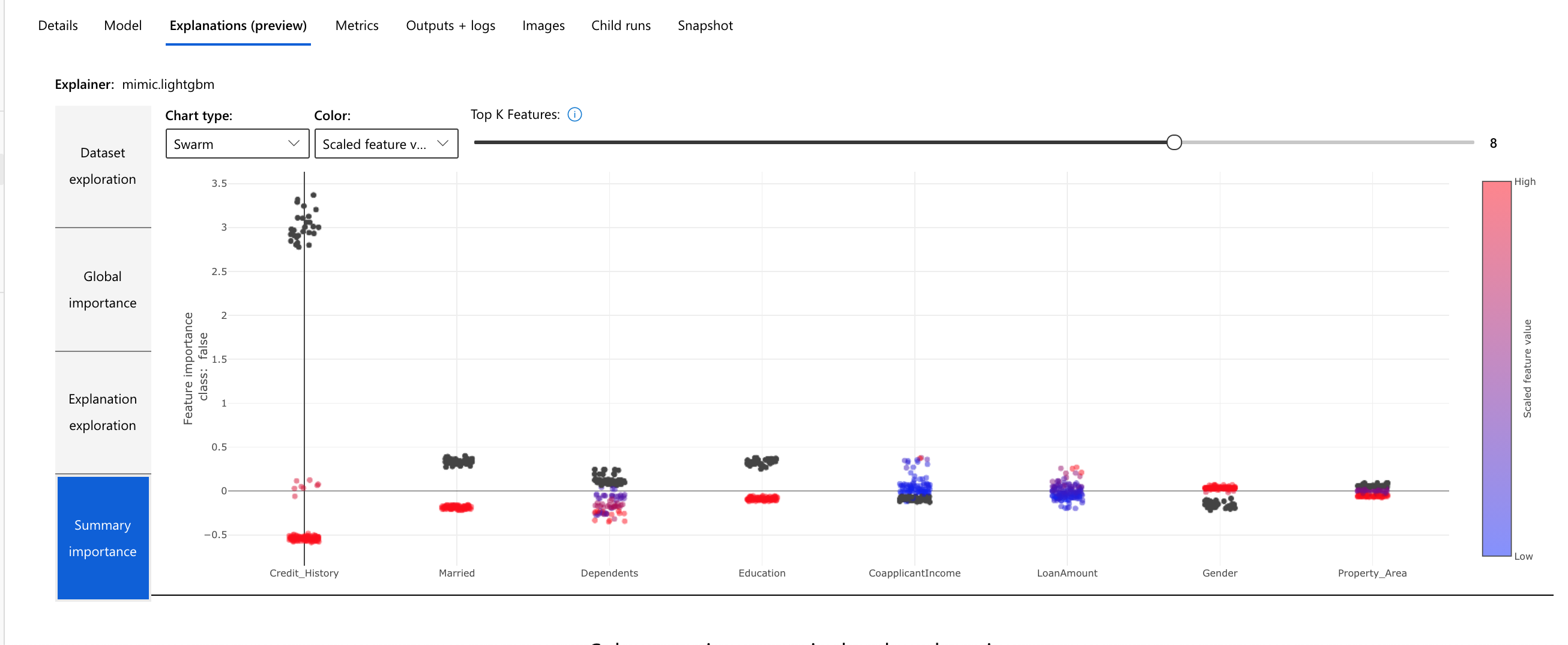
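The weighted voting in the VotingEnsemble described above can be illustrated with a soft-voting sketch. Each member's predicted class probabilities are averaged using the ensemble weights, and the class with the highest averaged probability wins. This illustrates the general technique, not AutoML's implementation. The probabilities and weights are made-up numbers, and `soft_vote` is a hypothetical helper.

```python
def soft_vote(member_probas, weights):
    """Combine per-model class probabilities with a weighted average (soft voting).

    member_probas: one list of class probabilities per model, e.g. [P(denied), P(approved)].
    weights: one non-negative weight per model.
    Returns (averaged probabilities, index of the winning class).
    """
    total = sum(weights)
    n_classes = len(member_probas[0])
    averaged = [
        sum(w * probas[c] for probas, w in zip(member_probas, weights)) / total
        for c in range(n_classes)
    ]
    return averaged, max(range(n_classes), key=lambda c: averaged[c])

# three hypothetical members: two lean "approved", one leans "denied"
probas = [[0.30, 0.70], [0.40, 0.60], [0.80, 0.20]]
weights = [0.5, 0.3, 0.2]
avg, winner = soft_vote(probas, weights)
print([round(p, 2) for p in avg], winner)  # [0.43, 0.57] 1
```

Shifting most of the weight onto the third member would flip the decision to "denied". This is why the learned ensemble weights matter as much as the member models themselves.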

The AutoML experiment also generated a visual model interpretation. This is useful for understanding why a model made a certain prediction and for gauging the importance of individual features.

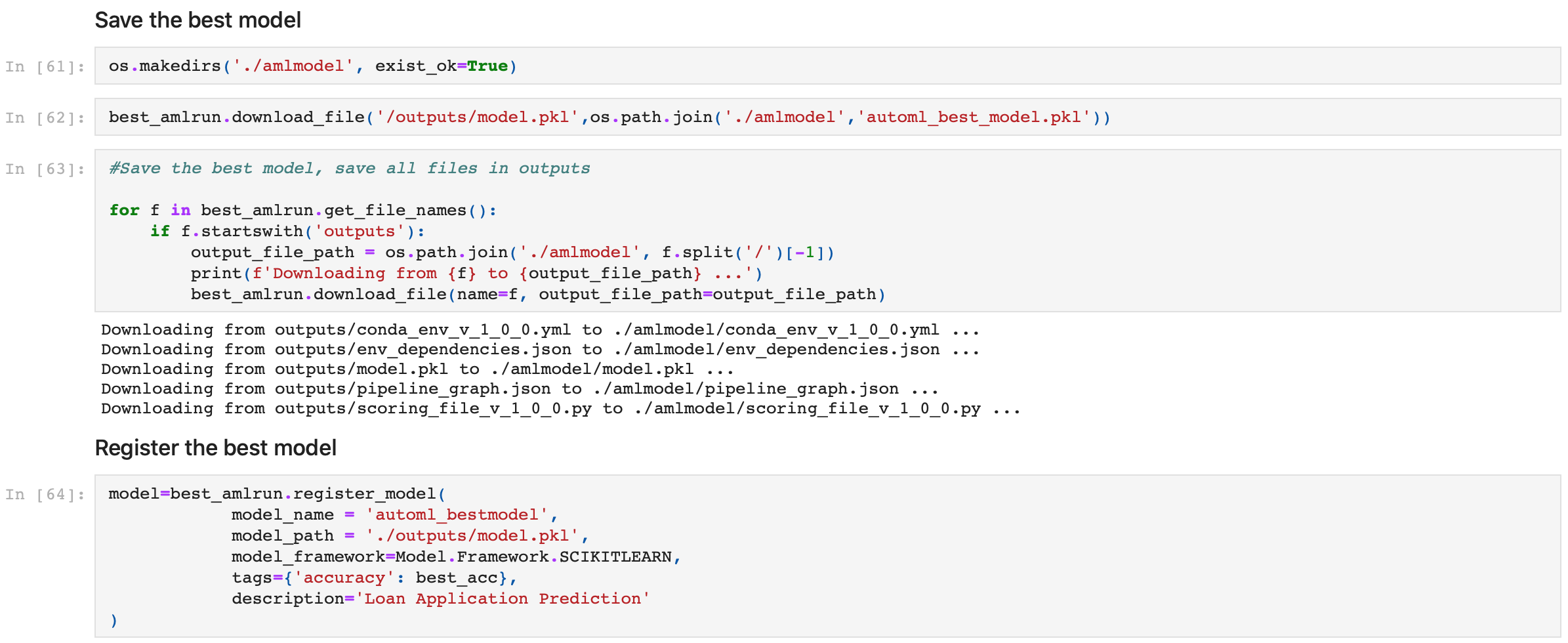

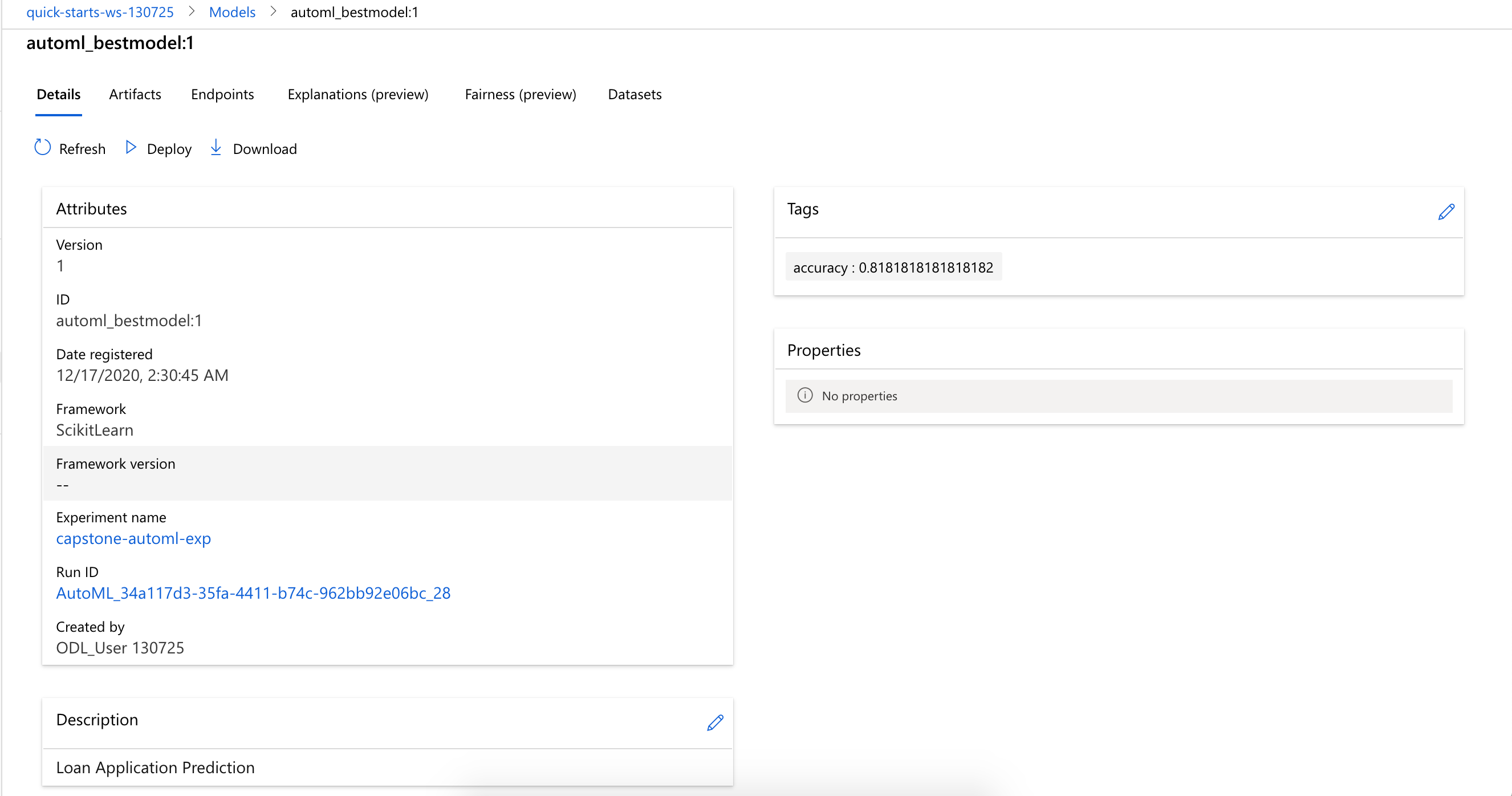

Naturally, the VotingEnsemble model was saved and registered as the best model from the AutoML experiment run:

The HyperDrive experiment run was configured with the following settings:

- define a conda environment YAML file, written to conda_env.yml with the %%writefile conda_env.yml cell magic

```yaml
dependencies:
- python=3.6.2
- pip:
  - azureml-train-automl-runtime==1.18.0
  - inference-schema
  - azureml-interpret==1.18.0
  - azureml-defaults==1.18.0
- numpy>=1.16.0,<1.19.0
- pandas==0.25.1
- scikit-learn==0.22.1
- py-xgboost<=0.90
- fbprophet==0.5
- holidays==0.9.11
- psutil>=5.2.2,<6.0.0
channels:
- anaconda
- conda-forge
```

- create the sklearn environment, parameter sampler, early termination policy, run config and HyperDrive config

```python
from azureml.core import Environment, ScriptRunConfig
from azureml.train.hyperdrive import (BanditPolicy, HyperDriveConfig, PrimaryMetricGoal,
                                      RandomParameterSampling, choice, uniform)

# create a sklearn AML environment from the conda file above
sklearn_env = Environment.from_conda_specification(name='sklearn_env', file_path='./conda_env.yml')

# specify a parameter sampler
ps = RandomParameterSampling({'--C': uniform(0.1, 1.0), '--max_iter': choice(50, 100, 200)})

# specify an early termination policy
policy = BanditPolicy(evaluation_interval=2, slack_factor=0.1)

# specify the compute cluster, max runs and number of concurrent runs
# (ws and cluster_name are defined earlier in the notebook)
cluster = ws.compute_targets[cluster_name]  # cluster_name = 'hd-ds3-v2'
max_run = 30
max_thread = 4

# create a ScriptRunConfig for use with train.py
src = ScriptRunConfig(source_directory='.', compute_target=cluster, script='train.py',
                      arguments=['--C', 1.0, '--max_iter', 100], environment=sklearn_env)

# create a HyperDrive config
hyperdrive_config = HyperDriveConfig(hyperparameter_sampling=ps,
                                     primary_metric_name='Accuracy',
                                     primary_metric_goal=PrimaryMetricGoal.MAXIMIZE,
                                     max_total_runs=max_run,
                                     max_concurrent_runs=max_thread,
                                     policy=policy,
                                     run_config=src)
```

The Python training script train.py was executed during the experiment run. It downloaded the dataset from this GitHub repo and split it into train and test sets. It accepted two input parameters, C and max_iter (the inverse of regularization strength and the maximum number of iterations, respectively), for use with scikit-learn's LogisticRegression. These were the two hyperparameters tuned by HyperDrive during the experiment run.
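The command-line interface that HyperDrive drives in `train.py` can be sketched with `argparse`. This is a hedged sketch of the argument parsing only, and the defaults mirror the `arguments` passed in the `ScriptRunConfig` above. Two parts are left out because they depend on the Azure ML run context: fitting scikit-learn's `LogisticRegression(C=..., max_iter=...)` and logging the `Accuracy` metric that HyperDrive maximizes. `parse_args` is a hypothetical helper name.

```python
import argparse

def parse_args(argv=None):
    """Parse the two hyperparameters that HyperDrive passes to train.py."""
    parser = argparse.ArgumentParser(description="Train a LogisticRegression loan classifier")
    # in scikit-learn, C is the INVERSE of regularization strength: smaller C = stronger regularization
    parser.add_argument("--C", type=float, default=1.0,
                        help="Inverse of regularization strength")
    parser.add_argument("--max_iter", type=int, default=100,
                        help="Maximum number of solver iterations")
    return parser.parse_args(argv)

# HyperDrive supplies sampled values as script arguments, e.g. --C 0.42 --max_iter 200
args = parse_args(["--C", "0.42", "--max_iter", "200"])
print(args.C, args.max_iter)
```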

max_concurrent_runs was set to 4 and max_total_runs was set to 30. This keeps the experiment close to the 30-minute time limit chosen for the AutoML run; no explicit timeout is set in the HyperDriveConfig above.

The random parameter sampler for HyperDrive supports both discrete and continuous hyperparameters, as well as early termination of low-performance runs. It is simple to use and avoids the bias of hand-picked values. It also often finds good configurations in fewer runs than an exhaustive grid search.
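To make the sampler concrete, the sketch below draws configurations from the same search space with Python's `random` module: `C` uniformly from [0.1, 1.0] and `max_iter` from {50, 100, 200}. It only imitates what `RandomParameterSampling` does conceptually. `sample_config` is a hypothetical helper, and the seed is arbitrary.

```python
import random

def sample_config(rng):
    """Draw one hyperparameter configuration from the search space used above."""
    return {
        "--C": rng.uniform(0.1, 1.0),               # continuous, like uniform(0.1, 1.0)
        "--max_iter": rng.choice([50, 100, 200]),   # discrete, like choice(50, 100, 200)
    }

rng = random.Random(0)
configs = [sample_config(rng) for _ in range(30)]  # one per run, max_total_runs = 30
for cfg in configs[:3]:
    print(cfg)
```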

The early termination policy BanditPolicy for HyperDrive automatically terminates poorly performing runs and improves computational efficiency. It is based on slack factor/slack amount and evaluation interval and cancels runs where the primary metric is not within the specified slack factor/slack amount compared to the best performing run.
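The slack-factor rule can be written down directly. For a metric that is maximized, Azure ML's documentation describes a run as cancelled when its metric falls below `best / (1 + slack_factor)` at an evaluation interval. With `evaluation_interval=2`, the check is applied every second metric report. The sketch below applies that rule with this project's `slack_factor=0.1`. The metric values are made up, and `bandit_should_cancel` is a hypothetical helper, not the SDK's code.

```python
def bandit_should_cancel(run_metric, best_metric, slack_factor):
    """Return True if a maximized metric falls outside the slack allowed by BanditPolicy.

    With slack factor s, the cutoff is best_metric / (1 + s).
    """
    cutoff = best_metric / (1 + slack_factor)
    return run_metric < cutoff

best_so_far = 0.80            # best accuracy reported so far (made-up value)
cutoff = best_so_far / 1.1    # about 0.727 with slack_factor=0.1
print(round(cutoff, 3))
print(bandit_should_cancel(0.70, best_so_far, 0.1))  # True: below the cutoff
print(bandit_should_cancel(0.75, best_so_far, 0.1))  # False: within the slack
```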

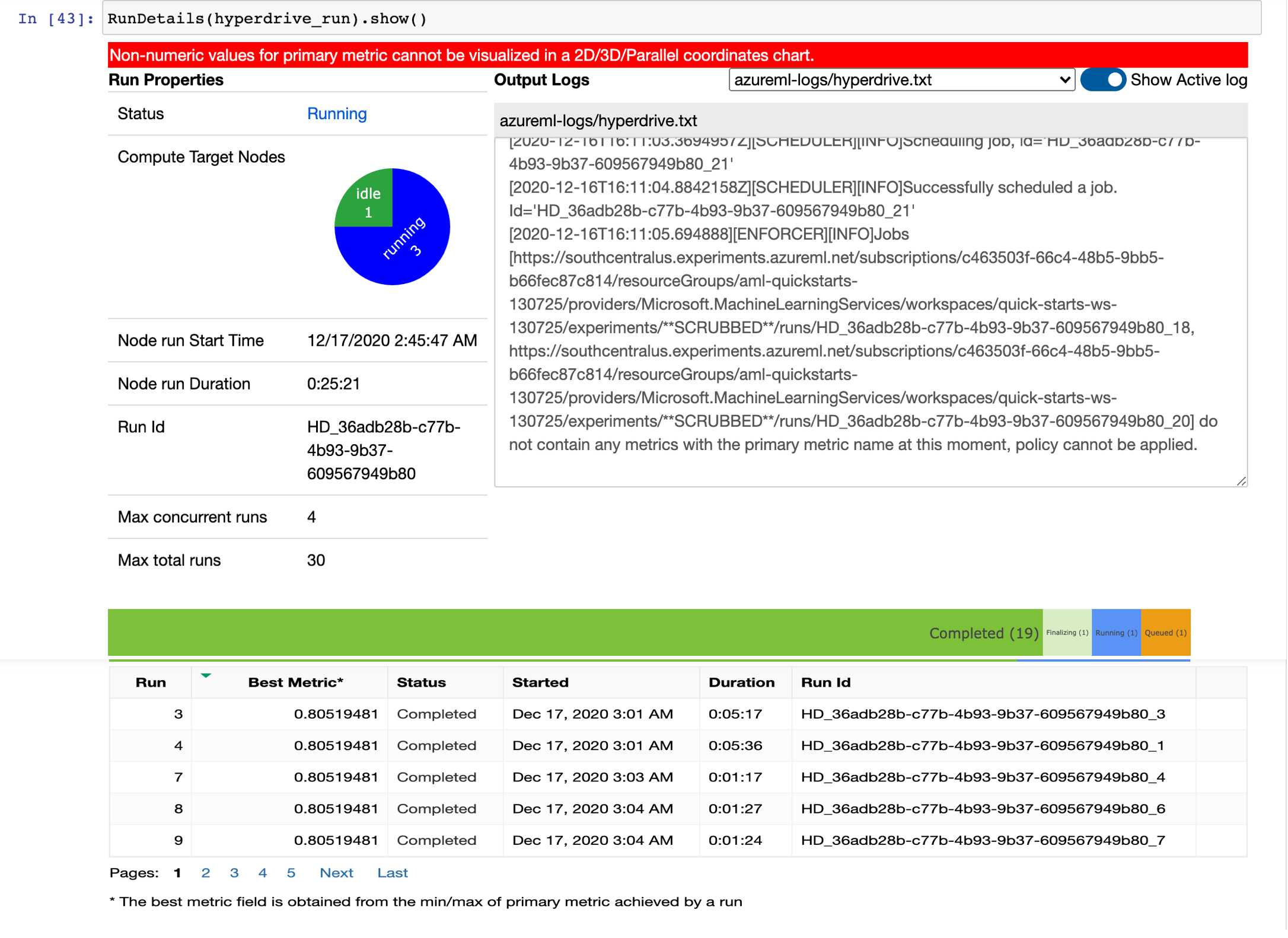

The experiment ran on a remote compute cluster, with its progress tracked in real time using the RunDetails widget as shown below:

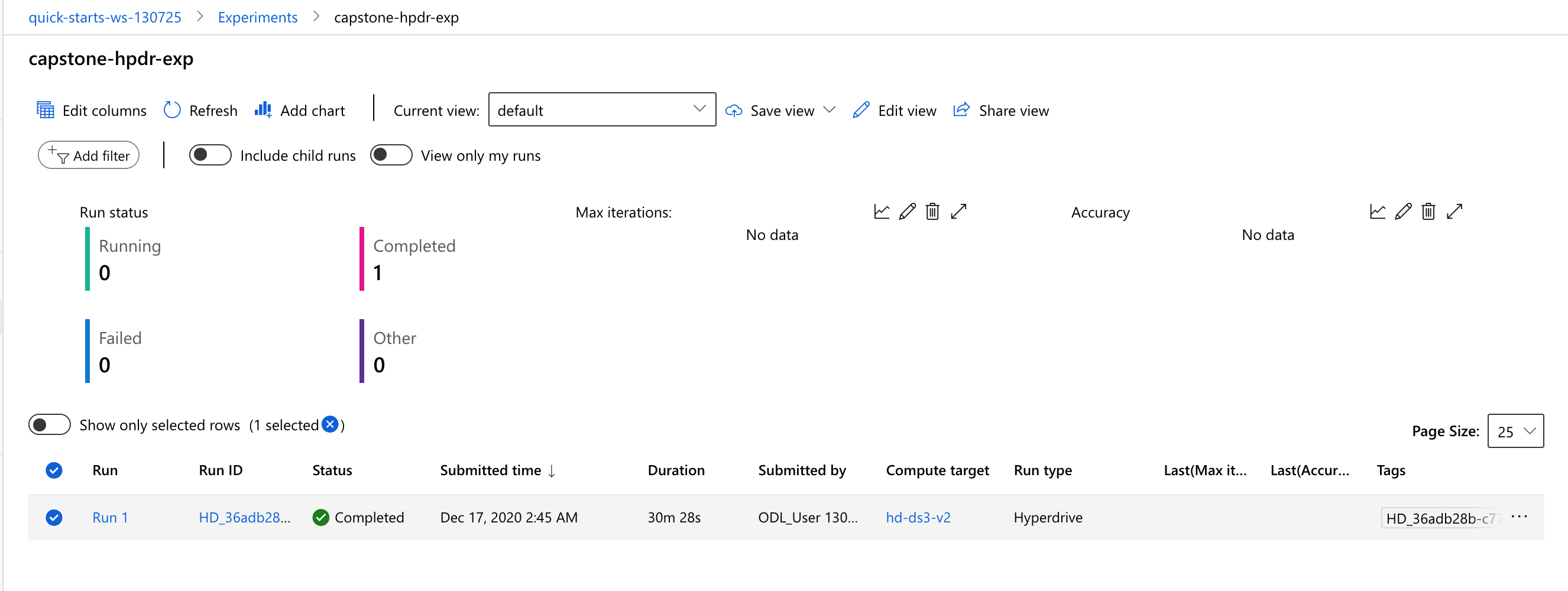

The experiment run took nearly 31 minutes to finish. This is noticeably slower than the AutoML experiment run, which used the same 30 iterations and 4 concurrent runs on the same compute cluster type:

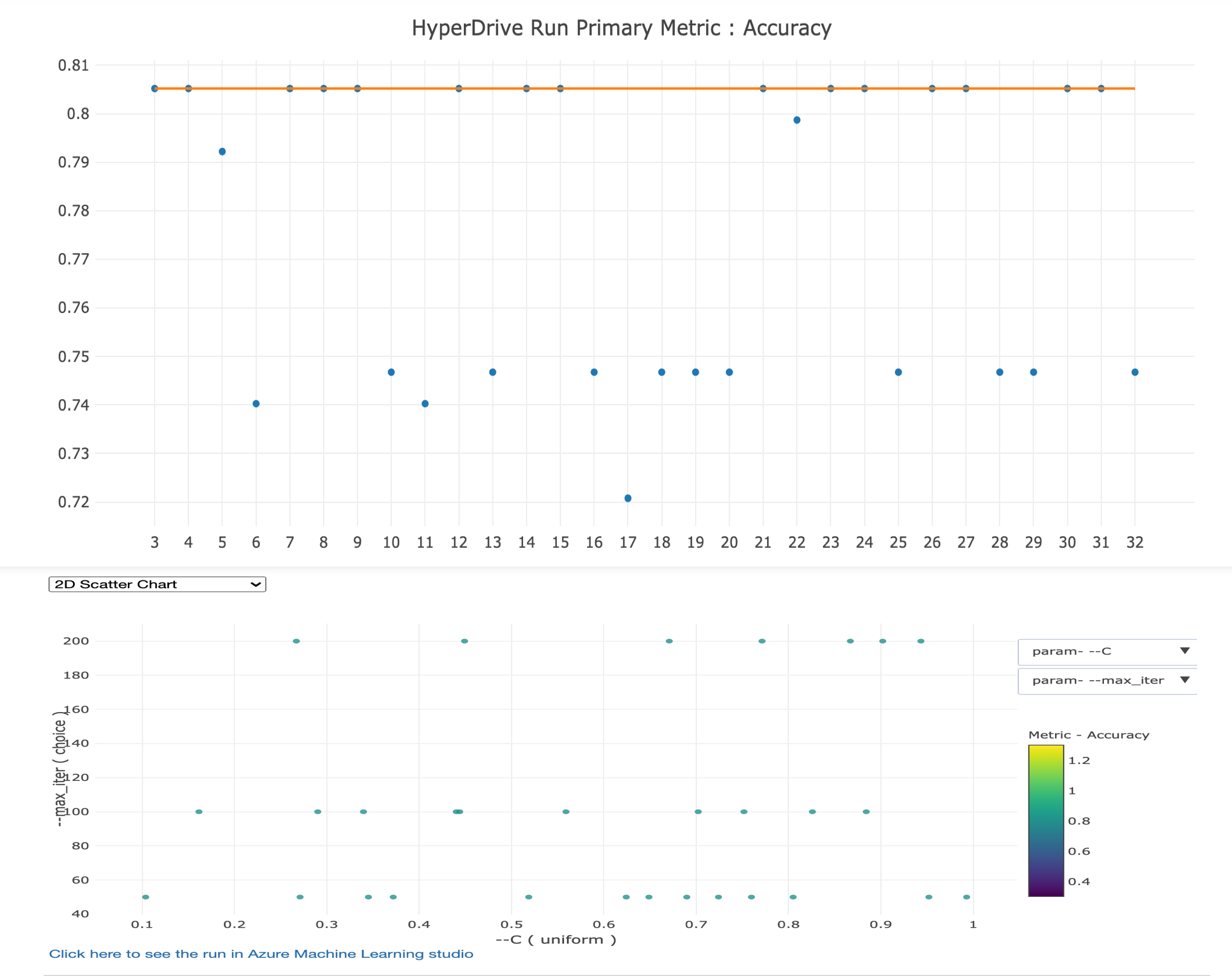

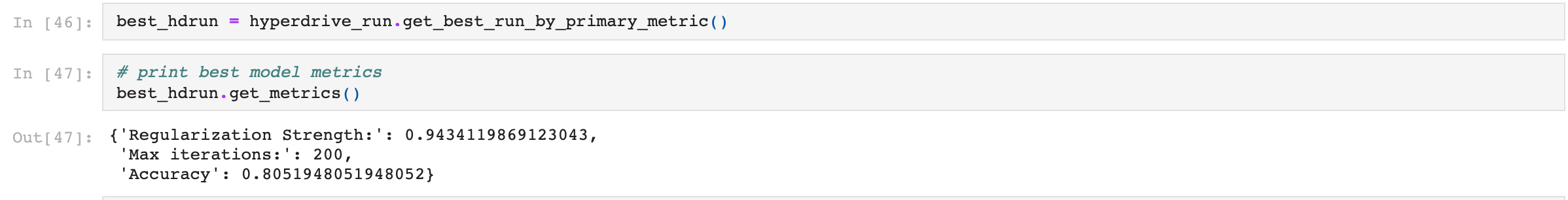

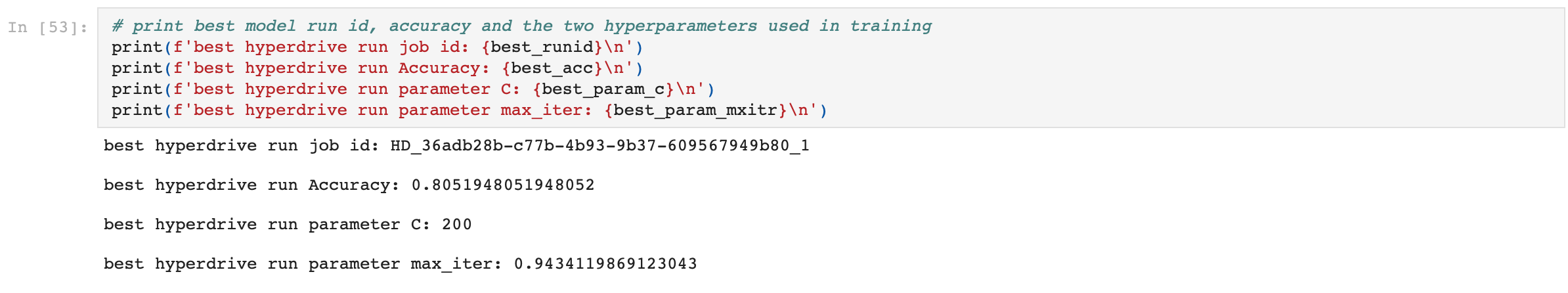

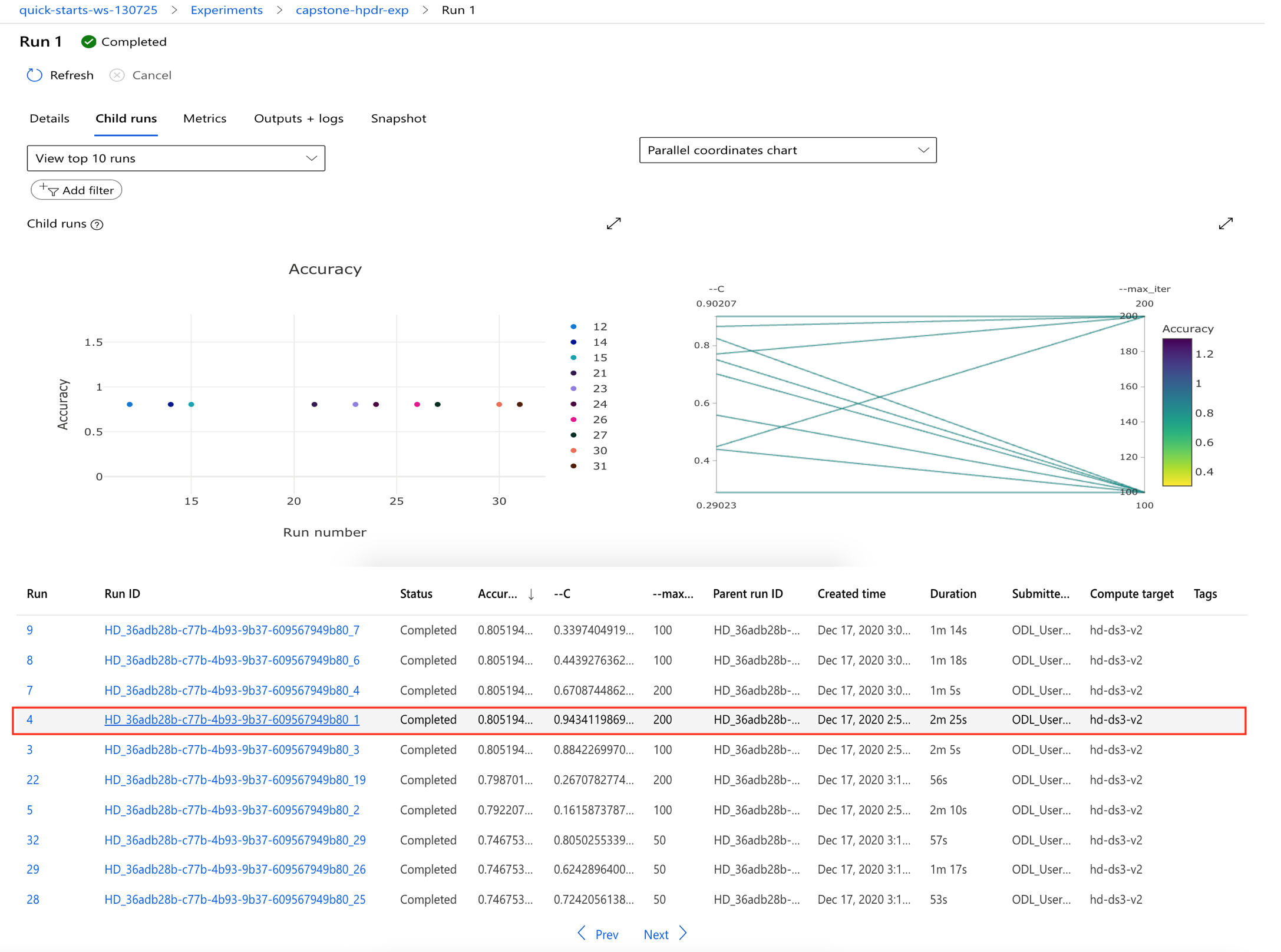

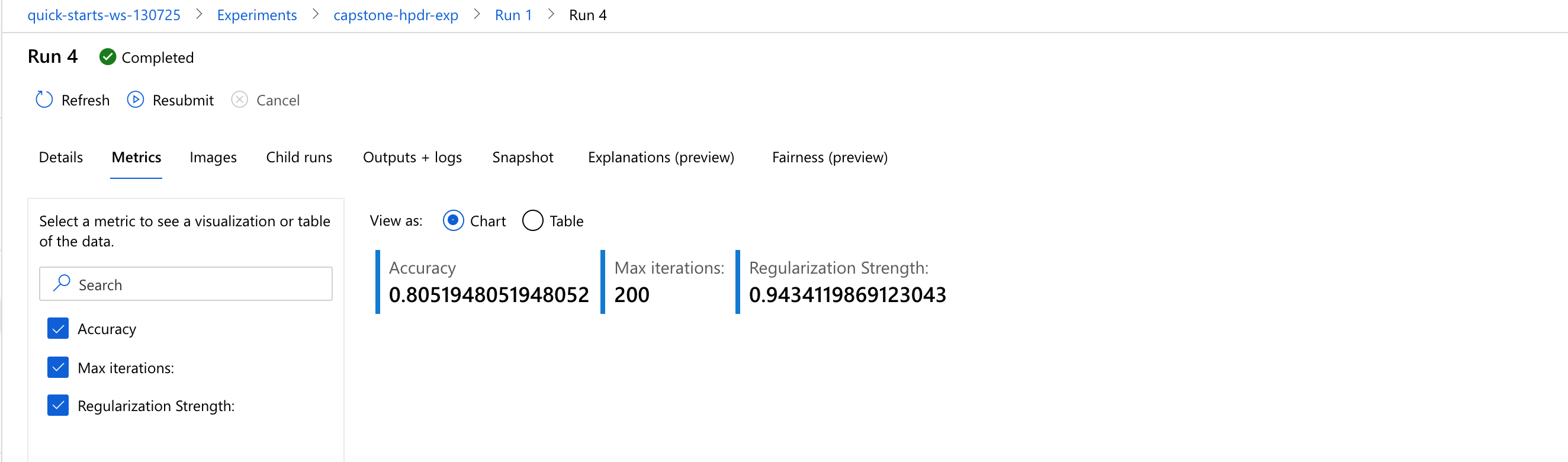

The best model generated by the HyperDrive experiment run was Run 4, with an accuracy of 80.52%. Its metrics and visualization charts are shown below:

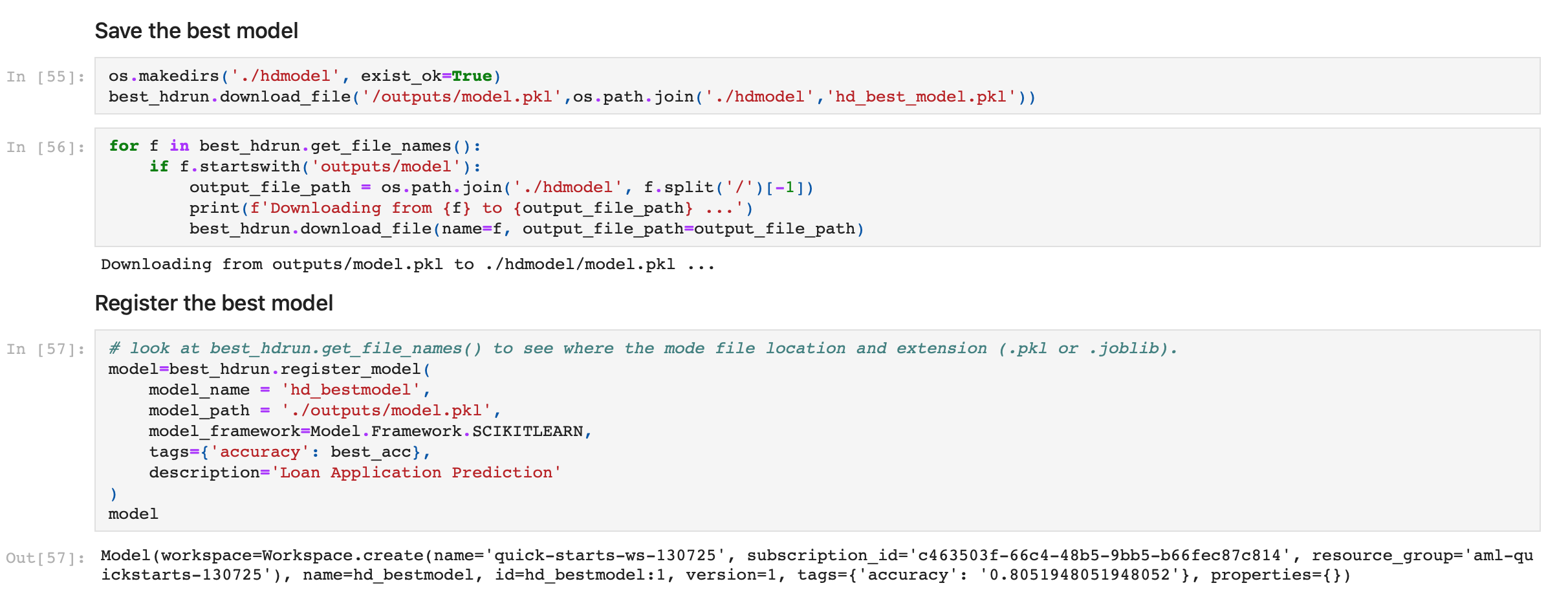

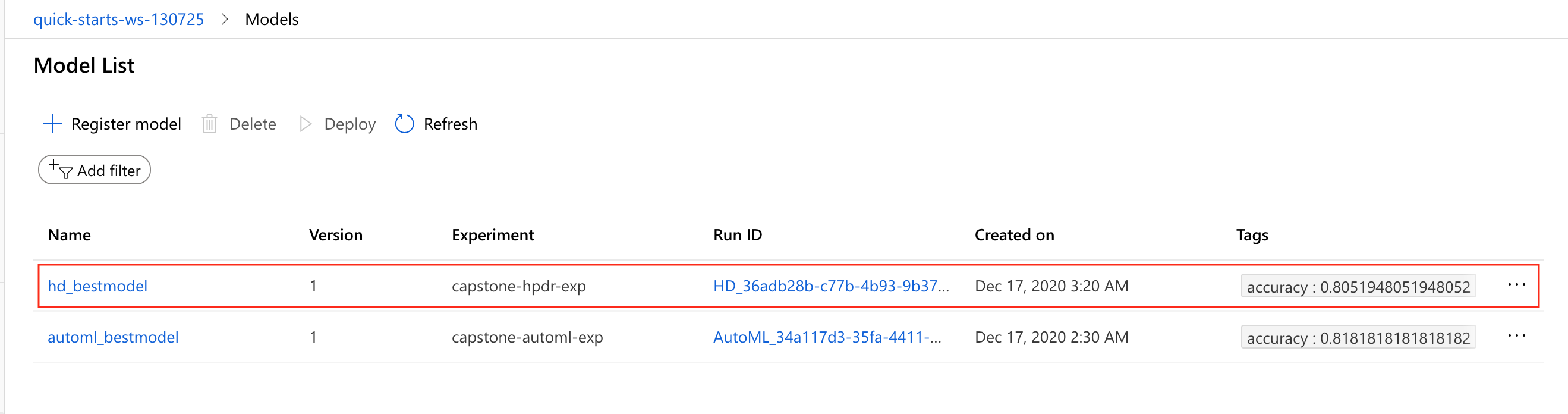

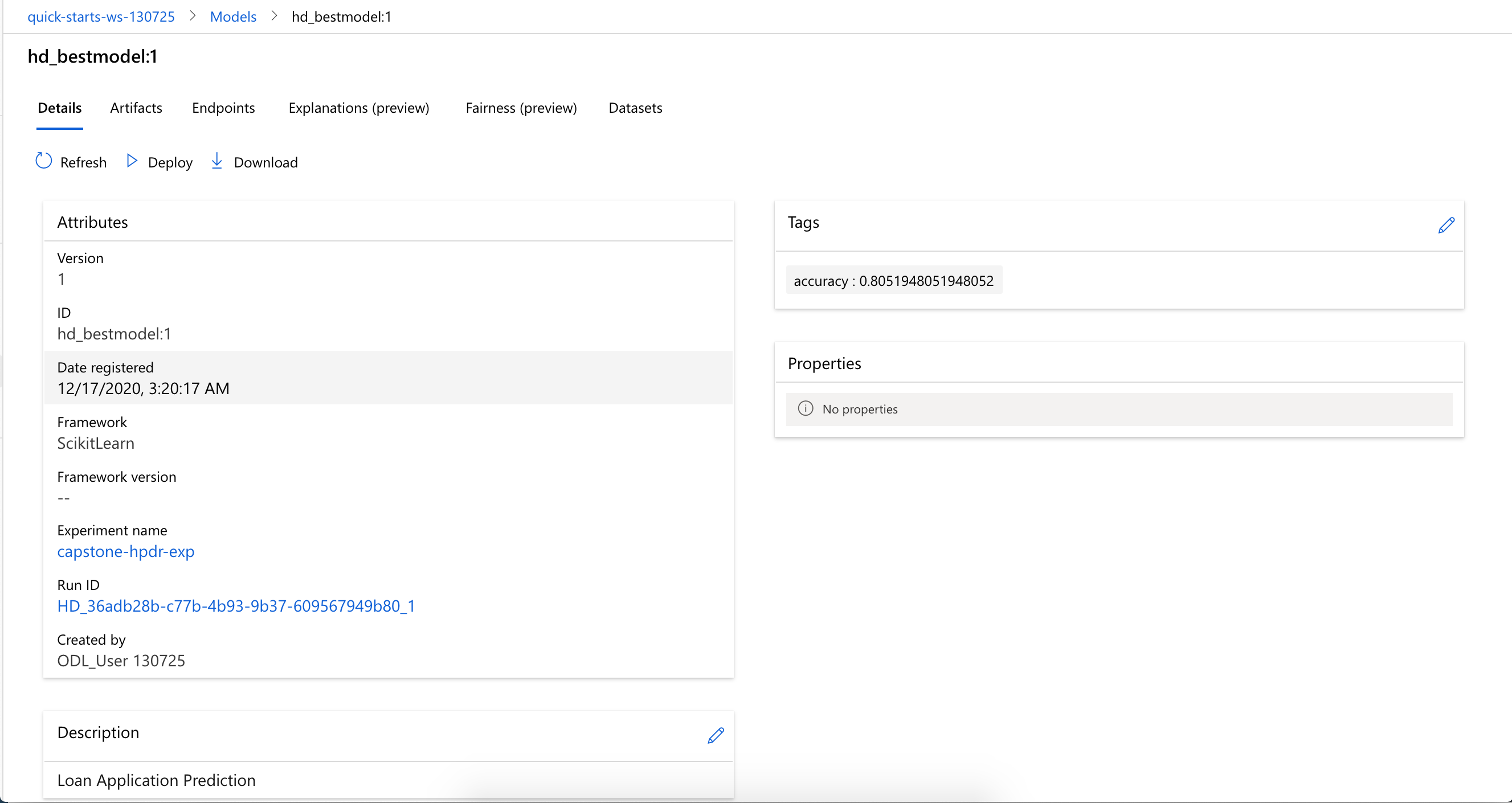

The best model from Run 4 was saved and registered as the best model from the HyperDrive experiment run:

The AutoML and HyperDrive experiment runs used the same dataset, number of iterations (30) and concurrent runs (4) on the same compute cluster type. Even so, the AutoML run finished about 10 minutes faster than the HyperDrive run, as shown here:

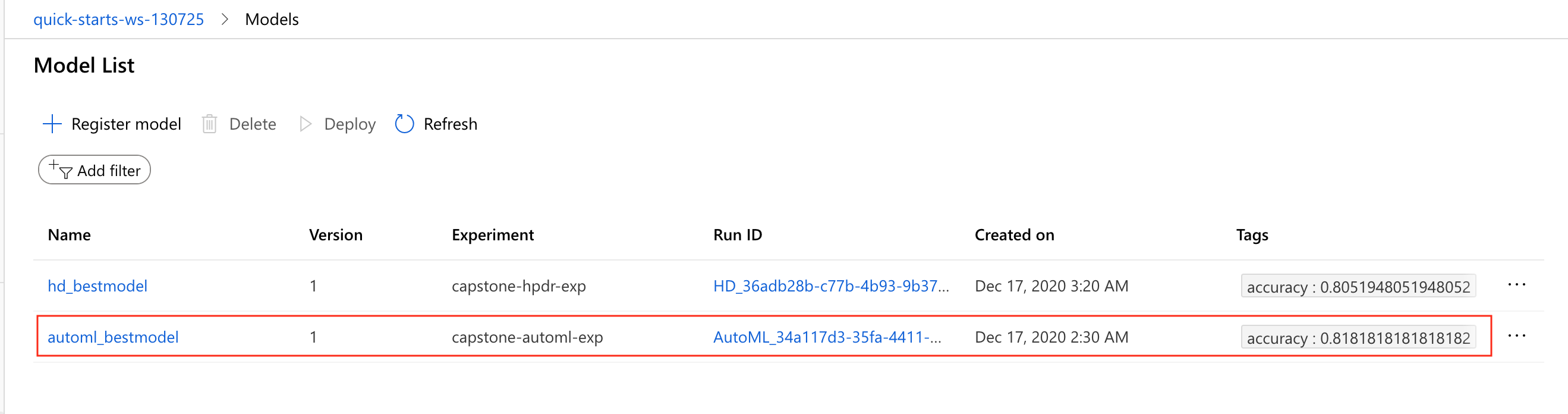

Moreover, the best AutoML model (VotingEnsemble) had an accuracy of 81.82%, compared with HyperDrive's 80.52%, as seen here:

The AutoML model beats the HyperDrive model in both accuracy and run efficiency. AutoML also offers bonus features such as model interpretation and generated deployment artifacts (e.g. the conda environment YAML file and the endpoint scoring script). Together, these make the AutoML model the clear choice for deployment.
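The selection logic can be captured in a few lines. This sketch picks the candidate with the higher accuracy and reports the gaps, using the accuracies and approximate durations quoted in this README (21 and 31 minutes are rounded). The `candidates` structure is hypothetical.

```python
# accuracy and approximate run time (minutes) of each best model, as quoted in this README
candidates = {
    "automl": {"accuracy": 0.8182, "minutes": 21},
    "hyperdrive": {"accuracy": 0.8052, "minutes": 31},
}

# pick the candidate with the highest accuracy for deployment
best = max(candidates, key=lambda name: candidates[name]["accuracy"])
accuracy_gap = candidates["automl"]["accuracy"] - candidates["hyperdrive"]["accuracy"]
time_saved = candidates["hyperdrive"]["minutes"] - candidates["automl"]["minutes"]

print(best)                                 # automl
print(f"accuracy gap: {accuracy_gap:.2%}")  # accuracy gap: 1.30%
print(f"time saved: ~{time_saved} minutes")
```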

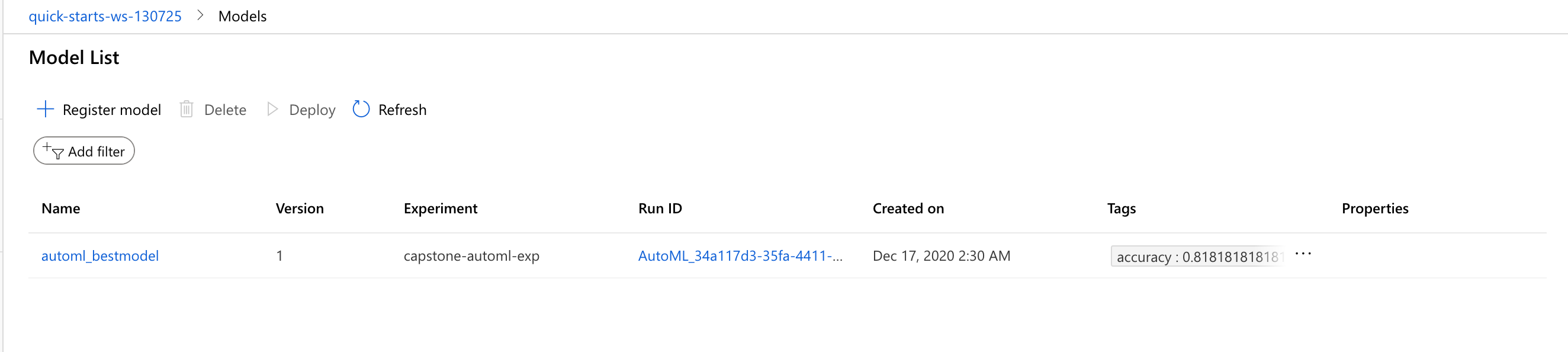

The best AutoML model (VotingEnsemble) was already registered at the end of the AutoML experiment run, using the SDK like this:

```python
from azureml.core.model import Model

# best_amlrun and best_acc are defined earlier in the automl notebook
model = best_amlrun.register_model(
    model_name='automl_bestmodel',
    model_path='./outputs/model.pkl',
    model_framework=Model.Framework.SCIKITLEARN,
    tags={'accuracy': best_acc},
    description='Loan Application Prediction'
)
```

The registered model then appeared on the Models dashboard like so:

To deploy the model, go to the automl notebook and execute the code cells below the markdown cell titled 4.1 Deployment setup, like so:

- configure a deployment environment

```python
from azureml.core import Environment
from azureml.core.model import InferenceConfig
from azureml.core.webservice import AciWebservice

# download the conda environment file produced by AutoML and create an environment
best_amlrun.download_file('outputs/conda_env_v_1_0_0.yml', 'conda_env.yml')
myenv = Environment.from_conda_specification(name='myenv', file_path='conda_env.yml')
```

- configure the inference config

```python
# download the scoring file produced by AutoML
best_amlrun.download_file('outputs/scoring_file_v_1_0_0.py', 'score.py')
# set the inference config
inference_config = InferenceConfig(entry_script='score.py', environment=myenv)
```

- set the ACI web service config

```python
aci_config = AciWebservice.deploy_configuration(cpu_cores=1, memory_gb=1, auth_enabled=True)
```

Next, execute the code cells below the markdown cell titled 4.2 Deploy the model as a web service in the notebook, like so:

- deploy the model as a web service

```python
service = Model.deploy(workspace=ws, name='best-automl-model', models=[model],
                       inference_config=inference_config, deployment_config=aci_config,
                       overwrite=True)
```

- wait for the deployment to finish and query the web service state

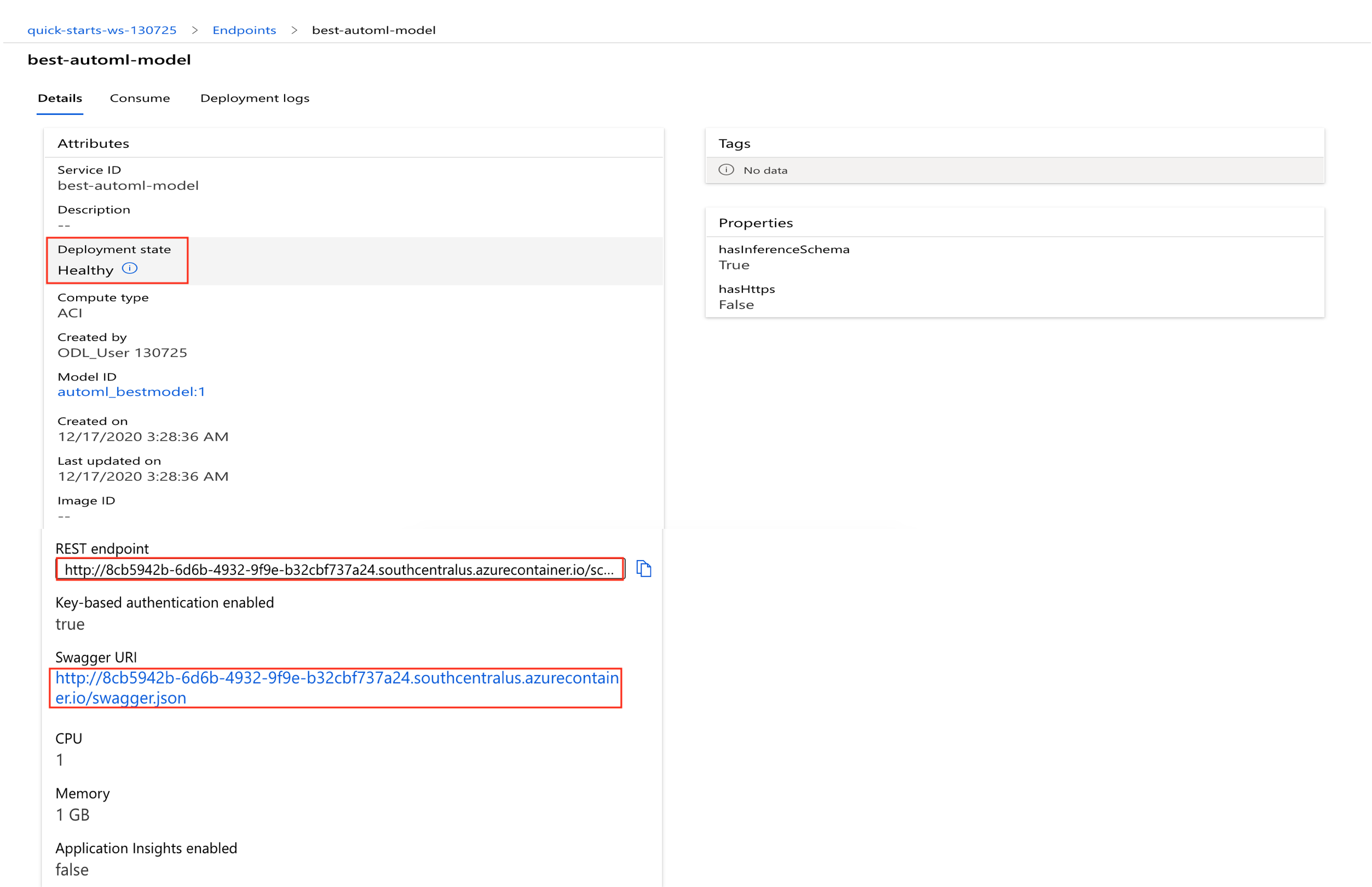

```python
service.wait_for_deployment(show_output=True)
print(f'\nservice state: {service.state}\n')
```

- print the scoring URI, Swagger URI and primary authentication key

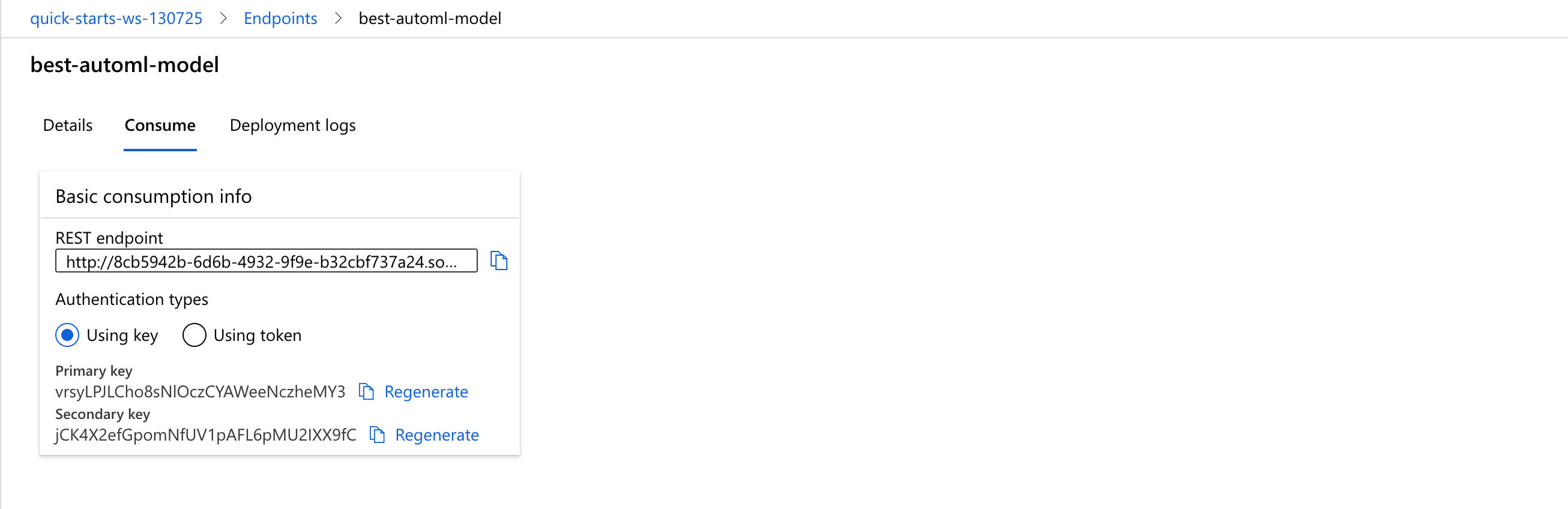

```python
print(f'scoring URI: \n{service.scoring_uri}\n')
print(f'swagger URI: \n{service.swagger_uri}\n')
pkey, skey = service.get_keys()
print(f'primary key: {pkey}')
```

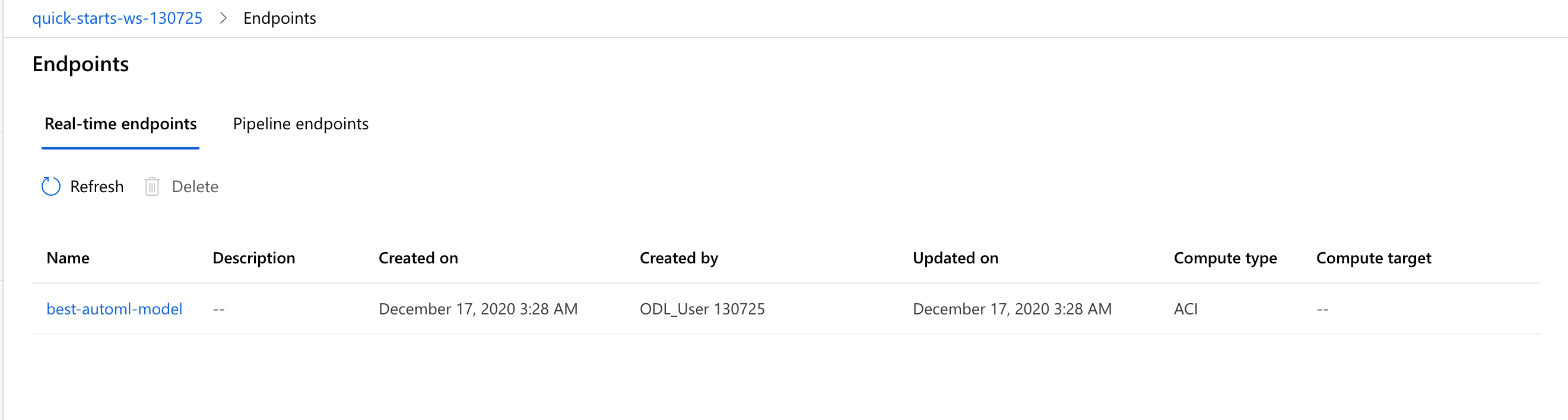

After these code cells were executed, the model was deployed as a web service and appeared on the Endpoints dashboard, as shown in the screenshots below:

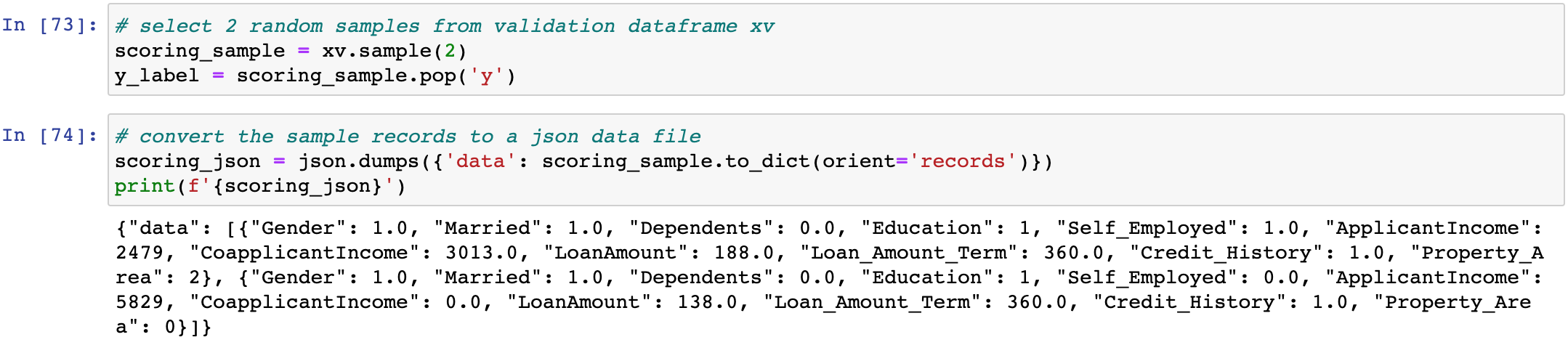

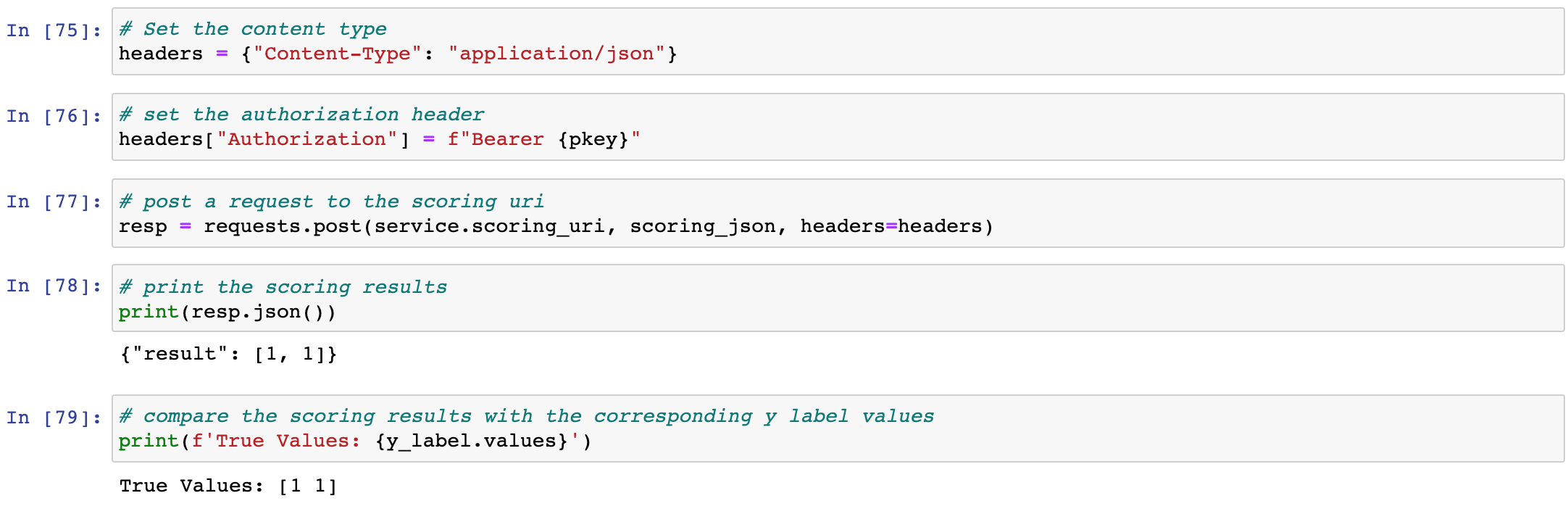

To test the scoring endpoint, execute the code cells below the markdown cell titled 4.3 Testing the web service in the notebook as shown here:

- prepare a sample payload

```python
import json

# select 2 random samples from the validation dataframe xv
scoring_sample = xv.sample(2)
y_label = scoring_sample.pop('y')
# convert the sample records to a JSON string
scoring_json = json.dumps({'data': scoring_sample.to_dict(orient='records')})
print(f'{scoring_json}')
```

- set request headers, post the request and check the response

```python
import requests

# set the content type
headers = {"Content-Type": "application/json"}
# set the authorization header
headers["Authorization"] = f"Bearer {pkey}"
# post a request to the scoring URI
resp = requests.post(service.scoring_uri, scoring_json, headers=headers)
# print the scoring results
print(resp.json())
# compare the scoring results with the corresponding y label values
print(f'True Values: {y_label.values}')
```

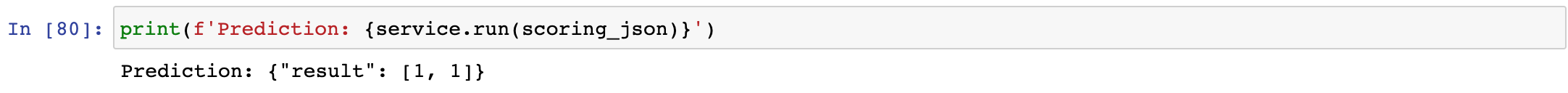

- another way to send a request to the scoring endpoint, without handling the key yourself, is to call the run method of the web service, like so:

```python
# another way to test the scoring URI
print(f'Prediction: {service.run(scoring_json)}')
```

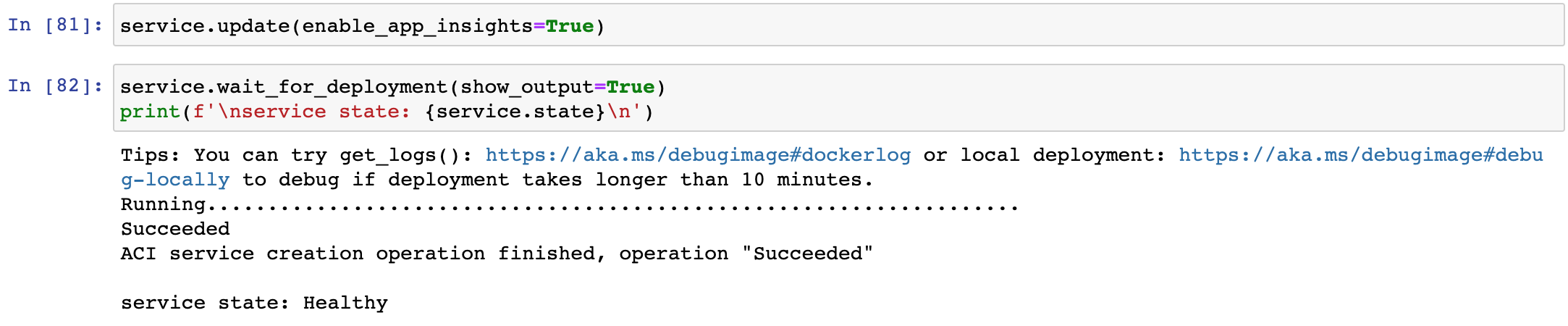
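For clients without `requests` installed, the same authenticated call can be made with Python's standard library. This is a minimal sketch, assuming key-based auth as configured with `auth_enabled=True`. `build_scoring_request` is a hypothetical helper, and the URI, key and record fields below are placeholders. The request is only built here; sending it with `urllib.request.urlopen(req)` needs a live endpoint.

```python
import json
import urllib.request

def build_scoring_request(scoring_uri, records, api_key):
    """Build a POST request carrying the JSON payload and bearer-token header used above."""
    body = json.dumps({"data": records}).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",
    }
    return urllib.request.Request(scoring_uri, data=body, headers=headers, method="POST")

# placeholder values; substitute service.scoring_uri, a real record and the primary key
req = build_scoring_request(
    "http://example.invalid/score",
    [{"Credit_History": 1.0, "ApplicantIncome": 5849}],
    "<primary-key>",
)
print(req.get_method(), req.get_header("Authorization"))

# with a live endpoint:
# with urllib.request.urlopen(req) as resp:
#     print(json.loads(resp.read()))
```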

Next up, optionally enable Application Insights by executing the code cells below the markdown cell titled 4.4 Enable Application Insights in the notebook, as illustrated here:

- enable Application Insights

```python
# update the web service to enable Application Insights
service.update(enable_app_insights=True)
# wait for the deployment to finish, then query the web service state
service.wait_for_deployment(show_output=True)
print(f'\nservice state: {service.state}\n')
```

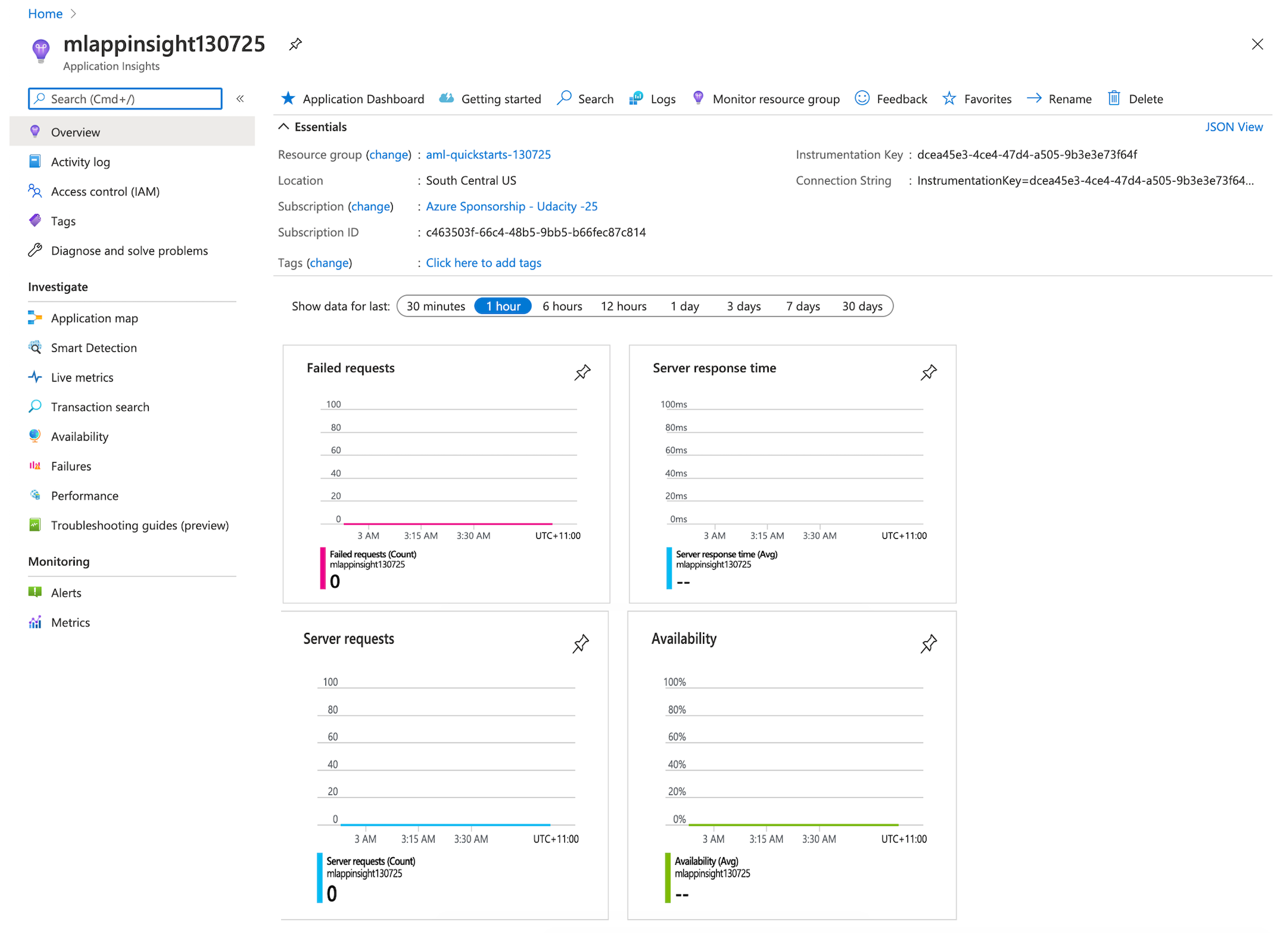

Application Insights collects useful data from the web service endpoint, such as:

- output data
- responses
- request rates, response times, and failure rates
- dependency rates, response times, and failure rates
- exceptions

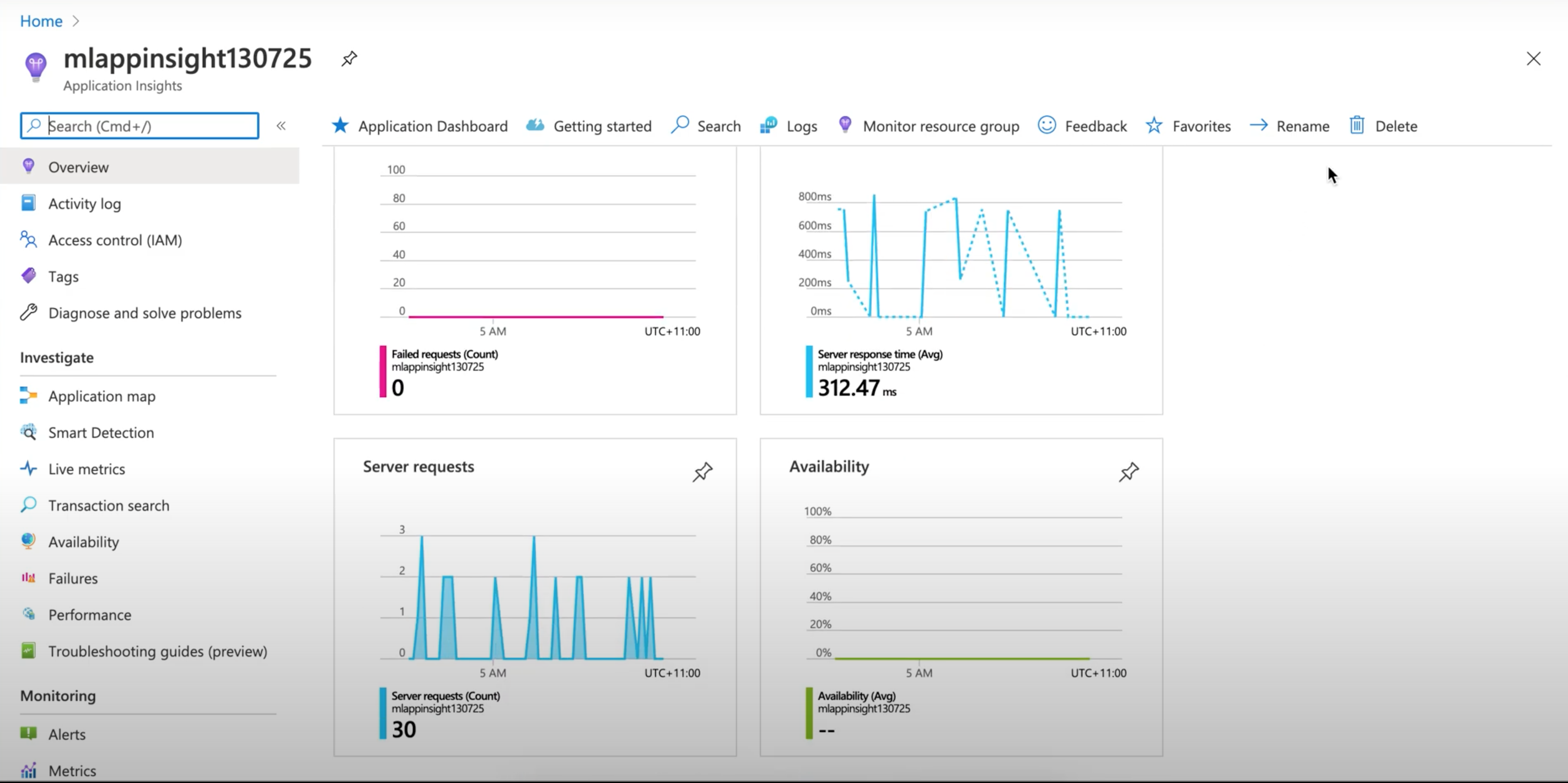

This data is useful for monitoring the endpoint. Application Insights also automatically detects performance anomalies and includes powerful analytics tools to help you diagnose issues and understand what users actually do with your app. It is designed to help you continuously improve performance and usability.

For example, the dashboard showed the 30 scoring requests I sent to the endpoint, with an average response time of 312.47 ms and no failed requests:

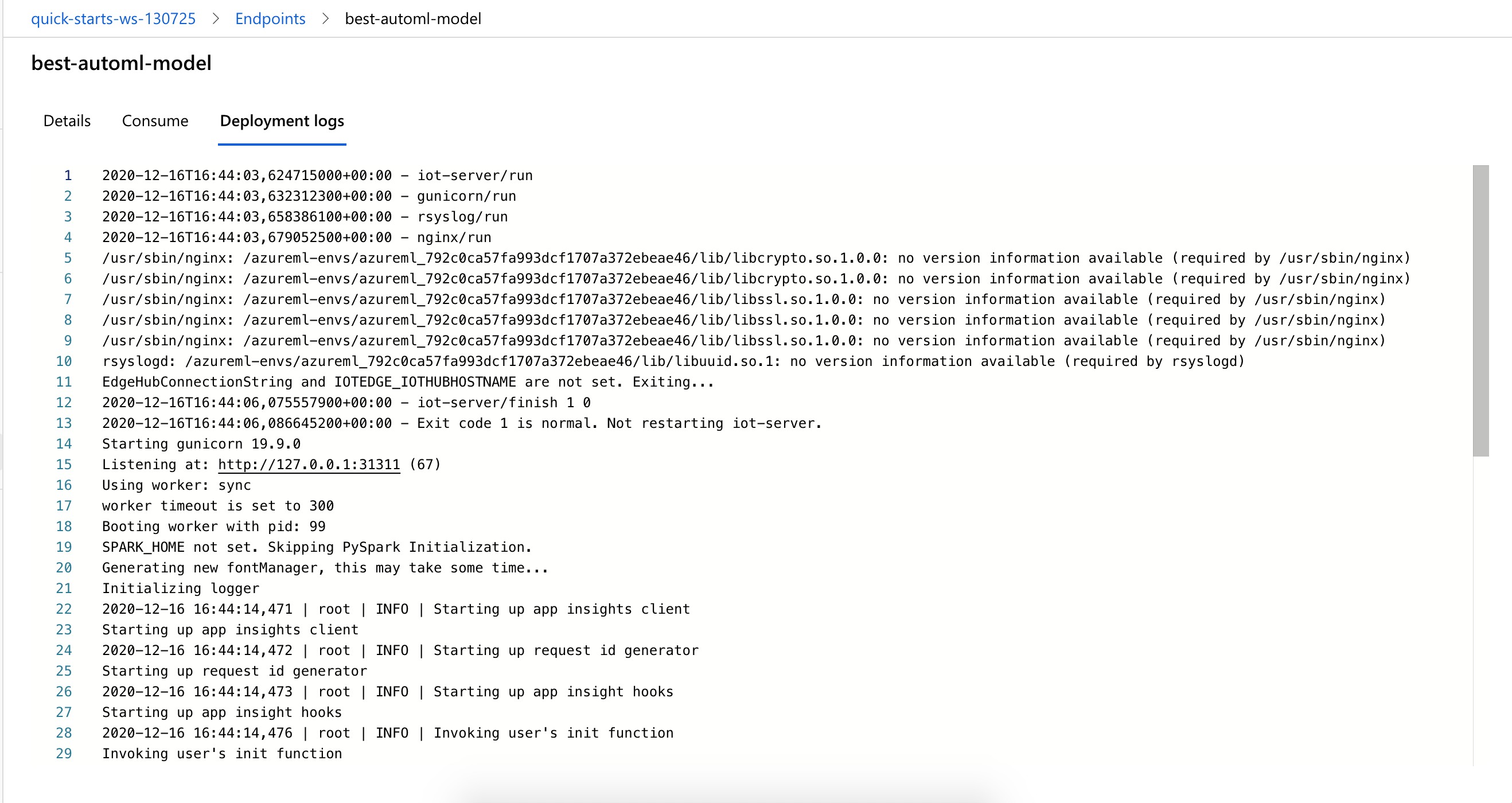

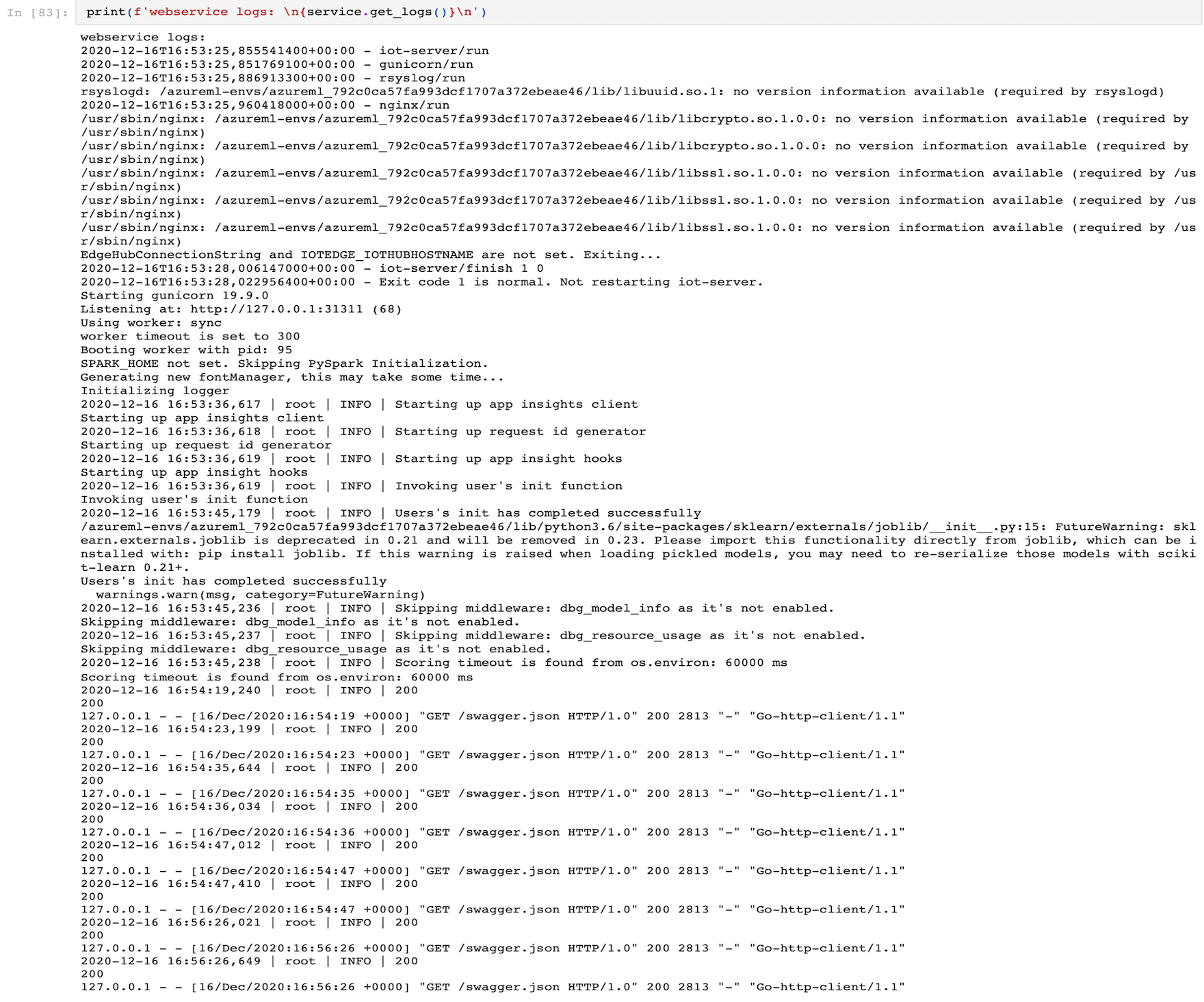
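Application Insights measures response times on the server side. A rough client-side cross-check is to time a batch of requests yourself. The sketch below assumes a hypothetical `send` callable, for example a wrapper around the `requests.post` call shown earlier. It uses a stub in place of the real endpoint, so the numbers it prints are not comparable to the dashboard figures. `measure_latency` and `stub_send` are hypothetical helpers.

```python
import statistics
import time

def measure_latency(send, payload, n_requests=30):
    """Call send(payload) n_requests times; return (mean latency in ms, failure count).

    Any exception raised by send counts as a failed request.
    """
    latencies_ms, failures = [], 0
    for _ in range(n_requests):
        start = time.perf_counter()
        try:
            send(payload)
        except Exception:
            failures += 1
            continue
        latencies_ms.append((time.perf_counter() - start) * 1000)
    mean_ms = statistics.mean(latencies_ms) if latencies_ms else float("nan")
    return mean_ms, failures

def stub_send(payload):
    """Stand-in for the real endpoint call: sleeps about 5 ms and returns a fixed answer."""
    time.sleep(0.005)
    return {"result": [1]}

mean_ms, failures = measure_latency(stub_send, '{"data": []}')
print(f"mean latency: {mean_ms:.1f} ms, failed requests: {failures}")
```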

To print the logs of the web service, run the code cells below the markdown cell titled 4.5 Printing the logs of the web service in the notebook, like so:

- print the logs of the web service

```python
# print the logs by calling the get_logs() function of the web service
print(f'webservice logs: \n{service.get_logs()}\n')
```

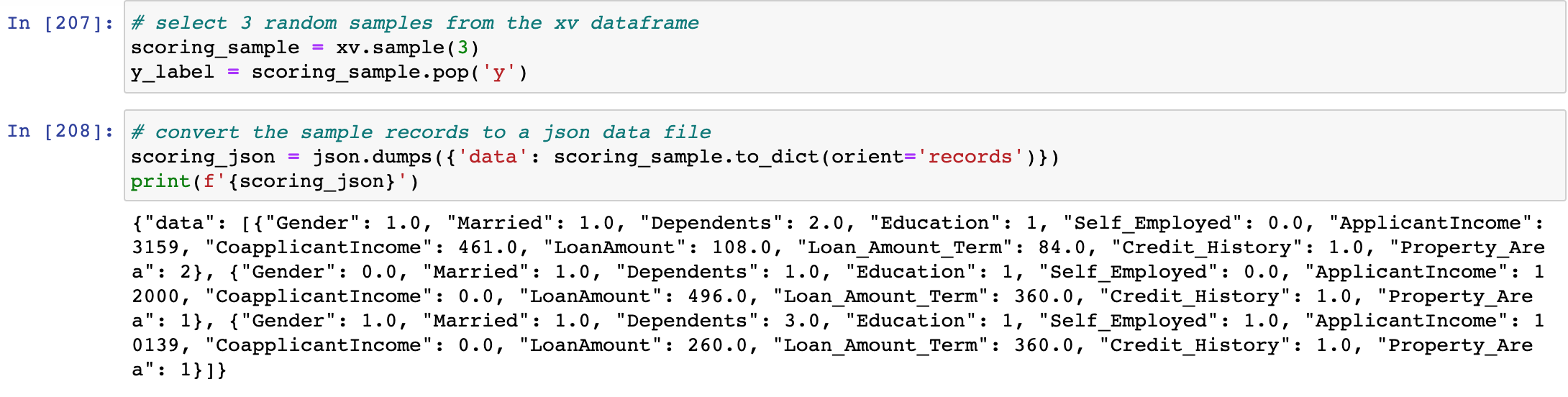

Lastly, to run a demo of the active web service scoring endpoint, run the code cells under the markdown cell titled 4.6 Active web service endpoint Demo in the notebook as shown here:

- prepare a sample payload

```python
import json

# select 3 random samples from the validation dataframe xv
scoring_sample = xv.sample(3)
y_label = scoring_sample.pop('y')
# convert the sample records to a JSON string
scoring_json = json.dumps({'data': scoring_sample.to_dict(orient='records')})
print(f'{scoring_json}')
```

- set request headers, post the request and check the response

```python
import requests

# set the content type
headers = {"Content-Type": "application/json"}
# set the authorization header
headers["Authorization"] = f"Bearer {pkey}"
# post a request to the scoring URI
resp = requests.post(service.scoring_uri, scoring_json, headers=headers)
# print the scoring results
print(resp.json())
# compare the scoring results with the corresponding y label values
print(f'True Values: {y_label.values}')
# another way to test the scoring URI
print(f'Prediction: {service.run(scoring_json)}')
```

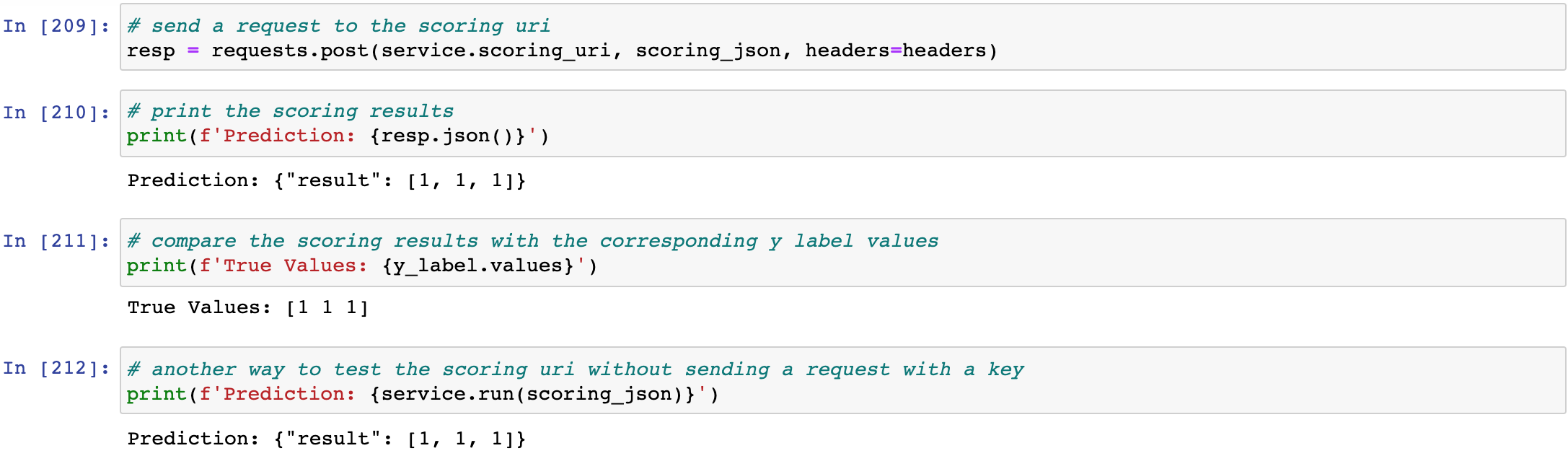

Finally, to delete the deployed web service, run the code cells under the markdown cell titled 5. Clean Up in the notebook:

- The deployment steps described above deploy the best AutoML model. To deploy the best HyperDrive model instead, simply execute the code cells under the 4. Model Deployment section of the hyperparameter_tuning notebook.

- The best model file used in this project's deployment can be found in the best_model folder.

- The registered model files from the AutoML and HyperDrive experiment runs in this project can be found in the registered_model folder.

- This conda environment file contains the deployment environment details and must be included in the model deployment.

- This scoring script file contains the functions that initialize the deployed web service at startup and run the model on request data passed in by a client call. It must be included in the model deployment.
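Azure ML entry scripts follow an `init()`/`run()` contract: `init()` is called once when the container starts, and `run()` is called for each request. The sketch below shows that shape. A hypothetical stub model stands in for the pickled VotingEnsemble, so the sketch runs without Azure ML. The real AutoML-generated `score.py` loads the registered model file and declares its input schema with `inference-schema`; both are omitted here. `StubModel` and its approval rule are invented for illustration.

```python
import json

model = None  # populated once by init(), reused by every run() call

class StubModel:
    """Hypothetical stand-in for the deployed model: approves when Credit_History == 1."""

    def predict(self, records):
        return [1 if r.get("Credit_History") == 1 else 0 for r in records]

def init():
    """Called once at service start-up; the real script would load the model file here."""
    global model
    model = StubModel()

def run(raw_data):
    """Called per request with the raw JSON body: {"data": [record, ...]}."""
    try:
        records = json.loads(raw_data)["data"]
        predictions = model.predict(records)
        return json.dumps({"result": predictions})
    except Exception as exc:  # report the error to the caller instead of crashing the service
        return json.dumps({"error": str(exc)})

init()
print(run(json.dumps({"data": [{"Credit_History": 1}, {"Credit_History": 0}]})))
```

Loading the model once in `init()` rather than in every `run()` call keeps per-request latency down.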

A screencast showing:

- a working model
- a demo of the deployed model
- a demo of a sample request sent to the endpoint and its response

is available here:

A small dataset was chosen for this project with the resource and time constraints of the Udacity project workspace in mind. Without those constraints, we could try the following improvement ideas:

- increase the model training time
- apply AutoML's model interpretability to larger and more complex datasets, to speed up feature engineering and gain insights that can in turn help improve model accuracy further
- experiment with different hyperparameter sampling methods, such as grid sampling or Bayesian sampling, on the scikit-learn LogisticRegression model or other custom-coded machine learning models
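Grid sampling, one of the ideas above, only works with discrete values. The continuous `uniform(0.1, 1.0)` range for `C` would therefore have to be replaced by a `choice` of fixed values. The sketch below enumerates such a grid with `itertools.product` to show how many runs it implies. The `C` values are an arbitrary example, not a tuned recommendation.

```python
from itertools import product

# discrete search space: C must become a choice() for grid sampling (values are an example)
search_space = {
    "--C": [0.1, 0.5, 1.0],
    "--max_iter": [50, 100, 200],
}

# every combination of the listed values is one run
grid = [dict(zip(search_space, values)) for values in product(*search_space.values())]
print(len(grid))  # 9
print(grid[0])    # {'--C': 0.1, '--max_iter': 50}
```

A 9-run grid fits easily within the 30-run budget used for random sampling. However, the number of runs grows multiplicatively with every extra value or hyperparameter, which is why random or Bayesian sampling is usually preferred for larger search spaces.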

The screenshots referenced in this README can be found in the folder named assets. A short description of each screenshot (an asterisk marks a mandatory submission screenshot) and a link to it are listed here:

Common:

AutoML:

- AutoML Experiment Run completed

- AutoML RunDetails widget *

- AutoML Best Model *

- AutoML Best Model with its run id *

- AutoML Best Model Details

- AutoML Best Model Training Algorithms

- AutoML Best Model Key Parameters

- AutoML Best Model Accuracy and Run Id

- AutoML Best Model Estimator Details

- AutoML Best Model Metrics

- AutoML Explainer: Dataset Exploration

- AutoML Explainer: Global Importance

- AutoML Explainer: Explanation Exploration

- AutoML Explainer: Summary Importance

- Saving and Registering AutoML Best Model

- AutoML Registered Best Model

- AutoML Registered Best Model Details

HyperDrive:

- HyperDrive Experiment Run Completion

- HyperDrive RunDetails Widget *

- HyperDrive RunDetails Widget Metrics Visual *

- Visualization of HyperDrive Training Progress and Performance of Hyperparameter Runs *

- HyperDrive Best Model *

- HyperDrive Best Model Hyperparameters and Run Id *

- HyperDrive Best Model Run Id and Tuned Hyperparameters *

- HyperDrive Best Model Metrics

- Saving and Registering HyperDrive Best Model

- HyperDrive Registered Best Model

- HyperDrive Registered Best Model Details

Deployment:

- AutoML and HyperDrive Experiment Run Duration Comparison

- Best Model Pick for Deployment

- Best Model Endpoint

- Active Best Model Endpoint *

- Best Model Endpoint Consume

- Best Model Deployment logs

- Enable Application Insights

- Application Insights URL

- Application Insights Dashboard

- Monitoring Scoring Endpoint with Application Insights

- Printing Logs of the Web Service

- Prepare Data Payload for Testing Scoring Endpoint

- Testing Scoring Endpoint

- Alternate way for Testing Scoring Endpoint

- Inference Demo: Preparing Data Payload

- Inference Demo: Posting Request

- Delete Web Service
