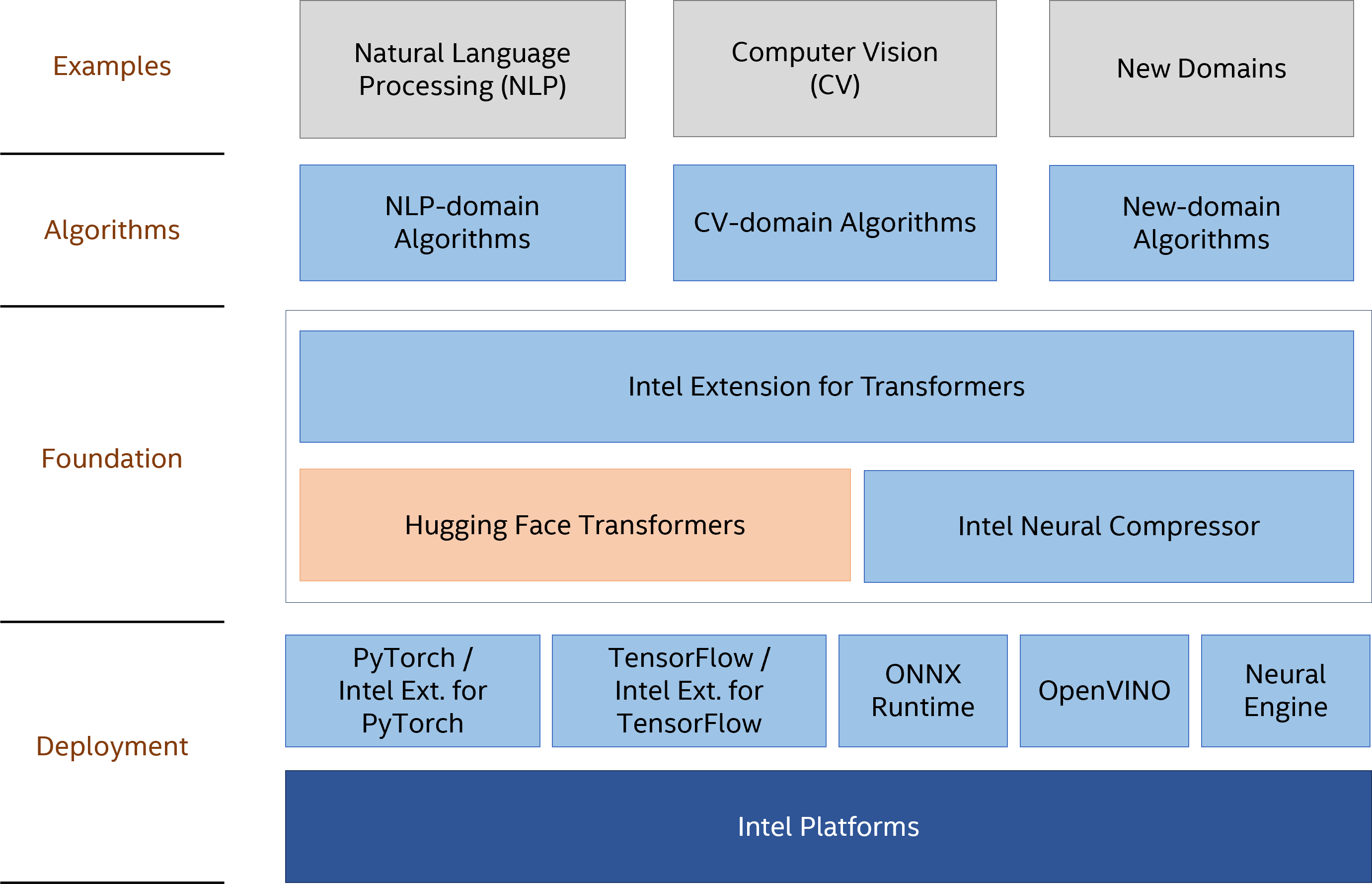

Intel® Extension for Transformers is an innovative toolkit to accelerate Transformer-based models on Intel platforms. The toolkit improves developer productivity through easy-to-use model compression APIs that extend the Hugging Face transformers APIs. The compression infrastructure builds on Intel® Neural Compressor, which provides a rich set of model compression techniques, including quantization, pruning, and distillation. The toolkit also provides Transformers-accelerated Libraries and Neural Engine to demonstrate the performance of extremely compressed models and thereby significantly improve inference efficiency on Intel platforms. Some of the key features were published at NeurIPS 2021 and 2022.

This toolkit helps developers improve the productivity of inference deployment by extending the Hugging Face transformers APIs for Transformer-based models in the natural language processing (NLP) domain. With extremely compressed models, the toolkit can greatly improve inference efficiency on Intel platforms.

- Model Compression

  | Framework | Quantization | Pruning/Sparsity | Distillation | Neural Architecture Search |
  |---|---|---|---|---|
  | PyTorch | ✔ | ✔ | ✔ | ✔ |
  | TensorFlow | ✔ | ✔ | ✔ | Stay tuned ⭐ |

- Data Augmentation for NLP Datasets

- Transformers-accelerated Neural Engine

- Transformers-accelerated Libraries

- Domain Algorithms

  | Length Adaptive Transformer |
  |---|
  | PyTorch ✔ |

- Architecture of Intel® Extension for Transformers

| OVERVIEW | | | |
|---|---|---|---|
| Model Compression | Neural Engine | Kernel Libraries | Examples |
| MODEL COMPRESSION | | | |
| Quantization | Pruning | Distillation | Orchestration |
| Neural Architecture Search | Export | Metrics/Objectives | Pipeline |
| NEURAL ENGINE | | | |
| Model Compilation | Custom Pattern | Deployment | Profiling |
| KERNEL LIBRARIES | | | |
| Sparse GEMM Kernels | Custom INT8 Kernels | Profiling | Benchmark |
| ALGORITHMS | | | |
| Length Adaptive | Data Augmentation | | |
| TUTORIALS AND RESULTS | | | |
| Tutorials | Supported Models | Model Performance | Kernel Performance |

```bash
# Option 1: install the released package from PyPI
pip install intel-extension-for-transformers

# Option 2: install from source
git clone https://github.com/intel/intel-extension-for-transformers.git intel_extension_for_transformers
cd intel_extension_for_transformers
# Install dependencies
pip install -r requirements.txt
git submodule update --init --recursive
# Install intel_extension_for_transformers
python setup.py install
```

Note: We recommend installing protobuf <= 3.20.0 if you use onnxruntime <= 1.11.

```python
from intel_extension_for_transformers.optimization import QuantizationConfig, metrics, objectives
from intel_extension_for_transformers.optimization.trainer import NLPTrainer

# Replace transformers.Trainer with NLPTrainer
# trainer = transformers.Trainer(...)
trainer = NLPTrainer(...)
# Metric to monitor while tuning the quantized model
metric = metrics.Metric(name="eval_f1", is_relative=True, criterion=0.01)
q_config = QuantizationConfig(
    approach="PostTrainingStatic",
    metrics=[metric],
    objectives=[objectives.performance]
)
model = trainer.quantize(quant_config=q_config)
```

Please refer to the quantization document for more details.
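To make the idea behind post-training static quantization more concrete, here is a minimal, library-independent sketch. It assumes symmetric per-tensor INT8 quantization with a scale derived from calibration samples; the function names are illustrative and are not part of the toolkit's API, and the toolkit's actual scheme may differ.

```python
def calibrate_scale(samples, num_bits=8):
    """Pick a symmetric scale so the largest calibration value maps to the integer limit."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for INT8
    max_abs = max(abs(x) for x in samples)
    return max_abs / qmax if max_abs > 0 else 1.0


def quantize(values, scale, num_bits=8):
    """Map floats to integers, clamping values outside the calibrated range."""
    qmax = 2 ** (num_bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def dequantize(q_values, scale):
    """Map integers back to approximate float values."""
    return [q * scale for q in q_values]


# "Static" means the scale is fixed ahead of time from calibration data,
# not recomputed for every input at inference time.
calibration_data = [-1.27, -0.5, 0.0, 0.25, 1.0]
scale = calibrate_scale(calibration_data)

q = quantize([0.5, -1.27, 2.0], scale)  # 2.0 lies outside the calibrated range and is clamped
restored = dequantize(q, scale)
print(q, restored)
```

The clamped value shows why representative calibration data matters: anything outside the observed range loses information after quantization.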

```python
from intel_extension_for_transformers.optimization import PrunerConfig, PruningConfig, metrics
from intel_extension_for_transformers.optimization.trainer import NLPTrainer

# Replace transformers.Trainer with NLPTrainer
# trainer = transformers.Trainer(...)
trainer = NLPTrainer(...)
metric = metrics.Metric(name="eval_accuracy")
# Magnitude-based pruning toward 90% sparsity
pruner_config = PrunerConfig(prune_type='BasicMagnitude', target_sparsity_ratio=0.9)
p_conf = PruningConfig(pruner_config=[pruner_config], metrics=metric)
model = trainer.prune(pruning_config=p_conf)
```

Please refer to the pruning document for more details.
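As a rough illustration of what magnitude pruning with a target sparsity ratio means, the sketch below zeroes out the smallest-magnitude entries of a flat weight list. It is a simplified one-shot version with a hypothetical helper name; the toolkit's `BasicMagnitude` pruner may prune gradually during training and operate per layer.

```python
def magnitude_prune(weights, target_sparsity):
    """Zero out the smallest-magnitude weights until target_sparsity is reached."""
    num_to_prune = int(round(len(weights) * target_sparsity))
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:num_to_prune]:
        pruned[i] = 0.0
    return pruned


weights = [0.9, -0.05, 0.3, 0.01, -0.7, 0.02, 0.15, -0.4, 0.08, 0.6]
pruned = magnitude_prune(weights, target_sparsity=0.9)
sparsity = pruned.count(0.0) / len(pruned)
print(pruned, sparsity)
```

With ten weights and a ratio of 0.9, only the single largest-magnitude weight survives.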

```python
from intel_extension_for_transformers.optimization import DistillationConfig, Criterion, metrics
from intel_extension_for_transformers.optimization.trainer import NLPTrainer

# Replace transformers.Trainer with NLPTrainer
# trainer = transformers.Trainer(...)
teacher_model = ...  # an existing model used as the teacher
trainer = NLPTrainer(...)
metric = metrics.Metric(name="eval_accuracy")
d_conf = DistillationConfig(metrics=metric)
model = trainer.distill(distillation_config=d_conf, teacher_model=teacher_model)
```

Please refer to the distillation document for more details.
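For readers new to distillation, the following sketch shows one common form of the distillation objective: the KL divergence between temperature-softened teacher and student distributions. This is a generic textbook formulation with illustrative function names, not necessarily the criterion the toolkit applies by default.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature squared."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [2.0, 1.0, 0.1]
print(distillation_loss([2.0, 1.0, 0.1], teacher))  # student matches the teacher
print(distillation_loss([0.1, 1.0, 2.0], teacher))  # student disagrees with the teacher
```

The loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge.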

Data augmentation provides facilities to generate synthesized NLP datasets for further model optimization. It supports text generation with popular fine-tuned models such as GPT and GPT-2, as well as other text synthesis approaches from nlpaug.

```python
import os

from datasets import load_dataset
from intel_extension_for_transformers.preprocessing.data_augmentation import DataAugmentation

result_path = "./augmented"  # placeholder output directory; adjust as needed

aug = DataAugmentation(augmenter_type="TextGenerationAug")
aug.input_dataset = "original_dataset.csv"  # example: https://huggingface.co/datasets/glue/viewer/sst2/train
aug.column_names = "sentence"
aug.output_path = os.path.join(result_path, "test2.csv")
aug.augmenter_arguments = {'model_name_or_path': 'gpt2-medium'}
aug.data_augment()
# The augmented file is tab-delimited
raw_datasets = load_dataset("csv", data_files=aug.output_path, delimiter="\t", split="train")
```

Please refer to the data augmentation document for more details.

Neural Engine is one of the reference deployments that Intel® Extension for Transformers provides. Neural Engine aims to demonstrate the optimal performance of extremely compressed NLP models by exploring optimization opportunities in both hardware and software.

```python
from intel_extension_for_transformers.backends.neural_engine.compile import compile

# /path/to/your/model is a TensorFlow pb model or ONNX model
model = compile('/path/to/your/model')
inputs = ...  # [input_ids, segment_ids, input_mask]
model.inference(inputs)
```

Please refer to Neural Engine for more details.
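The `inputs` placeholder above is described only as `[input_ids, segment_ids, input_mask]`. As a sketch of how such a triple is typically built for a single BERT-style sequence, the hypothetical helper below pads a list of token IDs and derives the other two lists. The padding ID, the example token IDs, and the use of plain Python lists are assumptions; the engine may expect batched arrays of a specific dtype.

```python
def build_inputs(token_ids, max_seq_len, pad_id=0):
    """Pad one tokenized sequence and build BERT-style input lists."""
    ids = token_ids[:max_seq_len]            # truncate overly long sequences
    pad = max_seq_len - len(ids)
    input_ids = ids + [pad_id] * pad
    segment_ids = [0] * max_seq_len          # single-sentence input: every token in segment 0
    input_mask = [1] * len(ids) + [0] * pad  # 1 for real tokens, 0 for padding
    return [input_ids, segment_ids, input_mask]


# Example token IDs are illustrative only.
inputs = build_inputs([101, 7592, 2088, 102], max_seq_len=8)
print(inputs)
```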

Quantized Length Adaptive Transformer combines sequence-length reduction with low-bit representation to further improve inference performance. The sequence length can be adapted to different computational budgets while keeping a good accuracy-efficiency tradeoff.

```python
from intel_extension_for_transformers.optimization import QuantizationConfig, DynamicLengthConfig, metrics, objectives
from intel_extension_for_transformers.optimization.trainer import NLPTrainer

# Replace transformers.Trainer with NLPTrainer
# trainer = transformers.Trainer(...)
trainer = NLPTrainer(...)
metric = metrics.Metric(name="eval_f1", is_relative=True, criterion=0.01)
q_config = QuantizationConfig(
    approach="PostTrainingStatic",
    metrics=[metric],
    objectives=[objectives.performance]
)

# Apply the length config
length_config = ...  # placeholder: the sequence-length configuration to apply
dynamic_length_config = DynamicLengthConfig(length_config=length_config)
trainer.set_dynamic_config(dynamic_config=dynamic_length_config)

# Quantization
model = trainer.quantize(quant_config=q_config)
```

Please refer to the QuaLA-MiniLM paper and code for details.
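The sequence-length reduction idea can be sketched without the toolkit. The toy example below assumes that a length configuration is a tuple giving the number of tokens retained after each layer, and that tokens are ranked by an importance score. The fixed `importance` table is a stand-in for whatever per-layer signal the real model computes, and the function name is hypothetical.

```python
def apply_length_config(tokens, importance, length_config):
    """Shrink the sequence layer by layer, keeping the most important tokens in order."""
    lengths = []
    for keep in length_config:
        # Rank current positions by importance, highest first.
        ranked = sorted(range(len(tokens)), key=lambda i: importance[tokens[i]], reverse=True)
        kept_positions = sorted(ranked[:keep])  # restore original token order
        tokens = [tokens[i] for i in kept_positions]
        lengths.append(len(tokens))
    return tokens, lengths


tokens = ["[CLS]", "the", "movie", "was", "really", "great", "[SEP]"]
importance = {"[CLS]": 1.0, "the": 0.1, "movie": 0.7, "was": 0.2,
              "really": 0.4, "great": 0.9, "[SEP]": 0.8}

final_tokens, lengths = apply_length_config(tokens, importance, length_config=(6, 4, 3))
print(final_tokens, lengths)
```

Later layers process fewer tokens, which is where the compute savings come from; choosing a different tuple trades accuracy for speed.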

Transformers-accelerated Neural Engine is one of the reference deployments that Intel® Extension for Transformers provides. Neural Engine aims to demonstrate the optimal performance of extremely compressed NLP models by exploring optimization opportunities in both hardware and software.

```python
from intel_extension_for_transformers.backends.neural_engine.compile import compile

# /path/to/your/model is a TensorFlow pb model or ONNX model
model = compile('/path/to/your/model')
inputs = ...  # [input_ids, segment_ids, input_mask]
model.inference(inputs)
```

Please refer to the example in Transformers-accelerated Neural Engine and the paper Fast DistilBERT on CPUs for more details.

Transformers-accelerated Libraries is a high-performance operator computing library implemented in assembly. It contains a JIT domain, a kernel domain, and a scheduling proxy framework.

```cpp
#include "interface.hpp"
...
operator_desc op_desc(ker_kind, ker_prop, eng_kind, ts_descs, op_attrs);
sparse_matmul_desc spmm_desc(op_desc);
sparse_matmul spmm_kern(spmm_desc);
std::vector<const void*> rt_data = {data0, data1, data2, data3, data4};
spmm_kern.execute(rt_data);
```

Please refer to Transformers-accelerated Libraries for more details.
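To show what a sparse matrix multiplication kernel computes, here is a small reference sketch in pure Python. It assumes a CSR (compressed sparse row) layout for the sparse operand, which is a common textbook format chosen for clarity; the library's own kernels are JIT-generated and may use a different sparse format.

```python
def to_csr(dense):
    """Convert a dense row-major matrix to CSR (values, column indices, row pointers)."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_idx.append(j)
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def csr_matmul(csr, dense_rhs):
    """Multiply a CSR matrix by a dense matrix, touching only the stored non-zeros."""
    values, col_idx, row_ptr = csr
    n_cols = len(dense_rhs[0])
    out = []
    for r in range(len(row_ptr) - 1):
        acc = [0.0] * n_cols
        for k in range(row_ptr[r], row_ptr[r + 1]):
            v, j = values[k], col_idx[k]
            for c in range(n_cols):
                acc[c] += v * dense_rhs[j][c]
        out.append(acc)
    return out


sparse_weight = [[0, 2, 0], [0, 0, 0], [1, 0, 3]]
activations = [[1, 2], [3, 4], [5, 6]]
result = csr_matmul(to_csr(sparse_weight), activations)
print(result)
```

The inner loop runs once per non-zero weight, so the work shrinks in proportion to the sparsity of the pruned model.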

Intel® Extension for Transformers supports systems based on Intel 64 architecture or compatible processors, and is specifically optimized for the following CPUs:

- Intel Xeon Scalable processor (formerly Cascade Lake, Ice Lake)

- Future Intel Xeon Scalable processor (code name Sapphire Rapids)

- OS version: CentOS 8.4, Ubuntu 20.04

- Python version: 3.7, 3.8, 3.9

| Framework | Intel TensorFlow | PyTorch | IPEX |
|---|---|---|---|
| Version | 2.10.0, 2.9.1 | 1.13.0+cpu, 1.12.0+cpu, 1.11.0+cpu | 1.13.0, 1.12.0 |

- OS version: Windows 10

- Python version: 3.7, 3.8, 3.9

| Framework | Intel TensorFlow | PyTorch |
|---|---|---|
| Version | 2.9.1 | 1.13.0+cpu |
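A quick way to check whether the local interpreter is one of the Python versions listed above is sketched below. The helper name is hypothetical and the check covers only the Python version, not the OS or framework versions.

```python
import sys

# Python versions listed in the environment tables above.
VALIDATED_PYTHON = {(3, 7), (3, 8), (3, 9)}


def is_validated_python(version_info=sys.version_info):
    """Return True if the major.minor version is in the validated set."""
    return (version_info[0], version_info[1]) in VALIDATED_PYTHON


if not is_validated_python():
    print("Warning: this Python version is not in the validated list:",
          sys.version.split()[0])
```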

- Blog published on Medium: MLefficiency — Optimizing transformer models for efficiency (Dec 2022)

- NeurIPS'2022: Fast DistilBERT on CPUs (Nov 2022)

- NeurIPS'2022: QuaLA-MiniLM: a Quantized Length Adaptive MiniLM (Nov 2022)

- Blog published by Cohere: Top NLP Papers—November 2022 (Nov 2022)

- Blog published by Alibaba: Deep learning inference optimization for Address Purification (Aug 2022)

- NeurIPS'2021: Prune Once for All: Sparse Pre-Trained Language Models (Nov 2021)