A PyTorch implementation of Tacotron2, described in Natural TTS Synthesis By Conditioning Wavenet On Mel Spectrogram Predictions, an end-to-end text-to-speech(TTS) neural network architecture, which directly converts character text sequence to speech.

- Python3.6+ (Recommend Anaconda)

- PyTorch 0.4.1+

pip install -r requirements.txt- If you want to run

egs/ljspeech/run.sh, download LJ Speech Dataset for free.

$ cd egs/ljspeech

# Modify wav_dir to your LJ Speech dir

$ bash run.shThat's all.

You can change parameter by $ bash run.sh --parameter_name parameter_value, egs, $ bash run.sh --stage 2. See parameter name in egs/ljspeech/run.sh before . utils/parse_options.sh.

Workflow of egs/ljspeech/run.sh:

- Stage 1: Training

- Stage 2: Synthesising

egs/ljspeech/run.sh provide example usage.

# Set PATH and PYTHONPATH

$ cd egs/ljspeech/; . ./path.sh

# Train:

$ train.py -h

# Synthesis audio:

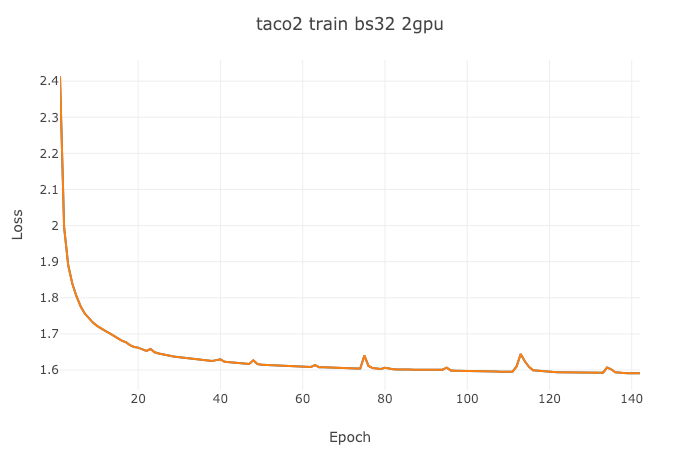

$ synthesis.py -hIf you want to visualize your loss, you can use visdom to do that:

- Open a new terminal in your remote server (recommend tmux) and run

$ visdom - Open a new terminal and run

$ bash run.sh --visdom 1 --visdom_id "<any-string>"or$ train.py ... --visdom 1 --vidsdom_id "<any-string>" - Open your browser and type

<your-remote-server-ip>:8097, egs,127.0.0.1:8097 - In visdom website, chose

<any-string>inEnvironmentto see your loss

$ bash run.sh --continue_from <model-path>Use comma separated gpu-id sequence, such as:

$ bash run.sh --id "0,1"- When happened in training, try to reduce

batch_sizeor use more GPU.$ bash run.sh --batch_size <lower-value>or$ bash run.sh --id "0,1".

- https://github.com/NVIDIA/tacotron2

- https://github.com/A-Jacobson/tacotron2

- https://github.com/Rayhane-mamah/Tacotron-2

This is a work in progress and any contribution is welcome (dev branch is main development branch).

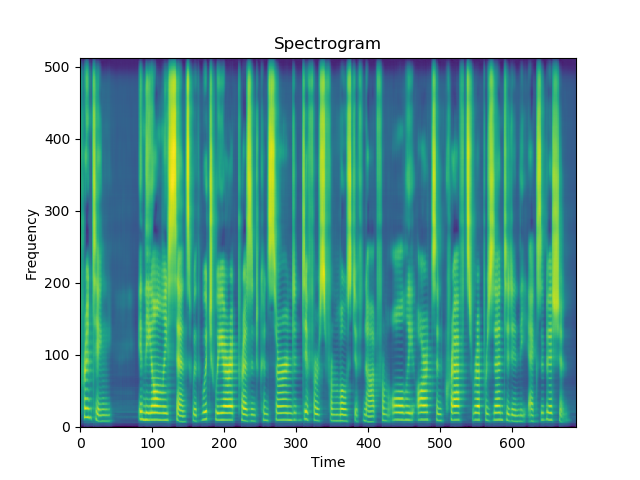

I implement feature prediction network + Griffin-Lim to synthesis speech now.