electron.desktopCapturer to cv.VideoCapture

developer239 opened this issue · comments

Hi, :)

Awesome library, as always. I spent a couple of hours browsing the internet for a way to capture the desktop (or a window) and send it to OpenCV. I got it working on Linux, but I was unable to make it work on Mac. Then I found Electron and its desktopCapturer, so I started using Electron...

I would like to be able to send the recorded screen to your OpenCV library.

a) Simply start a stream using desktopCapturer and pass the stream to your OpenCV library.

I have this code:

    navigator.mediaDevices.getUserMedia({
      audio: false,
      video: {
        mandatory: {
          chromeMediaSource: 'desktop',
          chromeMediaSourceId: source.id,
          minWidth: 640,
          maxWidth: 640,
          minHeight: 320,
          maxHeight: 320,
        },
      },
    })
      .then((stream) => {
        console.log('stream ', stream);
        const videoUrl = URL.createObjectURL(stream);
        console.log('videoUrl', videoUrl);
        const capturedVideo = new cv.VideoCapture(videoUrl);
        console.log('captured video', capturedVideo);
      })
      .catch((error) => console.error(error));

b) Stream the MediaStream object to a Node.js server and process it there using your OpenCV library.

Is a) or b) possible? :)

Thank you

Hmm, I am not sure whether the OpenCV VideoCapture accepts this kind of file as input.

Anyway, you should be able to extract OpenCV Mats from any kind of image stream by using the constructor that takes the raw data as input: `new cv.Mat(buf, rows, cols, type)`. You just have to pipe your stream into a Node buffer. At least that's how it works when streaming images with socket.io.
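A rough sketch of what "raw data" means here: for `cv.CV_8UC3` the buffer has to hold uncompressed pixels, 3 bytes each. Assuming the frame is read from a canvas via `getImageData` (which yields RGBA bytes), a helper like the one below could repack it. The name `rgbaToBgr` and the canvas step are assumptions for illustration, not part of the library.

```javascript
// Hypothetical helper: repack RGBA bytes (e.g. from canvas getImageData)
// into tightly packed BGR bytes, the channel order OpenCV uses by default.
function rgbaToBgr(rgba) {
  if (rgba.length % 4 !== 0) {
    throw new Error('RGBA data length must be a multiple of 4');
  }
  const pixelCount = rgba.length / 4;
  const bgr = Buffer.alloc(pixelCount * 3);
  for (let i = 0; i < pixelCount; i++) {
    bgr[i * 3] = rgba[i * 4 + 2];     // blue
    bgr[i * 3 + 1] = rgba[i * 4 + 1]; // green
    bgr[i * 3 + 2] = rgba[i * 4];     // red (alpha is dropped)
  }
  return bgr;
}

// Two pixels: opaque red, then opaque blue.
const rgbaPixels = Uint8Array.from([255, 0, 0, 255, 0, 0, 255, 255]);
const bgrPixels = rgbaToBgr(rgbaPixels);
console.log(bgrPixels.length); // 6 bytes = 2 pixels * 3 channels

// With opencv4nodejs loaded as `cv`, the buffer could then be wrapped as:
// const mat = new cv.Mat(bgrPixels, 1, 2, cv.CV_8UC3);
```

The point is only that the buffer length must match rows * cols * channels exactly; an encoded image file will not.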

That makes sense. This is probably not the best way of taking an image, but after a while I managed to get a still image and convert it to a Node buffer like this:

    navigator.mediaDevices.getUserMedia({
      audio: false,
      video: {
        mandatory: {
          chromeMediaSource: 'desktop',
          chromeMediaSourceId: source.id,
          minWidth: 1000,
          maxWidth: 1000,
          minHeight: 1000,
          maxHeight: 1000,
        },
      },
    })
      .then((stream) => {
        const track = stream.getVideoTracks()[0];
        const capturedImage = new ImageCapture(track);
        capturedImage
          .takePhoto()
          .then(blob => {
            toBuffer(blob, function (err, buffer) { // TODO: This is probably not necessary?
              if (err) throw err;
              const appDownloadsPath = electron.remote.app.getPath('downloads');
              fs.writeFileSync(`${appDownloadsPath}/image.png`, buffer); // This works fine I can see the image in good quality
              const matFromArray = new cv.Mat(buffer, 1000, 1000, cv.CV_8UC3);
              cv.imshowWait('mat array', matFromArray);
            });
          })
          .catch(error => console.error('takePhoto() error:', error));
      })
      .catch((error) => console.error(error));

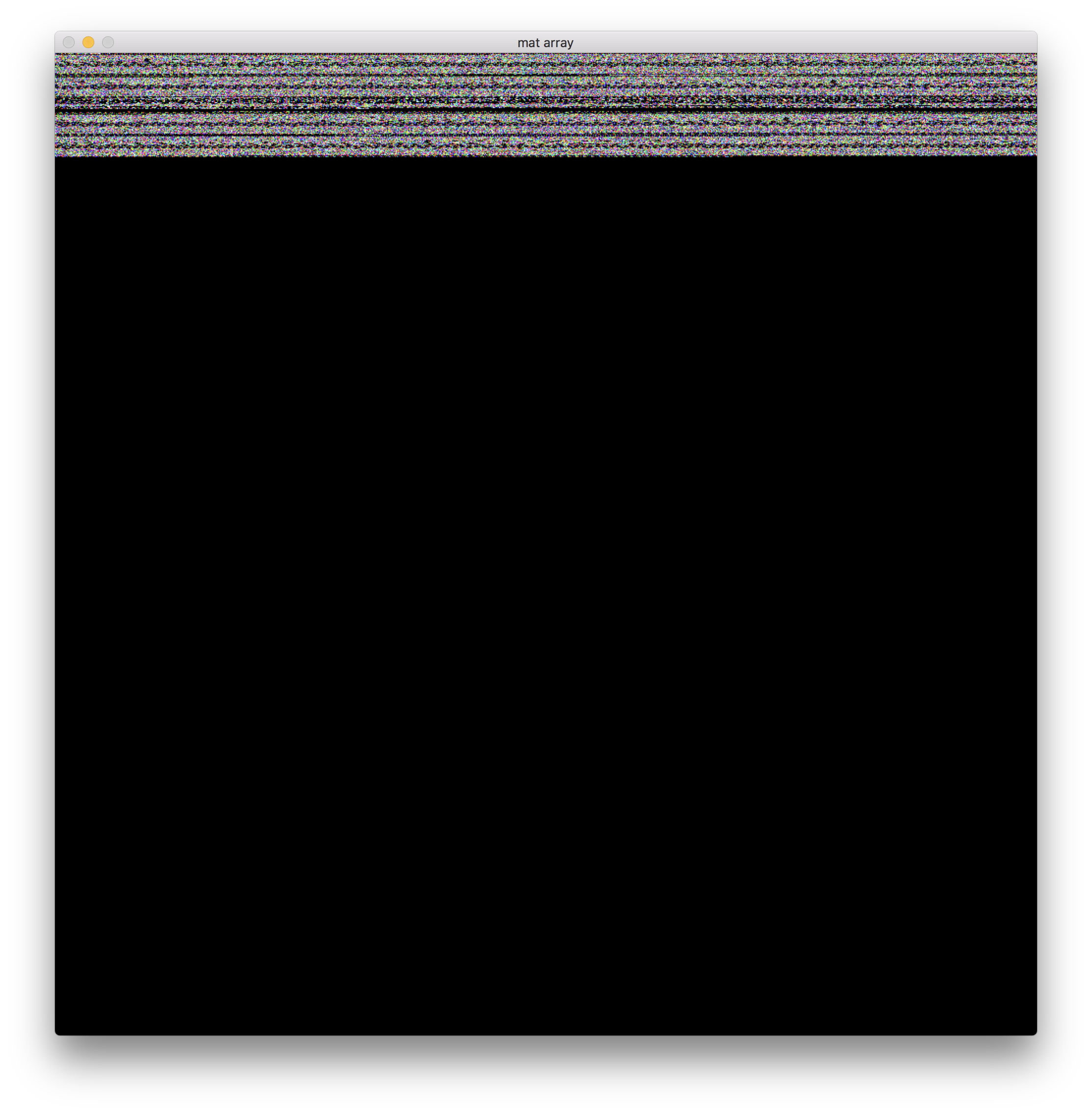

I am probably missing some settings in `const matFromArray = new cv.Mat(buffer, 1000, 1000, cv.CV_8UC3);` because when I save the image it looks fine. However, when I imshowWait the image, it looks like this:

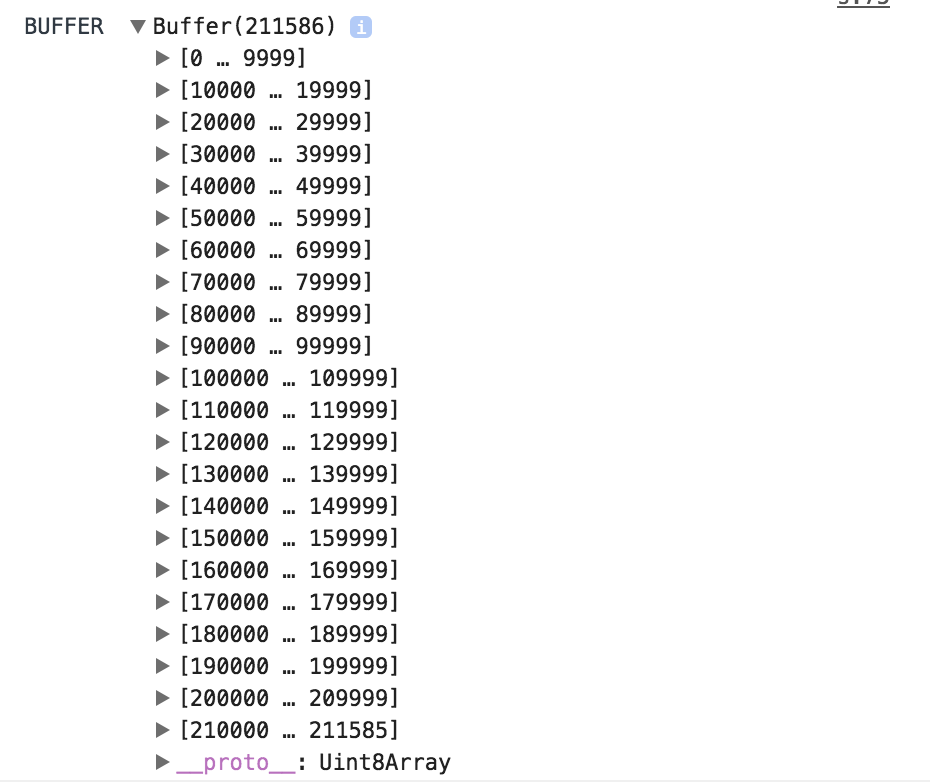

Can you check `console.log(buffer.length)`? The length of the buffer should equal rows * cols * channels, in your case 1000 * 1000 * 3.
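That check can be written down as a tiny helper. This is only a sketch; `matchesRawSize` is a made-up name, and the two buffers are stand-ins rather than real captured data.

```javascript
// Hypothetical helper: does this buffer have the size of raw pixel data
// for a Mat with the given dimensions and channel count (1 byte per channel)?
function matchesRawSize(buffer, rows, cols, channels) {
  return buffer.length === rows * cols * channels;
}

// Stand-in for raw 8-bit, 3-channel pixels of a 1000 x 1000 image.
const rawFrame = Buffer.alloc(1000 * 1000 * 3);
// Stand-in for an encoded image file; the size is chosen arbitrarily.
const encodedFrame = Buffer.alloc(250000);

console.log(matchesRawSize(rawFrame, 1000, 1000, 3));     // true
console.log(matchesRawSize(encodedFrame, 1000, 1000, 3)); // false
```

If the check fails, the buffer is most likely an encoded image (or has a different size or channel count) and should not be passed to the raw-data Mat constructor.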

Okay, I guess your image data is compressed, since the raw data size does not match. Can you set codec options to figure out how to decode the data?
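One way to find out which codec you are dealing with is to look at the first bytes of the buffer. The sketch below checks only the PNG and JPEG file signatures; `sniffImageFormat` is a hypothetical helper, and other formats simply come back as 'unknown'.

```javascript
// Standard file signatures (magic bytes) for PNG and JPEG.
const PNG_SIGNATURE = Buffer.from([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a]);
const JPEG_SIGNATURE = Buffer.from([0xff, 0xd8, 0xff]);

// Hypothetical helper: guess the container format from the leading bytes.
function sniffImageFormat(buffer) {
  if (PNG_SIGNATURE.equals(buffer.subarray(0, PNG_SIGNATURE.length))) return 'png';
  if (JPEG_SIGNATURE.equals(buffer.subarray(0, JPEG_SIGNATURE.length))) return 'jpeg';
  return 'unknown';
}

// PNG signature followed by arbitrary bytes.
const pngLike = Buffer.concat([PNG_SIGNATURE, Buffer.alloc(16)]);
// JPEG start-of-image marker followed by an APP0 marker byte.
const jpegLike = Buffer.from([0xff, 0xd8, 0xff, 0xe0]);
// All zeros, as raw black pixels would be.
const rawLike = Buffer.alloc(32);

console.log(sniffImageFormat(pngLike));  // png
console.log(sniffImageFormat(jpegLike)); // jpeg
console.log(sniffImageFormat(rawLike));  // unknown
```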

You could try `const mat = cv.imdecode(buffer)`, in case the data uses a valid codec that is known to OpenCV.

Or, in case it's base64 encoded:

    const base64Buf = Buffer.from(buffer.data, 'base64')
    cv.imdecode(base64Buf)
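For reference, `Buffer.from(text, 'base64')` turns base64 text back into the original bytes. A minimal round-trip sketch follows; `base64ToBuffer` and the data-URL handling are assumptions added for illustration, and the four sample bytes are not a complete image.

```javascript
// Hypothetical helper: decode base64 text (optionally wrapped in a data URL)
// into a Node Buffer of the original bytes.
function base64ToBuffer(text) {
  const comma = text.indexOf(',');
  const isDataUrl = text.startsWith('data:') && comma !== -1;
  const payload = isDataUrl ? text.slice(comma + 1) : text;
  return Buffer.from(payload, 'base64');
}

// First four bytes of the PNG signature, used as sample data only.
const originalBytes = Buffer.from([0x89, 0x50, 0x4e, 0x47]);
const asBase64 = originalBytes.toString('base64');

const decodedBytes = base64ToBuffer(asBase64);
console.log(decodedBytes.equals(originalBytes)); // true

const fromDataUrl = base64ToBuffer(`data:image/png;base64,${asBase64}`);
console.log(fromDataUrl.equals(originalBytes)); // true

// With a complete encoded image, the decoded buffer is what would be
// handed to cv.imdecode.
```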

That is awesome. I believe it works now. Thanks a lot. :)

Great, which one was the solution?

I just used `cv.imdecode(base64Buf)`, and it returned the matrix successfully.

    capturedImage
      .takePhoto()
      .then(blob => {
        toBuffer(blob, function (err, buffer) { // TODO: This is probably not necessary?
          if (err) throw err;
          const appDownloadsPath = electron.remote.app.getPath('downloads');
          fs.writeFileSync(`${appDownloadsPath}/image.png`, buffer); // This works fine I can see the image in good quality
          const mat = cv.imdecode(buffer);
          cv.imshowWait('ma', mat);
        });
      })
      .catch(error => console.error('takePhoto() error:', error));