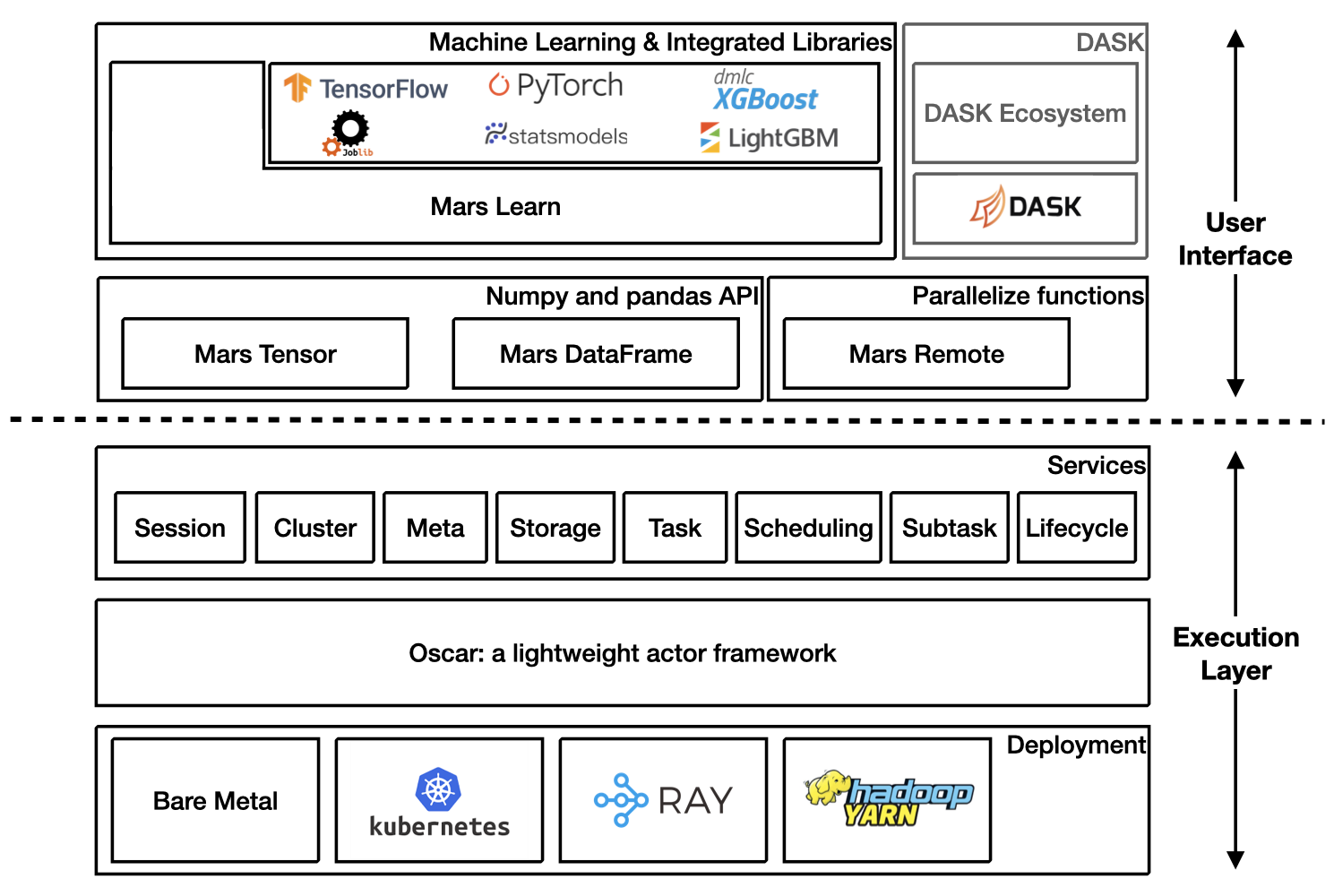

Mars is a tensor-based unified framework for large-scale data computation that scales NumPy, pandas, scikit-learn and many other libraries.

Mars is easy to install with `pip install pymars` (the PyPI package is named `pymars`).

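To contribute code to Mars, install it for development from a clone of the repository: run `git clone https://github.com/mars-project/mars.git`, then `cd mars` and `pip install -e ".[dev]"` to get an editable install with the development extras.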

More details about installing Mars can be found in the installation section of the Mars documentation.

Start a new runtime locally with `mars.new_session()`.

Or connect to a Mars cluster that is already running by passing its web address, e.g. `mars.new_session('http://<web_ip>:<web_port>')`.

Mars tensor provides a familiar interface like NumPy.


Mars can leverage multiple cores, even on a laptop, and can be faster still in a distributed setting.

Mars DataFrame provides a familiar interface like pandas.


Mars learn provides a familiar interface like scikit-learn.

Mars learn also integrates with many libraries, including TensorFlow, PyTorch, XGBoost, LightGBM, Joblib and Statsmodels.

Mars remote allows users to execute functions in parallel.


Mars can also execute Dask code; refer to DASK on Mars for more information.

Mars supports an eager mode, which makes development and debugging easier.

Users can enable eager mode through options by setting `options.eager_mode = True` (with `options` imported from `mars.config`) at the beginning of the program or console session.

Or enable it only within a `with` block by using `mars.config.option_context`.

When eager mode is on, tensors, DataFrames and other Mars objects are executed by the default session as soon as they are created.

Mars also integrates deeply with Ray: it can run efficiently on Ray and interoperate with the large ecosystem of machine learning and distributed systems built on top of core Ray.

A new Mars on Ray runtime can be started locally by creating a Mars session backed by Ray.

Mars DataFrames can be converted to and from Ray Datasets:

```python
import mars.tensor as mt
import mars.dataframe as md

# Assumes a Mars on Ray session is already running.
df = md.DataFrame(
    mt.random.rand(10_000_000, 4),
    columns=list('abcd'))

# Convert Mars DataFrame to Ray Dataset
ds = md.to_ray_dataset(df)
print(ds.schema(), ds.count())
ds.filter(lambda row: row["a"] > 0.5).show(5)

# Convert Ray Dataset to Mars DataFrame
df2 = md.read_ray_dataset(ds)
print(df2.head(5).execute())
```

Refer to Mars on Ray for more information.

Mars can scale in to a single machine and scale out to a cluster of thousands of machines. Moving from a single machine to a cluster to process more data or gain better performance is fairly simple.

Mars scales out to a cluster by starting the different components of the Mars distributed runtime on different machines in the cluster.

One node is selected as the supervisor, which also hosts a web service; the remaining nodes act as workers. Start the supervisor with `mars-supervisor -h <host_name> -p <supervisor_port> -w <web_port>`.

Start each worker with `mars-worker -h <host_name> -p <worker_port> -s <supervisor_endpoint>`.

After all Mars processes are started, connect to the cluster with `mars.new_session('http://<web_ip>:<web_port>')` and run computations as usual.

Refer to Run on Kubernetes for more information.

Refer to Run on Yarn for more information.

- Read the development guide.

- Join our Slack workgroup.

- Join the mailing list: send an email to mars-dev@googlegroups.com.

- Please report bugs by submitting a GitHub issue.

- Submit contributions using pull requests.

Thank you in advance for your contributions!