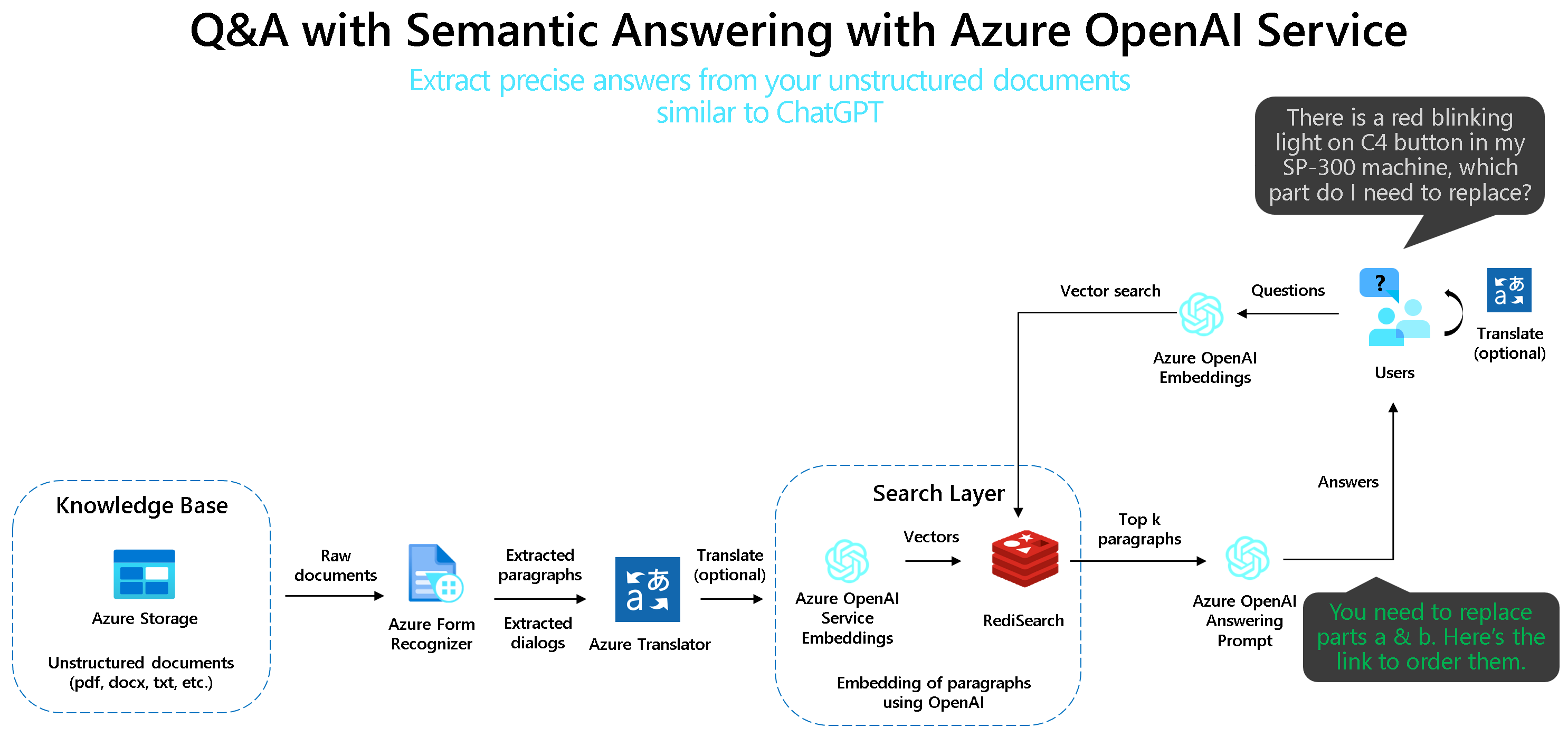

A simple web application for OpenAI-enabled document search. This repo uses Azure OpenAI Service to create embedding vectors from documents. To answer a user's question, it retrieves the most relevant document and then uses GPT-3 to extract the matching answer.
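The retrieval step described above can be sketched as follows. This is a toy, in-memory illustration and not the repo's implementation (the repo stores embeddings in Redis Stack). The use of cosine similarity, the function names, and the 3-dimensional vectors are all assumptions made for the example; real embeddings come from the Azure OpenAI embedding model and have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_relevant(query_vec, doc_vecs):
    """Return the name of the document whose embedding is closest to the query.

    doc_vecs is a hypothetical dict mapping document name -> embedding vector.
    """
    return max(doc_vecs, key=lambda name: cosine_similarity(query_vec, doc_vecs[name]))


# Made-up 3-dimensional "embeddings", for illustration only.
docs = {
    "invoice.pdf": [0.9, 0.1, 0.0],
    "manual.pdf": [0.1, 0.8, 0.3],
}
query = [0.2, 0.9, 0.2]
best = most_relevant(query, docs)
print(best)
```

The text of the selected document would then be passed, together with the question, to the instruction model to extract the answer.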

You have multiple options to run the code:

- Deploy on Azure (WebApp + Redis Stack + Batch Processing)

- Run everything locally in Docker (WebApp + Redis Stack + Batch Processing)

- Run everything locally in Python with Conda (WebApp only)

- Run everything locally in Python with venv

- Run WebApp locally in Docker against an existing Redis deployment

Click on the Deploy to Azure button and configure your settings in the Azure Portal as described in the Environment variables section.

Please be aware that you need:

- an existing Azure OpenAI resource with deployed models (instruction models, e.g. text-davinci-003, and embedding models, e.g. text-search-davinci-doc-001 and text-search-davinci-query-001)

- an existing Form Recognizer Resource (OPTIONAL - if you want to extract text out of documents)

- an existing Translator Resource (OPTIONAL - if you want to translate documents)

First, clone the repo:

git clone https://github.com/ruoccofabrizio/azure-open-ai-embeddings-qna

cd azure-open-ai-embeddings-qna

Next, configure your .env as described in Environment variables:

cp .env.template .env

vi .env # or use whatever you feel comfortable with

Finally, run the application:

docker compose up

Open your browser at http://localhost:8080

This will spin up three Docker containers:

- The WebApp itself

- Redis Stack for storing the embeddings

- Batch Processing Azure Function

The Conda option requires Redis running somewhere and expects that you've set up .env as described above. In this case, point REDIS_ADDRESS to your Redis deployment.
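Before starting the WebApp, it can help to confirm that the host in REDIS_ADDRESS is reachable. The following is a minimal sketch using only the Python standard library. The function name is hypothetical, and port 6379 is an assumption taken from the `docker run -p 6379:6379` command in this README. It only checks that the TCP port accepts connections; it does not verify that the service is Redis Stack or that the password is correct.

```python
import os
import socket


def redis_reachable(host, port=6379, timeout=2.0):
    """Return True if a TCP connection to host:port can be opened within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names.
        return False


if __name__ == "__main__":
    # Falls back to localhost when REDIS_ADDRESS is not set in the environment.
    host = os.environ.get("REDIS_ADDRESS", "localhost")
    print(f"Redis at {host}:6379 reachable:", redis_reachable(host))
```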

You can run a local Redis instance via:

docker run -p 6379:6379 redis/redis-stack-server:latest

You can run a local Batch Processing Azure Function:

docker run -p 7071:80 fruocco/oai-batch:latest

Create a conda environment for Python:

conda env create -f code/environment.yml

conda activate openai-qna-env

Configure your .env as described in Environment variables.

Run WebApp:

cd code

streamlit run OpenAI_Queries.py

The venv option likewise requires Redis running somewhere and expects that you've set up .env as described above. In this case, point REDIS_ADDRESS to your Redis deployment.

You can run a local Redis instance via:

docker run -p 6379:6379 redis/redis-stack-server:latest

You can run a local Batch Processing Azure Function:

docker run -p 7071:80 fruocco/oai-batch:latest

Please ensure you have Python 3.9+ installed.

Create venv environment for Python:

python -m venv .venv

.venv\Scripts\activate

Install the pip requirements:

pip install -r code\requirements.txt

Configure your .env as described in Environment variables.

Run the WebApp:

cd code

streamlit run OpenAI_Queries.py

To run the WebApp in Docker against an existing Redis deployment, configure your .env as described in Environment variables.

Then run:

docker run --env-file .env -p 8080:80 fruocco/oai-embeddings:latest

To build and run your own image instead, configure your .env as described in Environment variables, then run:

docker build . -t your_docker_registry/your_docker_image:your_tag

docker run --env-file .env -p 8080:80 your_docker_registry/your_docker_image:your_tag

Here is the explanation of the parameters:

| App Setting | Value | Note |
|---|---|---|
| OPENAI_ENGINES | text-davinci-003 | Instruction engines deployed in your Azure OpenAI resource |
| OPENAI_EMBEDDINGS_ENGINE_DOC | text-embedding-ada-002 | Embedding engine for documents deployed in your Azure OpenAI resource |
| OPENAI_EMBEDDINGS_ENGINE_QUERY | text-embedding-ada-002 | Embedding engine for queries deployed in your Azure OpenAI resource |
| OPENAI_API_BASE | https://YOUR_AZURE_OPENAI_RESOURCE.openai.azure.com/ | Your Azure OpenAI resource endpoint. Get it in the Azure Portal |
| OPENAI_API_KEY | YOUR_AZURE_OPENAI_KEY | Your Azure OpenAI API key. Get it in the Azure Portal |
| REDIS_ADDRESS | api | Address of Redis Stack: "api" for docker compose |
| REDIS_PASSWORD | redis-stack-password | OPTIONAL - Password for your Redis Stack |
| REDIS_ARGS | --requirepass redis-stack-password | OPTIONAL - Arguments passed to Redis Stack to set the password |
| CONVERT_ADD_EMBEDDINGS_URL | http://batch/api/BatchStartProcessing | URL for the Batch Processing Function: "http://batch/api/BatchStartProcessing" for docker compose |
| AzureWebJobsStorage | AZURE_BLOB_STORAGE_CONNECTION_STRING_FOR_AZURE_FUNCTION_EXECUTION | Azure Blob Storage connection string for the Azure Function (Batch Processing) |
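A quick way to catch a missing setting before starting the containers is to parse .env and compare it against the table above. The sketch below assumes that .env uses plain `KEY=VALUE` lines (the contents of .env.template are not shown here) and that every row not marked OPTIONAL is required; both are assumptions, and the function names are hypothetical. For a WebApp-only run, the two batch-related settings may not be needed.

```python
from pathlib import Path

# Assumption: every setting in the table that is not marked OPTIONAL is required.
REQUIRED = [
    "OPENAI_ENGINES",
    "OPENAI_EMBEDDINGS_ENGINE_DOC",
    "OPENAI_EMBEDDINGS_ENGINE_QUERY",
    "OPENAI_API_BASE",
    "OPENAI_API_KEY",
    "REDIS_ADDRESS",
    "CONVERT_ADD_EMBEDDINGS_URL",
    "AzureWebJobsStorage",
]


def parse_env(text):
    """Parse KEY=VALUE lines into a dict, skipping blank lines and # comments."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        settings[key.strip()] = value.strip()
    return settings


def missing_settings(settings, required=REQUIRED):
    """Return the required names that are absent or set to an empty value."""
    return [name for name in required if not settings.get(name)]


if __name__ == "__main__":
    env_file = Path(".env")
    if env_file.exists():
        print("Missing settings:", missing_settings(parse_env(env_file.read_text())))
    else:
        print("No .env file found in the current directory")
```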

Optional parameters for additional features (e.g. document text extraction with OCR):

| App Setting | Value | Note |
|---|---|---|
| BLOB_ACCOUNT_NAME | YOUR_AZURE_BLOB_STORAGE_ACCOUNT_NAME | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |
| BLOB_ACCOUNT_KEY | YOUR_AZURE_BLOB_STORAGE_ACCOUNT_KEY | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |
| BLOB_CONTAINER_NAME | YOUR_AZURE_BLOB_STORAGE_CONTAINER_NAME | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |
| FORM_RECOGNIZER_ENDPOINT | YOUR_AZURE_FORM_RECOGNIZER_ENDPOINT | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |
| FORM_RECOGNIZER_KEY | YOUR_AZURE_FORM_RECOGNIZER_KEY | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |
| PAGES_PER_EMBEDDINGS | 2 | Number of pages used to create each embedding. Keep each embedding under 3K tokens. Default: a new embedding is created every 2 pages |
| TRANSLATE_ENDPOINT | YOUR_AZURE_TRANSLATE_ENDPOINT | OPTIONAL - Get it in the Azure Portal if you want to use the translation feature |
| TRANSLATE_KEY | YOUR_TRANSLATE_KEY | OPTIONAL - Get it in the Azure Portal if you want to use the translation feature |
| TRANSLATE_REGION | YOUR_TRANSLATE_REGION | OPTIONAL - Get it in the Azure Portal if you want to use the translation feature |
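To illustrate what PAGES_PER_EMBEDDINGS controls, the sketch below groups extracted page texts into chunks of N pages, one chunk per embedding. It is an illustration of the setting as described in the table, not the repo's batch-processing code; the function name and the newline used to join pages are assumptions.

```python
def group_pages(pages, pages_per_embedding=2):
    """Join consecutive pages into chunks of at most pages_per_embedding pages."""
    if pages_per_embedding < 1:
        raise ValueError("pages_per_embedding must be at least 1")
    return [
        "\n".join(pages[i:i + pages_per_embedding])
        for i in range(0, len(pages), pages_per_embedding)
    ]


# Five pages with the default of 2 pages per embedding give three chunks;
# the last chunk holds the single leftover page.
chunks = group_pages(["page 1", "page 2", "page 3", "page 4", "page 5"])
print(len(chunks))
```

A larger value means fewer, longer chunks, so it should be chosen with the 3K-token guidance from the table in mind.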