Data, code and materials from

"ImageNet-trained CNNs are biased towards texture; increasing shape bias improves accuracy and robustness"

This repository contains information, data and materials from the paper "ImageNet-trained CNNs are biased towards texture; increasing shape bias improves accuracy and robustness" by Robert Geirhos, Patricia Rubisch, Claudio Michaelis, Matthias Bethge, Felix A. Wichmann, and Wieland Brendel. We hope that you may find this repository a useful resource for your own research.

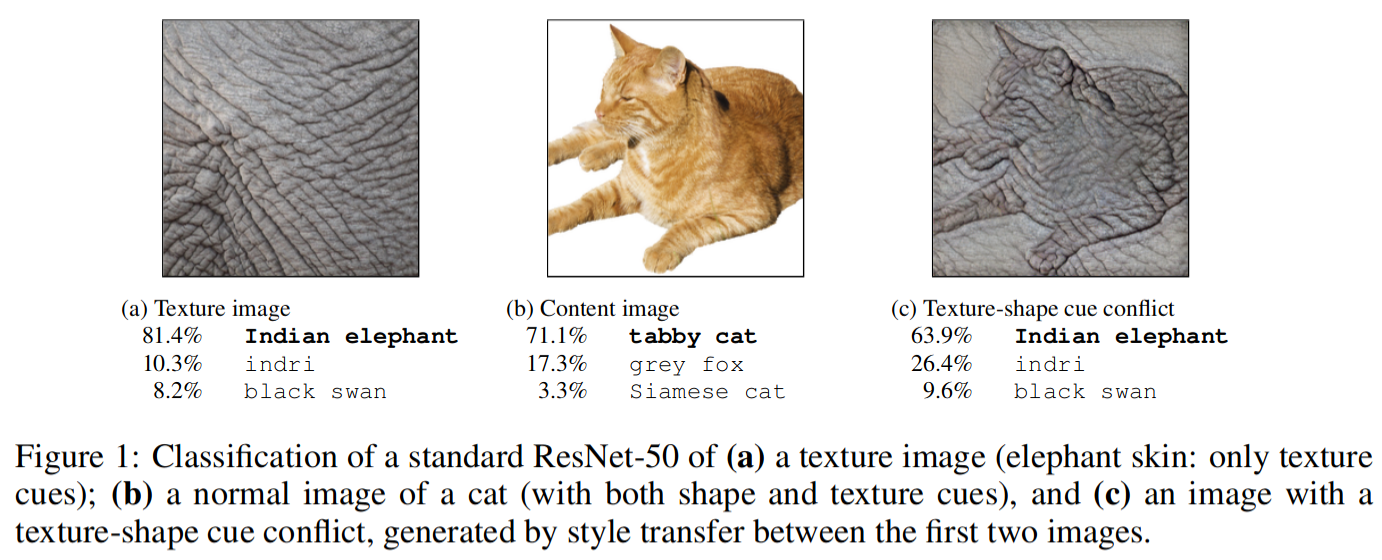

The core idea is illustrated in the figure above: if a Convolutional Neural Network sees a cat with elephant texture, it thinks it's an elephant even though the shape is still clearly a cat. We found this "texture bias" to be common for ImageNet-trained CNNs, which is in contrast to the widely held belief that CNNs mostly learn to recognise objects by detecting their shapes.

Please don't hesitate to contact me at robert.geirhos@bethgelab.org or open an issue if you have any questions! Reproducibility & Open Science are important to me, and I appreciate feedback on what could be improved.

This README is structured according to the repo's structure: one section per subdirectory (alphabetically).

Note that Stylized-ImageNet, an important dataset used in this paper, has its own repository at rgeirhos:Stylized-ImageNet.

Some aspects of this repository are borrowed from our earlier work, "Generalisation in humans and deep neural networks" (published at NeurIPS 2018). The corresponding code, data and materials can be obtained from rgeirhos:generalisation-humans-DNNs. For convenience, some human data from that repo (which are used in the texture-vs-shape work for comparison) are included here directly (under raw-data/raw-data-from-generalisation-paper/).

The data-analysis/ directory contains the main analysis script data-analysis.R and some helper functionality. All plots created by the script are stored in the paper-figures/ directory.

Everything necessary to run an experiment in the lab with human participants. This is based on MATLAB.

Contains the main MATLAB experiment, shape_texture_experiment.m, as well as a .yaml file where the specific parameter values used in an experiment are specified (such as the stimulus presentation duration). Some functions depend on our in-house iShow library which can be obtained from here.

Some of the helper functions are based on other people's code; please check the corresponding files for the copyright notices.

The file load_pretrained_models.py will load the following models that are trained on Stylized-ImageNet:

model_A = "resnet50_trained_on_SIN"

model_B = "resnet50_trained_on_SIN_and_IN"

model_C = "resnet50_trained_on_SIN_and_IN_then_finetuned_on_IN"

These correspond to the models reported in Table 2 of the paper (method details in Section A.5 of the Appendix).

Contains all figures of the paper. All figures reporting results can be generated by the scripts in data-analysis/.

Here, a .csv file for each observer and network experiment contains the raw data. The human data comprise a total of 48,560 psychophysical trials across 97 participants, collected in a controlled lab setting.
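As a rough starting point for inspecting these files outside of R, the sketch below counts the data rows in every .csv file under a directory, using only the Python standard library. It is not part of the repository: the function name `count_trials` is hypothetical, and the layout it assumes (one header row per file, followed by one row per trial) should be checked against the actual files before relying on the numbers.

```python
import csv
from pathlib import Path


def count_trials(raw_data_dir):
    """Return {relative csv path: number of data rows}.

    Assumption: every file starts with exactly one header row and
    contains one row per trial after that.
    """
    root = Path(raw_data_dir)
    counts = {}
    for csv_path in sorted(root.rglob("*.csv")):
        with csv_path.open(newline="") as f:
            reader = csv.reader(f)
            next(reader, None)  # skip the (assumed) header row
            # Ignore completely empty lines when counting trials.
            counts[csv_path.relative_to(root).as_posix()] = sum(
                1 for row in reader if row
            )
    return counts
```

Calling `count_trials("raw-data")` from the repository root and summing the values gives a quick sanity check of how many rows are present, under the layout assumption stated above.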

These are the raw stimuli used in our experiments. Each directory contains stimulus images split into 16 subdirectories (one per category).
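The one-subdirectory-per-category layout described above can be walked with a few lines of Python. The following is a minimal sketch, not code from the repository: the function name `index_stimuli` and the set of accepted image file extensions are assumptions made for illustration.

```python
from pathlib import Path

# Assumed image formats; adjust to whatever the stimulus files actually use.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}


def index_stimuli(experiment_dir):
    """Map each category subdirectory name to a sorted list of image paths."""
    index = {}
    for category_dir in sorted(Path(experiment_dir).iterdir()):
        if not category_dir.is_dir():
            continue  # skip stray files next to the category folders
        index[category_dir.name] = sorted(
            p for p in category_dir.iterdir()
            if p.suffix.lower() in IMAGE_SUFFIXES
        )
    return index
```

For a complete stimulus directory, `len(index_stimuli(path))` would be expected to equal 16, which makes for a simple check that nothing went missing during download.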