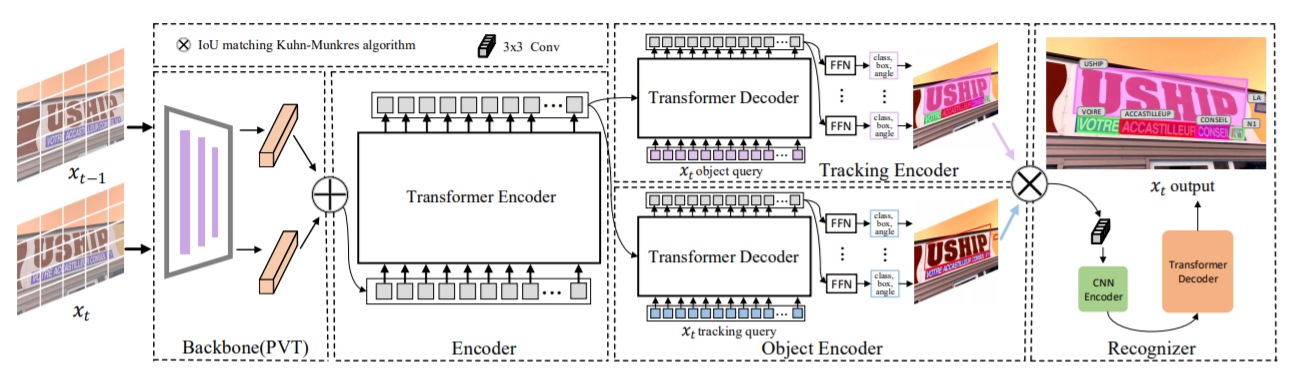

[A Multilingual, Open World Video Text Dataset and End-to-end Video Text Spotter with Transformer]

Link to our MOVText: A Large-Scale, Multilingual Open World Dataset for Video Text Spotting

- (08/04/2021) Refactoring the code.

- (10/20/2021) The complete code has been released.

| Methods | MOTA | MOTP | IDF1 | Mostly Matched | Partially Matched | Mostly Lost |
|---|---|---|---|---|---|---|
| TransVTSpotter | 45.75 | 73.58 | 57.56 | 658 | 611 | 647 |
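For readers unfamiliar with the tracking metrics in the table, the sketch below shows how MOTA and IDF1 are commonly computed from error counts, following the usual CLEAR MOT and identity-metric definitions. The function names, the example counts, and the 80%/20% thresholds used to label a track are illustrative assumptions, not values taken from the TransVTSpotter evaluation code.

```python
def mota(num_gt, false_negatives, false_positives, id_switches):
    """MOTA = 1 - (FN + FP + IDSW) / GT, with counts summed over all frames."""
    if num_gt <= 0:
        raise ValueError("num_gt must be positive")
    return 1.0 - (false_negatives + false_positives + id_switches) / num_gt


def idf1(idtp, idfp, idfn):
    """IDF1 = 2 * IDTP / (2 * IDTP + IDFP + IDFN)."""
    denominator = 2 * idtp + idfp + idfn
    return 2 * idtp / denominator if denominator else 0.0


def track_status(matched_frames, total_frames, high=0.8, low=0.2):
    """Label a ground-truth track by the fraction of its frames that were matched.

    The 0.8 / 0.2 thresholds are the common convention and are assumed here.
    """
    ratio = matched_frames / total_frames
    if ratio >= high:
        return "mostly matched"
    if ratio <= low:
        return "mostly lost"
    return "partially matched"


if __name__ == "__main__":
    # Made-up counts, for illustration only.
    print(mota(num_gt=100, false_negatives=20, false_positives=10, id_switches=5))
    print(idf1(idtp=80, idfp=20, idfn=20))
    print(track_status(matched_frames=9, total_frames=10))
```

MOTA can be negative when the total number of errors exceeds the number of ground-truth objects, which is why it is usually read together with IDF1.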

Models are also available on Baidu Drive (extraction code: m4iv).

- Models are trained on 8 NVIDIA V100 GPUs with a batch size of 16.

- We use the models pre-trained on COCOTextV2.

- We do not release the recognition code due to company regulations.

The codebase is built on top of Deformable DETR and TransTrack.

- Linux, CUDA>=9.2, GCC>=5.4

- Python>=3.7

- PyTorch>=1.5 and a torchvision version that matches the PyTorch installation. Installing both together from pytorch.org ensures that they match.

- OpenCV is optional and needed by demo and visualization

- Install and build libs

git clone git@github.com:weijiawu/TransVTSpotter.git

cd TransVTSpotter

cd models/ops

python setup.py build install

cd ../..

pip install -r requirements.txt
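After installation, a quick check of the environment against the requirements listed above can save a failed training launch. The script below is a minimal sketch, not part of the repository: the helper names are hypothetical, and it only checks the Python version and whether `torch`, `torchvision`, and the optional `cv2` module can be imported.

```python
import importlib
import re
import sys


def parse_version(text):
    """Turn a version string such as '1.5.0+cu101' into a tuple like (1, 5, 0)."""
    numbers = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # stop at the first non-numeric component
        numbers.append(int(match.group()))
    return tuple(numbers)


def meets_minimum(found, minimum):
    """Return True if version string `found` is at least `minimum`."""
    return parse_version(found) >= parse_version(minimum)


def check_environment():
    """Return a dict mapping each requirement to True (satisfied) or False."""
    python_version = "%d.%d" % sys.version_info[:2]
    report = {"python": meets_minimum(python_version, "3.7")}
    # cv2 (OpenCV) is optional; it is only needed for the demo and visualization.
    for name, minimum in (("torch", "1.5"), ("torchvision", None), ("cv2", None)):
        try:
            module = importlib.import_module(name)
        except (ImportError, OSError):
            report[name] = False
            continue
        if minimum is None:
            report[name] = True
        else:
            report[name] = meets_minimum(module.__version__, minimum)
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(name, "OK" if ok else "missing or too old")
```

The check does not verify that torchvision matches the installed PyTorch build, nor the CUDA and GCC versions; those still need to be confirmed by hand.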

- Prepare datasets and annotations

# pretrain COCOTextV2

python3 track_tools/convert_COCOText_to_coco.py

# ICDAR15

python3 track_tools/convert_ICDAR15video_to_coco.py

The COCOTextV2 dataset is available at COCOTextV2.

python3 track_tools/convert_crowdhuman_to_coco.py

The ICDAR2015 dataset is available at icdar2015.

python3 track_tools/convert_mot_to_coco.py
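The conversion scripts above write annotations in COCO format. As a rough sketch of what such a conversion involves (this is not the logic of the scripts themselves), the snippet below turns a quadrilateral text region into a COCO-style annotation with an axis-aligned `[x, y, width, height]` box. The helper names, the single text category, the `track_id` field, and the sample coordinates are all hypothetical.

```python
import json


def quad_to_coco_bbox(points):
    """Convert four (x, y) corner points into a COCO [x, y, width, height] box."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_min, y_min = min(xs), min(ys)
    return [x_min, y_min, max(xs) - x_min, max(ys) - y_min]


def make_annotation(ann_id, image_id, points, track_id):
    """Build one COCO-style annotation entry for a text instance."""
    bbox = quad_to_coco_bbox(points)
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": 1,      # assumption: a single "text" category
        "bbox": bbox,
        "area": bbox[2] * bbox[3],
        "iscrowd": 0,
        "track_id": track_id,  # hypothetical field linking boxes across frames
    }


if __name__ == "__main__":
    # Made-up quadrilateral for one text instance in one video frame.
    quad = [(10, 20), (50, 22), (48, 40), (8, 38)]
    print(json.dumps(make_annotation(1, 7, quad, track_id=3), indent=2))
```

The real scripts may store additional per-frame or per-video fields; check their output JSON before relying on any particular key.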

- Pre-train on COCOTextV2

python3 -m torch.distributed.launch --nproc_per_node=8 --use_env main_track.py --output_dir ./output/Pretrain_COCOTextV2 --dataset_file pretrain --coco_path ./Data/COCOTextV2 --batch_size 2 --with_box_refine --num_queries 500 --epochs 300 --lr_drop 100 --resume ./output/Pretrain_COCOTextV2/checkpoint.pth

python3 track_tools/Pretrain_model_to_mot.py

The pre-trained model is available as COCOTextV2_pretrain.pth (password: 59w8). The model with 44% MOTA can be found here (password: xnlw).

- Train TransVTSpotter

python3 -m torch.distributed.launch --nproc_per_node=8 --use_env main_track.py --output_dir ./output/ICDAR15 --dataset_file text --coco_path ./Data/ICDAR2015_video --batch_size 2 --with_box_refine --num_queries 300 --epochs 80 --lr_drop 40 --resume ./output/Pretrain_COCOTextV2/pretrain_coco.pth
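The flags in the training commands interact in two ways that are easy to miss. The sketch below works through them under stated assumptions: that `--batch_size` is per process, so 8 processes with batch size 2 give the total batch size of 16 mentioned in the notes, and that `--lr_drop` triggers a step decay as in Deformable DETR. The base learning rate of 2e-4 and the decay factor of 0.1 are assumed defaults, not values confirmed by this README.

```python
def effective_batch_size(num_processes, per_process_batch):
    """Total samples per optimizer step across all distributed processes."""
    return num_processes * per_process_batch


def step_lr(epoch, base_lr, lr_drop, gamma=0.1):
    """Learning rate at a given epoch (0-indexed) under a step-decay schedule.

    The rate is multiplied by `gamma` once every `lr_drop` epochs.
    """
    return base_lr * gamma ** (epoch // lr_drop)


if __name__ == "__main__":
    # --nproc_per_node=8 --batch_size 2
    print("total batch size:", effective_batch_size(8, 2))

    # Fine-tuning: --epochs 80 --lr_drop 40 (a single drop, at epoch 40)
    for epoch in (0, 39, 40, 79):
        print("fine-tune epoch", epoch, "lr", step_lr(epoch, 2e-4, 40))

    # Pre-training: --epochs 300 --lr_drop 100 (drops at epochs 100 and 200)
    for epoch in (0, 100, 200, 299):
        print("pre-train epoch", epoch, "lr", step_lr(epoch, 2e-4, 100))
```

When training on fewer GPUs, the total batch size shrinks accordingly unless `--batch_size` is raised, which may change the results.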

- Visualize TransVTSpotter

python3 track_tools/Evaluation_ICDAR15_video/vis_tracking.py

TransVTSpotter is released under MIT License.

If you use TransVTSpotter in your research or wish to refer to the baseline results published here, please use the following BibTeX entries: