A way to reduce camera-to-display time to sub-second latency

bottleneck tasks

- encoding video

- buffer buildup at the client to prevent freezes; a new segment is only sent once it has been requested

possible solutions and caveats

- reducing segment sizes to sub-second intervals

- Advantages

- less time to encode

- less time to transport

- smaller temporal buffer

- faster rate adaptation

- Disadvantages

- Many HTTP requests (larger network and server overhead)

- Many extra round trips that add overhead (this becomes inefficient when the RTT is higher than the segment duration)
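The two disadvantages above can be put into rough numbers. The sketch below assumes the simplest possible model, one sequential HTTP request per segment, and uses an illustrative 200 ms RTT; the function names and the chosen segment durations are hypothetical and not taken from any player or server.

```python
def requests_per_second(segment_duration_s: float) -> float:
    """One HTTP request per segment means 1/duration requests each second."""
    return 1.0 / segment_duration_s


def rtt_overhead_ratio(rtt_s: float, segment_duration_s: float) -> float:
    """Round-trip time expressed as a fraction of one segment's playback time."""
    return rtt_s / segment_duration_s


if __name__ == "__main__":
    assumed_rtt_s = 0.2  # illustrative value, not a measurement
    for duration in (6.0, 2.0, 0.5, 0.1):
        rate = requests_per_second(duration)
        ratio = rtt_overhead_ratio(assumed_rtt_s, duration)
        print(f"{duration:>4} s segments: {rate:5.1f} req/s, RTT/segment = {ratio:.2f}")
```

Once the ratio exceeds 1, a single round trip takes longer than the segment it fetches, so the client cannot keep up with sequential requests alone.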


Solutions by HTTP Adaptive Streaming providers

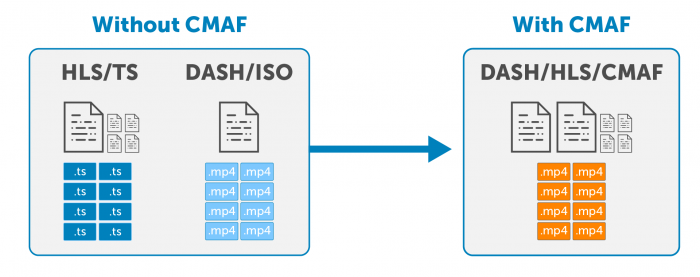

Self-explanatory: this is a common media format that contains the different codecs and bitrates.

Sending segments as chunks of data.

- LL-HLS (Apple)

- LHLS (open-source group)

- DASH-LL (MPEG)

Uses chunked transfer

- The server gets one request and sends multiple chunks within the segment

- The client only requests a playlist update after each segment
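As a rough illustration of sending one segment as several chunks in a single response, the sketch below frames byte strings using HTTP/1.1 chunked transfer coding (hex length, CRLF, data, CRLF, then a zero-length terminator). The helper names `encode_chunked` and `decode_chunked` and the payloads are hypothetical; real servers and clients also handle trailers and chunk extensions, which are left out here.

```python
from typing import Iterable, Iterator, List


def encode_chunked(chunks: Iterable[bytes]) -> Iterator[bytes]:
    """Frame each piece of a segment as an HTTP/1.1 chunk as soon as it exists."""
    for chunk in chunks:
        if chunk:  # a zero-length chunk would signal the end of the body
            size_line = f"{len(chunk):X}".encode("ascii")
            yield size_line + b"\r\n" + chunk + b"\r\n"
    yield b"0\r\n\r\n"  # terminating chunk: the segment is complete


def decode_chunked(body: bytes) -> List[bytes]:
    """Split a chunked body back into its pieces (no trailers or extensions)."""
    pieces: List[bytes] = []
    pos = 0
    while True:
        line_end = body.index(b"\r\n", pos)
        size = int(body[pos:line_end].decode("ascii"), 16)
        if size == 0:
            return pieces
        start = line_end + 2
        pieces.append(body[start:start + size])
        pos = start + size + 2  # skip the CRLF that follows the chunk data


if __name__ == "__main__":
    # Placeholder payloads standing in for the encoded pieces of one segment.
    segment_pieces = [b"piece-1", b"piece-2", b"piece-3"]
    wire = b"".join(encode_chunked(segment_pieces))
    print(decode_chunked(wire) == segment_pieces)
```

Because `encode_chunked` is a generator, each piece can be written to the socket as soon as the encoder produces it, without waiting for the whole segment.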

Apple's implementation of low latency

- The client was sending a playlist update request along with each part request

- that is, one request for each part, which dramatically increases the load on the server

- built on server push (HTTP/2)

- The client does not have to request the content and wait for the round trips. Parts can be announced in the manifest before they are prepared, so the player can anticipate newer parts without having to request them.

- FFmpeg doesn't support sub-second video slicing for LL-HLS

- Developing a media server with HTTP/2 functionality

- Most existing players don't support incomplete segment files (see the hls.js development milestones)
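To make the earlier point about announcing parts in the manifest more concrete, here is a minimal sketch that renders the playlist lines for one segment that is still being produced. The tag names `EXT-X-PART` and `EXT-X-PRELOAD-HINT` follow my understanding of the published LL-HLS specification, which announces the upcoming part with a preload hint; the function name, the file naming scheme, and the durations are hypothetical. It is only a fragment: a valid playlist needs further header tags.

```python
from typing import List


def render_parts(segment_index: int, ready_parts: int, part_duration_s: float) -> List[str]:
    """Build the playlist lines for the parts of one in-progress segment."""
    lines: List[str] = []
    for part in range(ready_parts):
        uri = f"seg{segment_index}.part{part}.mp4"  # hypothetical naming scheme
        lines.append(f'#EXT-X-PART:DURATION={part_duration_s:.3f},URI="{uri}"')
    # Announce the next part before it has been produced, so the player
    # knows what is coming without polling for it.
    next_uri = f"seg{segment_index}.part{ready_parts}.mp4"
    lines.append(f'#EXT-X-PRELOAD-HINT:TYPE=PART,URI="{next_uri}"')
    return lines


if __name__ == "__main__":
    for line in render_parts(segment_index=7, ready_parts=2, part_duration_s=0.333):
        print(line)
```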

Discussion on LL-HLS - Wowza Webinar | LL-HLS Test tools | LHLS Proof of concept | MUX blog | LL-DASH | AWS LL-HLS

Apple LL-HLS is not yet supported by the majority of clients and CDNs (iOS 13 and up)