Contents

- Lab 1 - Getting started with Apache NiFi

- Consuming the Meetup RSVP stream

- Extracting JSON elements we are interested in

- Splitting JSON into smaller fragments

- Writing JSON to File System

- Lab 2

- Access the cluster

- Lab 3 - MiNiFi CPP and Java Agents with EFM

- Designing the MiNiFi Flow

- Preparing the flow

- Running MiNiFi agents

- Lab 4 - Kafka Basics

- Creating a topic

- Producing data

- Consuming data

- Lab 5 - Integrating Kafka with NiFi

- Creating the Kafka topic

- Adding the Kafka producer processor

- Verifying the data is flowing

- Lab 6 - Integrating the Schema Registry

- Creating the Kafka topic

- Adding the Meetup Avro Schema

- Sending Avro data to Kafka

Login to Ambari

- Log in to the Ambari web UI by opening http://{YOUR_IP}:8080 and signing in with admin/StrongPassword

- You will see a list of Hadoop components running on your node on the left side of the page

  - They should all show green (i.e. started) status. If not, start them from Ambari via the 'Service Actions' menu for that service

NiFi Installation Directory

- NiFi is installed at: /usr/hdf/current/nifi

Lab 1

In this lab, we will learn how to:

- Consume the Meetup RSVP stream

- Extract the JSON elements we are interested in

- Split the JSON into smaller fragments

- Write the JSON to the file system

Consuming RSVP Data

To get started we need to consume the data from the Meetup RSVP stream, extract what we need, split the content and save it to a file:

Goals:

- Consume Meetup RSVP stream

- Extract the JSON elements we are interested in

- Split the JSON into smaller fragments

- Write the JSON files to disk

Our final flow for this lab will look like the following:

A template for this flow can be found here

- Step 1: Add a ConnectWebSocket processor to the canvas

  - In case you are using a downloaded template, the ControllerService will be prepopulated. You will need to enable the ControllerService. Double-click the processor and follow the arrow next to the JettyWebSocketClient

  - Set the WebSocket URI of the JettyWebSocketClient to ws://stream.meetup.com/2/rsvps

  - Set WebSocket Client ID to your favorite number.

- Step 2: Add an UpdateAttribute processor

  - Configure it to have a custom property called mime.type with the value of application/json

- Step 3: Add an EvaluateJsonPath processor and configure it as shown below:

  The properties to add are:

  - event.name: $.event.event_name

  - event.url: $.event.event_url

  - group.city: $.group.group_city

  - group.state: $.group.group_state

  - group.country: $.group.group_country

  - group.name: $.group.group_name

  - venue.lat: $.venue.lat

  - venue.lon: $.venue.lon

  - venue.name: $.venue.venue_name

- Step 4: Add a SplitJson processor and configure the JsonPath Expression to be $.group.group_topics

- Step 5: Add a ReplaceText processor and configure the Search Value to be ([{])([\S\s]+)([}]) and the Replacement Value to be

      {
        "event_name": "${event.name}",
        "event_url": "${event.url}",
        "venue" : {
          "lat": "${venue.lat}",
          "lon": "${venue.lon}",
          "name": "${venue.name}"
        },
        "group": {
          "group_city": "${group.city}",
          "group_country": "${group.country}",
          "group_name": "${group.name}",
          "group_state": "${group.state}",
          $2
        }
      }

- Step 6: Add a PutFile processor to the canvas and configure it to write the data out to /tmp/rsvp-data
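The work done by Steps 3 to 5 can be approximated outside NiFi to see what each processor contributes. The following is a minimal Python sketch, not NiFi code: the sample RSVP record and the helper names (`extract_attributes`, `split_topics`, `rebuild`) are hypothetical, and only the attribute names, JSON paths, search pattern and replacement template are taken from the steps above.

```python
import json
import re

# Hypothetical RSVP record; only the fields read by the flow are included.
SAMPLE_RSVP = {
    "event": {"event_name": "NiFi Meetup", "event_url": "https://example.com/e/1"},
    "venue": {"lat": 40.7, "lon": -74.0, "venue_name": "Example Hall"},
    "group": {
        "group_city": "New York",
        "group_state": "NY",
        "group_country": "us",
        "group_name": "Example Group",
        "group_topics": [
            {"urlkey": "big-data", "topic_name": "Big Data"},
            {"urlkey": "apache-nifi", "topic_name": "Apache NiFi"},
        ],
    },
}

# Attribute name -> key path, mirroring the EvaluateJsonPath properties.
ATTRIBUTE_PATHS = {
    "event.name": ("event", "event_name"),
    "event.url": ("event", "event_url"),
    "group.city": ("group", "group_city"),
    "group.state": ("group", "group_state"),
    "group.country": ("group", "group_country"),
    "group.name": ("group", "group_name"),
    "venue.lat": ("venue", "lat"),
    "venue.lon": ("venue", "lon"),
    "venue.name": ("venue", "venue_name"),
}

# Search Value and Replacement Value from the ReplaceText step.
SEARCH_VALUE = re.compile(r"([{])([\S\s]+)([}])")
REPLACEMENT_VALUE = (
    '{ "event_name": "${event.name}", "event_url": "${event.url}", '
    '"venue" : { "lat": "${venue.lat}", "lon": "${venue.lon}", "name": "${venue.name}" }, '
    '"group": { "group_city": "${group.city}", "group_country": "${group.country}", '
    '"group_name": "${group.name}", "group_state": "${group.state}", $2 } }'
)


def extract_attributes(rsvp):
    """Approximate EvaluateJsonPath: copy selected JSON values into string attributes."""
    attributes = {}
    for name, path in ATTRIBUTE_PATHS.items():
        value = rsvp
        for key in path:
            value = value.get(key) if isinstance(value, dict) else None
        attributes[name] = "" if value is None else str(value)
    return attributes


def split_topics(rsvp):
    """Approximate SplitJson on $.group.group_topics: one JSON string per topic."""
    return [json.dumps(topic) for topic in rsvp["group"]["group_topics"]]


def rebuild(fragment, attributes):
    """Approximate ReplaceText: place the fragment's inner text ($2) into the template."""
    match = SEARCH_VALUE.search(fragment)
    if match is None:
        return fragment  # nothing matched, so the content is left unchanged
    text = REPLACEMENT_VALUE
    for name, value in attributes.items():
        text = text.replace("${" + name + "}", value)
    return text.replace("$2", match.group(2))


attributes = extract_attributes(SAMPLE_RSVP)
for fragment in split_topics(SAMPLE_RSVP):
    print(rebuild(fragment, attributes))
```

In this sketch each group topic produces one output record, and values such as venue.lat end up as quoted strings because the template wraps every attribute in double quotes.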

Questions to Answer

- What does a full RSVP JSON object look like?

- How many output files do you end up with?

- How can you change the file name that JSON is saved as from PutFile?

- Why do you think we are splitting out the RSVPs by group?

- Why are we using the UpdateAttribute processor to add a mime.type?

- How can you change the flow to get the member photo from the JSON file and download it?

Lab 2

Accessing your Cluster

Credentials will be provided for these services by the instructor:

- SSH

- Ambari

Use your Cluster

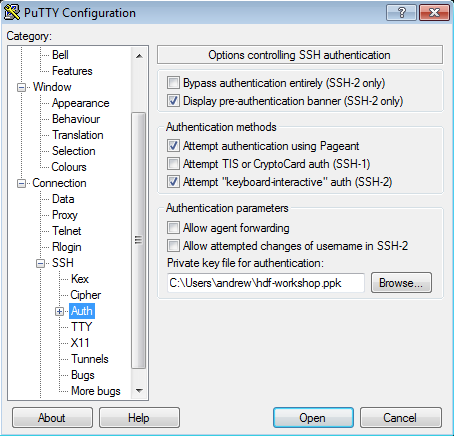

To connect using Putty from a Windows laptop

NOTE: The following instructions are for using Putty. You can also use other popular SSH tools such as MobaXterm or SmarTTY

- You were sent a PEM and a PPK.

- Use Putty to connect to your node using the ppk key:

  - Create a new session called cdf-workshop

  - For the Host Name use: centos@IP_ADDRESS_OF_EC2_NODE

  - Click "Save" on the session page before logging in

To connect from a Linux/MacOSX laptop

- SSH into your EC2 node using the steps below:

  - Copy the pem key to the ~/.ssh dir and correct its permissions

    cp ~/Downloads/cdf.pem ~/.ssh/

    chmod 400 ~/.ssh/cdf.pem

  - Log in to the EC2 node you have been assigned by replacing IP_ADDRESS_OF_EC2_NODE below with the EC2 node IP address (your instructor will provide this)

    ssh -i ~/.ssh/cdf.pem centos@IP_ADDRESS_OF_EC2_NODE

  - To change user to root you can:

    sudo su -

Lab 3

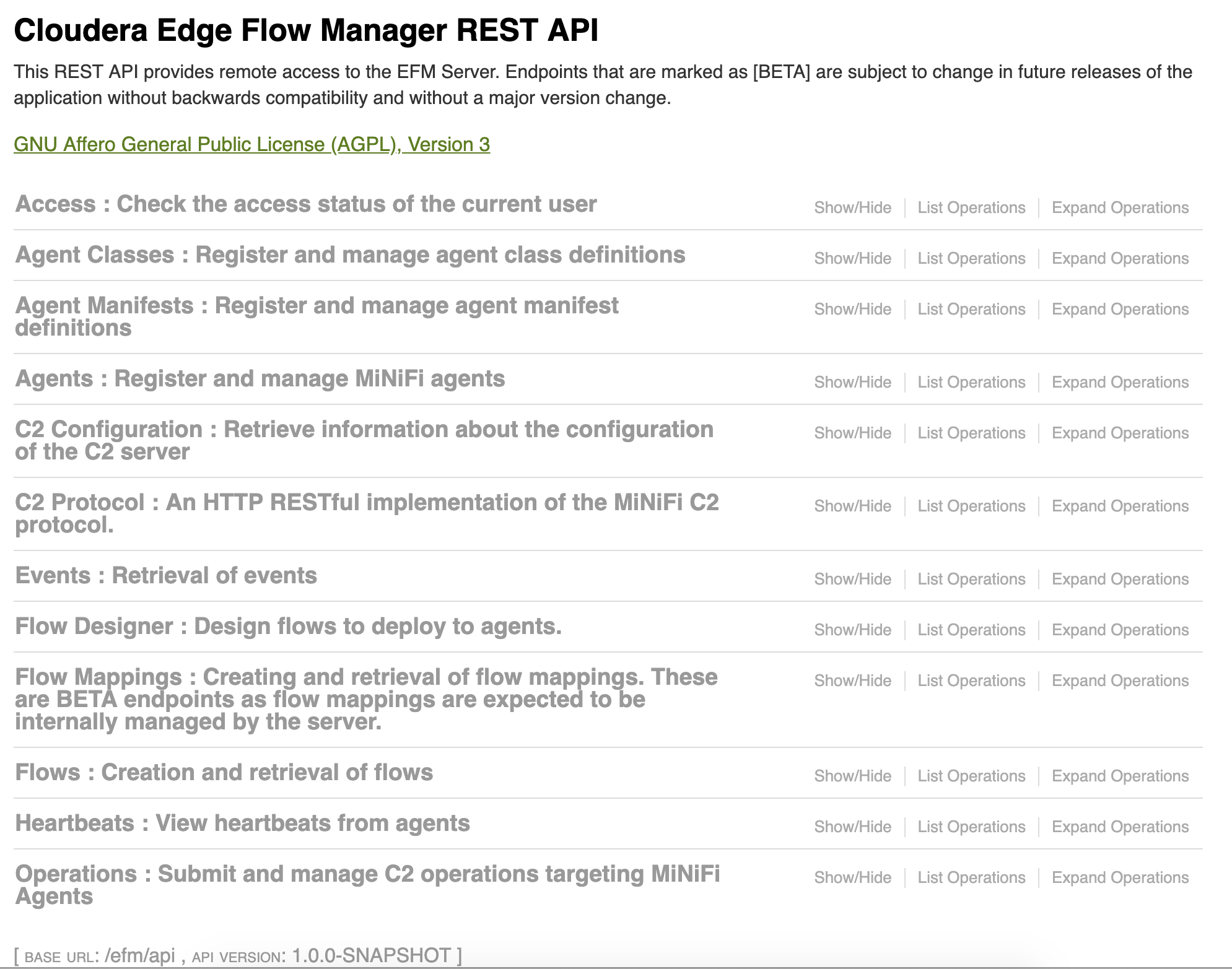

Getting started with MiNiFi and EFM

In this lab, we will learn how to configure MiNiFi to send data to NiFi:

- Setting up the Flow for NiFi

- Setting up the Flow for MiNiFi

- Preparing the flow for MiNiFi

- Configuring and starting MiNiFi

- Enjoying the data flow!

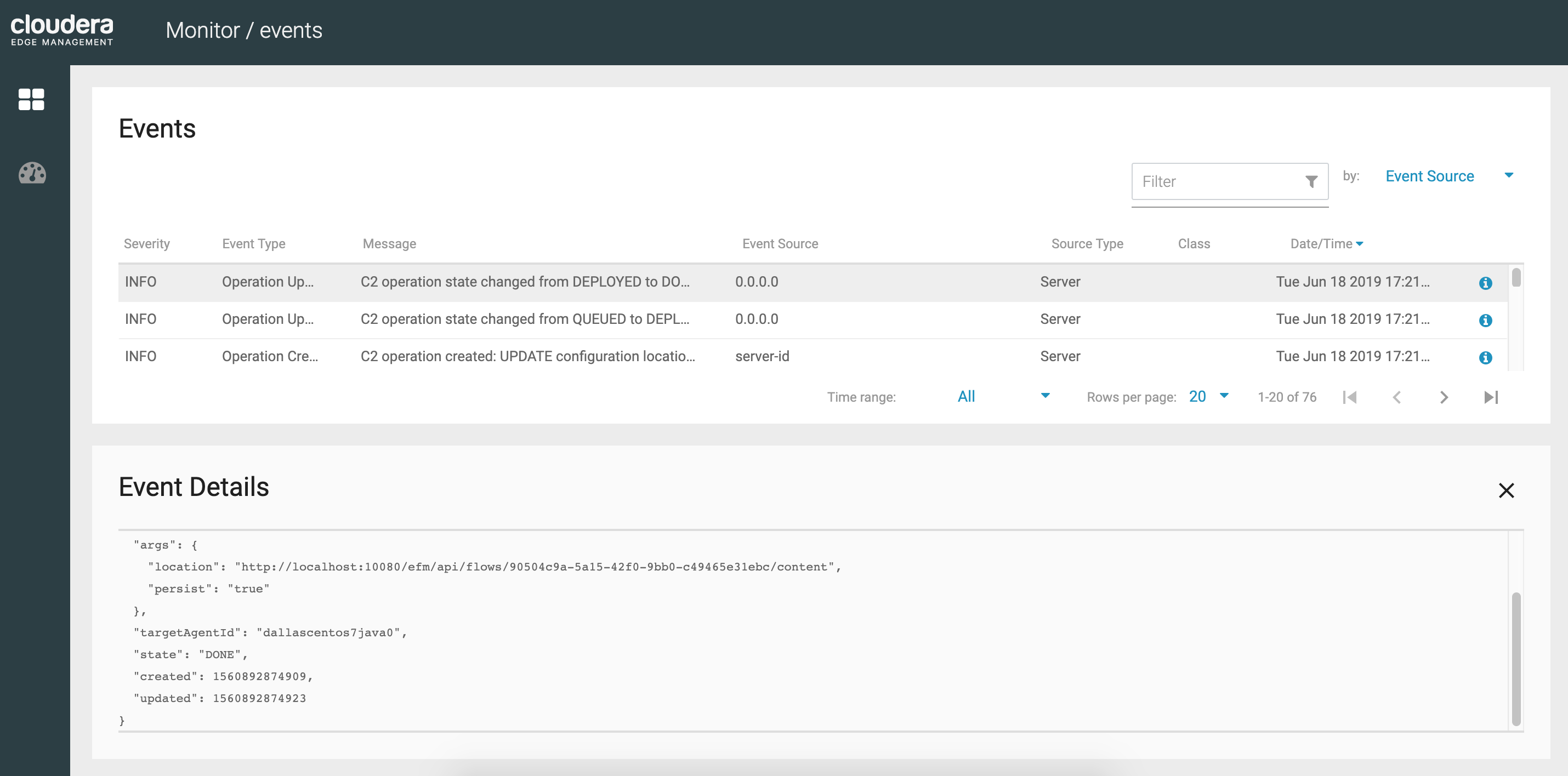

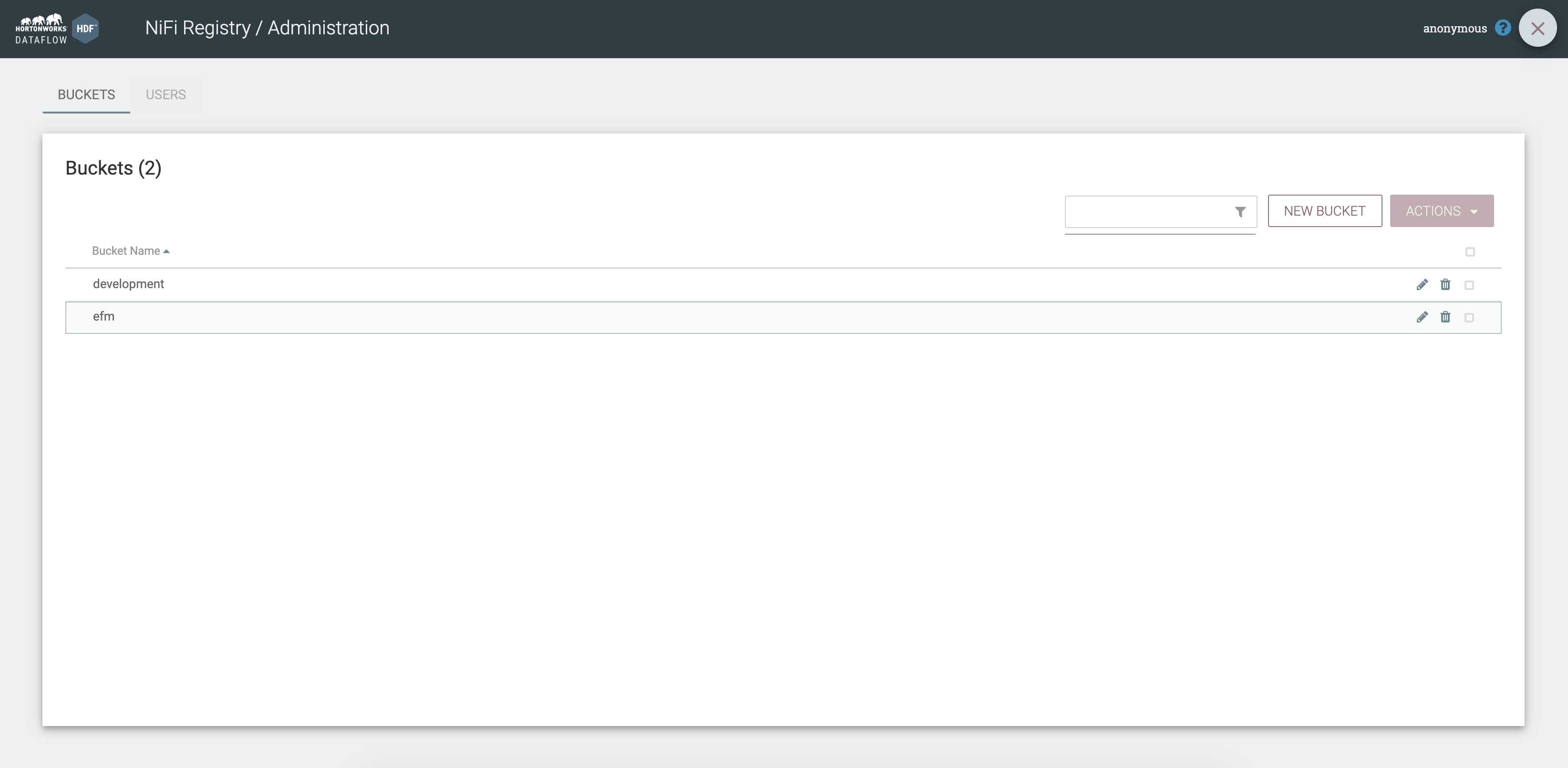

Go to NiFi Registry and create a bucket named cem

As root (sudo su -) start EFM, MiNiFi C++, MiNiFi Java

/etc/efm/efm-1.0.0.1.0.0.0-54/bin/efm.sh start

/etc/minifi-cpp/nifi-minifi-cpp-0.6.0/bin/run.sh

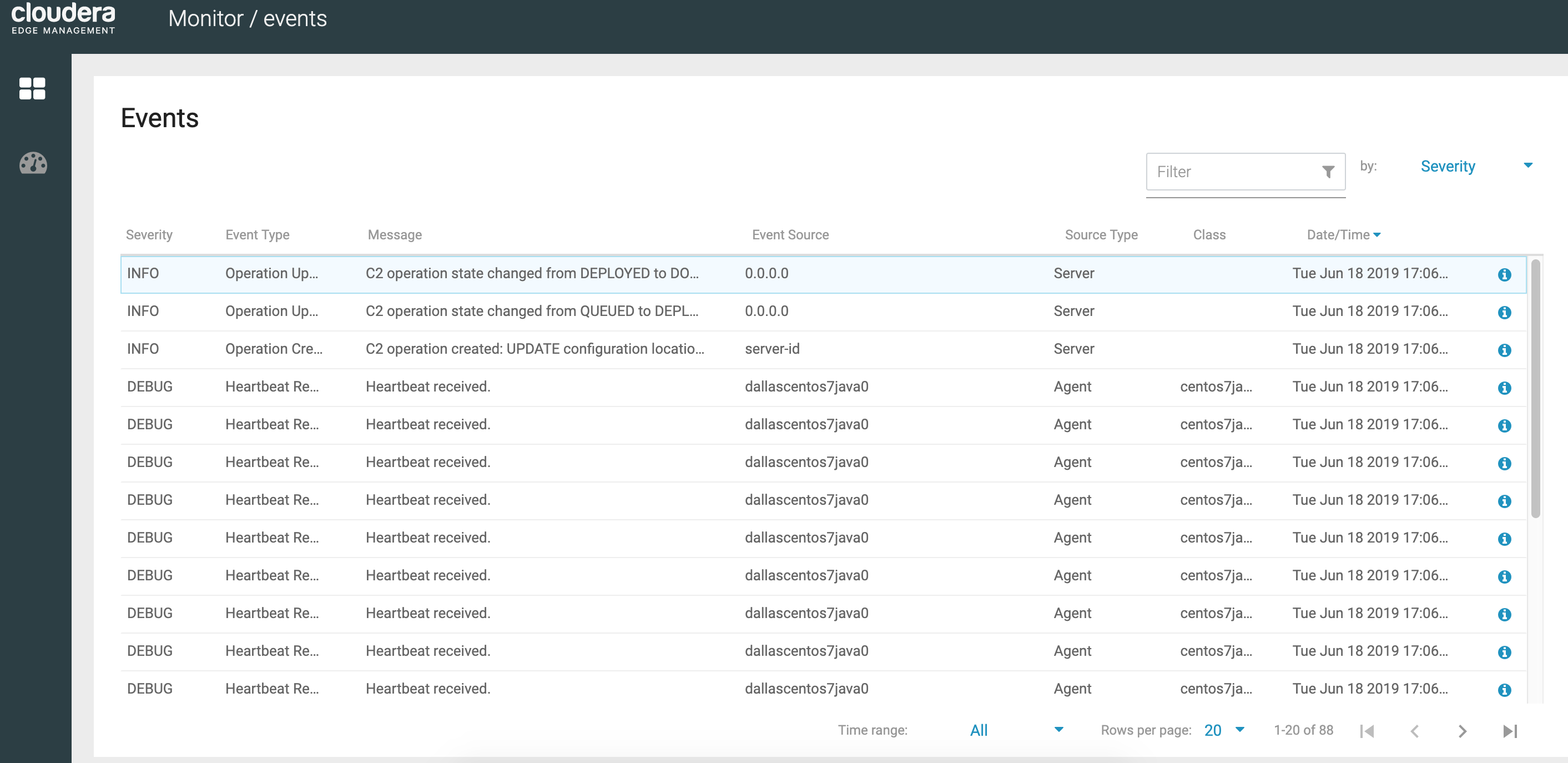

/etc/minifi-java/minifi-0.6.0.1.0.0.0-54/bin/minifi.sh start

Visit EFM UI

You should see heartbeats coming from the agent.

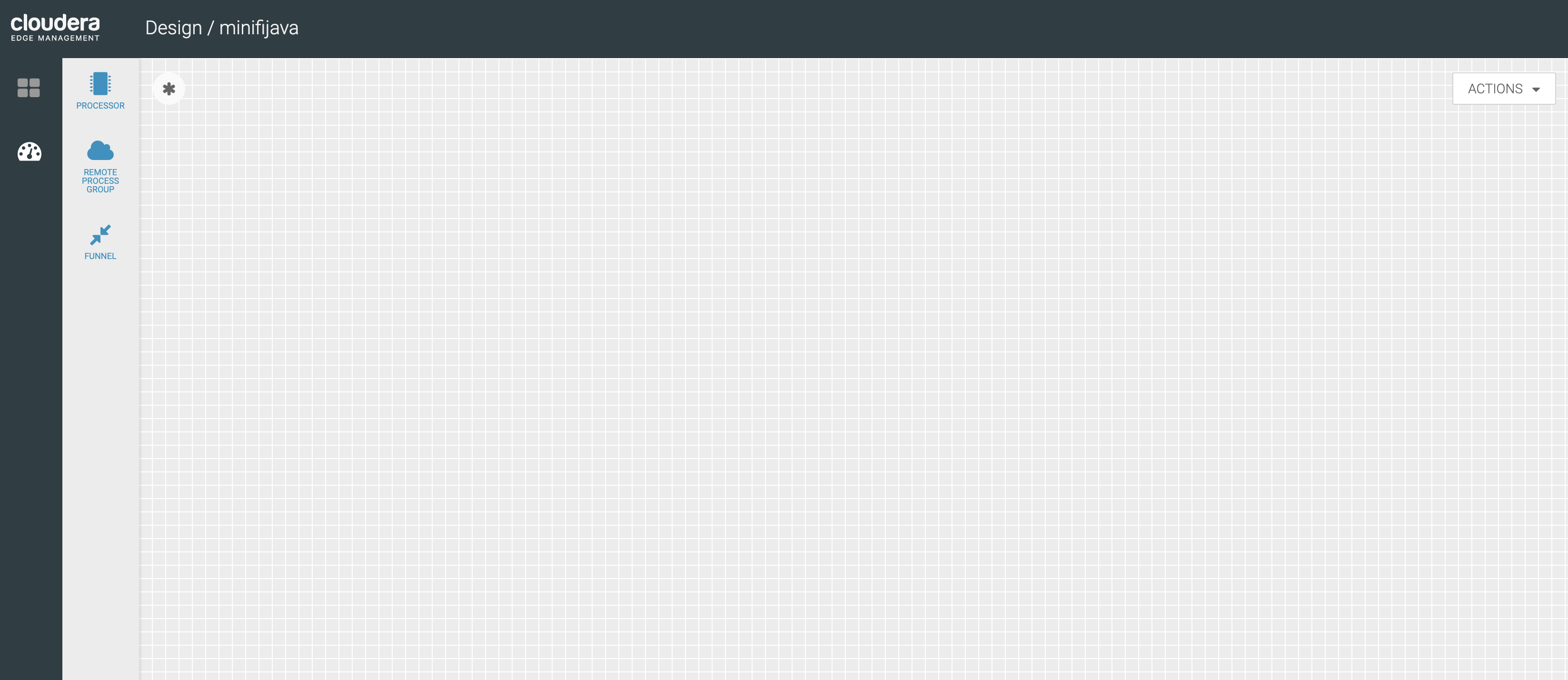

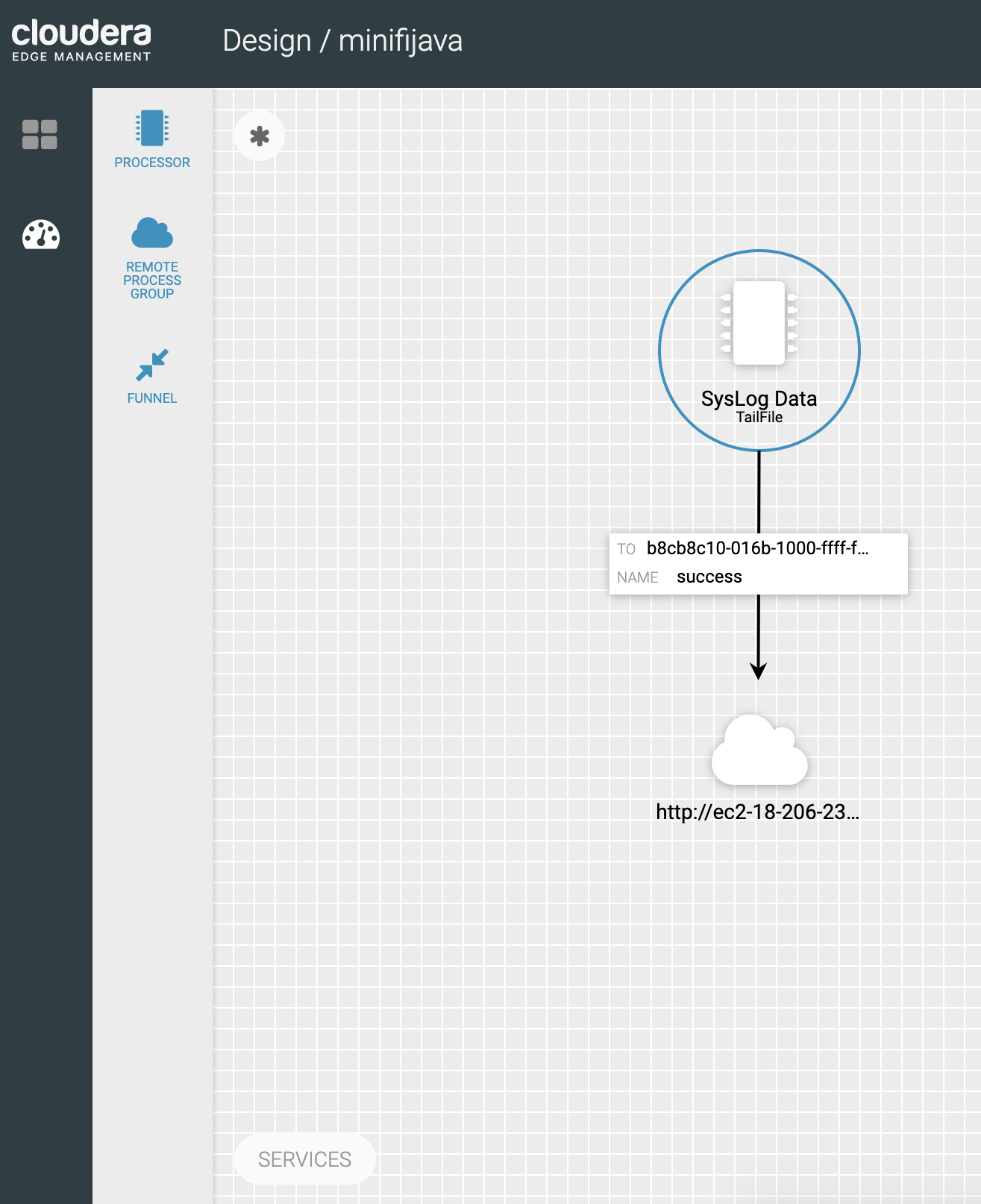

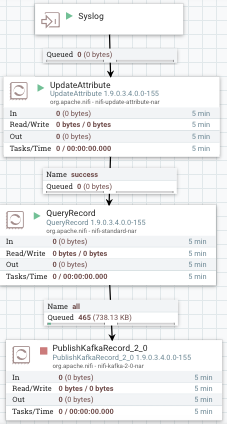

Now, on the root canvas, create a simple flow to collect local syslog messages and forward them to NiFi, where the logs will be parsed, transformed into another format and pushed to a Kafka topic.

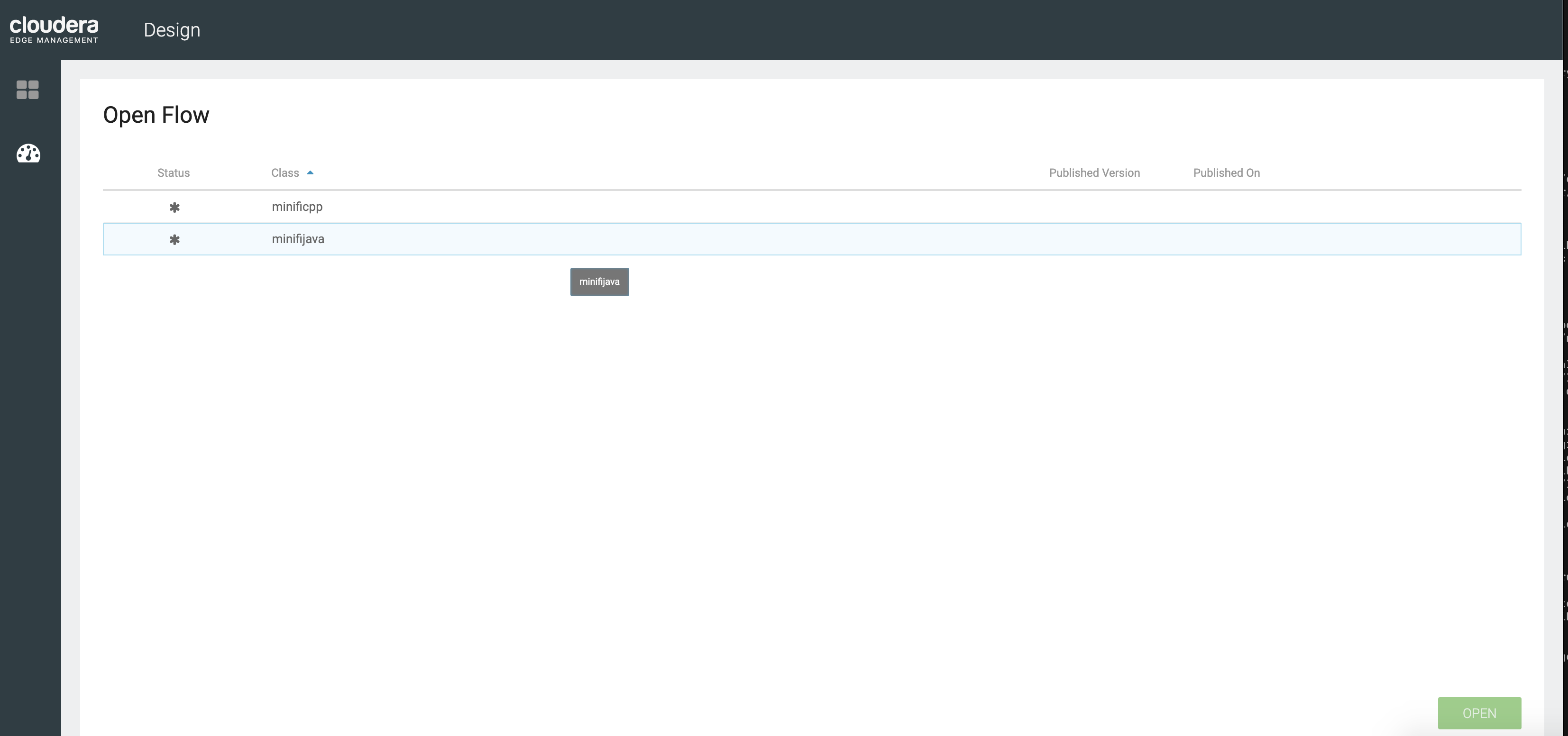

Our Java MiNiFi Agent has been tagged with the class 'minifijava' (check nifi.c2.agent.class property in /etc/minifi-java/minifi-0.6.0.1.0.0.0-54/conf/bootstrap.conf) so we are going to create a template under this specific class.

Our MiNiFi C++ Agent has been tagged with the class 'minificpp' (check nifi.c2.agent.class property in /etc/minifi-cpp/nifi-minifi-cpp-0.6.0/conf/minifi.properties) so we are going to create a template under this specific class.

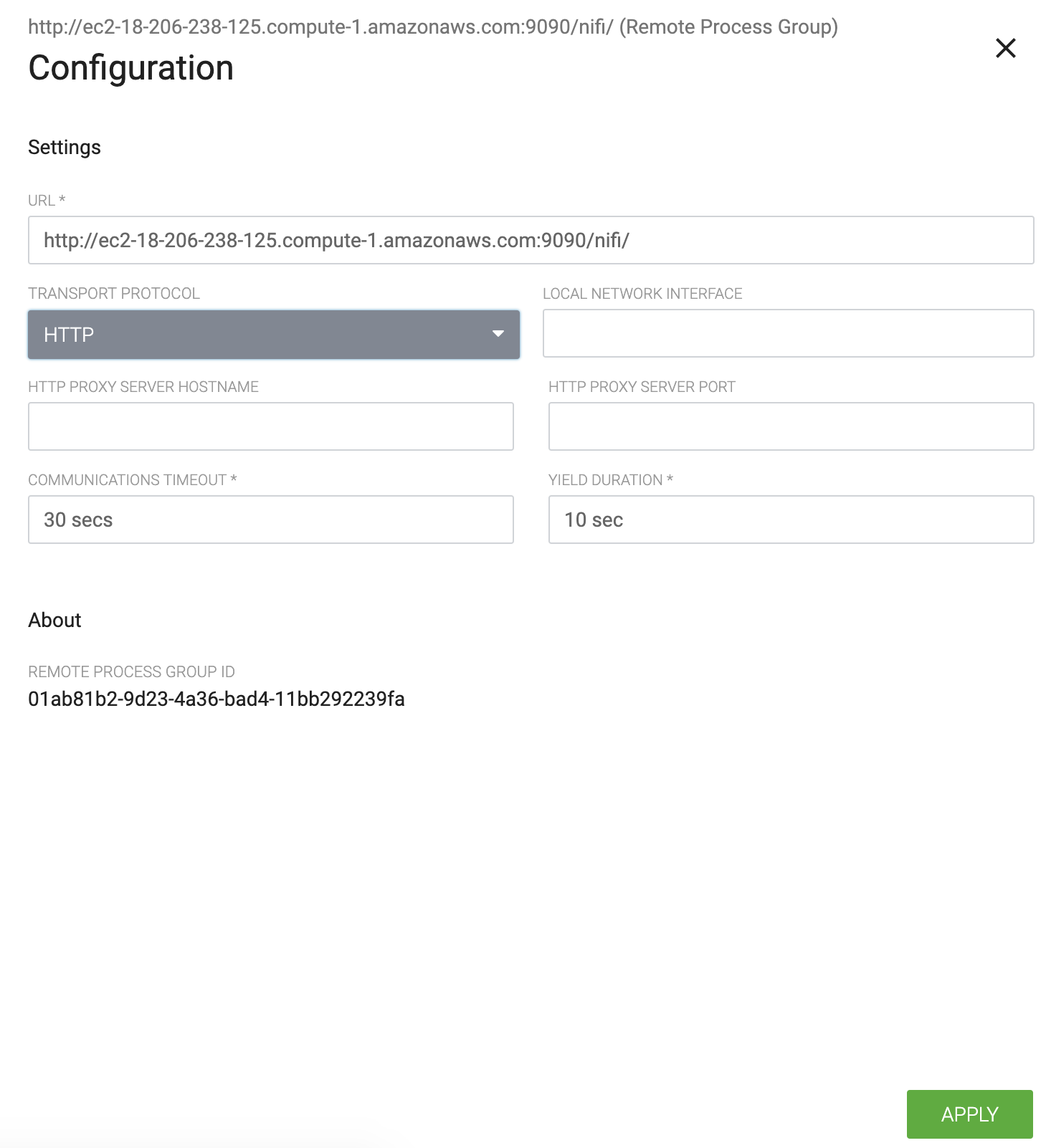

But first we need to add an Input Port to the root canvas of NiFi and build a flow as described before. Input Ports are used to receive flow files from remote MiNiFi agents or other NiFi instances. We create one for each agent class to keep things simple.

Don't forget to create a new Kafka topic.

We are going to use a Grok parser to parse the Syslog messages. Here is a Grok expression that can be used to parse this log format:

%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}
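To see which fields this expression produces, here is a rough Python equivalent. It is only a sketch: the sub-patterns are simplified approximations of the Grok definitions for SYSLOGTIMESTAMP, SYSLOGHOST, DATA, POSINT and GREEDYDATA, and the function name `parse_syslog` and the sample log line are hypothetical.

```python
import re

# Simplified stand-ins for the Grok patterns used in the expression above.
SYSLOG_LINE = re.compile(
    r"(?P<syslog_timestamp>[A-Z][a-z]{2}\s+\d{1,2} \d{2}:\d{2}:\d{2}) "  # SYSLOGTIMESTAMP
    r"(?P<syslog_hostname>\S+) "                                         # SYSLOGHOST
    r"(?P<syslog_program>.*?)"                                           # DATA (lazy)
    r"(?:\[(?P<syslog_pid>[1-9]\d*)\])?: "                               # optional [POSINT]
    r"(?P<syslog_message>.*)"                                            # GREEDYDATA
)


def parse_syslog(line):
    """Return the named fields of a syslog line, or None if it does not match."""
    match = SYSLOG_LINE.match(line)
    return match.groupdict() if match else None


if __name__ == "__main__":
    sample = "Mar  3 10:15:01 node1 sshd[1234]: Accepted publickey for centos"
    print(parse_syslog(sample))
```

When the program has no bracketed pid, as in many kernel messages, syslog_pid is simply None in this sketch.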

Now that we have built the Apache NiFi flow that will receive the logs, let's go back to the EFM UI and build the MiNiFi flow as below:

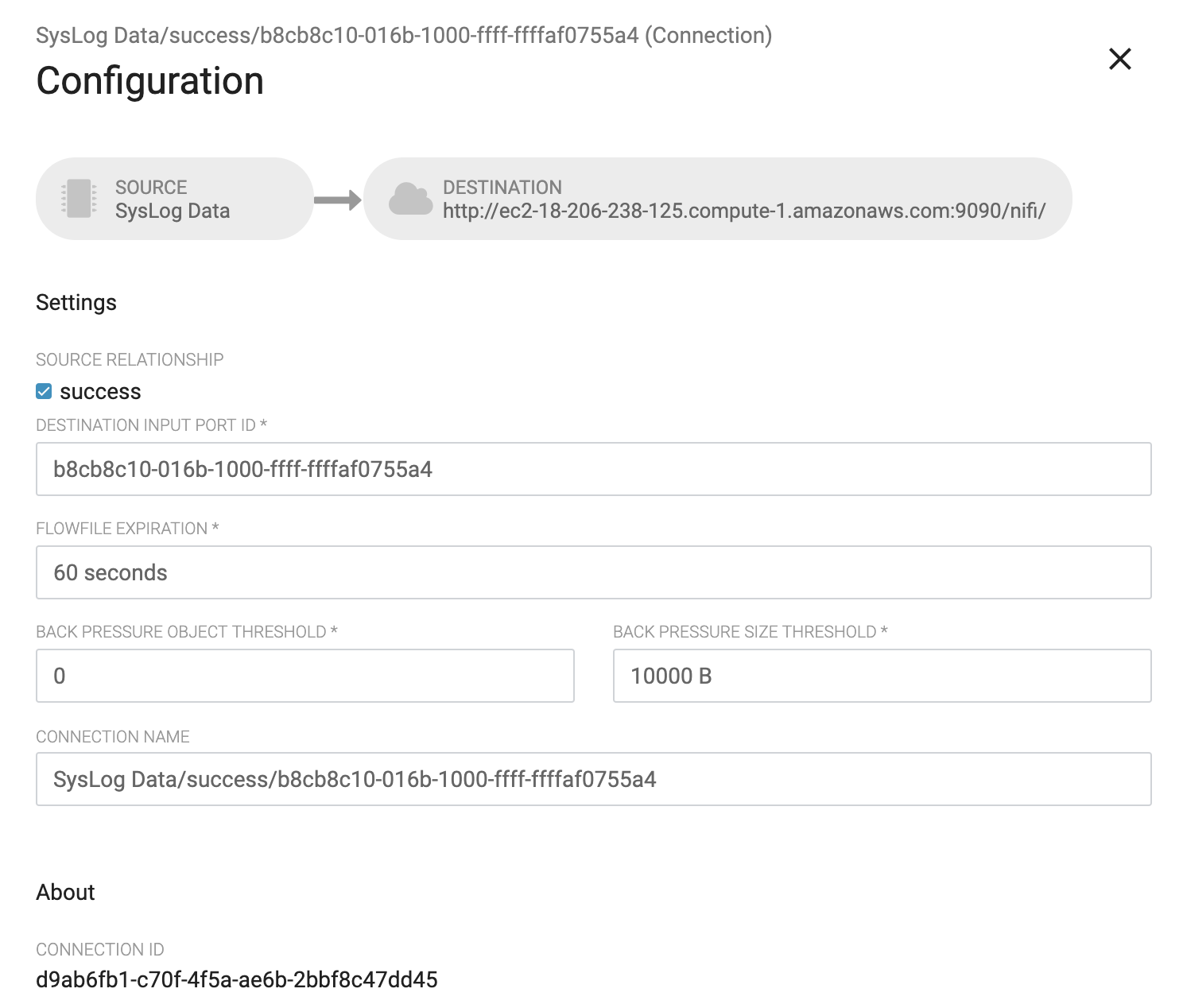

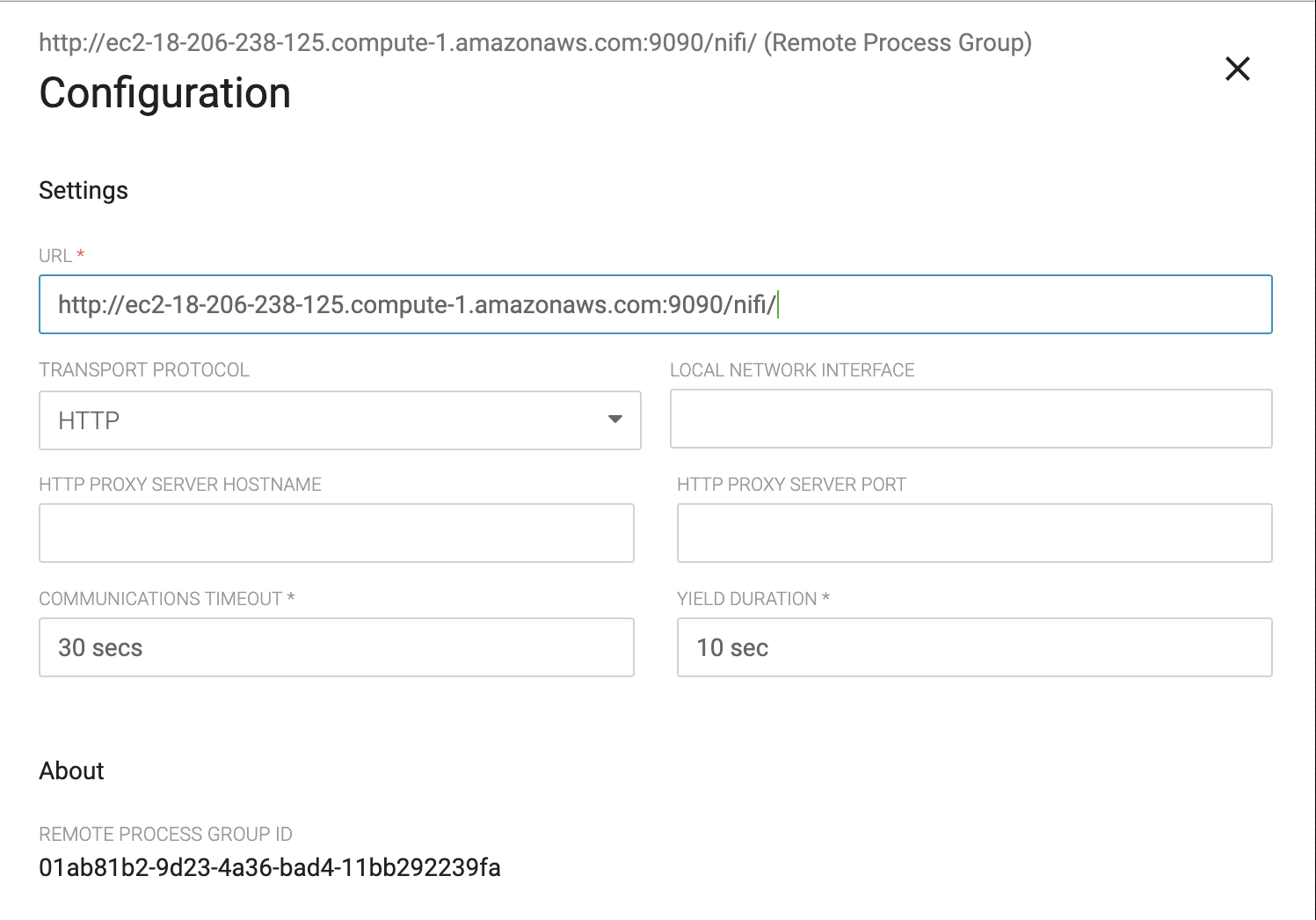

This MiNiFi agent will tail /var/log/messages or /var/log/secure and send the logs to a remote process group (our NiFi instance) using the Input Port.

Check to make sure only 1 concurrent call is configured.

Please note that the NiFi instance has been configured to receive data over HTTP only, not RAW!

Now we can start the NiFi flow and publish the MiNiFi flow to NiFi Registry (Actions > Publish...)

Visit NiFi Registry UI to make sure your flow has been published successfully.

Within a few seconds, you should see syslog messages streaming through your NiFi flow and being published to the Kafka topic you created.

Now we should be ready to create our flow. To do this do the following:

- The first thing we are going to do is set up an Input Port. This is the port that MiNiFi will be sending data to. To do this drag the Input Port icon to the canvas and call it whatever you like. We are only interested in the id.

- Now that the Input Port is configured we need to have somewhere for the data to go once we receive it. In this case we will keep it very simple and just log the attributes. To do this drag the Processor icon to the canvas and choose the LogAttribute processor.

You may tail the log of the MiNiFi application (see Log Directories) with:

tail -f logs/minifi-app.log

If you see error logs such as "the remote instance indicates that the port is not in a valid state", it is because the Input Port has not been started. Start the port and you will see messages being accumulated in its downstream queue.

Lab 4

Kafka Basics

In this lab we are going to explore creating, writing to and consuming Kafka topics. This will come in handy when we later integrate Kafka with NiFi and Streaming Analytics Manager. See: https://kafka.apache.org/quickstart

- Creating a topic

  - Step 1: Open an SSH connection to your EC2 Node.

  - Step 2: Navigate to the Kafka directory (/usr/hdf/current/kafka-broker). This is where Kafka is installed; we will use the utilities located in the bin directory.

    cd /usr/hdf/current/kafka-broker/

  - Step 3: Create a topic using the kafka-topics.sh script

    bin/kafka-topics.sh --create --zookeeper demo.hortonworks.com:2181 --replication-factor 1 --partitions 1 --topic meetup_rsvp_raw

    NOTE: Based on how Kafka reports metrics, topics with a period ('.') or underscore ('_') may collide with metric names and should be avoided. If they cannot be avoided, then you should only use one of them.

  - Step 4: Ensure the topic was created

    bin/kafka-topics.sh --list --zookeeper demo.hortonworks.com:2181
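The note about periods and underscores in topic names can be illustrated with a small check. This is a sketch under the assumption that two names collide when they become identical once every period is replaced by an underscore, which is how metric names are commonly derived; the helper names `has_collision_chars` and `collides` are hypothetical and are not part of the Kafka command line tools.

```python
def has_collision_chars(topic):
    """True if the topic name contains a period or an underscore."""
    return "." in topic or "_" in topic


def collides(topic_a, topic_b):
    """True if the two names are identical once '.' is replaced by '_'."""
    return topic_a.replace(".", "_") == topic_b.replace(".", "_")


if __name__ == "__main__":
    # The workshop topic uses underscores only, so it is safe as long as
    # no topic with the same name written with periods is created.
    print(has_collision_chars("meetup_rsvp_raw"))
    print(collides("meetup_rsvp_raw", "meetup.rsvp.raw"))
    print(collides("meetup_rsvp_raw", "meetup_rsvp_avro"))
```

Sticking to one of the two characters across all topics, as the workshop does with underscores, keeps such pairs from arising.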

- Testing Producers and Consumers

  - Step 1: Open a second terminal to your EC2 node and navigate to the Kafka directory

  - In one shell window connect a consumer:

    bin/kafka-console-consumer.sh --bootstrap-server demo.hortonworks.com:6667 --topic meetup_rsvp_raw --from-beginning

    Note: using --from-beginning will tell the broker we want to consume from the first message in the topic. Otherwise it will be from the latest offset.

  - In the second shell window connect a producer:

    bin/kafka-console-producer.sh --broker-list demo.hortonworks.com:6667 --topic meetup_rsvp_raw

  - Sending messages. Now that the producer is connected we can type messages.

    - Type a message in the producer window

  - Messages should appear in the consumer window.

  - Close the consumer (Ctrl-C) and reconnect using the default offset, latest. You will now see only new messages typed in the producer window.

  - As you type messages in the producer window they should appear in the consumer window.

Make sure all the messages are consumed from the topic before closing as we will use this topic for your integration example.
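The difference between --from-beginning and the default latest offset can be pictured with a tiny in-memory model. This is only an illustration of the offset idea and does not use any Kafka client library; the class names `TopicLog` and `ModelConsumer` are hypothetical.

```python
class TopicLog:
    """A single-partition topic modelled as an append-only list of messages."""

    def __init__(self):
        self.messages = []

    def append(self, message):
        self.messages.append(message)


class ModelConsumer:
    """Reads from a TopicLog starting at offset 0 or at the current end of the log."""

    def __init__(self, log, from_beginning=False):
        self._log = log
        # from_beginning mimics --from-beginning; otherwise start at the latest offset.
        self._offset = 0 if from_beginning else len(log.messages)

    def poll(self):
        """Return every message appended since the last poll."""
        new_messages = self._log.messages[self._offset:]
        self._offset = len(self._log.messages)
        return new_messages


if __name__ == "__main__":
    log = TopicLog()
    log.append("first")
    log.append("second")

    replaying = ModelConsumer(log, from_beginning=True)
    latest_only = ModelConsumer(log)

    log.append("third")
    print(replaying.poll())    # all three messages
    print(latest_only.poll())  # only the message produced after it connected
```

This mirrors what you observe in the two terminal windows: a consumer reconnecting without --from-beginning sees only what is typed after it connects.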

Lab 5

Integrating Kafka with NiFi

Using the topic already created, meetup_rsvp_raw, we will publish from Apache NiFi.

- Integrating NiFi

  - Step 1: Add a PublishKafka_*_0 processor to the canvas.

  - Step 2: Add a routing for the success relationship of the ReplaceText processor to the PublishKafka_*_0 processor added in Step 1 as shown below:

  - Step 3: Configure the topic and broker for the PublishKafka_*_0 processor, where topic is meetup_rsvp_raw and broker is localhost:6667.

  - Start the NiFi flow

  - In a terminal window on your EC2 node, navigate to the Kafka directory and connect a consumer to the meetup_rsvp_raw topic:

    bin/kafka-console-consumer.sh --bootstrap-server demo.hortonworks.com:6667 --topic meetup_rsvp_raw --from-beginning

  - Messages should now appear in the consumer window.

Lab 6

Integrating the Schema Registry

- Creating the topic

  - Step 1: Open an SSH connection to your EC2 Node.

  - Step 2: Navigate to the Kafka directory (/usr/hdf/current/kafka-broker). This is where Kafka is installed; we will use the utilities located in the bin directory.

    cd /usr/hdf/current/kafka-broker/

  - Step 3: Create a topic using the kafka-topics.sh script

    bin/kafka-topics.sh --create --zookeeper demo.hortonworks.com:2181 --replication-factor 1 --partitions 1 --topic meetup_rsvp_avro

    NOTE: Based on how Kafka reports metrics, topics with a period ('.') or underscore ('_') may collide with metric names and should be avoided. If they cannot be avoided, then you should only use one of them.

  - Step 4: Ensure the topic was created

    bin/kafka-topics.sh --list --zookeeper demo.hortonworks.com:2181

- Adding the Schema to the Schema Registry

  - Step 1: Open a browser and navigate to the Schema Registry UI. You can get to this either from the Quick Links drop down in Ambari, as shown below:

    or by going to http://<EC2_NODE>:7788/ui/#/

  - Step 2: Create Meetup RSVP Schema in the Schema Registry

    - Click on the “+” button to add new schemas. A window called “Add New Schema” will appear.

    - Fill in the fields of the Add Schema Dialog as follows:

      For the Schema Text you can download it here and either copy and paste it or upload the file.

      Once the schema information fields have been filled and schema uploaded, click Save.

- We are now ready to integrate the schema with NiFi

  - Step 0: Remove the PutFile and PublishKafka* processors from the canvas; we will not need them for this section.

  - Step 1: Add an UpdateAttribute processor to the canvas.

  - Step 2: Add a routing for the success relationship of the ReplaceText processor to the UpdateAttribute processor added in Step 1.

  - Step 3: Configure the UpdateAttribute processor as shown below:

  - Step 4: Add a JoltTransformJSON processor to the canvas.

  - Step 5: Add a routing for the success relationship of the UpdateAttribute processor to the JoltTransformJSON processor added in Step 4.

  - Step 6: Configure the JoltTransformJSON processor as shown below:

    The JSON used in the 'Jolt Specification' property is as follows:

        {
          "venue": {
            "lat": ["=toDouble", 0.0],
            "lon": ["=toDouble", 0.0]
          }
        }

  - Step 7: Add a LogAttribute processor to the canvas.

  - Step 8: Add a routing for the failure relationship of the JoltTransformJSON processor to the LogAttribute processor added in Step 7.

  - Step 9: Add a PublishKafkaRecord_*_0 processor to the canvas.

  - Step 10: Add a routing for the success relationship of the JoltTransformJSON processor to the PublishKafkaRecord_*_0 processor added in Step 9.

  - Step 11: Configure the PublishKafkaRecord_*_0 processor to look like the following:

  - Step 12: When you configure the JsonTreeReader and AvroRecordSetWriter, you will first need to configure a schema registry controller service. The schema registry controller service we are going to use is the 'HWX Schema Registry'; it should be configured as shown below:

  - Step 13: Configure the JsonTreeReader as shown below:

  - Step 14: Configure the AvroRecordSetWriter as shown below:

After following the above steps this section of your flow should look like the following:

  - Start the NiFi flow

  - In a terminal window on your EC2 node, navigate to the Kafka directory and connect a consumer to the meetup_rsvp_avro topic:

    bin/kafka-console-consumer.sh --bootstrap-server demo.hortonworks.com:6667 --topic meetup_rsvp_avro --from-beginning

  - Messages should now appear in the consumer window.
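The Jolt specification from Step 6 converts the venue coordinates from strings to doubles before the record is written as Avro. The following Python sketch shows the intended effect as we understand it: each value is converted with a fallback of 0.0 when conversion fails. It is an approximation rather than Jolt itself, the function names `to_double` and `apply_venue_spec` are hypothetical, and converting only keys that are already present is an assumption.

```python
import copy


def to_double(value, default=0.0):
    """Convert a value to float, falling back to the default if that is not possible."""
    try:
        return float(value)
    except (TypeError, ValueError):
        return default


def apply_venue_spec(record):
    """Return a copy of the record with venue.lat and venue.lon converted to floats."""
    result = copy.deepcopy(record)
    venue = result.get("venue")
    if isinstance(venue, dict):
        for key in ("lat", "lon"):
            if key in venue:  # assumption: keys that are absent are left absent
                venue[key] = to_double(venue[key])
    return result


if __name__ == "__main__":
    # Coordinates arrive as strings because the earlier ReplaceText template quotes them.
    print(apply_venue_spec({"venue": {"lat": "40.7", "lon": "", "name": "Example Hall"}}))
```

Without this conversion the quoted coordinates would be strings rather than numbers, which is presumably why the transform sits in front of the record writer.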