This repository is the official implementation of Text2Video-Zero.

Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators

Levon Khachatryan, Andranik Movsisyan, Vahram Tadevosyan, Roberto Henschel, Zhangyang Wang, Shant Navasardyan, Humphrey Shi

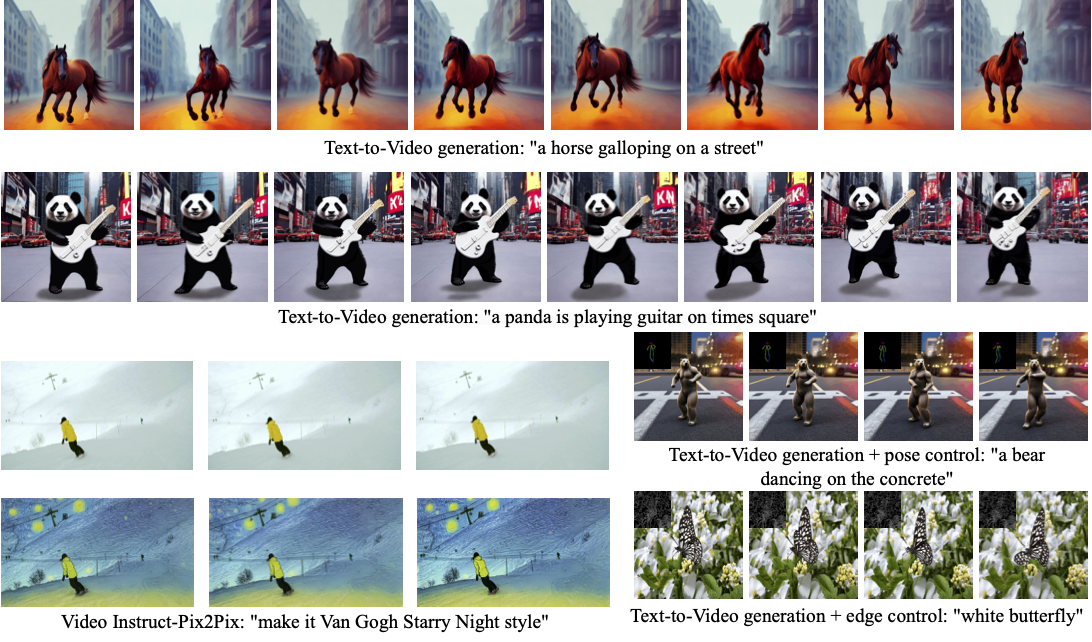

Our method Text2Video-Zero enables zero-shot video generation using (i) a textual prompt (see rows 1, 2), (ii) a prompt combined with guidance from poses or edges (see lower right), and (iii) Video Instruct-Pix2Pix, i.e., instruction-guided video editing (see lower left).

Results are temporally consistent and closely follow the guidance and textual prompts.

- [03/23/2023] Paper Text2Video-Zero released!
- [03/25/2023] The first version of our Hugging Face demo (containing zero-shot text-to-video generation and Video Instruct Pix2Pix) released!
- [03/27/2023] The full version of our Hugging Face demo released! Now also included: text and pose conditional video generation, text and canny-edge conditional video generation, and text, canny-edge and dreambooth conditional video generation.
- [03/28/2023] Code for all our generation methods released! We added a new low-memory setup. Minimum required GPU VRAM is currently 12 GB. It will be further reduced in the upcoming releases.

```shell
pip install -r requirements.txt
```

Download the pose model weights used in ControlNet:

```shell
wget -P annotator/ckpts https://huggingface.co/lllyasviel/ControlNet/resolve/main/annotator/ckpts/hand_pose_model.pth
wget -P annotator/ckpts https://huggingface.co/lllyasviel/ControlNet/resolve/main/annotator/ckpts/body_pose_model.pth
```

Integrate an SD1.4 Dreambooth model into ControlNet using this procedure. Load the model into `models/control_db/`. Dreambooth models can be obtained, for instance, from CIVITAI.
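Before running pose-guided generation, it can help to confirm that the two pose checkpoints downloaded above ended up in `annotator/ckpts`. The following is a minimal pre-flight sketch, assuming it is run from the project root; `missing_pose_weights` is a hypothetical helper, not part of the repository.

```python
from pathlib import Path
from typing import List

# File names fetched by the two wget commands above
POSE_WEIGHTS = ("hand_pose_model.pth", "body_pose_model.pth")


def missing_pose_weights(root: str = ".") -> List[str]:
    """Return the ControlNet pose checkpoints not yet present under <root>/annotator/ckpts."""
    ckpt_dir = Path(root) / "annotator" / "ckpts"
    return [name for name in POSE_WEIGHTS if not (ckpt_dir / name).is_file()]


missing = missing_pose_weights()
if missing:
    print("Missing pose weights:", ", ".join(missing))
else:
    print("All pose weights found.")
```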

We provide already prepared model files for anime (keyword `1girl`), arcane style (keyword `arcane style`), avatar (keyword `avatar style`) and GTA-5 style (keyword `gtav style`). To use them, download the model files from Google Drive and extract them into `models/control_db/`.
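Each prepared model responds to its keyword in the prompt. A minimal sketch for keeping prompts and models in sync, assuming the keyword can simply be prepended to the prompt; the dictionary keys and `add_style_keyword` are hypothetical names, not file names from the downloaded archive.

```python
# Trigger keywords of the prepared Dreambooth models, as listed above.
# The dictionary keys are our own labels for the styles (hypothetical).
STYLE_KEYWORDS = {
    "anime": "1girl",
    "arcane": "arcane style",
    "avatar": "avatar style",
    "gta-5": "gtav style",
}


def add_style_keyword(prompt: str, style: str) -> str:
    """Prepend the style's keyword unless the prompt already contains it (case-insensitive)."""
    keyword = STYLE_KEYWORDS[style]
    if keyword.lower() in prompt.lower():
        return prompt
    return f"{keyword}, {prompt}"


print(add_style_keyword("a man walking in the city", "gta-5"))
# gtav style, a man walking in the city
```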

To run inference, create an instance of the `Model` class:

```python
import torch
from model import Model

model = Model(device="cuda", dtype=torch.float16)
```

To directly call our text-to-video generator, run the following Python code, which stores the result in `tmp/text2video/A_horse_galloping_on_a_street.mp4`:

```python
from pathlib import Path

prompt = "A horse galloping on a street"
params = {"t0": 44, "t1": 47, "motion_field_strength_x": 12, "motion_field_strength_y": 12, "video_length": 8}

# The output file is named after the prompt, with spaces replaced by underscores
out_path, fps = Path(f"tmp/text2video/{prompt.replace(' ', '_')}.mp4"), 4
# Create the output directory if it does not exist yet
out_path.parent.mkdir(parents=True, exist_ok=True)

model.process_text2video(prompt, fps=fps, path=out_path.as_posix(), **params)
```

You can define the following hyperparameters:

- Motion field strength: `motion_field_strength_x` = $\delta_x$ and `motion_field_strength_y` = $\delta_y$ (see our paper, Sect. 3.3.1). Default: `motion_field_strength_x` = `motion_field_strength_y` = 12.
- $T$ and $T'$ (see our paper, Sect. 3.3.1): define the values `t0` and `t1` in the range {0,...,50}. Default: `t0=44`, `t1=47` (DDIM steps), corresponding to timesteps 881 and 941, respectively.
- Video length: define the number of frames `video_length` to be generated. Default: `video_length=8`.
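The stated correspondence between DDIM steps and timesteps can be reproduced with a one-line formula. This sketch assumes the default Stable Diffusion scheduler settings rather than reading them from this repository: 1000 training timesteps, 50 DDIM inference steps (so consecutive DDIM steps are 20 timesteps apart) and a timestep offset of 1. `ddim_step_to_timestep` is a hypothetical helper.

```python
# Assumed scheduler settings (Stable Diffusion defaults, not taken from this repository)
NUM_TRAIN_TIMESTEPS = 1000
NUM_DDIM_STEPS = 50
STEPS_OFFSET = 1


def ddim_step_to_timestep(step: int) -> int:
    """Map a DDIM step index such as t0 or t1 to its diffusion timestep."""
    step_ratio = NUM_TRAIN_TIMESTEPS // NUM_DDIM_STEPS  # 20 timesteps per DDIM step
    return step * step_ratio + STEPS_OFFSET


params = {"t0": 44, "t1": 47}
print({name: ddim_step_to_timestep(step) for name, step in params.items()})
# {'t0': 881, 't1': 941}
```

Under these assumptions, the defaults `t0=44` and `t1=47` land on the timesteps 881 and 941 given above.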

To directly call our text-to-video generator with pose control, run the following Python code:

```python
from pathlib import Path

prompt = 'an astronaut dancing in outer space'
motion_path = Path('__assets__/poses_skeleton_gifs/dance1_corr.mp4')
out_path = Path(f'./{prompt}.gif')

model.process_controlnet_pose(motion_path.as_posix(), prompt=prompt, save_path=out_path.as_posix())
```

To directly call our text-to-video generator with edge control, run the following Python code:

```python
from pathlib import Path

prompt = 'oil painting of a deer, a high-quality, detailed, and professional photo'
video_path = Path('__assets__/canny_videos_mp4/deer.mp4')
out_path = Path(f'./{prompt}.mp4')

model.process_controlnet_canny(video_path.as_posix(), prompt=prompt, save_path=out_path.as_posix())
```

You can define the following hyperparameters for Canny edge detection:

- Low threshold: define the value `low_threshold` in the range $(0, 255)$. Default: `low_threshold=100`.
- High threshold: define the value `high_threshold` in the range $(0, 255)$. Default: `high_threshold=200`. Make sure that `high_threshold` > `low_threshold`.

You can pass these hyperparameters as keyword arguments to `model.process_controlnet_canny`.
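A minimal sketch for checking the thresholds against the documented constraints before starting a long generation run. It assumes `process_controlnet_canny` accepts `low_threshold` and `high_threshold` as keyword arguments, as the hyperparameter names above suggest; `canny_kwargs` is a hypothetical helper.

```python
def canny_kwargs(low_threshold: int = 100, high_threshold: int = 200) -> dict:
    """Validate the Canny thresholds against the documented ranges and return them as kwargs."""
    for name, value in (("low_threshold", low_threshold), ("high_threshold", high_threshold)):
        if not 0 < value < 255:
            raise ValueError(f"{name} must lie in (0, 255), got {value}")
    if high_threshold <= low_threshold:
        raise ValueError("high_threshold must be greater than low_threshold")
    return {"low_threshold": low_threshold, "high_threshold": high_threshold}


print(canny_kwargs(50, 150))  # {'low_threshold': 50, 'high_threshold': 150}

# Usage sketch, reusing `model`, `prompt`, `video_path` and `out_path` from the edge-control example:
# model.process_controlnet_canny(video_path.as_posix(), prompt=prompt,
#                                save_path=out_path.as_posix(), **canny_kwargs(50, 150))
```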

Load a Dreambooth model, then proceed as described in Text-To-Video with Edge Guidance:

```python
from pathlib import Path

prompt = 'your prompt'
video_path = Path('path/to/your/video')
dreambooth_model_path = Path('path/to/your/dreambooth/model')
out_path = Path(f'./{prompt}.gif')

model.process_controlnet_canny_db(dreambooth_model_path.as_posix(), video_path.as_posix(), prompt=prompt, save_path=out_path.as_posix())
```

To perform pix2pix video editing, run the following Python code:

```python
from pathlib import Path

prompt = 'make it Van Gogh Starry Night'
video_path = Path('__assets__/pix2pix video/camel.mp4')
out_path = Path(f'./{prompt}.mp4')

model.process_pix2pix(video_path.as_posix(), prompt=prompt, save_path=out_path.as_posix())
```

Each of the interfaces introduced above can be run in a low-memory setup. In the minimal setup, a GPU with 12 GB of VRAM is sufficient.

To reduce memory usage, add `chunk_size=k` as an additional parameter when calling one of the inference APIs defined above. The integer value `k` must be in the range {2,...,`video_length`}. It defines the number of frames that are processed at once (without any loss in quality). The lower the value, the less memory is needed.
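To build intuition for the `chunk_size` trade-off, the following sketch splits frame indices into consecutive groups of at most `k` frames and enforces the documented range for `k`. It is an illustration only: `frame_chunks` is a hypothetical helper, and the repository's own chunking may group frames differently.

```python
def frame_chunks(video_length: int, chunk_size: int) -> list:
    """Split frame indices into consecutive groups of at most chunk_size frames.

    A smaller chunk_size means fewer frames per pass (less memory) but more passes.
    """
    if not 2 <= chunk_size <= video_length:
        raise ValueError(f"chunk_size must lie in {{2,...,{video_length}}}, got {chunk_size}")
    return [list(range(start, min(start + chunk_size, video_length)))
            for start in range(0, video_length, chunk_size)]


print(frame_chunks(8, 3))  # [[0, 1, 2], [3, 4, 5], [6, 7]]

# Usage sketch, reusing `model`, `prompt`, `fps`, `out_path` and `params` from the text-to-video example:
# model.process_text2video(prompt, fps=fps, path=out_path.as_posix(), chunk_size=2, **params)
```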

We plan to release a new version soon that further reduces memory usage.

To replicate the ablation study, add additional parameters when calling the above defined inference APIs.

- To deactivate cross-frame attention: add `use_cf_attn=False` to the parameter list.
- To deactivate enriching latent codes with motion dynamics: add `use_motion_field=False` to the parameter list.
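The ablation variants can be kept as small keyword-argument sets that are merged into the base hyperparameters. A minimal sketch: the variant labels and `ablation_kwargs` are hypothetical, the flags are the two listed above, and the commented usage calls only `process_text2video`, since the motion-field flag concerns the text-to-video latent codes.

```python
# Hypothetical labels for the ablation variants; the flags are the documented ones.
ABLATIONS = {
    "full": {},
    "no_cf_attn": {"use_cf_attn": False},
    "no_motion_field": {"use_motion_field": False},
    "no_cf_attn_no_motion_field": {"use_cf_attn": False, "use_motion_field": False},
}


def ablation_kwargs(base_params: dict, variant: str) -> dict:
    """Merge the base hyperparameters with the flags that switch off one or both components."""
    return {**base_params, **ABLATIONS[variant]}


# Usage sketch, reusing `model`, `prompt`, `fps` and `params` from the text-to-video example:
# from pathlib import Path
# Path("tmp/ablation").mkdir(parents=True, exist_ok=True)
# for variant in ABLATIONS:
#     model.process_text2video(prompt, fps=fps, path=f"tmp/ablation/{variant}.mp4",
#                              **ablation_kwargs(params, variant))
```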

Note: Adding `smooth_bg=True` activates background smoothing. However, our code does not include the salient object detector that it requires.

From the project root folder, run this shell command:

```shell
python app.py
```

Then access the app locally with a browser.

Example results (videos not included here); each cell gives the prompt or editing instruction used:

| "A bear dancing on the concrete" | "An alien dancing under a flying saucer" | "A panda dancing in Antarctica" | "An astronaut dancing in the outer space" |
|---|---|---|---|

| "White butterfly" | "Beautiful girl" | "A jellyfish" | "beautiful girl halloween style" |
|---|---|---|---|

| "Wild fox is walking" | "Oil painting of a beautiful girl close-up" | "A santa claus" | "A deer" |
|---|---|---|---|

| "anime style" | "arcane style" | "gta-5 man" | "avatar style" |
|---|---|---|---|

| "Replace man with chimpanze" | "Make it Van Gogh Starry Night style" | "Make it Picasso style" |
|---|---|---|
| "Make it Expressionism style" | "Make it night" | "Make it autumn" |

Our code is published under the CreativeML Open RAIL-M license. The license provided in this repository applies to all additions and contributions we make to the original Stable Diffusion code. The original Stable Diffusion code is under the CreativeML Open RAIL-M license, which can be found here.

If you use our work in your research, please cite our publication:

```bibtex
@article{text2video-zero,
  title={Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators},
  author={Khachatryan, Levon and Movsisyan, Andranik and Tadevosyan, Vahram and Henschel, Roberto and Wang, Zhangyang and Navasardyan, Shant and Shi, Humphrey},
  journal={arXiv preprint arXiv:2303.13439},
  year={2023}
}
```