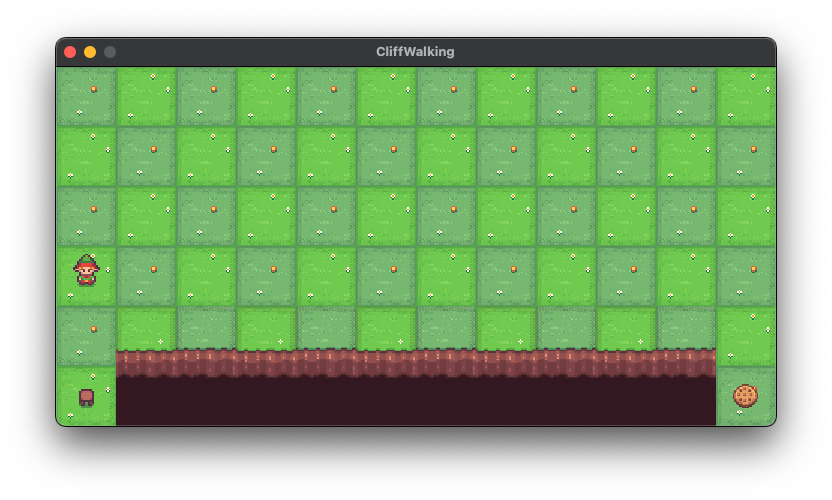

Welcome to the official repository for General Tree Evaluation for AlphaZero, a novel approach to enhancing model-based deep reinforcement learning. This repository contains the code and resources developed as part of the research that extends and refines the AlphaZero algorithm, particularly focusing on decoupling tree construction from action policies.

These plots demonstrate the empirical benefits of our proposed policies in classical Gym environments, especially under constrained simulation budgets.
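The central idea, decoupling how the search tree is built from the policy used to act on it, can be illustrated with a minimal Python sketch. This is a hypothetical illustration, not code from this repository. The `Node` class and the functions `puct_select`, `visit_count_policy`, and `greedy_q_policy` are names invented here. The tree is grown with the standard AlphaZero PUCT selection rule. The action distribution at the root is computed by a separate, swappable evaluation function. The greedy-Q variant is only an example of an alternative evaluation and is not necessarily one of the policies proposed in this project.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical minimal search-tree node (not the repository's class)."""
    prior: float
    visits: int = 0
    value_sum: float = 0.0
    children: dict = field(default_factory=dict)  # action -> Node

    def q(self) -> float:
        # Mean backed-up value; 0.0 for unvisited nodes.
        return self.value_sum / self.visits if self.visits else 0.0


def puct_select(node: Node, c: float = 1.0):
    """Tree-construction policy (illustrative): AlphaZero-style PUCT picks the child to descend into."""
    sqrt_n = math.sqrt(node.visits)

    def score(child: Node) -> float:
        return child.q() + c * child.prior * sqrt_n / (1 + child.visits)

    return max(node.children, key=lambda a: score(node.children[a]))


def visit_count_policy(node: Node) -> dict:
    """Standard AlphaZero evaluation: probabilities proportional to child visit counts.
    Assumes at least one child has been visited."""
    total = sum(ch.visits for ch in node.children.values())
    return {a: ch.visits / total for a, ch in node.children.items()}


def greedy_q_policy(node: Node) -> dict:
    """Hypothetical alternative evaluation: all mass on the visited child with the highest Q.
    Assumes at least one child has been visited."""
    visited = {a: ch for a, ch in node.children.items() if ch.visits > 0}
    best = max(visited, key=lambda a: visited[a].q())
    return {a: (1.0 if a == best else 0.0) for a in node.children}


# The same (hand-built) tree, evaluated by two different policies.
root = Node(prior=1.0, visits=10)
root.children = {
    0: Node(prior=0.5, visits=6, value_sum=3.0),  # Q = 0.5
    1: Node(prior=0.3, visits=3, value_sum=2.4),  # Q = 0.8
    2: Node(prior=0.2, visits=1, value_sum=0.1),  # Q = 0.1
}
print(visit_count_policy(root))  # {0: 0.6, 1: 0.3, 2: 0.1}
print(greedy_q_policy(root))     # {0: 0.0, 1: 1.0, 2: 0.0}
```

Because the selection rule and the evaluation functions share only the node statistics, either side can be replaced without touching the other. Given the same tree, the two evaluation functions return different action distributions.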

The repository also includes:

- Logging to TensorBoard
- Logging to Weights & Biases
- Customizable environment configurations
- ... and more!

For a quick start, use the notebook `kaggle.ipynb`, which should run on Kaggle or Google Colab with minimal setup. To run the code locally, follow the instructions below.

Start by cloning the repository:

```bash
git clone https://github.com/albinjal/GeneralAlphaZero.git
cd GeneralAlphaZero
```

You can set up the environment using either pip or Conda. Choose one of the following methods to install the dependencies.

To use pip:

- Create a virtual environment and activate it:

  ```bash
  python3 -m venv venv
  source venv/bin/activate  # On Windows, use venv\Scripts\activate
  ```

- Install the dependencies:

  ```bash
  pip install -r requirements.txt
  ```

To use Conda, create and activate the Conda environment:

```bash
conda env create -f environment.yml
conda activate az10
```

TODO

Contributions are welcome! Please open an issue or submit a pull request for any improvements, bug fixes, or new features.

This project is licensed under the MIT License. See the LICENSE file for details.

Feel free to explore and modify the code, and don't hesitate to reach out if you have any questions or need further assistance. Happy coding!