Serving opennmt_tf framework

julsal opened this issue

Hi all, thanks for sharing this nice piece of work.

I'd like to serve the opennmt_tf framework with Docker, exposing a web service as described in the main README (POST /translate).

As an example, I downloaded the pretrained model 'averaged-ende-export500k'. As far as I understand, after building the Docker image, I need to run it as:

docker run -p 5000:5000 -v /root/models:/path/to/averaged-ende-export500k opennmt_tf:latest serve --host 0.0.0.0 --port 5000 --config config.json

I have some questions about the configuration file.

{
  "source": "en",
  "target": "de",
  "model": "1539080952", // (mandatory for trans, serve) Full model name as uuid64
  "imageTag": "string", // (mandatory) Full URL of the image: url/image:tag.
  "tokenization": {
    // Vocabularies and tokenization options (from OpenNMT/Tokenizer).
    "source": {
      "vocabulary": "string"
      // other source specific tokenization options
    },
    "target": {
      "vocabulary": "string"
      // other target specific tokenization options
    }
  }
}

I removed everything marked as optional. I guess the uuid64 required in "model" is the name of the directory inside averaged-ende-export500k (here 1539080952).

Is everything correct up to this point?

What should I put in "imageTag" and "tokenization"?

EDIT: The steps below have been updated for models exported with OpenNMT-tf 2.x.

Hi,

Here are the steps to serve this pretrained model.

- Download and adapt the model directory:

wget https://s3.amazonaws.com/opennmt-models/averaged-ende-export500k-v2.tar.gz
tar xf averaged-ende-export500k-v2.tar.gz
mkdir ende
mv averaged-ende-export500k-v2 ende/saved_model

- Create config.json in the ende directory:

{
  "source": "en",
  "target": "de",
  "model": "ende",
  "modelType": "release",
  "tokenization": {
    "source": {
      "mode": "none",
      "sp_model_path": "${MODEL_DIR}/saved_model/assets.extra/wmtende.model",
      "vocabulary": "${MODEL_DIR}/saved_model/assets/wmtende.vocab"
    },
    "target": {
      "mode": "none",
      "sp_model_path": "${MODEL_DIR}/saved_model/assets.extra/wmtende.model",
      "vocabulary": "${MODEL_DIR}/saved_model/assets/wmtende.vocab"
    }
  }
}

- Run the server:

docker run -p 4000:4000 -v $PWD:/root/models nmtwizard/opennmt-tf --model ende serve --host 0.0.0.0 --port 4000

- Test the server:

$ curl -X POST http://localhost:4000/translate -d '{"src":[{"text": "Hello world!"}]}'

{"tgt": [[{"text": "Hallo Welt!", "score": -0.27484220266342163}, {"text": "Hallo!", "score": -1.6019006967544556}, {"text": "Hallo, die Welt!", "score": -0.8488588333129883}, {"text": "Hallo Welten!", "score": -1.1288902759552002}]]}

Hope this helps.
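The config.json step can also be scripted. A minimal sketch, assuming step 1 has already created ende/saved_model in the current directory; write_ende_config is a hypothetical helper name. The quoted heredoc delimiter (<<'EOF') matters: it stops the local shell from expanding ${MODEL_DIR}, so the placeholder reaches the file literally, as in the config shown above.

```shell
#!/bin/sh
# Hypothetical helper: writes ende/config.json with the content from step 2.
# The quoted 'EOF' delimiter disables parameter expansion inside the heredoc,
# so ${MODEL_DIR} is written literally instead of being expanded to "".
write_ende_config() {
  if [ ! -d ende/saved_model ]; then
    echo "error: ende/saved_model not found in $PWD (run step 1 first)" >&2
    return 1
  fi
  cat > ende/config.json <<'EOF'
{
  "source": "en",
  "target": "de",
  "model": "ende",
  "modelType": "release",
  "tokenization": {
    "source": {
      "mode": "none",
      "sp_model_path": "${MODEL_DIR}/saved_model/assets.extra/wmtende.model",
      "vocabulary": "${MODEL_DIR}/saved_model/assets/wmtende.vocab"
    },
    "target": {
      "mode": "none",
      "sp_model_path": "${MODEL_DIR}/saved_model/assets.extra/wmtende.model",
      "vocabulary": "${MODEL_DIR}/saved_model/assets/wmtende.vocab"
    }
  }
}
EOF
}
```

Running write_ende_config from the directory that contains ende/ and then grep -c 'MODEL_DIR' ende/config.json should report 4 lines. With an unquoted EOF delimiter, those four paths would silently become /saved_model/... instead.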

Thank you very much, @guillaumekln. It worked perfectly!

@guillaumekln Can I add an inference configuration to config.json, like this?

"options": {
  "config": {
    "infer": {
      "n_best": 3,
      "with_scores": true,
      "with_alignments": "hard"
    },
    "score": {
      "with_alignments": "hard"
    }
  }
}

I have tried, but it is not working.

Do you have any idea on that?

Thank you!

Hello @guillaumekln,

I'm getting these errors. What am I doing wrong?

Did you follow step 1 of the instructions above? Make sure the directory averaged-ende-export500k exists in $PWD.

@guillaumekln

I followed step 1 inside the root/models/ folder.

(I had to change mv averaged-ende-export500k/1539080952/ averaged-ende-export500k/1

to mv averaged-ende-export500k/1554540232/ averaged-ende-export500k/1)

I followed the rest as is; on step 3 I'm getting the same error.

I'm not sure what you mean by:

Make sure the directory averaged-ende-export500k exists in $PWD.

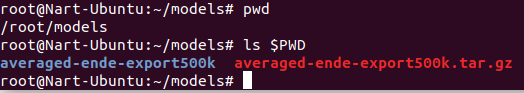
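The renaming described in the comment above depends on the timestamp inside the downloaded archive (1539080952 in the original instructions, 1554540232 in the file actually downloaded). A sketch that avoids hard-coding it, assuming the extracted averaged-ende-export500k directory contains exactly one directory whose name starts with a digit; rename_export_to_1 is a hypothetical helper, not part of any OpenNMT tooling.

```shell
#!/bin/sh
# Hypothetical helper: renames the single timestamped export directory
# (e.g. averaged-ende-export500k/1554540232) to averaged-ende-export500k/1.
rename_export_to_1() {
  model_dir=${1:-averaged-ende-export500k}
  # Expand the glob into this function's positional parameters;
  # the trailing "/" restricts matches to directories.
  set -- "$model_dir"/[0-9]*/
  if [ "$#" -ne 1 ] || [ ! -d "$1" ]; then
    echo "error: expected exactly one numeric export directory in $model_dir" >&2
    return 1
  fi
  if [ "${1%/}" = "$model_dir/1" ]; then
    return 0  # already renamed, nothing to do
  fi
  mv "${1%/}" "$model_dir/1"
}
```

If the glob matches nothing, the pattern stays unexpanded and fails the -d test, so the helper reports an error instead of running mv on a literal pattern.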

When you run ls $PWD, does the directory averaged-ende-export500k exist?

@guillaumekln

When I run it in the terminal inside root/models/, yes, it exists.

Can you run the command from this directory?

@guillaumekln Can you be clearer? I can hardly understand you!

You should run the Docker command when you are in ~/models. Please note that the instructions above do not indicate to create a models directory or change directory between steps.
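The reason is that -v $PWD:/root/models mounts whatever directory the command is run from, so the model directory has to be a direct child of it. A minimal pre-flight sketch, assuming the ende layout from the updated steps (a config.json file and a saved_model directory); check_model_dir is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical pre-flight check: "docker run -v $PWD:/root/models" mounts the
# current directory, so the model directory must exist right here.
check_model_dir() {
  model=${1:-ende}
  for path in "$model" "$model/config.json" "$model/saved_model"; do
    if [ ! -e "$path" ]; then
      echo "error: $PWD/$path not found; cd to the directory that contains $model" >&2
      return 1
    fi
  done
}

# Usage, from the directory that contains ende/:
# check_model_dir ende && docker run -p 4000:4000 -v "$PWD":/root/models \
#   nmtwizard/opennmt-tf --model ende serve --host 0.0.0.0 --port 4000
```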

@guillaumekln It's working!

I'm running the Docker command in ~/models.

I did create the root/models/ directory and executed step 1 in there.