Instead of relying on complex, resource-demanding deep learning techniques, which may be considered state-of-the-art solutions, we propose combining feature extractors with an ensemble of lightweight models obtained by the algorithmic kernel of the AutoML framework FEDOT.

The framework can be applied to the following tasks:

- Classification (time series or image)

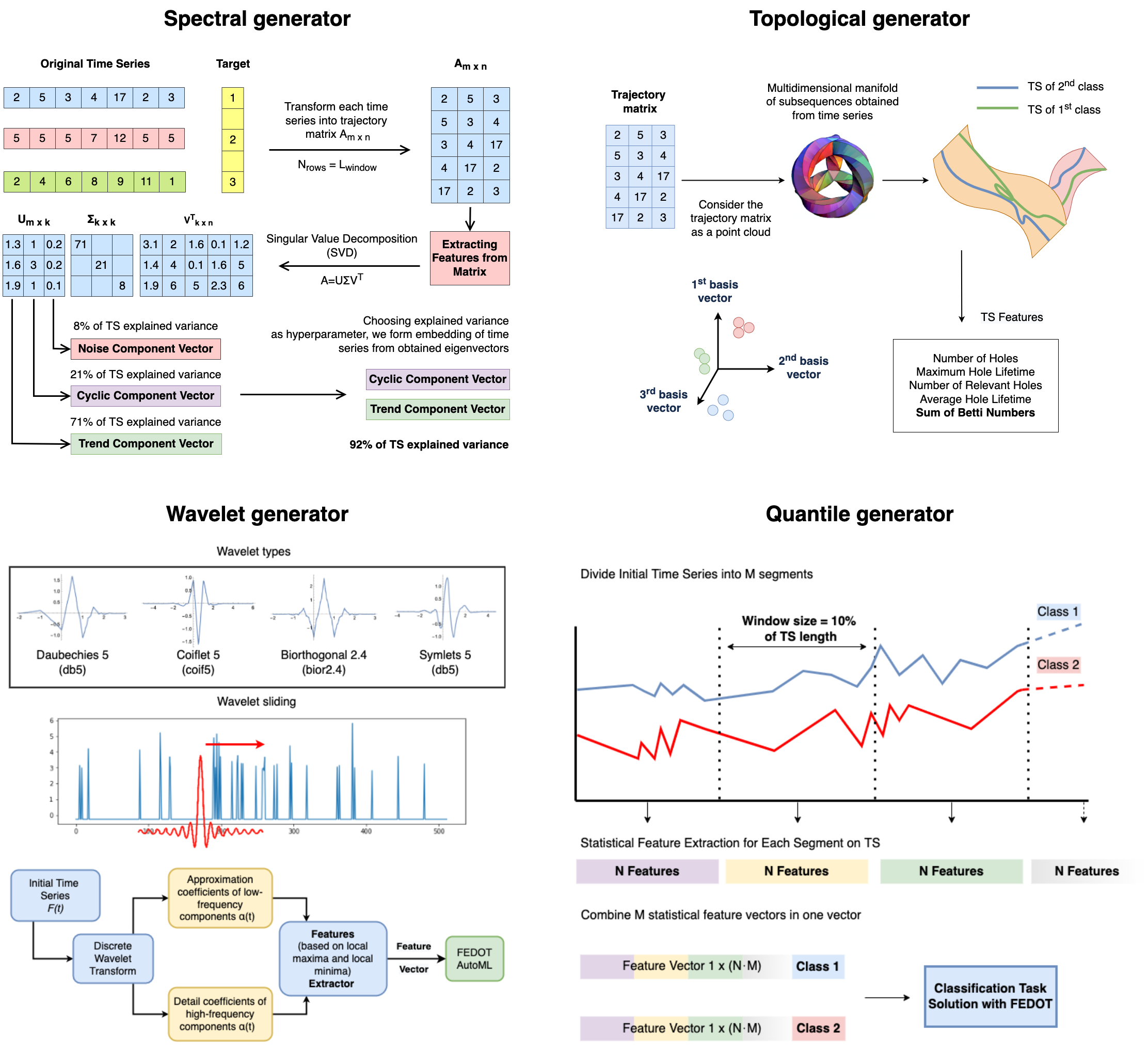

For this purpose we introduce four feature generators:

After feature generation, the evolutionary algorithm of FEDOT is applied to find the best model for the classification task.

- Anomaly detection (time series or image)

--work in progress--

- Change point detection (only time series)

--work in progress--

- Object detection (only image)

--work in progress--

FEDOT.Industrial provides a high-level API that allows you to use its capabilities in a simple way.

To run time series classification, set the experiment configuration via a dictionary, create an instance of the `Industrial` class, and call its `run_experiment` method:

    from core.api.API import Industrial

    if __name__ == '__main__':
        # Experiment configuration passed as a plain dictionary
        config = {'feature_generator': ['spectral', 'wavelet'],
                  'datasets_list': ['UMD', 'Lightning7'],
                  'use_cache': True,
                  'error_correction': False,
                  'launches': 3,
                  'timeout': 15}

        # Create the API object and run the experiment
        ExperimentHelper = Industrial()
        ExperimentHelper.run_experiment(config)

The config contains the following parameters:

- `feature_generator` - list of feature generators to use in the experiment
- `use_cache` - whether to use the cache
- `datasets_list` - list of datasets to use in the experiment
- `launches` - number of launches for each dataset
- `error_correction` - flag that enables the error correction model in the experiment
- `n_ecm_cycles` - number of cycles for the error correction model
- `timeout` - the maximum amount of time for classification pipeline composition
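Because the configuration is a plain dictionary, a misspelled key can easily go unnoticed. A minimal sketch of a pre-flight check follows. `check_config` and `KNOWN_KEYS` are hypothetical helpers written for illustration and are not part of FEDOT.Industrial; the key names are taken from the parameter list and the example config above, using the `feature_generator` spelling from the example.

```python
# Hypothetical helper, not part of FEDOT.Industrial: compares a config
# dictionary with the parameters documented in this README.
KNOWN_KEYS = {
    'feature_generator', 'datasets_list', 'use_cache',
    'error_correction', 'n_ecm_cycles', 'launches', 'timeout',
}


def check_config(config: dict) -> list:
    """Return a sorted list of keys that are not documented parameters."""
    return sorted(set(config) - KNOWN_KEYS)


config = {'feature_generator': ['spectral', 'wavelet'],
          'datasets_list': ['UMD', 'Lightning7'],
          'use_cache': True,
          'error_correction': False,
          'launches': 3,
          'timeout': 15}

unknown = check_config(config)
if unknown:
    raise ValueError(f'Unknown config keys: {unknown}')
```

Running such a check before `run_experiment` is optional; it only catches typos in key names, not invalid values.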

Datasets for classification should be stored in the `data` directory and divided into train and test sets with the `.tsv` extension. The name of the folder in the `data` directory should match the name of the dataset you want to use in the experiment. If the data is not found in the local folder, the `DataLoader` class will try to load it from the UCR archive.
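The expected layout can be checked before an experiment is started. The sketch below assumes the UCR naming convention `<name>_TRAIN.tsv` and `<name>_TEST.tsv` inside `data/<name>/`. This README only states that the folder name equals the dataset name and that the files use the `.tsv` extension, so the exact file names and the helpers `dataset_paths` and `is_available_locally` are assumptions.

```python
from pathlib import Path


def dataset_paths(name: str, data_dir: str = 'data'):
    """Build the assumed train/test paths for a dataset (hypothetical helper)."""
    folder = Path(data_dir) / name
    # Assumption: UCR-style file names; adjust if your files are named differently.
    return folder / f'{name}_TRAIN.tsv', folder / f'{name}_TEST.tsv'


def is_available_locally(name: str, data_dir: str = 'data') -> bool:
    """True if both files exist, so no download from the UCR archive is needed."""
    train, test = dataset_paths(name, data_dir)
    return train.is_file() and test.is_file()


for dataset in ['UMD', 'Lightning7']:
    status = 'local' if is_available_locally(dataset) else 'missing locally'
    print(f'{dataset}: {status}')
```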

The feature generators that can be specified in the configuration are `window_quantile`, `quantile`, `spectral_window`, `spectral`, `wavelet`, `recurrence` and `topological`.

Several feature generators can also be combined into an ensemble. To do so, add the following instruction to the list of feature generators in the `feature_generator` field of the config:

    'ensemble: topological wavelet window_quantile quantile spectral spectral_window'

The results of the experiment, which include generated features, predicted classes, metrics and pipelines, are stored in the `results_of_experiments/{feature_generator name}` directory. Logs of the experiment are stored in the `log` directory.
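The ensemble instruction described above is a single string, so it can be assembled from a list of generator names. The sketch below is illustrative only: `ensemble_instruction` is a hypothetical helper, and placing the string as one element of the `feature_generator` list is an assumption based on the description above.

```python
def ensemble_instruction(generators):
    """Join generator names into the 'ensemble: ...' instruction string."""
    return 'ensemble: ' + ' '.join(generators)


config = {
    # Assumption: the instruction is passed as one element of the list.
    'feature_generator': [ensemble_instruction(['topological', 'wavelet', 'quantile'])],
    'datasets_list': ['UMD'],
    'use_cache': True,
    'error_correction': False,
    'launches': 3,
    'timeout': 15,
}
print(config['feature_generator'])
```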

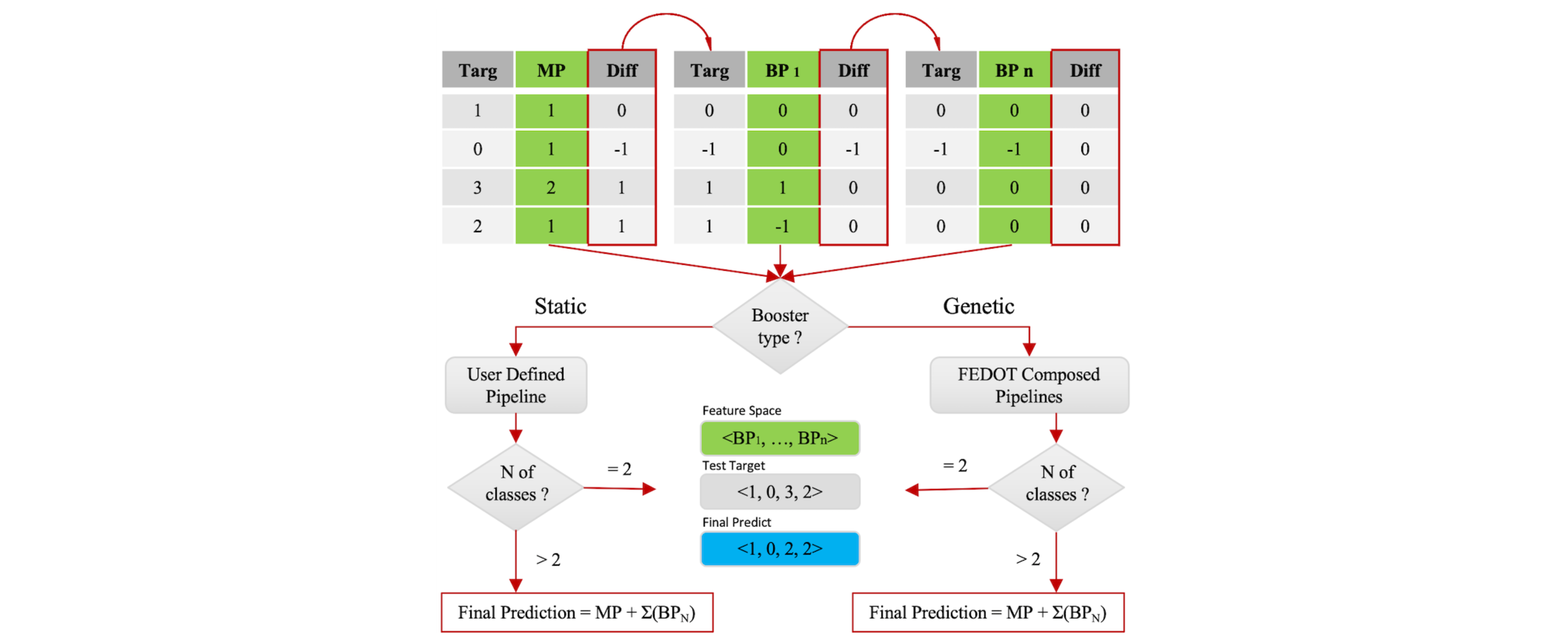

It is up to you to decide whether to use the error correction model. To apply it, set the `error_correction` flag in the config to `True`. By default the number of cycles is `n_ecm_cycles=3`, but it can be adjusted through the more advanced YAML config file used for experiment management. With error correction enabled, the error correction model is trained on the produced error after each launch of the FEDOT algorithmic kernel.

The error correction model is a three-stage linear regression model: at each subsequent stage the model learns the error of the prediction. The type of ensemble model used for error correction depends on the number of classes:

- For binary classification the ensemble is also a linear regression, trained on the predictions of the correction stages.
- For multiclass classification the ensemble is a sum of the previous predictions.
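The staged idea described above can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the FEDOT.Industrial implementation: it uses a single input feature, a hand-written least squares fit (`fit_line`), and the sum ensemble from the multiclass case; all function names and data are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least squares for y = a * x + b with a single feature."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    cov_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    a = cov_xy / var_x if var_x else 0.0
    return a, mean_y - a * mean_x


def error_correction(x, target, base_pred, n_cycles=3):
    """Fit n_cycles linear stages, each on the error left by the previous ones."""
    current = list(base_pred)
    stages = []
    for _ in range(n_cycles):
        residual = [t - c for t, c in zip(target, current)]
        a, b = fit_line(x, residual)
        stages.append((a, b))
        # Sum ensemble: add this stage's correction to the running prediction.
        current = [c + a * xi + b for c, xi in zip(current, x)]
    return stages, current


def mse(y, p):
    """Mean squared error between targets y and predictions p."""
    return sum((yi - pi) ** 2 for yi, pi in zip(y, p)) / len(y)


x = [0.0, 1.0, 2.0, 3.0, 4.0]
target = [0.1, 0.9, 2.2, 2.9, 4.1]
base_pred = [0.5 * xi for xi in x]  # deliberately biased base model

stages, corrected = error_correction(x, target, base_pred)
print(f'MSE before: {mse(target, base_pred):.4f}, after: {mse(target, corrected):.4f}')
```

In this toy setting, plain least squares on the same input removes the linear part of the error at the first stage, so the later stages add almost nothing; the binary case would replace the running sum with a linear regression fitted on the stage predictions.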

To speed up the experiment, you can cache the features produced by the feature generators. If the boolean `use_cache` flag in the config is `True`, every feature space generated during the experiment is cached in the corresponding folder. To do so, a hash is computed from the arguments of the `get_features` function and the generator attributes, and the resulting feature space is dumped with the `pickle` library. The next time the same feature space is requested, the hash is calculated again and the feature space is loaded from the cache, which is much faster than generating it from scratch.
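The caching scheme described above can be sketched with the standard library alone. The code below is an illustration under assumptions, not the project's implementation: `cache_key`, `cached_features` and `toy_generator` are hypothetical names, the key is a SHA-256 digest of the pickled arguments, and the cache is a temporary folder.

```python
import hashlib
import pickle
import tempfile
from pathlib import Path


def cache_key(generator_name: str, params: dict, series: list) -> str:
    """Hash the generator attributes and the input data into a file-name-safe key."""
    payload = pickle.dumps((generator_name, sorted(params.items()), series))
    return hashlib.sha256(payload).hexdigest()


def cached_features(cache_dir: Path, generator_name, params, series, compute):
    """Load features from the cache if present, otherwise compute and dump them."""
    path = cache_dir / f'{cache_key(generator_name, params, series)}.pkl'
    if path.is_file():
        with path.open('rb') as f:
            return pickle.load(f)
    features = compute(series, **params)
    with path.open('wb') as f:
        pickle.dump(features, f)
    return features


calls = []  # records how many times the generator actually runs


def toy_generator(series, window):
    """Stand-in for a real feature generator: mean of each non-overlapping window."""
    calls.append(window)
    return [sum(series[i:i + window]) / window
            for i in range(0, len(series) - window + 1, window)]


with tempfile.TemporaryDirectory() as tmp:
    cache_dir = Path(tmp)
    series = [1.0, 2.0, 3.0, 4.0]
    first = cached_features(cache_dir, 'toy', {'window': 2}, series, toy_generator)
    second = cached_features(cache_dir, 'toy', {'window': 2}, series, toy_generator)
```

The second call finds the pickled file under the same hash and skips the computation. Changing either the data or a generator parameter produces a different key and therefore a fresh computation.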


A comprehensive tutorial will be available soon.

Our publication plan is to publish papers on the framework's usability and its applications. The following articles are currently under review:

- [1] AUTOMATED MACHINE LEARNING APPROACH FOR TIME SERIES CLASSIFICATION PIPELINES USING EVOLUTIONARY OPTIMISATION by Ilya E. Revin, Vadim A. Potemkin, Nikita R. Balabanov, and Nikolay O. Nikitin
- [2] AUTOMATED ROCKBURST FORECASTING USING COMPOSITE MODELLING FOR SEISMIC SENSORS DATA by Ilya E. Revin, Vadim A. Potemkin, and Nikolay O. Nikitin

Stay tuned!

The latest stable release of FEDOT.Industrial is on the main branch.

The repository includes the following directories:

- Package `core` contains the main classes and scripts
- Package `cases` includes several how-to-use cases where you can start to discover how the framework works
- All unit and integration tests can be found in the `test` directory
- The sources of the documentation are in the `docs` directory

- Implement feature space caching for feature generators (DONE)
- Development of model containerization module
- Development of meta-knowledge storage for data obtained from the experiments
- Research on time series clustering

Comprehensive documentation is available at readthedocs.

The study is supported by the Research Center Strong Artificial Intelligence in Industry of ITMO University (Saint Petersburg, Russia).

A list of citations for the project will be provided here as soon as the articles are published.

For now, you can cite this repository:

    @online{fedot_industrial,
      author = {Revin, Ilya and Potemkin, Vadim and Balabanov, Nikita and Nikitin, Nikolay},
      title = {FEDOT.Industrial - Framework for automated time series analysis},
      year = 2022,
      url = {https://github.com/ITMO-NSS-team/Fedot.Industrial},
      urldate = {2022-05-05}
    }