We provide the training and testing code for SAN, implemented in PyTorch.

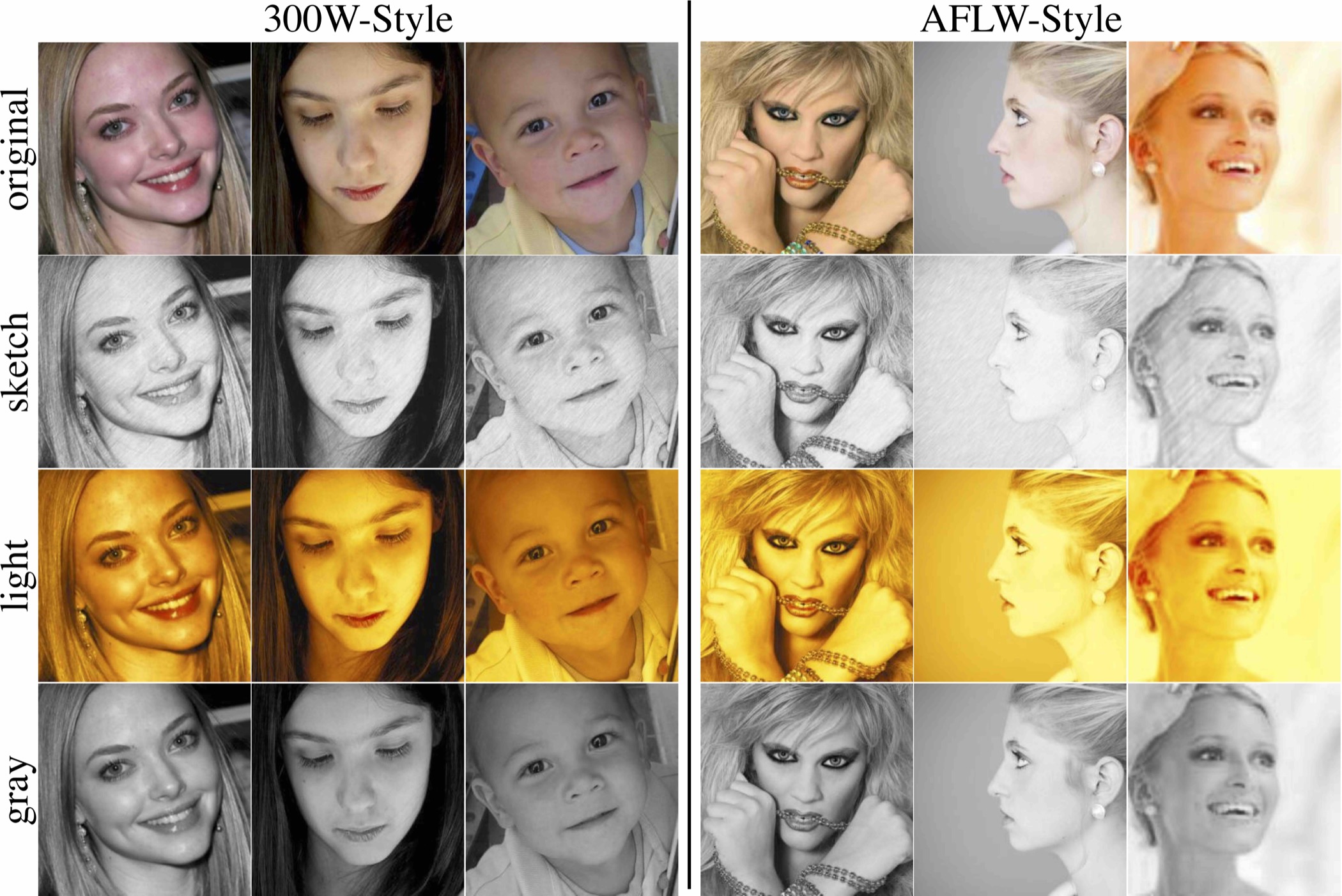

- Download 300W-Style and AFLW-Style from Google Drive, and extract the downloaded files into `~/datasets/`.
- In the 300W-Style and AFLW-Style directories, the `Original` sub-directory contains the original images from 300-W and AFLW.
- The sketch, light, and gray style images are used to analyze the image style variance in facial landmark detection.
- For simplicity, we changed some file names, for example by removing spaces or unifying file extensions.

`300W-Style.tgz` should be extracted into `~/datasets/300W-Style` by typing `tar xzvf 300W-Style.tgz; mv 300W-Convert 300W-Style`.
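For readers who prefer to script this step, the extraction and rename can be sketched with the Python standard library. This is a minimal sketch, assuming the archive unpacks to a top-level `300W-Convert` directory as the `mv` command implies; `extract_and_rename` is a hypothetical helper name and is not part of this repository.

```python
import shutil
import tarfile
from pathlib import Path


def extract_and_rename(archive, dest_root, extracted_name, final_name):
    """Extract a .tgz archive into dest_root and rename its top-level folder.

    Roughly mirrors `tar xzvf <archive>; mv <extracted_name> <final_name>`.
    All argument names are illustrative assumptions.
    """
    dest_root = Path(dest_root).expanduser()
    dest_root.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive, "r:gz") as tar:
        tar.extractall(dest_root)
    source = dest_root / extracted_name
    target = dest_root / final_name
    if not source.is_dir():
        raise FileNotFoundError(f"expected {source} after extraction")
    if target.exists():
        raise FileExistsError(f"{target} already exists")
    shutil.move(str(source), str(target))
    return target
```

Under these assumptions, `extract_and_rename("300W-Style.tgz", "~/datasets", "300W-Convert", "300W-Style")` would correspond to the 300W command, and the same helper could be called with the AFLW names.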

It has the following structure:

- 300W-Gray
  - 300W ; afw ; helen ; ibug ; lfpw
- 300W-Light
  - 300W ; afw ; helen ; ibug ; lfpw
- 300W-Sketch
  - 300W ; afw ; helen ; ibug ; lfpw
- 300W-Original
  - 300W ; afw ; helen ; ibug ; lfpw
- Bounding_Boxes
  - *.mat
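Before running the later steps, it can help to confirm that the extracted folder matches this layout. The following is a minimal sketch, assuming the listing is nested (each style folder contains the five subset folders, and `Bounding_Boxes` sits beside them); `missing_300w_dirs` is a hypothetical helper, not a script shipped with this project.

```python
from pathlib import Path

# Names taken from the listing above; the nesting is an assumption.
STYLES = ("Gray", "Light", "Sketch", "Original")
SUBSETS = ("300W", "afw", "helen", "ibug", "lfpw")


def missing_300w_dirs(root):
    """Return the expected sub-directories that are absent under root."""
    root = Path(root).expanduser()
    expected = [root / f"300W-{style}" / subset
                for style in STYLES for subset in SUBSETS]
    expected.append(root / "Bounding_Boxes")
    return [str(p.relative_to(root)) for p in expected if not p.is_dir()]
```

An empty list from `missing_300w_dirs("~/datasets/300W-Style")` would suggest the extraction produced the expected folders; it does not check the image files themselves.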

`AFLW-Style.tgz` should be extracted into `~/datasets/AFLW-Style` by typing `tar xzvf AFLW-Style.tgz; mv AFLW-Convert AFLW-Style`.

It has the following structure (`annotation` is generated by `python aflw_from_mat.py`):

- aflw-Gray
  - 0 2 3
- aflw-Light
  - 0 2 3
- aflw-Sketch
  - 0 2 3
- aflw-Original
  - 0 2 3
- annotation
  - 0 2 3

`cd cache_data`

`python aflw_from_mat.py`

`python generate_300W.py`

The generated list files will be saved into `./cache_data/lists/300W` and `./cache_data/lists/AFLW`.

`python crop_pic.py`

The above command pre-crops the face images and saves them into `./cache_data/cache/300W` and `./cache_data/cache/AFLW`.

- Step-1 : cluster images into different groups, for example `sh scripts/300W/300W_Cluster.sh 0,1 GTB 3`.
- Step-2 : use `sh scripts/300W/300W_CYCLE_128.sh 0,1 GTB` or `sh scripts/300W/300W_CYCLE_128.sh 0,1 DET` to train SAN on 300-W. `GTB` means using the ground truth face bounding box, and `DET` means using the face detection results from a pre-trained detector (these results are provided by the official 300-W website).

- Step-1 : cluster images into different groups, for example `sh scripts/AFLW/AFLW_Cluster.sh 0,1 GTB 3`.
- Step-2 : use `sh scripts/AFLW/AFLW_CYCLE_128.FULL.sh` or `sh scripts/AFLW/AFLW_CYCLE_128.FRONT.sh` to train SAN on AFLW.

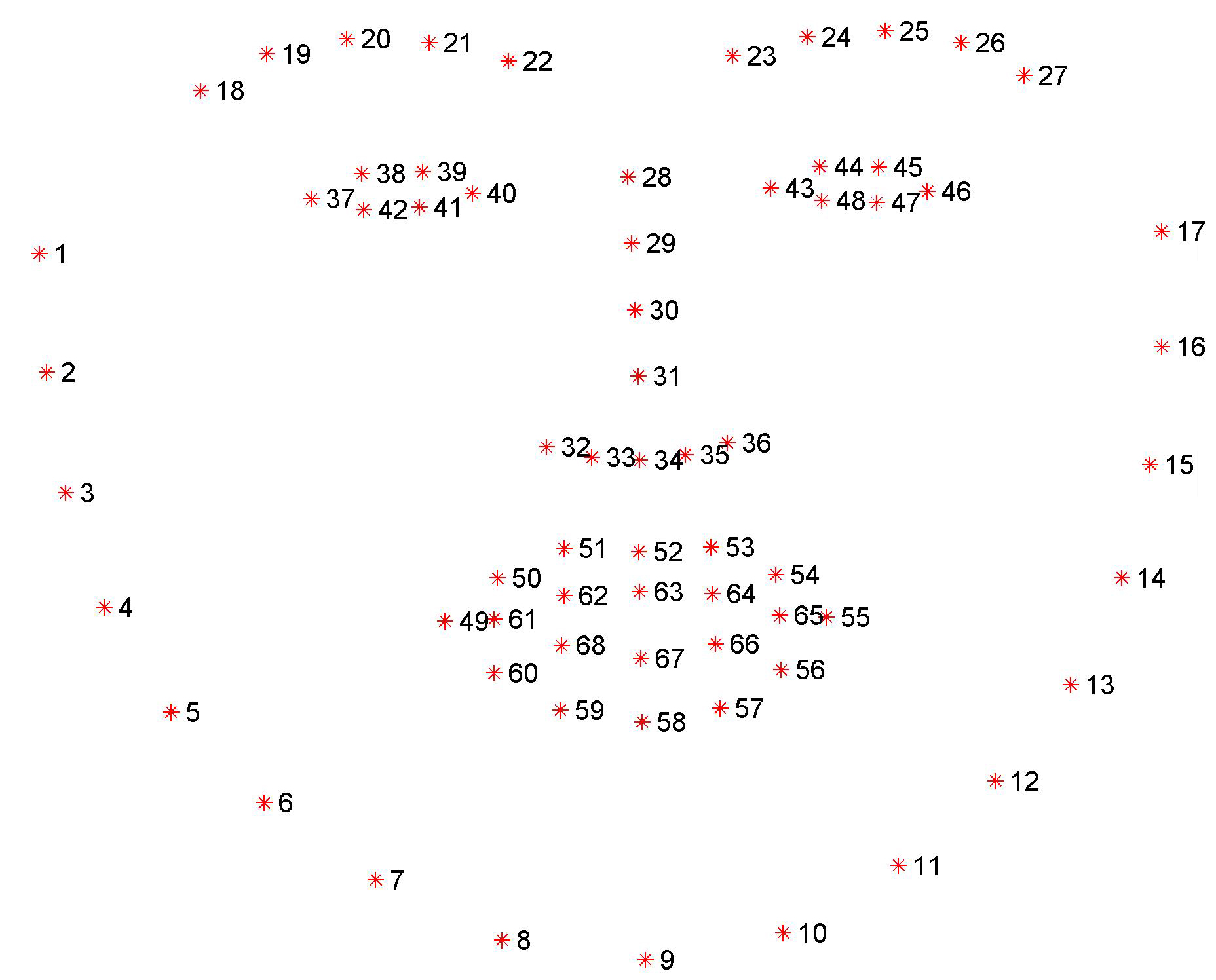

You can download a pre-trained model, trained on 300-W, from the snapshots directory of here. Put it in `snapshots` and use the following command to evaluate a single image. The command prints the location of each landmark.

`CUDA_VISIBLE_DEVICES=1 python san_eval.py --image ./cache_data/cache/test_1.jpg --model ./snapshots/SAN_300W_GTB_itn_cpm_3_50_sigma4_128x128x8/checkpoint_49.pth.tar --face 819.27 432.15 971.70 575.87`

The `--image` parameter is the path of the image to be evaluated, `--model` is the trained SAN model, and `--face` gives the four coordinates of the face bounding box.
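To illustrate how four numbers can follow a single `--face` flag, here is a small `argparse` sketch. It is only an assumption about how such a command line could be parsed; the actual argument definitions in `san_eval.py` may differ, and `build_parser` is a hypothetical name.

```python
import argparse


def build_parser():
    """Illustrative parser; not copied from san_eval.py."""
    parser = argparse.ArgumentParser(description="Evaluate one image (illustrative).")
    parser.add_argument("--image", type=str, required=True,
                        help="path of the image to evaluate")
    parser.add_argument("--model", type=str, required=True,
                        help="path of the trained checkpoint")
    # nargs=4 collects the four bounding-box values into one list of floats.
    parser.add_argument("--face", type=float, nargs=4, required=True,
                        help="four coordinates of the face bounding box")
    return parser


# Parse the same arguments as the example command above.
args = build_parser().parse_args([
    "--image", "./cache_data/cache/test_1.jpg",
    "--model", "./snapshots/SAN_300W_GTB_itn_cpm_3_50_sigma4_128x128x8/checkpoint_49.pth.tar",
    "--face", "819.27", "432.15", "971.70", "575.87",
])
print(args.face)
```

With `nargs=4`, passing fewer or more than four values makes `argparse` exit with a usage error, which catches a malformed bounding box early.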

The ground truth landmark annotation for ./cache_data/cache/test_1.jpg is ./cache_data/cache/test_1.pts.
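To compare the printed landmarks with the ground truth, the annotation file has to be read first. The sketch below assumes `test_1.pts` uses the plain-text `.pts` layout common to 300-W annotations: a short header, then one `x y` pair per line between `{` and `}`. `parse_pts` is a hypothetical helper, and the sample text is a made-up toy input, not real annotation data.

```python
def parse_pts(text):
    """Parse landmark coordinates from the text of a .pts annotation file.

    Assumes the points are listed one `x y` pair per line between braces.
    """
    lines = [line.strip() for line in text.splitlines()]
    start = lines.index("{") + 1
    end = lines.index("}")
    points = []
    for line in lines[start:end]:
        if not line:
            continue  # tolerate blank lines inside the braces
        x, y = line.split()[:2]
        points.append((float(x), float(y)))
    return points


# Toy input for illustration only.
sample = """version: 1
n_points: 3
{
1.0 2.0
3.5 4.5
5.0 6.0
}"""
points = parse_pts(sample)
print(len(points), points[0])
```

For a real file, the text could be read with `open("./cache_data/cache/test_1.pts").read()` and passed to `parse_pts`.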

If this project helps your research, please cite the following papers:

@inproceedings{dong2018san,

title={Style Aggregated Network for Facial Landmark Detection},

author={Dong, Xuanyi and Yan, Yan and Ouyang, Wanli and Yang, Yi},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},

year={2018}

}

@inproceedings{dong2018sbr,

title={{Supervision-by-Registration}: An Unsupervised Approach to Improve the Precision of Facial Landmark Detectors},

author={Dong, Xuanyi and Yu, Shoou-I and Weng, Xinshuo and Wei, Shih-En and Yang, Yi and Sheikh, Yaser},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},

year={2018}

}

To ask questions or report problems, please open an issue on the issue tracker.