How to Run

Folder explanation

ppo_baseline_v0.01: stable version v0.01

ppo_baseline_v0.02: curiosity

ppo_baseline_v0.03: actually map-plan-baseline, spl=0.923

ppo_baseline_v0.04: map_and_plan dev

ppo_baseline_v0.05: imitation learning

ppo_baseline_v0.06: AStar Planner

ppo_baseline_v0.07: map-plan-baseline `2 steps plan`.

ppo_baseline_v0.08: actually map-plan-baseline, spl=0.923, for submit

evaluate_ppo_vis_single_env.py: add visualization of V-saliency (depth v-saliency, rgb v-saliency)

Development

docker login --username=$USERNAME$ registry.sensetime.com

docker pull registry.sensetime.com/habitat/jack/habitat:v0.03

docker tag registry.sensetime.com/habitat/jack/habitat:v0.03 jack/habitat:v0.03

cd ppo_baseline

bash run_habitat_docker.sh

# For lustre: bash srun_habitat_docker.sh

# Inside the habitat docker container

conda activate habitat

cd /habitat-baseline

ln -s /habitat-api/data/ .

bash train_ppo.sh

bash evaluate_ppo.sh

Submission

bash rgbd_build_docker.sh

bash rgb_build_docker.sh

evalai push rgbd_submission:latest --phase habitat19-rgbd-val

# habitat19-{rgb, rgbd}-{val, test-std, test-ch}
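The phase pattern in the comment above is shorthand for six phase names. A minimal Python sketch that expands it is shown here; the helper name `challenge_phases` is hypothetical and only illustrates the naming scheme, it is not part of the EvalAI CLI.

```python
from itertools import product

# Track and split names copied from the pattern
# habitat19-{rgb, rgbd}-{val, test-std, test-ch}.
TRACKS = ("rgb", "rgbd")
SPLITS = ("val", "test-std", "test-ch")


def challenge_phases():
    """Return every phase name the pattern expands to (hypothetical helper)."""
    return [f"habitat19-{track}-{split}" for track, split in product(TRACKS, SPLITS)]


if __name__ == "__main__":
    # Print one phase per line, ready to paste after `--phase`.
    for phase in challenge_phases():
        print(phase)
```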

Tools

ppo_baseline_v0.02/simple_agent.py: Compute mean/std of dataset:

Habitat-Challenge

This repository contains starter code for the challenge and details of task, training and evaluation. For an overview of habitat-challenge visit aihabitat.org/challenge.

Task

The objective of the agent is to navigate successfully to a target location specified by agent-relative Euclidean coordinates (e.g. "Go 5m north, 3m west relative to current location"). Importantly, updated goal-specification (relative coordinates) is provided at all times (as the episode progresses) and not just at the outset of an episode. The action space for the agent consists of turn-left, turn-right, move forward and STOP actions. The turn actions produce a turn of 10 degrees and move forward action produces a linear displacement of 0.25m. The STOP action is used by the agent to indicate completion of an episode. We use an idealized embodied agent with a cylindrical body with diameter 0.2m and height 1.5m. The challenge consists of two tracks, RGB (only RGB input) and RGBD (RGB and Depth inputs).
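To make the action space concrete, here is a minimal sketch of how the four actions change an idealized 2D pose and how the goal can be re-expressed relative to the agent after every step. The pose layout `(x, y, heading_degrees)`, the axis convention, and the function names are assumptions made for illustration; this is not the Habitat simulator's implementation, and it ignores collisions.

```python
import math

TURN_DEGREES = 10.0    # each turn action rotates the agent by 10 degrees
FORWARD_METERS = 0.25  # each move-forward action translates the agent by 0.25 m


def step(pose, action):
    """Apply one action to a hypothetical (x, y, heading_degrees) pose."""
    x, y, heading = pose
    if action == "turn-left":
        heading = (heading + TURN_DEGREES) % 360.0
    elif action == "turn-right":
        heading = (heading - TURN_DEGREES) % 360.0
    elif action == "move-forward":
        x += FORWARD_METERS * math.cos(math.radians(heading))
        y += FORWARD_METERS * math.sin(math.radians(heading))
    elif action != "STOP":
        raise ValueError(f"unknown action: {action}")
    # STOP leaves the pose unchanged; it only signals the end of the episode.
    return (x, y, heading)


def goal_in_agent_frame(pose, goal_xy):
    """Return the goal offset as (forward, left) meters relative to the agent."""
    x, y, heading = pose
    dx, dy = goal_xy[0] - x, goal_xy[1] - y
    theta = math.radians(heading)
    forward = dx * math.cos(theta) + dy * math.sin(theta)
    left = -dx * math.sin(theta) + dy * math.cos(theta)
    return forward, left


if __name__ == "__main__":
    pose = (0.0, 0.0, 0.0)
    goal = (1.0, 2.0)
    for action in ["move-forward"] * 4 + ["turn-left"] * 9:
        pose = step(pose, action)
        # The relative goal is recomputed after every action, mirroring the
        # updated goal specification the agent receives during an episode.
        print(action, goal_in_agent_frame(pose, goal))
```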

Challenge Dataset

We create a set of PointGoal navigation episodes for the Gibson [1] 3D scenes as the main dataset for the challenge. Gibson was preferred over SUNCG because, unlike SUNCG, it contains scans of real-world indoor environments. Gibson was chosen over Matterport3D because, unlike those of Matterport3D, Gibson's raw meshes are not publicly available, which allows us to sequester a test set. We use the splits provided by the Gibson dataset, retaining the train and val sets and separating the test set into test-standard and test-challenge. The train and val scenes are provided to participants. The test scenes are used for the official challenge evaluation and are not provided to participants.

Evaluation

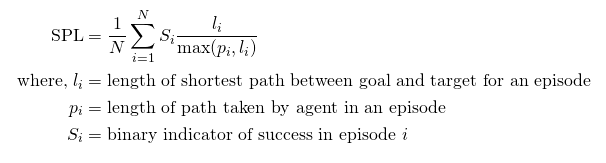

After calling the STOP action, the agent is evaluated using the "Success weighted by Path Length" (SPL) metric [1].

An episode is deemed successful if, on calling the STOP action, the agent is within 0.2m of the goal position. The evaluation will be carried out in completely new houses that are not present in the training and validation splits.
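As a rough illustration of the metric, the sketch below computes SPL using the commonly cited definition: the mean over episodes of `S * l / max(p, l)`, where `S` is 1 for a successful episode and 0 otherwise, `l` is the shortest-path length to the goal, and `p` is the length of the path the agent actually took. The function name, the tuple layout, and the strict `<` comparison for "within 0.2m" are assumptions; this is not the official evaluator's code.

```python
def spl(episodes, success_distance=0.2):
    """Compute Success weighted by Path Length over a list of episodes.

    Each episode is a tuple (final_distance_to_goal, shortest_path_length,
    agent_path_length), all in meters. The tuple layout is hypothetical.
    """
    total = 0.0
    count = 0
    for final_distance, shortest, taken in episodes:
        count += 1
        if final_distance < success_distance:  # success: stopped within 0.2 m
            longest = max(taken, shortest)
            # Guard against a degenerate episode that starts on the goal.
            total += shortest / longest if longest > 0 else 1.0
    return total / count if count else 0.0


if __name__ == "__main__":
    episodes = [
        (0.1, 5.0, 5.0),   # success along the shortest path: contributes 1.0
        (0.1, 5.0, 10.0),  # success with a path twice as long: contributes 0.5
        (1.0, 5.0, 6.0),   # stopped too far from the goal: contributes 0.0
    ]
    print(spl(episodes))  # prints 0.5
```

Note that an efficient but unsuccessful episode contributes nothing, so calling STOP too early is penalized as heavily as never reaching the goal.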

Participation Guidelines

Participate in the contest by registering on the EvalAI challenge page and creating a team. Participants will upload docker containers with their agents, which are evaluated on an AWS GPU-enabled instance. Before pushing submissions for remote evaluation, participants should test the submission docker locally to make sure it is working. Instructions for training, local evaluation, and online submission are provided below.

Local Evaluation

- Clone the challenge repository:

      git clone https://github.com/facebookresearch/habitat-challenge.git
      cd habitat-challenge

- Implement your own agent or try one of ours. We provide hand-coded agents in `myagent/agent.py`. Below is example code for a forward-only agent:

      import habitat
      # Import path assumed for the habitat-api version used by the challenge;
      # adjust it if your installed version exposes these names elsewhere.
      from habitat.sims.habitat_simulator import SIM_NAME_TO_ACTION, SimulatorActions


      class ForwardOnlyAgent(habitat.Agent):
          def reset(self):
              # Nothing to reset: the agent keeps no state between episodes.
              pass

          def act(self, observations):
              # Ignore the observations and always move forward.
              action = SIM_NAME_TO_ACTION[SimulatorActions.FORWARD.value]
              return action


      def main():
          agent = ForwardOnlyAgent()
          challenge = habitat.Challenge()
          challenge.submit(agent)


      if __name__ == "__main__":
          main()

- [Optional] Modify the `submission.sh` file if your agent needs any custom modifications (e.g. command-line arguments). Otherwise, there is nothing to do. The default `submission.sh` is simply a call to the `GoalFollower` agent in `myagent/agent.py`.

- Install nvidia-docker. Note: only Linux is supported; Windows and macOS are not.

- Modify the provided Dockerfile if you need custom modifications. For example, if your code needs `pytorch`, that dependency should be pip-installed inside the conda environment called `habitat` that ships with our habitat-challenge docker image, as shown below:

      FROM fairembodied/habitat-challenge:latest

      # install dependencies in the habitat conda environment
      RUN /bin/bash -c ". activate habitat; pip install torch"

      ADD myagent /myagent
      ADD submission.sh /submission.sh

  Build your docker container:

      docker build -t my_submission .

  (Note: you will need `sudo` privileges to run this command.)

- Download the Gibson scenes used for the Habitat Challenge. Accept the terms here and select the download corresponding to "Habitat Challenge Data for Gibson (1.4 GB)". Place this data in `habitat-challenge/habitat-challenge-data/gibson`.

- Evaluate your docker container locally on RGB-D modalities:

      ./test_locally_rgbd.sh --docker-name my_submission

  If the above command runs successfully, you will get an output similar to:

      2019-04-04 21:23:51,798 initializing sim Sim-v0
      2019-04-04 21:23:52,820 initializing task Nav-v0
      2019-04-04 21:24:14,508 spl: 0.16539757116003695

  Note: this same command will be run to evaluate your agent for the leaderboard. Please submit your docker for remote evaluation (below) only if it runs successfully on your local setup.

  To evaluate on the RGB modality, run:

      ./test_locally_rgb.sh --docker-name my_submission
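When comparing several local runs, it can help to pull the reported score out of the evaluation output automatically. The sketch below assumes only the log format shown in the sample output above (a line containing `spl: <number>`); the function name `parse_spl` is hypothetical and the real output may contain additional lines.

```python
import re

# Matches "spl: 0.1653..." anywhere in a line, including exponent notation.
SPL_PATTERN = re.compile(r"spl:\s*([0-9]*\.?[0-9]+(?:[eE][-+]?[0-9]+)?)")


def parse_spl(log_text):
    """Return the last SPL value found in the log text, or None if absent."""
    matches = SPL_PATTERN.findall(log_text)
    return float(matches[-1]) if matches else None


if __name__ == "__main__":
    sample_log = (
        "2019-04-04 21:23:51,798 initializing sim Sim-v0\n"
        "2019-04-04 21:23:52,820 initializing task Nav-v0\n"
        "2019-04-04 21:24:14,508 spl: 0.16539757116003695\n"
    )
    print(parse_spl(sample_log))
```

The output of `./test_locally_rgbd.sh` could be redirected to a file and its contents passed to this function.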

Online submission

Follow instructions in the submit tab of the EvalAI challenge page to submit your docker image. Pasting those instructions here for convenience:

# Installing EvalAI Command Line Interface

pip install evalai

# Set EvalAI account token

evalai set_token <your EvalAI participant token>

# Push docker image to EvalAI docker registry

evalai push my_submission:latest --phase habitat19-rgb-val

Valid challenge phases are habitat19-{rgb, rgbd}-{val, test-std, test-ch}.

Note: Your agent will be evaluated on 1000 episodes and will have a total of 30 minutes to finish. Your submissions will be evaluated on an AWS EC2 p2.xlarge instance, which has a Tesla K80 GPU (12 GB memory), 4 CPU cores, and 61 GB RAM.
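The limits in the note above translate into a tight per-episode budget. The arithmetic is sketched below; the 500 steps per episode used in the example is a purely hypothetical figure, and the real budget is smaller still because the 30 minutes presumably also cover simulator time and start-up.

```python
TOTAL_EPISODES = 1000      # episodes per evaluation, from the note above
TOTAL_SECONDS = 30 * 60    # 30 minutes of total available time


def seconds_per_episode(total_seconds=TOTAL_SECONDS, episodes=TOTAL_EPISODES):
    """Average wall-clock time available for a single episode."""
    return total_seconds / episodes


def seconds_per_step(steps_per_episode):
    """Average time per action, for an assumed number of steps per episode."""
    return seconds_per_episode() / steps_per_episode


if __name__ == "__main__":
    print(seconds_per_episode())  # prints 1.8
    # 500 steps per episode is a hypothetical value chosen for illustration.
    print(f"{seconds_per_step(500) * 1000:.1f} ms per step")
```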

Starter code and Training

- Install the Habitat-Sim and Habitat-API packages.

- Download the Gibson dataset following the instructions here. After downloading, extract the dataset to the `habitat-api/data/scene_datasets/gibson/` folder (this folder should contain the `.glb` files from Gibson). Note that the `habitat-api` folder is the habitat-api repository folder.

- Download the dataset for Gibson pointnav from link and place it in the folder `habitat-api/data/datasets/pointnav/gibson`. If placed correctly, you should have the train and val splits at `habitat-api/data/datasets/pointnav/gibson/v1/train/` and `habitat-api/data/datasets/pointnav/gibson/v1/val/` respectively. Place the Gibson scenes downloaded in the Local Evaluation steps under the `habitat-api/data/scene_datasets` folder.

- An example PPO baseline for the Pointnav task is present at `habitat-api/baselines`. To start training on the Gibson dataset using this implementation, follow the instructions in the baselines README. Set `--task-config` to `tasks/pointnav_gibson.yaml` to train on Gibson data. This is a good starting point for participants building their own models: the PPO implementation contains initialization and interaction with the environment as well as tracking of training statistics, and participants can borrow its basic blocks.

- To evaluate a trained PPO model on the Gibson val split, run `evaluate_ppo.py` using the instructions in the README at `habitat-api/baselines`, with `--task-config` set to `tasks/pointnav_gibson.yaml`. The evaluation script will report the SPL metric.

- You can also use the general benchmarking script `benchmark.py`. Set `--task-config` to `tasks/pointnav_gibson.yaml` and, inside the file `tasks/pointnav_gibson.yaml`, set `SPLIT` to `val`.

- Once you have trained your agents, you can follow the submission instructions above to test them locally as well as submit them for online evaluation.

- We also provide trained RGB, RGBD, and Blind PPO models. Code for the models is present inside the `habitat-challenge/baselines` folder. To use them:

  - Download the pre-trained pytorch models from link and unzip them into `habitat-challenge/models`.

  - Add loading of the baselines folder and models folder to the Dockerfile:

        ADD baselines /baselines
        ADD models /models

  - Modify `submission.sh` appropriately:

        python baselines/agents/ppo_agents.py --input_type {blind, depth, rgb, rgbd} --model_path baselines/{blind, depth, rgb, rgbd}.pth

  - Build docker and run local evaluation:

        docker build -t my_submission .; ./test_locally_{rgb, rgbd}.sh --docker-name my_submission
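Before launching training, it may be worth checking that the data ended up where the steps above say it should. The sketch below tests only the directory paths named in this section; the function names are hypothetical, and the script makes no assumption about the files inside the train and val folders.

```python
from pathlib import Path

# Directories named in the training steps, relative to the habitat-api repository.
EXPECTED_DIRS = (
    "data/scene_datasets/gibson",
    "data/datasets/pointnav/gibson/v1/train",
    "data/datasets/pointnav/gibson/v1/val",
)


def missing_dirs(habitat_api_root):
    """Return the expected directories that do not exist under the given root."""
    root = Path(habitat_api_root)
    return [rel for rel in EXPECTED_DIRS if not (root / rel).is_dir()]


def has_glb_scenes(habitat_api_root):
    """Report whether the Gibson scene folder contains at least one .glb file."""
    scene_dir = Path(habitat_api_root) / "data/scene_datasets/gibson"
    return any(scene_dir.glob("*.glb"))


if __name__ == "__main__":
    root = "habitat-api"  # path to your local habitat-api checkout
    for rel in missing_dirs(root):
        print(f"missing: {root}/{rel}")
    if not has_glb_scenes(root):
        print("no .glb scene files found in data/scene_datasets/gibson")
```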

Acknowledgments

The Habitat challenge would not have been possible without the infrastructure and support of EvalAI team and the data of Gibson team. We are especially grateful to Rishabh Jain, Deshraj Yadav, Fei Xia and Amir Zamir.

References

[1] F. Xia, A. R. Zamir, Z. He, A. Sax, J. Malik, and S. Savarese, "Gibson env: Real-world perception for embodied agents," in CVPR, 2018