PyTorch implementation of AttnGAN for the CelebA dataset.

The code is taken from the official AttnGAN GitHub repository.

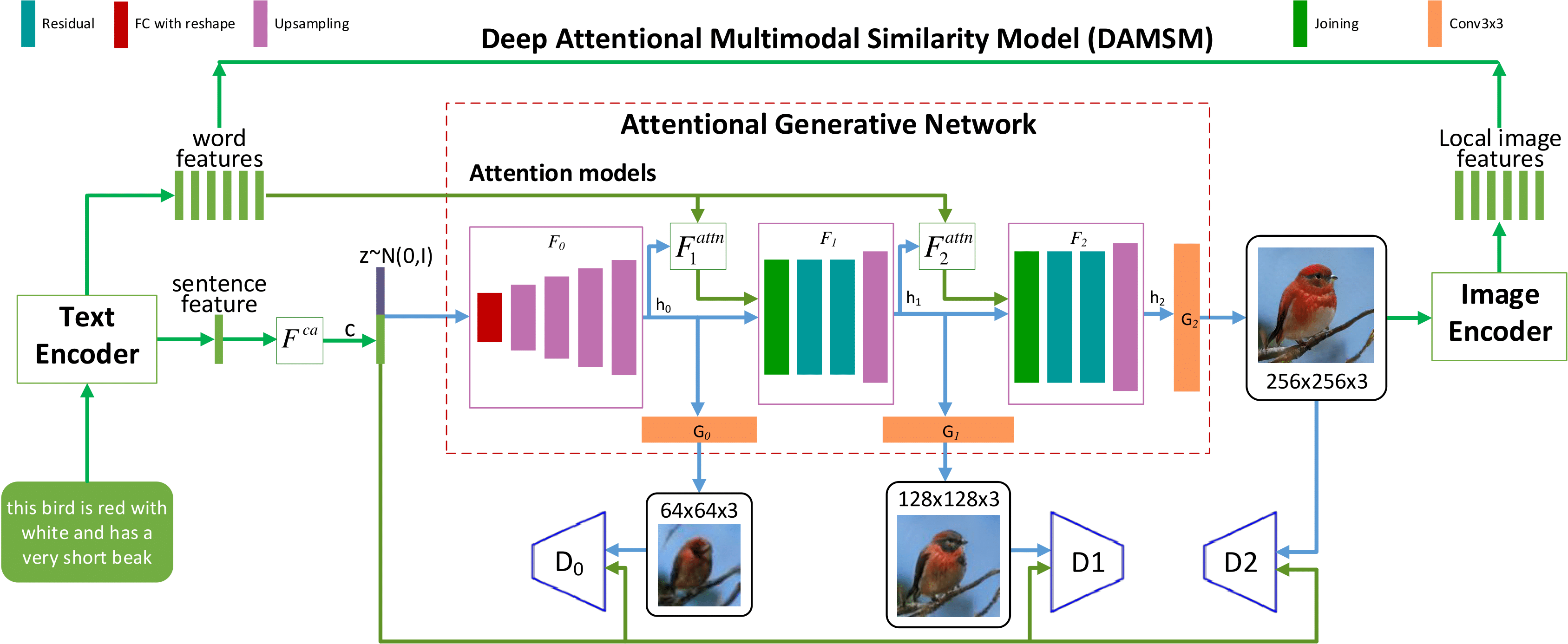

Architecture

- Python 2.7

- PyTorch

- In addition, please add the project folder to PYTHONPATH and pip install the following packages: python-dateutil, easydict, pandas, torchfile, nltk, scikit-image, pyyaml
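Before training, it can help to confirm that the packages listed above are importable. The following is a minimal sketch, not part of the repository: the function name `missing_packages` is hypothetical, and the mapping reflects the usual import names of these pip packages (for example, scikit-image is imported as `skimage` and pyyaml as `yaml`).

```python
import importlib

# pip package name -> module name used in `import` statements.
REQUIRED = {
    "python-dateutil": "dateutil",
    "easydict": "easydict",
    "pandas": "pandas",
    "torchfile": "torchfile",
    "nltk": "nltk",
    "scikit-image": "skimage",
    "pyyaml": "yaml",
}


def missing_packages(required=REQUIRED):
    """Return the sorted pip names whose module cannot be imported."""
    missing = []
    for pip_name, module_name in required.items():
        try:
            importlib.import_module(module_name)
        except ImportError:
            missing.append(pip_name)
    return sorted(missing)


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing packages: pip install " + " ".join(missing))
    else:
        print("All listed packages are importable.")
```

The check only covers the pip packages named in the list; PyTorch itself is not checked here.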

- Download our preprocessed text for CelebA and extract them to data/CelebA/

  - file directory example: data/CelebA/text/0/000012.txt

- Download the preprocessed CelebA image data and extract them to data/CelebA/

  - file directory example: data/CelebA/images/000012.jpg
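After extracting both archives, a quick consistency check of the directory layout may catch a misplaced folder early. The sketch below is an illustration only: the helper names `stems` and `check_layout` are hypothetical, and it assumes that each caption file shares its base name with an image (as the 000012 examples above suggest) and that caption files may sit in subfolders of text/.

```python
import os


def stems(root, extension):
    """Collect file names (without extension) found anywhere under root."""
    found = set()
    for _dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            base, ext = os.path.splitext(name)
            if ext.lower() == extension:
                found.add(base)
    return found


def check_layout(data_dir="data/CelebA"):
    """Return (image stems without caption, caption stems without image)."""
    images = stems(os.path.join(data_dir, "images"), ".jpg")
    captions = stems(os.path.join(data_dir, "text"), ".txt")
    return sorted(images - captions), sorted(captions - images)


if __name__ == "__main__":
    no_caption, no_image = check_layout()
    print("images without captions: %d" % len(no_caption))
    print("captions without images: %d" % len(no_image))
```

If either directory is missing, the corresponding set is simply empty, so a large mismatch count usually points to an archive extracted in the wrong place.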

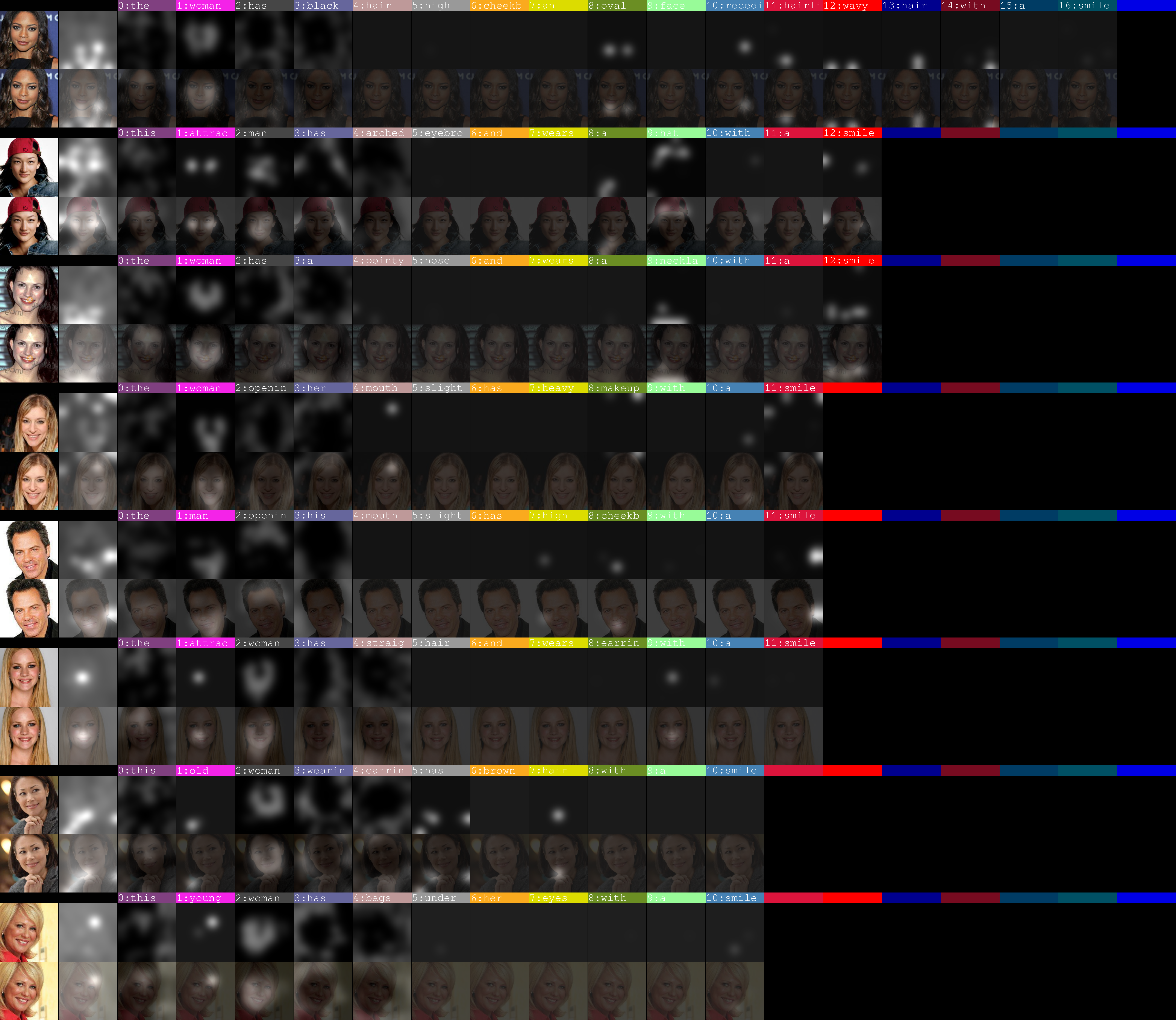

Pre-train DAMSM models:

For CelebA dataset:

python pretrain_DAMSM.py --cfg cfg/DAMSM/CelebA.yml --gpu 0

Train AttnGAN models:

For CelebA dataset:

python main.py --cfg cfg/CelebA_attn2.yml --gpu 0
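The two training stages above can also be chained from a small driver script. This is a sketch under assumptions, not something shipped with the repository: `build_command`, `STAGES`, and `run_all` are hypothetical names, and the script assumes it is started from the project root with `python` resolving to the interpreter that has the dependencies installed.

```python
import subprocess


def build_command(script, cfg, gpu=0, python="python"):
    """Build the argument list for one training stage."""
    return [python, script, "--cfg", cfg, "--gpu", str(gpu)]


# Stage order follows the README: DAMSM pre-training, then AttnGAN training.
STAGES = [
    ("pretrain_DAMSM.py", "cfg/DAMSM/CelebA.yml"),
    ("main.py", "cfg/CelebA_attn2.yml"),
]


def run_all(gpu=0):
    """Run both stages in order; stop at the first failure."""
    for script, cfg in STAGES:
        command = build_command(script, cfg, gpu)
        print("Running: " + " ".join(command))
        # check_call raises CalledProcessError on a non-zero exit code,
        # so the second stage never starts if the first one fails.
        subprocess.check_call(command)
```

Calling `run_all(gpu=0)` launches both stages one after the other. Whether the second stage needs its configuration file edited after pre-training finishes depends on the configuration files themselves, which this sketch does not inspect.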

The *.yml files are example configuration files for training and evaluating our models.

- Run the following command to generate examples from captions in files listed in "./data/CelebA/example_filenames.txt". Results are saved to DAMSMencoders/.

python main.py --cfg cfg/eval_CelebA.yml --gpu 0

- Input your own sentence in "./data/CelebA/example_captions.txt" if you want to generate images from customized sentences.

- To generate images for all captions in the validation dataset, set B_VALIDATION to True in the eval_*.yml file, and then run

python main.py --cfg cfg/eval_CelebA.yml --gpu 0
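Switching B_VALIDATION by hand is easy to forget, so the flag can also be flipped programmatically. The sketch below is one possible approach, not the repository's own tooling: `set_b_validation` is a hypothetical helper, and it assumes the key appears exactly once in the file, on its own line, in the form `B_VALIDATION: False` or `B_VALIDATION: True`. It edits the text directly rather than re-serializing the YAML, so comments and ordering in the file are preserved.

```python
import re

# Matches a line such as "B_VALIDATION: False", keeping indentation and
# any trailing comment. Assumes the key sits on its own line.
_PATTERN = re.compile(r"^(\s*B_VALIDATION\s*:\s*)(True|False)\b", re.MULTILINE)


def set_b_validation(yaml_text, enabled=True):
    """Return yaml_text with B_VALIDATION set to True or False."""
    new_text, count = _PATTERN.subn(
        lambda m: m.group(1) + ("True" if enabled else "False"), yaml_text)
    if count != 1:
        raise ValueError(
            "expected exactly one B_VALIDATION line, found %d" % count)
    return new_text
```

To use it, read cfg/eval_CelebA.yml into a string, pass it through `set_b_validation`, and write the result back before running the command above.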

- 60 epochs